The True Cost of Running Custom LLMs: Cloud API vs. Local Hosting in 2026

Table of Contents

- The Financial Mechanics of Proprietary Cloud APIs

- The Open-Weight Renaissance: The Llama 4 Paradigm

- The Hardware Imperative: NVIDIA Blackwell B200 vs. Hopper H100

- Evaluating Cloud Compute Costs for Local Hosting

- The CapEx Path: Bare-Metal Procurement and Data Center Colocation

- The Hidden Operational Expenses: MLOps and Infrastructure Maintenance

- Regulatory Compliance and Data Sovereignty

- Calculating the Break-Even Intersection

- Strategic Architecture for Cost Reduction

- Conclusion: Making the Definitive Infrastructure Choice


The generative artificial intelligence landscape has fundamentally shifted. The initial rush to integrate large language models into business workflows has matured into a rigorous, uncompromising evaluation of unit economics. As enterprise artificial intelligence usage scales from isolated internal pilot programs to customer-facing, high-volume production systems, cloud API costs are skyrocketing. Chief Technology Officers and engineering leaders are no longer asking if these models are capable; they are actively researching the financial feasibility of shifting away from proprietary endpoints to hosting open-source models themselves.

At Tool1.app, we frequently consult with business leaders and enterprise architects who are experiencing this exact friction. They are drawn to the unprecedented reasoning intelligence of models like GPT-5 and Claude 4.5, but the unpredictable, token-based billing models threaten their gross margins. The question is no longer whether artificial intelligence can solve complex business problems, but how to deploy it sustainably without incurring catastrophic operational expenses. Understanding the true cost of self-hosting an LLM versus consuming an API requires a deep dive into token economics, hardware procurement, cloud compute utilization rates, and the often-ignored hidden operational expenses of machine learning infrastructure.

The Financial Mechanics of Proprietary Cloud APIs

To establish a comprehensive baseline, we must first examine the commercial realities of utilizing proprietary models via application programming interfaces in 2026. Providers like OpenAI and Anthropic monetize their massive research and development investments through usage-based pricing models. These models charge based on tokens, which are fragments of words, numbers, or punctuation that the neural network processes as input and generates as output.

At first glance, the pricing per individual token appears negligible. However, in an enterprise production environment, these micro-transactions accumulate with staggering speed. A critical nuance of this pricing structure is that output tokens are universally more expensive than input tokens, and advanced reasoning capabilities demand premium pricing tiers.

Based on current 2026 pricing matrices, utilizing a frontier model like OpenAI’s GPT-5 incurs significant costs. Anthropic follows a similar, highly segmented tiered structure with its Claude 4.5 and 4.6 families. The following table illustrates the comparative token costs across leading proprietary models, converted to Euros to reflect the European operational context.

| Proprietary Model | Input Price (€ per 1M tokens) | Cached Input (€ per 1M tokens) | Output Price (€ per 1M tokens) | Target Enterprise Use Case |
| --- | --- | --- | --- | --- |
| OpenAI GPT-5 | €1.15 | €0.11 | €9.20 | Flagship reasoning, complex coding, multi-agent coordination |
| OpenAI GPT-5-mini | €0.23 | €0.02 | €1.84 | High-volume conversational agents, routine text processing |
| OpenAI o3-mini | €1.01 | €0.50 | €4.00 | Specialized mathematical and scientific reasoning |
| Anthropic Claude Opus 4.6 | €4.60 | €0.46 | €23.00 | Peak intelligence, mission-critical autonomous workflows |
| Anthropic Claude Sonnet 4.5 | €2.76 | €0.27 | €13.80 | Balanced performance, production workhorse, RAG applications |
| Anthropic Claude Haiku 4.5 | €0.92 | €0.09 | €4.60 | Speed-optimized tasks, high-throughput content moderation |

The Illusion of Cheap Tokens and Scaling Multipliers

The fundamental flaw in evaluating API costs based solely on the sticker price per million tokens is that the true expense rarely stems from a single, isolated request. API costs are driven by architectural necessities inherent to modern generative applications, most notably Retrieval-Augmented Generation and conversational memory management.

The models behind a standard enterprise customer service chatbot have no persistent memory. Every new message a user sends requires the application to re-submit the entire conversation history to the API to maintain contextual awareness. A conversation that begins with a modest 100-token prompt can rapidly inflate. By the tenth conversational turn, the system might be transmitting 5,000 input tokens per message. Because the application pays for these historical tokens again with every interaction, cumulative input cost grows roughly quadratically with the number of turns rather than linearly.
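The effect of re-sending history can be sketched in a few lines. This is a minimal model, assuming every turn adds the same number of tokens to the history (500 here, chosen so that the tenth request carries 5,000 tokens as in the example above); the function name and the fixed per-turn size are illustrative assumptions, not measurements.

```python
def cumulative_input_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens billed over a conversation in which every
    request re-sends the full history.

    Assumption: each turn adds `tokens_per_turn` tokens to the history,
    so the request at turn k carries k * tokens_per_turn input tokens.
    """
    total = 0
    history = 0
    for _ in range(turns):
        history += tokens_per_turn  # the new exchange joins the history
        total += history            # the whole history is billed as input
    return total


if __name__ == "__main__":
    turns, per_turn = 10, 500
    billed = cumulative_input_tokens(turns, per_turn)
    unique = turns * per_turn  # tokens that were actually new
    print(f"billed input tokens: {billed}, unique tokens: {unique}")
```

Under these assumptions the total equals `tokens_per_turn * turns * (turns + 1) / 2`, which grows with the square of the number of turns: doubling the conversation length roughly quadruples the billed input.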

Furthermore, Retrieval-Augmented Generation introduces massive overhead. To ground the model in proprietary enterprise data and prevent hallucinations, systems retrieve relevant corporate documents, chunk the text, and append it to the user’s prompt. Models like Claude Sonnet 4.5 boast context windows of up to 1 million tokens, allowing entire technical manuals or code repositories to be injected into a single prompt. While powerful, sending a 100,000-token context to Claude Sonnet 4.5 costs roughly €0.28 per query at the €2.76 input rate. If an enterprise application processes ten thousand queries an hour, the input costs alone amount to roughly €2,760 per hour, or nearly €2 million per month, completely independent of the generated output.
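The arithmetic behind this estimate is easy to reproduce. The sketch below uses the Claude Sonnet 4.5 input price from the table above and assumes a 30-day month of continuous traffic; the function and variable names are illustrative.

```python
def input_cost_eur(tokens: int, price_per_million_eur: float) -> float:
    """Cost of sending `tokens` input tokens at a per-million-token price."""
    return tokens / 1_000_000 * price_per_million_eur


if __name__ == "__main__":
    sonnet_input_price = 2.76      # EUR per 1M input tokens (from the table)
    context_tokens = 100_000       # retrieved context sent with each query
    queries_per_hour = 10_000
    hours_per_month = 24 * 30      # assumption: continuous 30-day month

    per_query = input_cost_eur(context_tokens, sonnet_input_price)
    per_hour = per_query * queries_per_hour
    per_month = per_hour * hours_per_month
    print(f"per query: EUR {per_query:.3f}")
    print(f"per hour:  EUR {per_hour:,.0f}")
    print(f"per month: EUR {per_month:,.0f}")
```

Prompt caching, where the provider offers it, would lower this figure for contexts that repeat across queries; the sketch deliberately ignores it to match the uncached scenario described above.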

The Impact of Extended Thinking Features

An additional cost multiplier introduced in late 2025 and popularized in 2026 is the concept of “Extended Thinking” or reasoning tokens. Models designed for extreme logical deduction, such as OpenAI’s o-series and Anthropic’s Claude 4.5 family, possess the ability to generate explicit internal reasoning blocks before outputting a final answer.

Crucially, these internal thought processes consume thousands of tokens, and providers bill these hidden reasoning tokens at the premium output rate. A user might ask a complex financial forecasting question, receive a concise 200-word summary, and yet be billed for 4,000 output tokens because the model spent significant computational effort “thinking” through the problem. If unregulated by hard token budgets within the API call, these reasoning capabilities act as a silent siphon on enterprise cloud budgets.
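A rough cost model makes the effect of a reasoning budget visible. The split between visible and reasoning tokens below (300 and 3,700, summing to the 4,000 billed tokens in the example) is an assumption for illustration, and `reasoning_budget` is a hypothetical parameter standing in for whatever token cap the provider's API exposes.

```python
from typing import Optional


def billed_output_cost_eur(
    visible_tokens: int,
    reasoning_tokens: int,
    output_price_per_million_eur: float,
    reasoning_budget: Optional[int] = None,
) -> float:
    """Output cost when hidden reasoning tokens are billed at the output rate.

    If `reasoning_budget` is given, reasoning tokens are capped at that value,
    modelling a hard token budget set on the request.
    """
    if reasoning_budget is not None:
        reasoning_tokens = min(reasoning_tokens, reasoning_budget)
    billed_tokens = visible_tokens + reasoning_tokens
    return billed_tokens / 1_000_000 * output_price_per_million_eur


if __name__ == "__main__":
    gpt5_output_price = 9.20  # EUR per 1M output tokens (from the table)
    uncapped = billed_output_cost_eur(300, 3_700, gpt5_output_price)
    capped = billed_output_cost_eur(300, 3_700, gpt5_output_price, reasoning_budget=1_000)
    print(f"uncapped: EUR {uncapped:.4f} per answer")
    print(f"capped:   EUR {capped:.4f} per answer")
```

A tighter budget trades cost against answer quality on hard questions, so the cap is usually tuned per task type rather than set globally.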

The Open-Weight Renaissance: The Llama 4 Paradigm

The intense financial strain of proprietary APIs has catalyzed a mass enterprise migration toward open-weight foundation models. Meta’s release of the Llama 4 series in April 2025 effectively closed the performance gap with closed-source giants, providing organizations with frontier-level intelligence that can be downloaded and operated on private infrastructure without recurring licensing fees.

The Llama 4 family introduces models that are natively multimodal and multilingual, utilizing a highly advanced Mixture of Experts architecture. In a Mixture of Experts system, the model is composed of multiple specialized sub-networks. For any given token generation, only a fraction of these experts are activated, vastly improving inference efficiency compared to dense models.

The two flagship models in this release define the self-hosting landscape:

- Llama 4 Scout: A 109-billion-parameter model with 16 experts (17 billion parameters active per token). It boasts an unprecedented 10-million-token context window. Due to its size, it can be quantized to 4-bit precision and theoretically run on smaller, more accessible GPU clusters, making it ideal for multi-document summarization and parsing extensive user activity logs.

- Llama 4 Maverick: A massive 400-billion-parameter model featuring 128 experts (with 17 billion active per token) and a 1-million-token context window. This model rivals GPT-5 in complex reasoning, autonomous agentic behavior, and high-performance text understanding.

While the software is free, bringing a 400-billion-parameter model to life within a corporate environment is an infrastructure challenge of the highest order. The sheer size of the model weights means it cannot fit into the memory of a standard server, nor even a standard enterprise-grade graphics processing unit.

The Hardware Imperative: NVIDIA Blackwell B200 vs. Hopper H100

To comprehend the infrastructure costs of self-hosting, one must understand the data center hardware required to execute these massive mathematical operations. In 2026, the artificial intelligence infrastructure landscape is defined by the transition from NVIDIA’s Hopper architecture (the H100) to the Blackwell architecture (the B200).

The computational demands of Llama 4 Maverick are immense. Even when weights are quantized to lower precisions to save space, the model requires vast amounts of Video Random Access Memory and astronomical memory bandwidth to generate text at speeds acceptable for human interaction.

The NVIDIA B200 represents a monumental leap in capability, specifically tailored for these massive Mixture of Experts models. The B200 features 192 gigabytes of HBM3e memory and a memory bandwidth of 8 terabytes per second, compared to the older H100’s 80 gigabytes of HBM3 and 3.35 terabytes per second. Furthermore, the Blackwell architecture adds native FP4 support in its Tensor Cores alongside FP8, which can roughly double throughput for models quantized to the lower-precision format.
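A back-of-the-envelope estimate shows why precision matters for memory. The sketch below counts the weights only, assuming 2, 1, and 0.5 bytes per parameter for 16-bit, FP8, and FP4 storage, and uses the 192 GB B200 figure quoted above; KV cache, activations, and runtime overhead come on top, so real deployments need more headroom than these minimums suggest.

```python
import math

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "fp4": 0.5}


def weight_memory_gb(params_billion: float, precision: str) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


def min_gpus_for_weights(params_billion: float, precision: str, vram_gb: float) -> int:
    """Smallest GPU count whose combined VRAM holds the weights.

    Ignores KV cache, activations, and framework overhead.
    """
    return math.ceil(weight_memory_gb(params_billion, precision) / vram_gb)


if __name__ == "__main__":
    maverick_params_billion = 400  # Llama 4 Maverick, total parameters
    b200_vram_gb = 192
    for precision in ("fp16", "fp8", "fp4"):
        size = weight_memory_gb(maverick_params_billion, precision)
        gpus = min_gpus_for_weights(maverick_params_billion, precision, b200_vram_gb)
        print(f"{precision}: {size:.0f} GB of weights, at least {gpus} x B200")
```

Note that a Mixture of Experts model must keep all experts resident in memory even though only a fraction are active per token, which is why the total parameter count, not the active count, drives this estimate.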

Benchmarking Throughput: The Token Per Second Metric

In the realm of local hosting, performance is measured in Output Tokens Per Second, representing the system’s ability to generate text, and Time to First Token, representing latency.

Independent benchmarking by Artificial Analysis in late 2025 and early 2026 demonstrated that a single NVIDIA DGX node containing eight B200 GPUs can achieve over 1,000 tokens per second per user on the 400-billion-parameter Llama 4 Maverick model. Specialized hardware competitors, such as Cerebras, have pushed this even further, breaking the 2,500 tokens per second barrier.

When optimized with inference engines like TensorRT-LLM and employing techniques like speculative decoding, an 8x B200 cluster can achieve maximum system throughputs of up to 40,000 total output tokens per second across thousands of concurrent requests. By contrast, the previous generation H100 clusters struggle to maintain even half of that throughput under similar concurrent loads, suffering from severe memory bandwidth bottlenecks when attempting to serve a 400-billion-parameter model. For enterprises looking to self-host at scale, the B200 is not merely an upgrade; it is a fundamental requirement to achieve the economies of scale necessary to undercut API pricing.

Evaluating Cloud Compute Costs for Local Hosting

When an organization decides to take ownership of its generative infrastructure, it transitions from a purely operational expense model governed by token volume to a model characterized by high fixed infrastructure costs. The most common approach to self-hosting is to lease bare-metal or dedicated GPU instances from cloud providers.

This approach mitigates the massive upfront capital expenditure of buying physical servers while providing the control of a self-hosted environment. The comparison between self-hosting and the API here hinges entirely on the hourly rental rate of the hardware and the efficiency with which the enterprise utilizes it.

In 2026, the pricing for high-end accelerator instances varies significantly based on the provider, geographic region, and commitment terms. Hyperscalers like Amazon Web Services and Google Cloud Platform command a premium for reliability and ecosystem integration, while specialized European Sovereign AI Cloud providers like Nebius, Scaleway, and OVHcloud offer aggressive pricing to capture market share and provide data localization guarantees.

The following table outlines the estimated hourly costs for renting dedicated GPU instances capable of running frontier open-source models. Note that running Llama 4 Maverick requires at least an 8-GPU cluster to accommodate its massive memory footprint.

| Cloud Provider & Architecture | GPU Configuration | VRAM Capacity | Estimated Hourly Rate (On-Demand) | Estimated Hourly Rate (3-Year Reserved) |
| --- | --- | --- | --- | --- |
| AWS EC2 (P5en instance) | 8x NVIDIA H200 | 1,128 GB | €36.80 | €27.60 |
| AWS EC2 (P6 instance) | 8x NVIDIA B200 | 1,536 GB | €46.00 | €34.50 |
| Google Cloud Platform | 8x NVIDIA H100 | 640 GB | €26.50 | €19.88 |
| Nebius AI Cloud | 1x NVIDIA B200 | 192 GB | €5.06 | Custom Enterprise Pricing |
| Nebius AI Cloud | 8x NVIDIA B200 | 1,536 GB | €40.48 | Custom Enterprise Pricing |
| Scaleway | 8x NVIDIA H100 | 640 GB | €22.08 | €16.56 |

Renting an 8x B200 node from a competitive provider at approximately €40.00 per hour translates to a staggering €28,800 per month if running continuously for 30 days. While this figure induces sticker shock compared to a €50 monthly API subscription, it buys the enterprise a finite but massive capacity. The financial viability of this €28,800 monthly bill depends entirely on pushing as many tokens through that hardware as physically possible. If the server is idle for 12 hours a day, the cost per generated token doubles, rapidly destroying the economic advantage over proprietary APIs.

The CapEx Path: Bare-Metal Procurement and Data Center Colocation

For global enterprises with massive, sustained, and highly predictable generative workloads, renting cloud instances is financially inefficient over a multi-year horizon. These organizations opt for physical procurement, purchasing the hardware outright and colocating it within specialized data centers.

A single NVIDIA B200 GPU carries an enterprise purchase price of roughly €23,000 to €25,000. An 8x HGX node, complete with the necessary NVLink switches, high-performance CPUs, terabytes of system RAM, and massive NVMe storage arrays, requires a capital expenditure exceeding €360,000.

However, hardware depreciation is only a fraction of the total cost of ownership. The physical realities of artificial intelligence infrastructure dictate massive ongoing expenses for power and cooling. The Blackwell architecture is incredibly power-dense, with a single 8x B200 node drawing upwards of 7 to 10 kilowatts under full load.

European Energy Economics and Data Center Efficiency

Colocating this equipment in Europe subjects the enterprise to the continent’s volatile wholesale electricity markets. In early 2026, electricity prices vary wildly across jurisdictions. France, benefiting from its extensive nuclear fleet, boasts prices around €10.51 per Megawatt-hour (MWh), or approximately €0.01 per kilowatt-hour (kWh). Conversely, nations relying heavily on imported natural gas, such as Italy or Poland, see prices hovering between €80.00 and €85.00 per MWh (€0.08 per kWh).

Furthermore, colocation power bills scale with the facility’s Power Usage Effectiveness (PUE), the ratio of total facility energy to the energy delivered to computing equipment. A PUE of 1.0 means perfect efficiency, where all energy goes to computing equipment. The European average PUE in 2026 sits around 1.6, with older facilities in Southern Europe performing worse due to elevated ambient temperatures requiring intense mechanical chilling. New hyperscale facilities in the Nordics operate much closer to a PUE of 1.15.

If an enterprise deploys a cluster of ten 8x B200 nodes (drawing 100 kW continuously) in a German facility with a PUE of 1.5, the facility draws 150 kW from the grid. At an average German industrial rate of €0.06 per kWh, the raw electricity cost alone surpasses €6,400 per month. When factoring in rack space rental, high-speed fiber cross-connects, and physical security, the monthly colocation bill can easily exceed €15,000. Yet, for an organization that can amortize the €3.6 million hardware investment over a three-year lifespan, the cost per token drops to fractions of a cent, rendering proprietary API pricing mathematically obsolete for their use case.
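The colocation figures above can be checked with a short calculation. This is a sketch using the numbers from this section (100 kW IT load, PUE of 1.5, €0.06 per kWh, €3.6 million of hardware over 36 months); the 720-hour month and straight-line amortization are simplifying assumptions, and the function names are illustrative.

```python
def monthly_electricity_eur(
    it_load_kw: float,
    pue: float,
    price_per_kwh_eur: float,
    hours: float = 24 * 30,
) -> float:
    """Grid electricity cost for a month: IT load scaled by PUE, times hours and price."""
    facility_load_kw = it_load_kw * pue
    return facility_load_kw * hours * price_per_kwh_eur


def monthly_amortization_eur(capex_eur: float, lifespan_months: int) -> float:
    """Straight-line hardware amortization per month (no residual value assumed)."""
    return capex_eur / lifespan_months


if __name__ == "__main__":
    electricity = monthly_electricity_eur(it_load_kw=100, pue=1.5, price_per_kwh_eur=0.06)
    amortization = monthly_amortization_eur(capex_eur=3_600_000, lifespan_months=36)
    print(f"electricity:  EUR {electricity:,.0f} per month")
    print(f"amortization: EUR {amortization:,.0f} per month")
```

Even at these rates, amortized hardware dominates the monthly bill by more than an order of magnitude over electricity, so the assumed lifespan of the GPUs is the most sensitive input in this model.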

The Hidden Operational Expenses: MLOps and Infrastructure Maintenance

A critical error frequently observed in enterprise budget forecasts is the assumption that self-hosting merely involves paying for hardware and electricity. The most significant hidden cost in the self-hosting versus API equation is human capital.

An API is a fully managed service. If OpenAI’s servers fail in the middle of the night, their engineering teams resolve the outage. If an enterprise self-hosts, it assumes complete responsibility for uptime, latency optimization, and infrastructure resilience. Deploying a 400-billion-parameter model is not a simple software installation; it requires profound expertise in Machine Learning Operations.

The Talent Acquisition Premium

MLOps engineers capable of optimizing distributed inference across multi-GPU nodes are among the most sought-after professionals in the 2026 labor market. They must possess deep knowledge of advanced serving frameworks like vLLM, TensorRT-LLM, and SGLang. They must configure dynamic batching, manage Key-Value (KV) cache memory allocation, and orchestrate complex load balancers to ensure the expensive GPUs are constantly fed with data.

In the European tech ecosystem, salaries reflect this scarcity. In tech hubs such as Berlin, Munich, or Amsterdam, mid-level MLOps engineers command average annual salaries between €68,000 and €76,000. Senior engineers and Directors of Machine Learning Infrastructure routinely surpass €120,000 to €150,000 annually. A resilient production environment requires a dedicated team of at least three to four engineers to ensure 24/7 reliability, manage system updates, and fine-tune model weights as new data becomes available. This introduces an operational expenditure of €300,000 to €500,000 annually simply to maintain the infrastructure that hosts the “free” open-source model.

At Tool1.app, we mitigate this burden for our clients by providing managed MLOps services, allowing businesses to leverage custom open-source deployments without inflating their internal payroll with highly specialized, hard-to-retain talent.

Regulatory Compliance and Data Sovereignty

Financial calculations alone do not dictate the infrastructure strategy of modern European enterprises. Regulatory compliance and data sovereignty have emerged as paramount concerns, frequently acting as the decisive factor forcing organizations away from US-based hyperscalers and APIs.

The enforcement of the European Union Artificial Intelligence Act, coupled with the rigorous standards of the General Data Protection Regulation, places immense liability on corporations processing sensitive data. When an organization utilizes a proprietary API, proprietary data—be it patient health records, internal financial projections, or intellectual property—leaves the corporate firewall and is transmitted to external servers. Even with zero-retention enterprise agreements, legal and compliance departments view this external transmission as an unacceptable risk vector.

Self-hosting an LLM ensures that data remains strictly within the organization’s controlled environment. The model processes the data locally, generates the response, and purges the context, guaranteeing total data sovereignty. However, this compliance is not free.

Building a legally compliant data layer for a self-hosted environment involves deploying enterprise-grade encryption for data at rest and in transit, implementing rigid identity and access management controls, and establishing comprehensive, immutable audit trails. Maintaining these compliance architectures, alongside securing SOC2 or ISO 27001 certifications for the custom infrastructure, can cost an enterprise between €46,000 and €92,000 annually in software licensing and third-party auditing fees.

To bridge this gap, many European organizations are turning to Sovereign AI Cloud providers like Scaleway, Orange, and Iliad. These providers guarantee that the physical servers reside within EU borders and are wholly owned by European entities, shielding the data from foreign legislation such as the US CLOUD Act. Partnering with these providers slightly increases the hourly compute cost but drastically reduces the legal friction of deploying generative technologies in highly regulated sectors like banking, healthcare, and insurance.

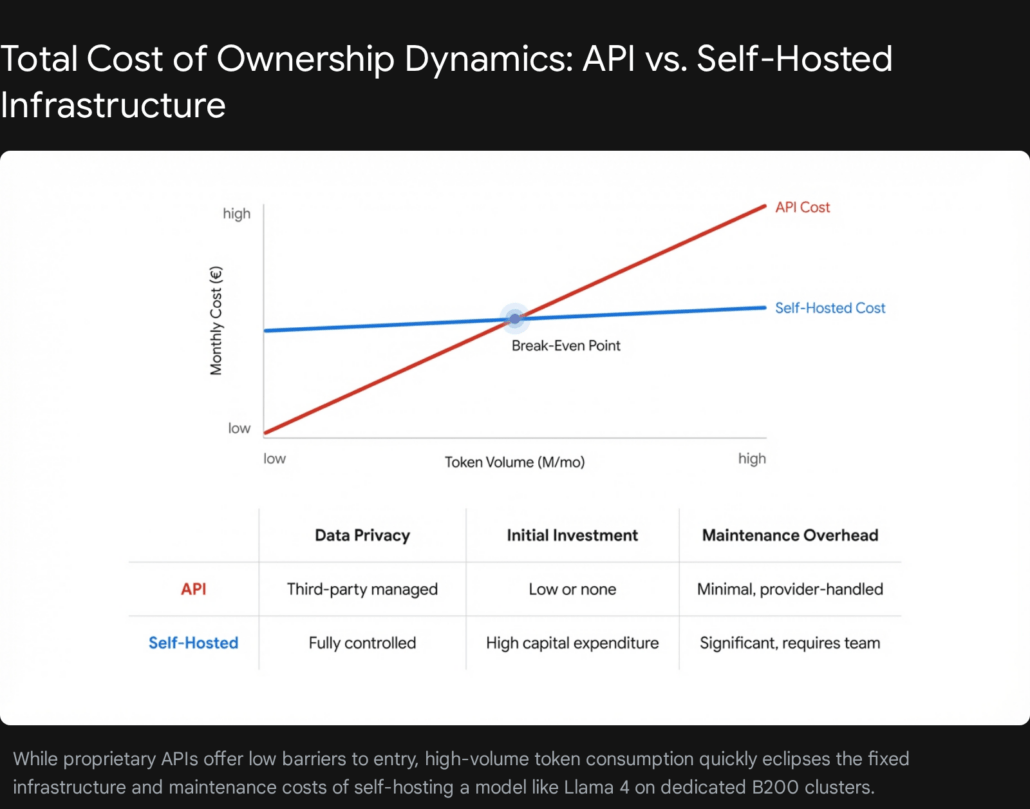

Calculating the Break-Even Intersection

To make a mathematically sound infrastructure decision, CTOs must calculate the exact intersection where the high fixed costs of a self-hosted GPU node fall below the linear, variable costs of an API. This requires an intimate understanding of model throughput, concurrency, and hardware utilization rates.

Let us construct a rigorous financial model comparing the GPT-5 API to a self-hosted Llama 4 Maverick deployment on an 8x B200 cloud instance.

The API Baseline:

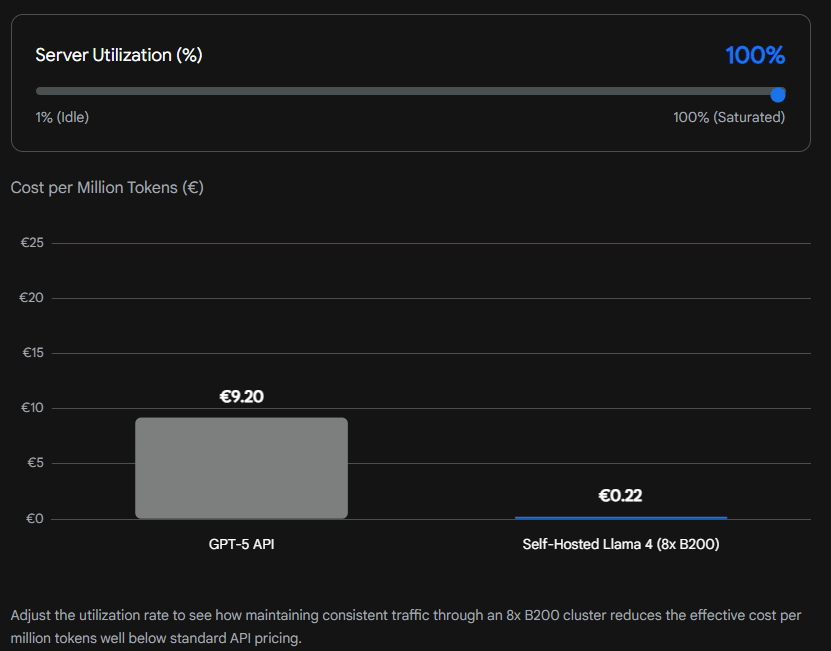

As established, GPT-5 output tokens cost €9.20 per million. Input tokens cost €1.15 per million. For simplicity in this model, assuming a typical enterprise workload heavily weighted toward generation and complex reasoning, we will use a blended average cost of €7.00 per million mixed tokens.

The Self-Hosted Baseline:

Renting an 8x B200 node costs approximately €40.00 per hour.

NVIDIA’s benchmarks indicate that an optimized 8x B200 node running Llama 4 Maverick can achieve peak throughputs approaching 40,000 output tokens per second under maximum concurrent batching. This equates to an astonishing 144 million tokens per hour.

The Mathematical Intersection:

If the enterprise runs the hardware at 100% maximum capacity, processing 144 million tokens every hour, the math is simple:

Hourly Server Cost (€40.00) divided by Hourly Token Production (144 million) results in an effective cost of roughly €0.28 per million tokens.

Compared to the API’s €7.00 per million, the self-hosted solution is over 25 times cheaper. At this volume, the API would cost over €1,000 per hour, making the €40.00 server rental look like a rounding error.

However, real-world enterprise traffic is never perfectly consistent. Traffic spikes during business hours and drops to near zero overnight. If the server is only utilized at a 5% capacity rate throughout the month, it processes only 7.2 million tokens per hour.

Hourly Server Cost (€40.00) divided by 7.2 million tokens results in an effective cost of roughly €5.56 per million tokens.

At a 5% utilization rate, the self-hosted solution is only marginally cheaper than the API, and once the salaries of the MLOps team are factored in, it becomes a severe financial liability. The break-even point is entirely dependent on sustained, high-volume utilization.
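The same model can be expressed as a small calculator so that other utilization rates and fixed costs can be tested. The sketch below uses the figures from this section (€40.00 per hour, 40,000 peak tokens per second, €7.00 blended API price); the function names are illustrative, and the model assumes throughput scales linearly with utilization.

```python
SECONDS_PER_HOUR = 3600


def tokens_per_hour_millions(peak_tps: float, utilization: float) -> float:
    """Millions of tokens produced per hour at a given utilization (0 to 1)."""
    return peak_tps * SECONDS_PER_HOUR * utilization / 1_000_000


def self_hosted_cost_per_million(hourly_rate_eur: float, peak_tps: float, utilization: float) -> float:
    """Effective hardware cost per million tokens at a given utilization."""
    return hourly_rate_eur / tokens_per_hour_millions(peak_tps, utilization)


def break_even_utilization(api_price_eur: float, hourly_rate_eur: float, peak_tps: float) -> float:
    """Utilization at which hardware cost per million tokens equals the API price."""
    return self_hosted_cost_per_million(hourly_rate_eur, peak_tps, 1.0) / api_price_eur


def break_even_monthly_tokens_millions(fixed_monthly_cost_eur: float, api_price_eur: float) -> float:
    """Monthly volume (millions of tokens) at which the API bill equals a fixed monthly cost."""
    return fixed_monthly_cost_eur / api_price_eur


if __name__ == "__main__":
    rate, tps, api = 40.0, 40_000, 7.0
    for utilization in (1.0, 0.5, 0.25, 0.05):
        cost = self_hosted_cost_per_million(rate, tps, utilization)
        print(f"{utilization:>4.0%} utilization: EUR {cost:.2f} per million tokens")
    print(f"hardware-only break-even: {break_even_utilization(api, rate, tps):.1%} utilization")
```

On hardware cost alone the break-even sits at roughly 4% utilization. Adding staffing changes the picture: with the €28,800 monthly rental plus €25,000 per month for the low end of the MLOps team estimate (€300,000 per year), `break_even_monthly_tokens_millions(53_800, 7.0)` gives roughly 7.7 billion tokens per month before self-hosting beats the API.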

Figure: Effective Cost per Million Tokens: The Impact of Hardware Utilization

Strategic Architecture for Cost Reduction

Regardless of whether an enterprise ultimately chooses the managed API route or the sovereign self-hosted route, the economic burden of artificial intelligence can be heavily mitigated through strategic software engineering. Deploying a model is merely the first step; orchestrating how the application interacts with that model is where true cost efficiency is achieved. Partnering with a specialized software development agency like Tool1.app ensures these deep optimizations are built into the foundation of your digital infrastructure from day one.

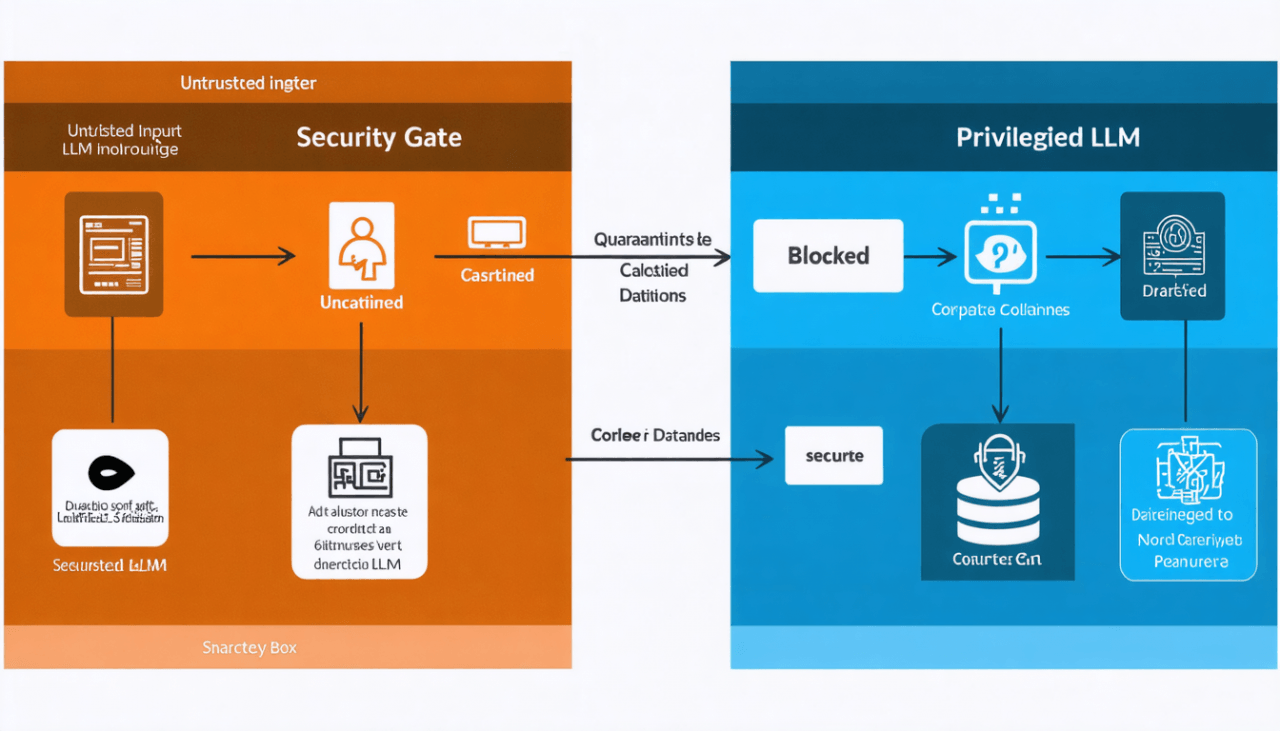

Implementing Semantic Routing

One of the most effective strategies for reducing overall inference costs is semantic routing. It is computationally wasteful to invoke a 400-billion-parameter model to execute a simple task, such as extracting a date from a receipt or determining the sentiment of a basic customer review.

An optimized architecture utilizes a rapid, lightweight classification model to analyze incoming user queries. If the query requires complex logical reasoning, multi-step planning, or deep coding generation, the router directs the payload to the expensive, massive model (e.g., GPT-5 or Llama 4 Maverick). If the query is simple, it routes the payload to a much smaller, highly efficient model (e.g., Llama 3.1 8B or GPT-5-mini). This dynamic dispatching can slash aggregate compute costs by up to 80% without degrading the user experience, reserving the costly compute cycles strictly for tasks that require them.
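The routing idea can be sketched as follows. This is a toy illustration, not a production router: a keyword and length heuristic stands in for the lightweight classification model, and the model identifiers, hint words, and length threshold are all hypothetical.

```python
import re

# Hypothetical identifiers for the two model tiers.
LARGE_MODEL = "llama-4-maverick"
SMALL_MODEL = "llama-3.1-8b"

# Stand-in signal words; a real router would use a trained classifier.
COMPLEX_HINTS = {"refactor", "prove", "plan", "debug", "architecture"}


def classify(query: str, long_query_words: int = 150) -> str:
    """Label a query 'complex' or 'simple' using a crude heuristic."""
    words = re.findall(r"[a-z]+", query.lower())
    if len(words) > long_query_words or COMPLEX_HINTS.intersection(words):
        return "complex"
    return "simple"


def route(query: str) -> str:
    """Pick the model tier for a query."""
    return LARGE_MODEL if classify(query) == "complex" else SMALL_MODEL


def blended_price(simple_share: float, large_price: float, small_price: float) -> float:
    """Average price per million tokens when `simple_share` of traffic goes to the small model."""
    return simple_share * small_price + (1.0 - simple_share) * large_price


if __name__ == "__main__":
    print(route("Extract the date from this receipt"))
    print(route("Debug this race condition and plan a fix"))
    print(f"blended: EUR {blended_price(0.8, 9.20, 1.84):.2f} per million output tokens")
```

Using the GPT-5 and GPT-5-mini output prices from the pricing table, routing 80% of traffic to the smaller model yields a blended price of about €3.31 per million tokens, a reduction of roughly 64%. Because the smaller model costs one fifth of the larger one, the 80% figure is an upper bound that is approached only when nearly all traffic qualifies as simple.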

Maximizing Throughput via Disaggregated Serving

For enterprises operating advanced self-hosted setups, standard deployment configurations often leave expensive hardware underutilized. The process of text generation occurs in two distinct phases: the prefill phase, where the model ingests and analyzes the prompt, and the decode phase, where the model generates the response token by token. The prefill phase is highly parallel and compute-bound, while the decode phase is sequential and memory-bandwidth-bound.

By implementing disaggregated serving, MLOps teams separate these two phases onto different hardware clusters. A cluster of older, cheaper GPUs can handle the heavy prefill computing, and pass the Key-Value cache over a high-speed network to the premium B200 cluster, which specializes in rapid decoding. This ensures that the €40-per-hour B200 instances are never bottlenecked waiting for prompts to be processed, drastically increasing overall system throughput and lowering the effective cost per token.

Practical Implementation: vLLM and Tensor Parallelism

To realize theoretical cost savings on self-hosted hardware, the deployment software must be flawlessly configured. Standard, out-of-the-box execution scripts are catastrophically inefficient for massive models. Utilizing an optimized inference engine like vLLM, which employs PagedAttention to minimize memory fragmentation, is mandatory.

Furthermore, executing a model that exceeds the memory capacity of a single GPU requires precise Tensor Parallelism. This technique slices the model’s weight matrices and distributes them across multiple GPUs. When a matrix multiplication occurs, each GPU calculates a portion of the result simultaneously, communicating via high-speed interconnects like NVLink to combine the final output.
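The slicing described above can be illustrated with plain Python lists. This sketch shows the column-parallel variant only, where each simulated GPU holds a contiguous block of the weight matrix's columns and the partial outputs are concatenated; real engines run this on device tensors with collective communication over NVLink, and the function names here are illustrative.

```python
from typing import List

Matrix = List[List[float]]


def matmul(x: Matrix, w: Matrix) -> Matrix:
    """Multiply a (rows x inner) matrix by an (inner x cols) matrix."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*w)] for row in x]


def split_columns(w: Matrix, shards: int) -> List[Matrix]:
    """Split the columns of `w` into `shards` contiguous, equally sized blocks."""
    cols = len(w[0])
    if cols % shards != 0:
        raise ValueError("column count must be divisible by the number of shards")
    step = cols // shards
    return [[row[start:start + step] for row in w] for start in range(0, cols, step)]


def tensor_parallel_matmul(x: Matrix, w: Matrix, shards: int) -> Matrix:
    """Column-parallel matmul: each shard computes its slice of the output columns."""
    # Each entry of `partials` is what one simulated GPU would compute independently.
    partials = [matmul(x, shard) for shard in split_columns(w, shards)]
    # Stand-in for the all-gather step: concatenate the column blocks row by row.
    return [sum((partial[r] for partial in partials), []) for r in range(len(x))]


if __name__ == "__main__":
    x = [[1, 2], [3, 4]]
    w = [[1, 0, 2, 1], [0, 1, 1, 3]]
    print(tensor_parallel_matmul(x, w, shards=2))
    print(tensor_parallel_matmul(x, w, shards=2) == matmul(x, w))
```

The sharded result matches the single-device product exactly; what changes is that each shard needs only its own slice of the weights in memory, at the price of a communication step after the multiplication.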

The following is a conceptual representation of how a dedicated engineering team might launch a high-throughput, OpenAI-API-compatible server for Llama 4 Scout (109B) using vLLM on an 8x B200 node.

```bash
# Example deployment of Llama 4 Scout (109B total, 17B active parameters) using vLLM on an 8x B200 node.
# Flag names follow the vLLM CLI; verify them against the installed vLLM version.
python3 -m vllm.entrypoints.openai.api_server \
  --model meta-llama/Llama-4-Scout-17B-16E-Instruct \
  --tensor-parallel-size 8 \
  --max-model-len 32768 \
  --gpu-memory-utilization 0.95 \
  --quantization fp8
# --enforce-eager is left out: it disables CUDA graph capture, which usually lowers throughput.
```

By explicitly configuring --tensor-parallel-size 8, the system shards the model’s weights and computation evenly across all eight GPUs in the node. Setting --quantization fp8 stores the weights in 8-bit floating point, a format the NVIDIA B200’s Tensor Cores support natively. This roughly halves the weight memory footprint compared to standard 16-bit precision and accelerates token generation, typically with little perceptible degradation in the quality of the generated response. It is this exact caliber of low-level infrastructure optimization that empowers a self-hosted environment to mathematically outperform proprietary APIs.

Conclusion: Making the Definitive Infrastructure Choice

The decision between utilizing a proprietary cloud API and hosting a custom open-weight model locally is arguably the most consequential architectural choice a modern enterprise will face in this decade. Cloud APIs offer unparalleled ease of deployment, zero infrastructure maintenance overhead, and immediate access to the absolute cutting edge of artificial reasoning. For startups, proof-of-concept projects, and businesses with highly sporadic traffic patterns, the API model remains the only logical choice.

However, the economics fundamentally invert as enterprise adoption matures. As digital products scale and internal workflows become heavily automated, token consumption inevitably reaches the hundreds of millions or billions per month. At this threshold, the variable financial burden of APIs becomes unsustainable, effectively penalizing a company for its own growth. Concurrently, the lack of sovereign data control presents regulatory risks that multinational corporations simply cannot absorb.

Self-hosting open-source frontier models like Llama 4 on premium hardware like the NVIDIA B200 redefines the unit economics of intelligence. It requires an intimidating commitment to high fixed infrastructure costs and demands the recruitment of specialized engineering talent. Yet, for high-volume, enterprise-grade applications, it drops the marginal cost per generated token to near zero. It grants absolute control over data privacy, insulates the business from arbitrary vendor pricing shifts, and transforms artificial intelligence from a runaway operational expense into a manageable, scalable, and highly proprietary corporate asset.

Architect Your AI Future with Expert Precision

Navigating the labyrinthine complexities of hardware procurement, multi-GPU model optimization, MLOps pipeline orchestration, and GDPR-compliant infrastructure requires specialized, hard-to-find expertise. An enterprise should not have to gamble its engineering budget attempting to perfect this transition internally.

Let Tool1.app analyze your current generative artificial intelligence infrastructure and build a cost-effective, hyper-optimized custom LLM solution tailored specifically to your exact business volume, latency requirements, and security mandates. Whether your product roadmap necessitates a heavily optimized semantic routing layer for existing API integrations, or a fully sovereign, self-hosted deployment running on European data centers, our team of expert software developers and machine learning engineers will ensure your intelligent systems scale seamlessly and profitably. Stop paying a premium for intelligence. Contact us today to secure your technological advantage and transform your infrastructure into a strategic competitive moat.
