Claude 3.5 vs. Gemini 1.5 Pro: Which LLM is Best for Enterprise Customer Support?

Table of Contents

- The Architectural Divide in Memory Management

- Database Retrieval Versus Infinite Context Ingestion

- Analytical Performance and Factual Groundedness

- Function Calling: The Anatomy of an Autonomous Support Agent

- Economics of Scale: Enterprise Pricing Structures

- Multilingual Support and Global Enterprise Reach

- Data Privacy, Security, and Compliance Standards

- Latency, Throughput, and the Real-Time User Experience

- Strategic Verdict: Architecting for the Future

- Transform Your Customer Experience with Custom Solutions

The landscape of enterprise customer support is undergoing a massive architectural transformation. Gone are the days of rigid, decision-tree chatbots that frustrate users and escalate queries to human agents at the slightest deviation from a pre-programmed script. Today, highly advanced foundational models are capable of processing complex, multi-turn customer issues, querying internal databases in real-time, and generating human-like responses that resolve tickets autonomously. For chief technology officers, IT directors, and business owners, the question is no longer whether to implement artificial intelligence, but which foundational infrastructure to build upon to maximize return on investment while safeguarding corporate data.

In the current enterprise arena, two foundational systems have emerged as the dominant choices for complex logic and agentic workflows: Anthropic’s Claude 3.5 Sonnet and Google’s Gemini 1.5 Pro. Both systems offer exceptional intelligence, but they represent fundamentally different engineering philosophies regarding context memory, pricing economics, and ecosystem integration. Choosing the right engine dictates not only your operational costs but also the latency of your customer interactions, the accuracy of your automated resolutions, and your compliance with strict global data privacy frameworks.

At Tool1.app, a software development agency specializing in mobile and web applications, custom websites, Python automations, and advanced artificial intelligence solutions for business efficiency, we frequently guide enterprises through this exact architectural decision. We architect custom applications that bridge the gap between raw algorithmic capabilities and tangible business value. This comprehensive engineering and business analysis will dissect Claude 3.5 Sonnet and Gemini 1.5 Pro across context window limitations, analytical performance benchmarks, tool-use precision, pricing structures, and European data compliance, providing a definitive guide on which system is best suited for your enterprise customer support infrastructure.

The Architectural Divide in Memory Management

The most glaring technical distinction between Claude 3.5 Sonnet and Gemini 1.5 Pro lies in their context window capacity. The context window is the active memory of the system—the maximum amount of text, code, or data it can hold in its working memory during a single interaction before it begins to lose track of earlier information. Understanding how each provider approaches memory is the first step in architecting a robust customer service platform.

Claude 3.5 Sonnet operates with a 200,000-token context window. To put this in perspective, 200,000 tokens equate to roughly 150,000 words, or about 500 pages of standard text. This is more than sufficient for standard customer support interactions, allowing the application to ingest the user’s current query, a lengthy history of past interactions, and several pages of relevant product documentation retrieved on the fly. For the vast majority of consumer-facing inquiries—such as checking a shipping status, troubleshooting a software glitch, or processing a return—this capacity provides ample cognitive workspace.

Gemini 1.5 Pro, however, fundamentally disrupts traditional memory constraints by offering an unprecedented 1 million to 2 million-token context window. This capacity represents a paradigm shift in how data can be processed. Two million tokens can encompass entire corporate codebases, hour-long customer service video call transcripts, or an enterprise’s entire library of standard operating procedures, technical manuals, and legal compliance guidelines.
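As a rough planning aid, the word-to-token ratio quoted above (200,000 tokens for about 150,000 words) can be turned into a quick fit check. The sketch below is a heuristic only: the 0.75 words-per-token ratio comes from that approximation, the window sizes are the figures cited in this article, and the function and dictionary names are illustrative. Real token counts depend on each provider's tokenizer.

```python
# Rough context-window fit check based on the article's approximation
# (200,000 tokens ~= 150,000 words, i.e. about 0.75 words per token).
WORDS_PER_TOKEN = 0.75  # heuristic, not a real tokenizer

# Window sizes as cited in this article (the dictionary name is illustrative)
CONTEXT_WINDOWS = {
    "claude-3.5-sonnet": 200_000,
    "gemini-1.5-pro": 2_000_000,
}


def estimate_tokens(word_count: int) -> int:
    """Estimate the token count for a text of the given word count."""
    return round(word_count / WORDS_PER_TOKEN)


def fits_in_window(word_count: int, model: str, reserve_for_output: int = 4_000) -> bool:
    """Return True if the estimated prompt still leaves room for the reply."""
    budget = CONTEXT_WINDOWS[model] - reserve_for_output
    return estimate_tokens(word_count) <= budget


# Example: a 400,000-word library of manuals and procedures
for model_name in CONTEXT_WINDOWS:
    print(model_name, fits_in_window(400_000, model_name))
```

A check like this is only useful for early sizing; before committing to an architecture, it is worth measuring real prompts with the provider's own token counting.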

Database Retrieval Versus Infinite Context Ingestion

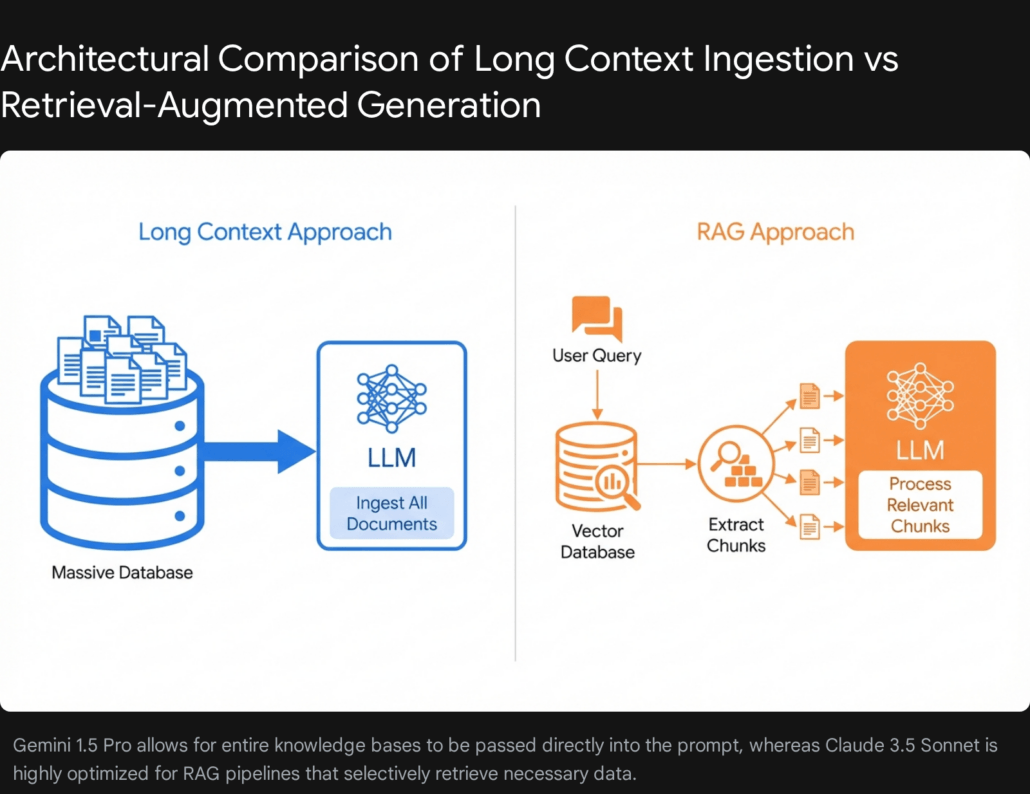

The advent of massive context windows has sparked a fierce debate among software engineers regarding the necessity of complex database retrieval systems. Retrieval-Augmented Generation (RAG) is a technique where a system searches a secure corporate database for the snippets of information relevant to a user's question and passes only those snippets to the model to generate the answer.

For enterprise customer support, relying solely on a massive context window—feeding the entire company knowledge base into the prompt for every single user query—is technically possible but practically flawed for real-time applications. Processing millions of tokens for every chat message incurs severe latency penalties. While tests indicate that systems can maintain recall capabilities across massive datasets, the time required to generate the first word of a response can extend to 30 or 40 seconds when processing a million tokens. In a live customer support chat widget on an e-commerce website, a 40-second delay is catastrophic for user experience, leading to high abandonment rates and frustrated clients. Furthermore, the computational cost of repeatedly processing an entire technical manual for a simple password reset query is economically unsustainable at scale.

Conversely, Claude 3.5 Sonnet is arguably the industry’s premier foundational system for retrieval-based architectures. By coupling Claude with a fast vector database, businesses can retrieve the exact paragraph needed to solve a customer’s problem and pass only a few thousand tokens to the model. This results in sub-second response times and substantially lower operational costs. Industry testing indicates that retrieval-based systems can achieve a cost per query up to 1,250 times lower than pure long-context approaches while maintaining predictable, low latency.

Therefore, for asynchronous support scenarios—such as analyzing a massive email thread spanning several months with dozens of attached server log files—a massive context window is unparalleled. For real-time, instantaneous chat interfaces, a well-architected retrieval system remains the superior engineering choice. At Tool1.app, we specialize in building these precise data pipelines, ensuring your customer support automation accesses your proprietary data instantly without breaking your operational budget or sacrificing response speeds.
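To make the retrieval idea concrete, here is a minimal sketch of the "retrieve first, then prompt" pattern. It is deliberately a toy: it ranks snippets with bag-of-words cosine similarity in pure Python, whereas a production pipeline would use an embedding model and a vector database. The knowledge-base entries and all function names are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge-base snippets; a real system would store embeddings
# of these in a vector database instead of comparing raw word counts.
KNOWLEDGE_BASE = [
    "To reset your password, open Settings and choose Reset Password.",
    "Returns are accepted within 30 days of delivery with proof of purchase.",
    "Standard shipping takes three to five business days within the EU.",
]


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its word occurrences."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Return the top_k documents most similar to the query."""
    query_vec = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: cosine_similarity(query_vec, tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query: str, documents: list[str]) -> str:
    """Assemble a compact prompt containing only the retrieved snippets."""
    context = "\n".join(retrieve(query, documents))
    return f"Answer using only this context:\n{context}\n\nCustomer question: {query}"


print(build_prompt("How do I reset my password?", KNOWLEDGE_BASE))
```

The point of the design is that the prompt grows with the size of the retrieved snippets, not with the size of the knowledge base, which is what keeps latency and cost predictable.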

Analytical Performance and Factual Groundedness

Customer support is rarely a straightforward discipline. Customers frequently submit convoluted complaints, demand exceptions to established policies, and present highly specific edge-case scenarios that confuse even veteran human agents. The value of an automated system is directly tied to its cognitive performance—its ability to understand nuance, follow complex multi-step corporate instructions, and avoid hallucinating false information.

Anthropic engineered Claude 3.5 Sonnet with a heavy emphasis on advanced analytical performance, nuance, and strict instruction following. In industry-standard evaluations testing graduate-level comprehension, coding proficiency, and complex logic, the updated versions of Claude 3.5 Sonnet consistently set the high-water mark among mid-tier and flagship models alike. When dealing with frustrated customers, this system exhibits a marked capability in grasping humor, frustration, and complex constraints. It is exceptionally skilled at maintaining a natural, relatable, and empathetic tone out of the box, which is a critical soft skill for customer-facing applications.

Furthermore, Anthropic’s training methodology makes its systems highly resistant to manipulation. In customer support, an automated agent must never invent a refund policy that does not exist or offer unauthorized discounts. The system demonstrates rigorous factual grounding: if it does not know the answer, or if the provided documentation lacks the necessary information, it is trained to admit its limitations politely and escalate the ticket to a human agent rather than fabricate a plausible but incorrect resolution.

Gemini 1.5 Pro is an exceptionally powerful analytical engine as well, particularly excelling in multimodal tasks. If your customer support workflow involves users uploading images of broken physical products, video clips of software bugs, or audio recordings of strange engine noises, Gemini 1.5 Pro natively processes these inputs with extraordinary accuracy. Google has heavily optimized its architecture to synthesize data across different media types simultaneously, making it an incredible asset for omnichannel support desks that deal in visual diagnostics.

However, in pure text-based logical deduction and adherence to strict, multi-layered corporate policies, developers often note that Anthropic’s systems require significantly less prompt engineering to maintain strict boundary adherence. To illustrate the performance differences clearly, we can evaluate their capabilities across a structured matrix.

| Feature Area | Claude 3.5 Sonnet | Gemini 1.5 Pro |
| --- | --- | --- |
| Context Limit | 200,000 Tokens | 1,000,000 to 2,000,000 Tokens |
| Logical Deduction | Exceptional; highly resistant to prompt injection | Strong; excels in synthesis across massive documents |
| Multimodal Input | Native Image Support | Native Image, Video, and Audio Support |
| Tone & Empathy | Highly natural, expressive, and conversational | Factual, direct, and highly informative |
| Factual Grounding | Strict adherence to provided context | Very strong, aided by real-time search capabilities |
| Primary Strength | Complex, multi-step backend routing and logic | Cross-referencing massive amounts of disparate data |

Function Calling: The Anatomy of an Autonomous Support Agent

A chatbot that only answers questions is merely a conversational search engine. A true autonomous customer support agent must take action: it needs to check shipping statuses via an external interface, issue refunds through a secure payment gateway, or update a user’s billing address in a Customer Relationship Management platform. This requires a technical capability known as Function Calling or Tool Use.

Both systems support this capability, allowing developers to define a set of external tools via structured schemas that the application can decide to use. When the user asks, “Where is my order?”, the system recognizes it needs external data, pauses its text generation, outputs a structured request to call a specific internal function, waits for the developer’s server to return the data, and then formulates a natural language response to the user.

Claude 3.5 Sonnet is widely considered best-in-class for function calling precision. Independent developer consensus highlights its ability to handle complex, nested schemas without missing required parameters or hallucinating unsupported actions. It excels at multi-step workflows—for instance, first querying a user database to find an account identifier, then using that identifier to query a separate billing platform, and finally calculating a prorated refund based on the combined results.

Gemini 1.5 Pro also supports robust function calling and offers the unique advantage of built-in internet search grounding, allowing it to pull real-time web data natively without custom integrations. However, when orchestrating strict internal enterprise databases, Claude’s adherence to exact formatting and its error-recovery logic—the ability to recognize that an internal system threw an error and autonomously try a different parameter—is highly resilient.

Implementation Architecture for Automated Workflows

To illustrate the practical value of these capabilities, consider how a Python backend orchestrates an interaction. When developing custom software at Tool1.app, we utilize official software development kits to create seamless, multi-turn execution loops. Below is a conceptual implementation demonstrating how an application evaluates a user’s query, decides to use a defined tool, and processes the result to resolve a customer ticket.

```python
import anthropic

# Initialize the secure client connection
client = anthropic.Anthropic(api_key="SECURE_API_KEY")

# Define the custom corporate tool the application can utilize.
# The original listing was truncated at this point; the schema below is
# reconstructed from the tool and parameter names used later in the code,
# and the description strings are illustrative.
corporate_tools = [
    {
        "name": "get_order_status",
        "description": "Look up the current shipping status of a customer order.",
        "input_schema": {
            "type": "object",
            "properties": {
                "order_id": {
                    "type": "string",
                    "description": "The order identifier, for example ORD-9921."
                }
            },
            "required": ["order_id"]
        }
    }
]


# A simulated backend function representing a corporate database lookup
def execute_get_order_status(order_id):
    # In a production environment, this queries an ERP or e-commerce backend
    mock_logistics_database = {
        "ORD-9921": "Status: Shipped. Courier out for delivery today.",
        "ORD-1102": "Status: Processing. Expected warehouse dispatch tomorrow."
    }
    return mock_logistics_database.get(order_id, "Error: Order ID not found in the logistics system.")


def extract_text(response):
    # A response holds a list of content blocks; join the text blocks only
    return "".join(block.text for block in response.content if block.type == "text")


def handle_customer_inquiry(user_message):
    conversation_history = [{"role": "user", "content": user_message}]

    # Step 1: Send the query and the tool definitions to the processing engine
    initial_response = client.messages.create(
        model="claude-3-5-sonnet-20241022",
        max_tokens=1024,
        tools=corporate_tools,
        messages=conversation_history
    )

    # Step 2: Determine if the engine requested an action
    if initial_response.stop_reason == "tool_use":
        # Locate the tool request explicitly rather than assuming its position
        tool_call = next(block for block in initial_response.content if block.type == "tool_use")

        if tool_call.name == "get_order_status":
            extracted_order_id = tool_call.input["order_id"]
            # Step 3: Execute the local Python function on the secure server
            database_result = execute_get_order_status(extracted_order_id)
        else:
            database_result = f"Error: Unknown tool '{tool_call.name}'."

        # Step 4: Append the action result back into the conversation history
        conversation_history.append({"role": "assistant", "content": initial_response.content})
        conversation_history.append({
            "role": "user",
            "content": [
                {
                    "type": "tool_result",
                    "tool_use_id": tool_call.id,
                    "content": database_result
                }
            ]
        })

        # Step 5: Generate the final natural language response for the customer
        final_resolution = client.messages.create(
            model="claude-3-5-sonnet-20241022",
            max_tokens=1024,
            tools=corporate_tools,
            messages=conversation_history
        )
        return extract_text(final_resolution)

    # No tool was requested, so the first response already answers the customer
    return extract_text(initial_response)


# Example Execution Trigger
customer_input = "Hi support, can you please tell me where order ORD-9921 is? I need it by tonight."
print(handle_customer_inquiry(customer_input))
```

This architecture allows the system to act as the cognitive brain of your operations, interfacing dynamically with your existing software ecosystem. The application does not guess the shipping status; it deterministically routes the request to your actual logistics database and translates the raw data into a polite, conversational update. Building this middleware robustly is paramount for enterprise success.
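The error-recovery behaviour discussed earlier depends on the backend reporting failures in a form the model can read. The sketch below shows one way to do that: every tool call is wrapped so that exceptions become a `tool_result` block flagged with `is_error`, a field the Anthropic Messages API accepts on tool results. The registry, the handler, and the `run_tool` helper are hypothetical names introduced for illustration.

```python
# Hypothetical local handler; raises when the order cannot be found
def get_order_status(order_id: str) -> str:
    orders = {"ORD-9921": "Shipped. Courier out for delivery today."}
    if order_id not in orders:
        raise KeyError(f"Order {order_id} not found")
    return orders[order_id]


# Hypothetical registry mapping tool names to local Python callables
TOOL_REGISTRY = {"get_order_status": get_order_status}


def run_tool(tool_name: str, tool_input: dict, tool_use_id: str) -> dict:
    """Execute a requested tool and wrap the outcome as a tool_result block.

    Failures are reported with is_error=True instead of being raised, so the
    model can see what went wrong and decide how to proceed.
    """
    try:
        handler = TOOL_REGISTRY[tool_name]  # unknown tool names raise KeyError
        output = handler(**tool_input)      # wrong parameters raise TypeError
        return {
            "type": "tool_result",
            "tool_use_id": tool_use_id,
            "content": str(output),
        }
    except Exception as exc:  # broad on purpose: any failure goes back to the model
        return {
            "type": "tool_result",
            "tool_use_id": tool_use_id,
            "content": f"Tool error: {exc}",
            "is_error": True,
        }


print(run_tool("get_order_status", {"order_id": "ORD-9921"}, "toolu_example_1"))
print(run_tool("get_order_status", {"order_id": "ORD-0000"}, "toolu_example_2"))
```

Returning the error to the model, rather than crashing the request, is what gives it the chance to retry with a corrected parameter or hand the ticket to a human agent.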

Economics of Scale: Enterprise Pricing Structures

When deploying automated solutions to handle tens of thousands of customer support tickets monthly, processing economics become a primary operational expense. Both providers have aggressively priced their mid-tier offerings to be cost-effective for enterprise adoption, but their billing structures differ significantly, especially when considering advanced memory caching features.

To evaluate the financial impact accurately, we must analyze the operational cost per one million processed tokens. As corporate budgeting in European markets requires strict financial forecasting, all pricing figures have been converted from US dollars to euros (€) for 2026 budgeting purposes.

Claude 3.5 Sonnet Financial Architecture

Anthropic utilizes a highly optimized pricing model, balancing frontier intelligence with mid-tier processing costs.

| Billing Component | Cost per 1 Million Tokens |
| --- | --- |
| Standard Input Processing | €2.76 |
| Standard Output Generation | €13.80 |
| Memory Cache Write (Storage) | €3.45 |
| Memory Cache Read (Recall) | €0.28 |

To mitigate costs for repetitive workflows, such as sending the same 50-page company policy manual to the system in every customer interaction, Anthropic introduced Prompt Caching. If your customer support platform references a static knowledge base, caching that data cuts the recurring input cost by roughly 90%. After the cache write at €3.45 per million tokens, subsequent requests that hit the cache read the data at €0.28 per million tokens instead of paying the standard €2.76. This makes the architecture highly efficient for high-volume applications where the foundational instructions remain constant across thousands of parallel chats.
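The caching arithmetic is easy to verify with the table's own figures. The sketch below compares resending a static prefix on every request against writing it to the cache once and reading it afterwards. It assumes the cache entry stays alive for every request, which is a simplification, and it uses a 20,000-token prefix as a rough stand-in for the 50-page manual (based on the 500 pages per 200,000 tokens approximation used earlier). The function names are illustrative.

```python
# Prices per one million tokens in EUR, taken from the table above
INPUT_PRICE = 2.76
CACHE_WRITE_PRICE = 3.45
CACHE_READ_PRICE = 0.28


def cost_without_cache(static_tokens: int, requests: int) -> float:
    """Cost of resending the static prefix as normal input on every request."""
    return static_tokens * requests * INPUT_PRICE / 1_000_000


def cost_with_cache(static_tokens: int, requests: int) -> float:
    """Cost of one cache write followed by cache reads on later requests.

    Simplification: assumes the cache entry never expires between requests.
    """
    if requests == 0:
        return 0.0
    write = static_tokens * CACHE_WRITE_PRICE / 1_000_000
    reads = static_tokens * (requests - 1) * CACHE_READ_PRICE / 1_000_000
    return write + reads


manual_tokens = 20_000   # roughly a 50-page manual
monthly_chats = 10_000

uncached = cost_without_cache(manual_tokens, monthly_chats)
cached = cost_with_cache(manual_tokens, monthly_chats)
print(f"Uncached prefix cost: EUR {uncached:.2f}")
print(f"Cached prefix cost:   EUR {cached:.2f}")
print(f"Saving:               {100 * (1 - cached / uncached):.1f}%")
```

Note that a single request is slightly more expensive with caching, because the write is priced above standard input; the saving only appears once the cached prefix is reused.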

Gemini 1.5 Pro Financial Architecture

Google’s pricing matrix is tiered based on the sheer size of the prompt, rewarding smaller interactions while uniquely accommodating massive document ingestions without breaking the system constraints.

| Billing Component | Cost per 1 Million Tokens |
| --- | --- |
| Input Processing (≤ 200k tokens) | €1.15 |
| Input Processing (> 200k tokens) | €2.30 |
| Output Generation (≤ 200k tokens) | €9.20 |
| Output Generation (> 200k tokens) | €13.80 |
| Context Cache Storage | €4.14 (per hour of retention) |
| Context Cache Read | €0.12 |

From a pure baseline cost perspective, Gemini 1.5 Pro is the more frugal choice for un-cached interactions, offering text inputs at less than half the cost (for prompts under 200k tokens) and output generation that is roughly 33% cheaper. If your support volume is massive and operates largely without static, highly repetitive background data, this architecture will yield a significantly lower monthly expenditure.

However, if your customer support automation relies heavily on large, static system prompts, detailed user personas, and extensive standard operating procedures that can be aggressively cached, the cached-read economics level the financial playing field dramatically. A comprehensive cost-benefit analysis must factor in not just the raw unit price, but the specific architectural caching strategy employed by your software development team.
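For a first-pass comparison of un-cached costs, the two price tables can be encoded directly. The sketch below applies Gemini's prompt-size tiers and Claude's flat rates to a single ticket; caching and cache storage fees are deliberately left out. The per-ticket token counts are hypothetical, and the function names are illustrative.

```python
# Per-million-token prices in EUR, copied from the two tables above
CLAUDE_PRICES = {"input": 2.76, "output": 13.80}
GEMINI_PRICES = {
    "short": {"input": 1.15, "output": 9.20},   # prompts up to 200k tokens
    "long": {"input": 2.30, "output": 13.80},   # prompts above 200k tokens
}
GEMINI_TIER_THRESHOLD = 200_000


def claude_cost(input_tokens: int, output_tokens: int) -> float:
    """Un-cached cost of one request at Claude's flat rates."""
    return (input_tokens * CLAUDE_PRICES["input"]
            + output_tokens * CLAUDE_PRICES["output"]) / 1_000_000


def gemini_cost(input_tokens: int, output_tokens: int) -> float:
    """Un-cached cost of one request, with the tier chosen by prompt size."""
    tier = "short" if input_tokens <= GEMINI_TIER_THRESHOLD else "long"
    prices = GEMINI_PRICES[tier]
    return (input_tokens * prices["input"]
            + output_tokens * prices["output"]) / 1_000_000


# Hypothetical workload: 50,000 tickets per month, 3,000 input and 400 output tokens each
tickets = 50_000
print(f"Claude monthly: EUR {tickets * claude_cost(3_000, 400):.2f}")
print(f"Gemini monthly: EUR {tickets * gemini_cost(3_000, 400):.2f}")
```

Swapping in your own ticket volumes and token counts, and then layering the caching figures on top, gives a far more reliable forecast than comparing headline unit prices.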

Multilingual Support and Global Enterprise Reach

For enterprises operating across international borders, an automated customer support platform must communicate flawlessly in the native language of the user. Translation delays, rigid phrasing, or grammatical errors immediately degrade trust and signal to the user that they are dealing with an inferior, highly automated system.

Google’s historical legacy as the world’s premier search and translation infrastructure heavily influences its architectural superiority in this domain. Gemini 1.5 Pro supports over 40 languages natively. It has been trained on a massive, globally diverse dataset, allowing it to understand local idioms, cultural nuances, and complex grammatical structures in languages ranging from Spanish and French to Japanese, Korean, and Arabic. If your customer base is heavily localized across multiple non-English speaking regions, this system provides a seamless, highly fluent experience out of the box.

Claude 3.5 Sonnet also possesses strong multilingual capabilities and handles major European languages with exceptionally high proficiency. Developers consistently note that it is particularly adept at translating technical documentation, software code, and formal corporate communications. However, for sheer breadth of language support and the fluidity of conversational dialects in less common regional languages, Google’s infrastructure retains a distinct edge.

| Linguistic Capability | Claude 3.5 Sonnet | Gemini 1.5 Pro |
| --- | --- | --- |
| Primary Languages (English, German, French) | Exceptional fluency and nuance | Exceptional fluency and nuance |
| Total Native Language Support | Strong across major global languages | 40+ languages natively supported |
| Cultural Idioms and Dialects | Good, but skews formal | Excellent, highly trained on diverse regional data |
| Translation Speed | Very fast for primary languages | Highly optimized across all supported dialects |

We advise clients to perform a rigorous audit of their user demographics. If 90% of your support tickets are in English, German, and French, Claude 3.5 Sonnet will perform impeccably and offer superior backend logic. Conversely, if you are a global e-commerce brand handling long-tail languages across Southeast Asia and Latin America, leaning into a system trained extensively on diverse global data minimizes translation friction and improves customer satisfaction scores.
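The audit itself can start very simply. Assuming your helpdesk export already tags each ticket with a language code (the sample data and function names below are hypothetical), a few lines are enough to see how much of your volume falls into the core languages mentioned above.

```python
from collections import Counter


def language_share(ticket_languages: list[str]) -> dict[str, float]:
    """Return each language's share of total ticket volume (0.0 to 1.0)."""
    counts = Counter(ticket_languages)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {lang: count / total for lang, count in counts.most_common()}


def core_language_coverage(ticket_languages: list[str],
                           core: tuple[str, ...] = ("en", "de", "fr")) -> float:
    """Share of tickets written in the given core languages."""
    shares = language_share(ticket_languages)
    return sum(shares.get(lang, 0.0) for lang in core)


# Hypothetical export: one language code per ticket
sample_tickets = ["en"] * 60 + ["de"] * 20 + ["fr"] * 12 + ["th"] * 5 + ["vi"] * 3
print(language_share(sample_tickets))
print(f"Core language coverage: {core_language_coverage(sample_tickets):.0%}")
```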

Data Privacy, Security, and Compliance Standards

Perhaps the most critical hurdle in deploying automated platforms in customer support is data security. Support tickets routinely contain Personally Identifiable Information, payment details, physical addresses, and sensitive account histories. Feeding this data into a public system that uses it to train future versions can violate the General Data Protection Regulation (GDPR), the Health Insurance Portability and Accountability Act (HIPAA), and standard corporate governance protocols.

Fortunately, both providers offer enterprise-grade compliance frameworks, but their deployment mechanisms and specific certifications dictate how they must be integrated into your corporate infrastructure.

Cloud Security and Regional Data Residency

Deploying Gemini 1.5 Pro via Google Cloud provides deep integration into an established enterprise security ecosystem. Google offers explicit Data Processing Agreements and supports healthcare compliance via Business Associate Agreements. For European businesses operating under the strict parameters of the GDPR, Google Cloud offers robust regional data residency controls. Administrators can legally lock data processing and storage to specific European Union regions, such as the europe-west4 data center located in the Netherlands or the europe-west3 center in Frankfurt. Google guarantees that corporate prompts and generated responses are strictly isolated and not used to train their foundational systems. Furthermore, this architecture integrates natively with Virtual Private Cloud Service Controls and allows for Customer-Managed Encryption Keys, ensuring that your support data never traverses the public internet and remains entirely under your cryptographic control.

Anthropic is equally committed to enterprise security, maintaining critical certifications including SOC 2 Type II, ISO 27001, and ISO 42001. By default, Anthropic’s commercial service does not use corporate data for system training. They also offer Zero-Data-Retention configurations for highly strict compliance environments, meaning that incoming prompts are processed entirely in temporary memory and instantly purged without ever being written to persistent disk storage.

For European data residency, Anthropic partners with major infrastructure providers. Claude 3.5 Sonnet can be deployed through Amazon Web Services Bedrock or Google Cloud Vertex AI. By provisioning the system within an EU-based cloud region, European enterprises can use Anthropic’s models while keeping data processing within the European Union, which supports GDPR data-residency requirements.

| Compliance & Security Feature | Anthropic (via Enterprise API / Cloud) | Google Cloud (Gemini Enterprise) |
| --- | --- | --- |
| Model Training on User Data | Strictly prohibited by default | Strictly prohibited by default |
| GDPR & EU Data Residency | Supported via AWS/GCP region locking | Supported (e.g., europe-west4) |
| SOC 2 Type II & ISO 27001 | Certified | Certified |
| HIPAA Compliance (BAA) | Available for enterprise tiers | Available for enterprise tiers |
| Zero-Data-Retention Option | Available for strict compliance | Configurable via cloud admin policies |

Deploying these systems securely is not merely about toggling a configuration setting in a dashboard; it requires architecting secure gateways, managing encryption key lifecycles, and ensuring Personally Identifiable Information is rigorously redacted before it ever reaches the processing engine. When building custom websites and mobile applications at Tool1.app, we implement stringent middleware layers that scrub sensitive data in transit, so that your deployment is both highly capable and built for compliance.
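As an illustration of what such a scrubbing layer does, here is a minimal sketch that masks email addresses and card-like digit sequences before a message is forwarded to any model. The two regular expressions are illustrative assumptions and far from complete; a production redaction layer needs broader coverage (names, addresses, national identifiers) and proper validation.

```python
import re

# Illustrative patterns only; real redaction needs much broader coverage
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 16 digits, optionally separated by single spaces or hyphens
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def redact_pii(text: str) -> str:
    """Replace email addresses and card-like numbers with placeholders."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    text = CARD_PATTERN.sub("[CARD]", text)
    return text


raw_message = "Contact jane.doe@example.com, card 4111 1111 1111 1111, order ORD-9921."
print(redact_pii(raw_message))
```

Short identifiers such as order numbers pass through untouched, so the model can still resolve the ticket while the sensitive fields never leave your infrastructure.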

Latency, Throughput, and the Real-Time User Experience

In software engineering, there is a fundamental truth: speed is a feature. In customer support, latency directly correlates to user frustration. When a customer sends a message detailing a critical issue, they expect the typing indicator to appear almost instantly, followed by a rapid, coherent stream of text.

When evaluating these systems for real-time applications, two operational metrics take precedence:

- Time to First Token: How long it takes for the system to process the input and begin generating the very first word of the response.

- Tokens Per Second: The speed at which the system generates the remainder of the answer once it has started.

Claude 3.5 Sonnet was engineered specifically to operate at highly accelerated speeds compared to previous generations. In independent evaluations, the system consistently registers exceptionally low latency, often achieving a Time to First Token of under one second and generating output at speeds exceeding 60 tokens per second. This remarkable throughput makes the platform feel incredibly snappy and responsive in a live chat environment, mimicking the cadence of a fast-typing human agent.

Gemini 1.5 Pro is also highly performant, particularly when utilizing smaller context windows. However, because its underlying architecture is uniquely designed to accommodate massive data ingestion, engineers sometimes note a slightly higher variance in initial response times depending on the server load and the sheer volume of the prompt. Google does offer lighter variations specifically designed for sub-second response times, but sacrificing the flagship tier means a slight reduction in complex analytical capabilities.

For the ultimate live-chat user experience, streaming the response—displaying the text word-by-word on the frontend as it is generated by the backend—is mandatory. Both providers support real-time streaming protocols. Combining rapid processing capabilities with a modern, asynchronous frontend guarantees a conversational flow that feels entirely organic and instantaneous.
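Both metrics defined above can be measured with a small wrapper around any streaming response. The sketch below is provider-agnostic: it consumes an iterable of text chunks and records when the first chunk arrives and how quickly the rest follow. The `fake_stream` generator is a stand-in for a real SDK stream, and chunks only approximate tokens, so treat the throughput figure as indicative.

```python
import time
from typing import Iterable, Iterator


def measure_stream(chunks: Iterable[str]) -> dict:
    """Consume a stream of text chunks and report basic latency metrics.

    Returns the time to first chunk in seconds, the chunk count, the chunks
    per second measured after the first chunk arrived, and the joined text.
    """
    start = time.perf_counter()
    first_chunk_at = None
    parts = []
    for chunk in chunks:
        if first_chunk_at is None:
            first_chunk_at = time.perf_counter()
        parts.append(chunk)
    end = time.perf_counter()

    if first_chunk_at is None:
        return {"ttft": None, "chunks": 0, "chunks_per_second": 0.0, "text": ""}

    generation_time = end - first_chunk_at
    later_chunks = len(parts) - 1
    rate = later_chunks / generation_time if generation_time > 0 and later_chunks > 0 else 0.0
    return {
        "ttft": first_chunk_at - start,
        "chunks": len(parts),
        "chunks_per_second": rate,
        "text": "".join(parts),
    }


def fake_stream(delay_before_first: float = 0.05, delay_between: float = 0.01) -> Iterator[str]:
    """Simulated model stream: an initial delay, then chunks at a steady pace."""
    time.sleep(delay_before_first)
    for word in ["Your ", "order ", "ships ", "today."]:
        yield word
        time.sleep(delay_between)


metrics = measure_stream(fake_stream())
print(f"Time to first chunk: {metrics['ttft']:.3f}s")
print(f"Chunks per second:   {metrics['chunks_per_second']:.1f}")
```

In a real integration, the same function could be fed by the provider's streaming iterator, for example the text stream exposed by the Anthropic Python SDK, which lets you track these numbers under production load rather than relying on published benchmarks.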

Strategic Verdict: Architecting for the Future

The decision between Claude 3.5 Sonnet and Gemini 1.5 Pro cannot be made in a vacuum; it must be driven by the specific architectural needs, security postures, and global demographic realities of your customer support operations.

Opt for Claude 3.5 Sonnet if:

- You are building a real-time, live-chat interface where ultra-low latency, conversational empathy, and a natural tone are paramount to the brand experience.

- Your software architecture relies heavily on database retrieval pipelines to fetch specific, isolated knowledge base articles rather than ingesting entire manuals simultaneously.

- Your automated agent needs to execute complex, multi-step backend actions—such as routing through billing APIs and updating CRMs—with extreme precision and minimal formatting errors.

- You have a vast library of static instructions and personas that can heavily leverage memory caching to drastically reduce operational costs.

Opt for Gemini 1.5 Pro if:

- You require the system to ingest and analyze massive, unstructured documents, entire user manuals, or weeks of historical chat logs in a single prompt without the overhead of building a complex database retrieval infrastructure.

- Your support tickets frequently involve multimodal inputs, requiring the application to seamlessly understand user-uploaded images of defective products, diagnostic screenshots, or audio files.

- Your user base is highly international, requiring native, fluid understanding of a vast array of global languages and regional dialects.

- You are heavily integrated into the Google Cloud infrastructure ecosystem and want native access to integrated security controls and enterprise compliance tools.

- You want to minimize baseline processing costs for large-scale operations that do not rely on static cached data.

Transform Your Customer Experience with Custom Solutions

The raw processing power of these advanced foundational systems is undeniable, but an intelligent algorithm is merely a highly capable engine; it requires a meticulously engineered vehicle to drive actual business value. Integrating these systems securely into your existing Customer Relationship Management platform, orchestrating robust data retrieval pipelines to access your proprietary information, and building intuitive, branded user interfaces requires specialized software development expertise.

If your enterprise is ready to transition from legacy support systems to intelligent, autonomous resolution platforms, navigating the complexities of data privacy, latency optimization, and backend tool integration is critical. Tool1.app can consult, design, and build the perfect automated solution for your company. As a premier software development agency specializing in Python automations, advanced system integrations, and custom applications, we have the technical depth to transform your customer support from an operational cost center into a strategic, highly efficient asset. Contact Tool1.app today to discuss your next custom software development project and bring the future of business efficiency to your organization.
