Connecting the ChatGPT API to Your Internal CRM: A Developer’s Guide

Table of Contents

- The Strategic Value of AI in Customer Relationship Management

- Architecting a Secure Integration Layer

- Data Preparation, PII Scrubbing, and Privacy Compliance

- Mastering the Context Window with Tiktoken

- Prompt Engineering for Deterministic Data Extraction

- Implementing Resilient API Calls and Handling Rate Limits

- Event-Driven Webhooks for Real-Time Synchronization

- Forecasting the Economics of API Usage in 2026

- The Future of the Intelligent Workspace

- Elevate Your Business Operations Today


The era of manual data entry, disconnected customer interactions, and reactive sales strategies is rapidly coming to a close. As artificial intelligence transitions from a technological novelty to a core operational necessity, businesses are looking far beyond off-the-shelf, generic chatbots. Today, forward-thinking enterprises demand deep, structural integrations. They want the sophisticated reasoning engine of large language models embedded directly into their existing operational hubs. When organizations integrate the ChatGPT API into their CRM workflows, they turn a static, passive database into an active, intelligent assistant capable of summarizing complex meeting notes, drafting context-aware follow-ups, and triaging support tickets in real time.

However, building an enterprise-grade integration requires substantially more effort than simply sending a text prompt to an external endpoint. Developers and technical architects must navigate a highly complex landscape of strict data privacy laws, token optimization strategies, rigid rate limits, and asynchronous event-driven architectures. A poorly designed or hastily implemented API connection can easily lead to leaked credentials, exorbitant billing overages, and severe compliance violations.

At Tool1.app, we specialize in custom software development and AI solutions designed specifically for business efficiency. We frequently audit, rescue, and rebuild integrations that fail to scale under production loads. This exhaustive guide provides a comprehensive blueprint for developers and technical leaders to securely, efficiently, and elegantly connect OpenAI’s API to any internal Customer Relationship Management platform, utilizing best practices optimized for the modern technology landscape.

The Strategic Value of AI in Customer Relationship Management

Before diving into the technical architecture, it is essential to understand the business imperatives driving this integration. A CRM is the lifeblood of an organization’s revenue operations, storing every interaction, transaction, and behavioral data point related to a customer. Historically, extracting actionable insights from this massive repository required human analysts to spend hours cross-referencing notes, emails, and call logs.

By integrating conversational AI and large language models, businesses can automate the extraction of these insights, drastically reducing administrative overhead. In the realm of sales process automation, intelligent agents can streamline routine tasks along the pipeline. For example, when a discovery call concludes, the AI can analyze the transcript, identify the core pain points discussed, and draft a highly personalized follow-up email that references specific client concerns. This capability allows account executives to focus on relationship-building and complex negotiations rather than administrative data entry.

In customer support environments, the technology enables predictive triaging and rapid resolution. Support tickets often contain long threads of frustrated communication. An integrated model can digest a fifty-message thread in seconds, summarizing the historical context for the human agent, identifying the customer’s overall sentiment, and even suggesting technical solutions based on the company’s internal knowledge base. Furthermore, marketing departments leverage these integrations to craft highly targeted, personalized campaigns by having the AI analyze historical purchasing behaviors and demographic data stored within the CRM. The return on investment for these capabilities is profound, often resulting in double-digit percentage increases in team productivity and customer satisfaction scores.

Architecting a Secure Integration Layer

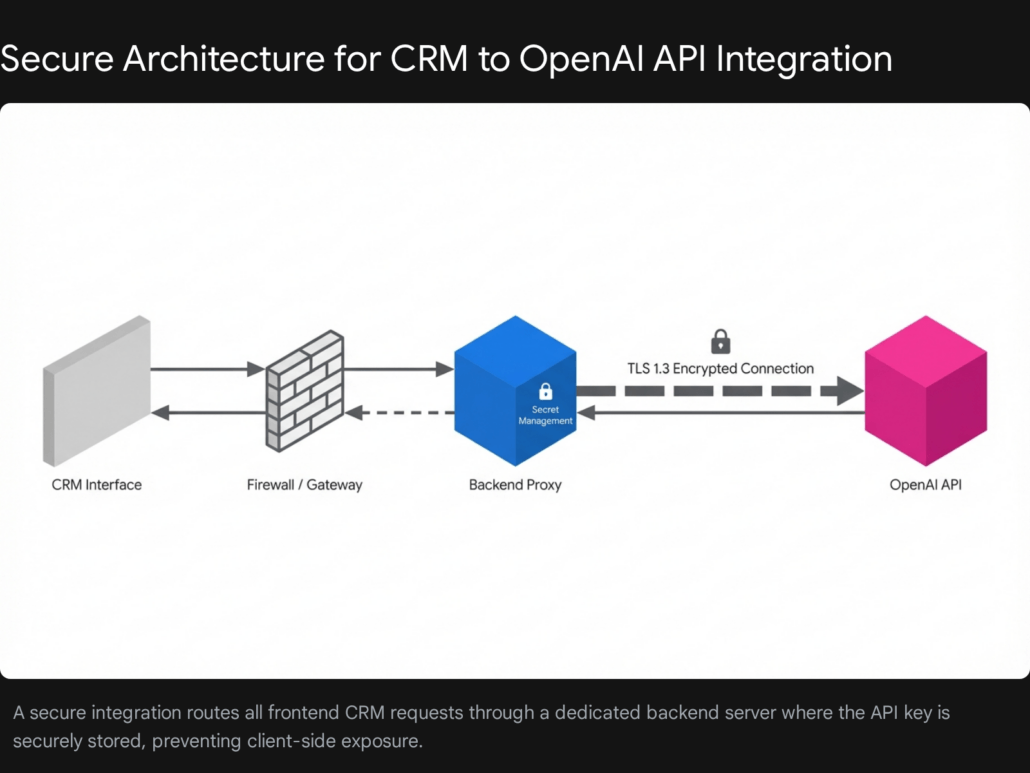

The most fundamental rule of integrating any third-party artificial intelligence service into proprietary business software is that the client-side application must never communicate directly with the language model provider.

Embedding an API key within a frontend application—whether it is a React-based web dashboard, a mobile application, or a client-side CRM extension—exposes that highly privileged credential to anyone who inspects the network traffic or decompiles the source code. Malicious actors routinely deploy automated bots to scrape public repositories, decompiled mobile application packages, and exposed frontend bundles to harvest these keys. Once compromised, an exposed key can be used to hijack computational resources, which can lead to thousands of euros in unexpected charges in a matter of hours, alongside the potential compromise of sensitive account data.

The Backend Proxy Pattern

To secure your infrastructure and protect your organization’s resources, you must implement a backend proxy server. In this architecture, the frontend CRM interface sends a localized request to your internal server—typically built with robust backend technologies such as Node.js, Python, or Go. This internal server then authenticates the user, processes and sanitizes the payload, and makes the secure, server-to-server call to the OpenAI API on the user’s behalf.

This architectural pattern provides several mandatory security and operational benefits that simply cannot be achieved through direct client-side requests.

First, it enables centralized, secure secret management. API keys can be injected into the backend environment at runtime using enterprise-grade secrets management tools like AWS Secrets Manager, HashiCorp Vault, or encrypted environment variables. This ensures that the credentials exist only in volatile memory and are never committed to version control systems or exposed to end-users.

Second, a backend proxy allows developers to implement robust Role-Based Access Control (RBAC). Before a request ever reaches the external AI provider, your server verifies the user’s active session token, typically via a JSON Web Token (JWT) or a secure session cookie. This ensures that a sales representative can only request summaries for deals and contacts they explicitly have permission to view within the CRM, enforcing your organization’s existing data governance policies at the integration layer.

Third, this intermediary layer empowers developers to implement safety identifiers. By taking a unique user attribute—such as hashing the user’s email address or their internal CRM database ID—and passing it to the external API via the dedicated safety identifier parameter, you can track potential abuse and monitor usage on a highly granular level. Because the identifier is cryptographically hashed, you achieve this observability without exposing any personally identifiable information to the third-party language model provider.
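As a rough illustration of the hashing step, the sketch below derives a stable identifier from an internal CRM user ID. The function name, the example ID, and the salt value are hypothetical. A keyed hash (HMAC) is used here instead of a plain hash because email addresses and sequential IDs are easy to guess, and an unkeyed digest of a guessable value can be reversed with a dictionary attack.

```python
import hashlib
import hmac


def build_safety_identifier(crm_user_id: str, secret_salt: bytes) -> str:
    """Return a stable, non-reversible identifier for a CRM user.

    The salt is an assumption of this sketch: it should live in your
    secrets manager next to the API key and never leave the backend.
    """
    digest = hmac.new(secret_salt, crm_user_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


# Hypothetical values for illustration only
identifier = build_safety_identifier("crm-user-1042", b"load-this-from-your-secrets-manager")
```

The resulting 64-character hex string is what the proxy would attach to the outgoing request. The same user always maps to the same identifier, so abuse can be traced internally without the provider ever seeing the underlying ID.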

Data Preparation, PII Scrubbing, and Privacy Compliance

Feeding raw, unfiltered CRM data directly into an external commercial API presents a severe security risk and a potential legal liability. Customer Relationship Management platforms are, by definition, repositories of vast amounts of Personally Identifiable Information (PII). This includes full names, direct email addresses, physical addresses, sensitive financial histories, and unfiltered communication logs.

Under stringent privacy frameworks such as the General Data Protection Regulation (GDPR) in Europe, the California Consumer Privacy Act (CCPA), and various industry-specific regulations, businesses are legally obligated to practice data minimization. The principle of data minimization stipulates that an organization should only process and transmit the exact, minimum amount of data strictly necessary for the application to perform its designated task.

Implementing Proactive PII Scrubbing

Before any customer data leaves your secure internal network, it must be rigorously sanitized. If your functional goal is to have the artificial intelligence summarize the emotional sentiment of a support ticket, the language model absolutely does not need to know the customer’s social security number, their credit card details, or even their real name.

In Python-based development ecosystems, engineers can utilize highly effective open-source packages to automate this sanitization. Libraries designed for PII removal utilize a combination of regular expressions and advanced Machine Learning algorithms to detect sensitive strings. When sensitive data is detected, these tools automatically replace the specific information with generic, non-identifiable placeholder tokens. For example, a string reading “Contact John Doe at john.doe@example.com” would be programmatically transformed into something like “Contact [NAME] at [EMAIL]”.
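To make the placeholder idea concrete, here is a minimal regex-only sketch. The two patterns and the `[EMAIL]` / `[PHONE]` placeholder names are assumptions chosen for illustration. They catch common email and phone formats only, and they cannot detect personal names, which is exactly where the ML-based libraries mentioned above earn their keep.

```python
import re

# Deliberately simple patterns; production scrubbing needs broader coverage
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_PATTERN = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def scrub_basic_pii(text: str) -> str:
    """Replace email addresses and phone numbers with generic placeholders."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    text = PHONE_PATTERN.sub("[PHONE]", text)
    return text


print(scrub_basic_pii("Contact John Doe at john.doe@example.com or +1 (555) 010-2030."))
# Contact John Doe at [EMAIL] or [PHONE].
```

Note that the name survives untouched in this sketch. Treat regular expressions as a first line of defense and layer a dedicated PII detection library on top for names, addresses, and free-form identifiers.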

Furthermore, compliance is not just about what data you send, but how long the receiving party retains it. For enterprise compliance, administrators must navigate the data retention policies of the API provider. While some providers may retain API request payloads for up to thirty days to monitor for trust and safety violations, enterprises handling highly sensitive data can often request or configure a zero-retention agreement. Enabling a zero-retention mode ensures that your prompts, the context data, and the generated outputs are never stored on external servers in persistent storage, nor are they ever utilized to train future iterations of foundational models.

Finally, compliance requires securing data in transit and at rest. You must ensure that your backend server communicates with the external API exclusively over encrypted channels utilizing TLS 1.3 protocols, and that any summarized insights saved back into your CRM are encrypted at rest using AES-256 encryption standards.

Mastering the Context Window with Tiktoken

A fundamental concept that developers must grasp when working with large language models is that these systems do not process text in the same way humans do. They do not read characters or distinct words; instead, they process text as discrete units called “tokens.” A token can represent a single character, a common chunk of a word, or an entire short word.

The algorithm responsible for breaking text down into these tokens is known as Byte Pair Encoding (BPE). BPE operates by incrementally merging the most frequent byte sequences in a training dataset to create subword tokens. This approach elegantly balances the overall vocabulary size with the model’s expressiveness, allowing it to handle rare words by breaking them down into known sub-components, while processing common words as single, efficient tokens. On average, in the English language, one token corresponds to roughly four characters of text.
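The merge step is easier to picture with a toy example. The sketch below is a simplified, character-level illustration of the idea, not the tokenizer any production model uses: real implementations work on bytes, train on enormous corpora, and learn tens of thousands of merges. The three-word corpus is made up for the demonstration.

```python
from collections import Counter


def most_frequent_pair(sequences):
    """Return the adjacent symbol pair that occurs most often."""
    counts = Counter()
    for seq in sequences:
        counts.update(zip(seq, seq[1:]))
    return counts.most_common(1)[0][0]


def merge_pair(seq, pair):
    """Replace every occurrence of `pair` in `seq` with one merged symbol."""
    merged, i = [], 0
    while i < len(seq):
        if i + 1 < len(seq) and (seq[i], seq[i + 1]) == pair:
            merged.append(seq[i] + seq[i + 1])
            i += 2
        else:
            merged.append(seq[i])
            i += 1
    return merged


# Start from single characters and apply two merge rounds
corpus = [list("lower"), list("lowest"), list("low")]
for _ in range(2):
    pair = most_frequent_pair(corpus)
    corpus = [merge_pair(seq, pair) for seq in corpus]

print(corpus)
# [['low', 'e', 'r'], ['low', 'e', 's', 't'], ['low']]
```

After two rounds the frequent fragment "low" has become a single token, while the rarer suffixes are still spelled out symbol by symbol. That is the trade-off the paragraph above describes.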

Every API request has a strict context window limit—a maximum number of tokens it can ingest and generate in a single call—and a financial cost directly associated with the volume of tokens processed. If a customer success manager attempts to summarize an email thread that spans three years of continuous back-and-forth communication, the raw text may easily exceed the model’s maximum context limit. If this occurs, the API will reject the request, resulting in a crashed process, a failed background job, and a highly frustrated end-user.

Programmatic Token Counting

To prevent context window overflow and to accurately forecast API consumption costs, you must programmatically count the tokens on your backend before dispatching the payload over the network. OpenAI provides tiktoken, a highly optimized, open-source Python library designed specifically for this purpose, which implements the same byte-pair encodings used by their production models.

Different generations of models use distinct encodings. For instance, GPT-4 and GPT-3.5 Turbo use the cl100k_base encoding, while the GPT-4o family uses the newer o200k_base encoding, which has a larger vocabulary and handles multilingual text more efficiently. Always confirm which encoding your installed tiktoken version maps to the model you are targeting.

Here is a robust Python implementation demonstrating how to orchestrate basic data scrubbing and safely truncate a long CRM interaction history to guarantee it fits within a predefined token limit:

```python
import logging
import re

import tiktoken

# Simple email pattern; real PII detection needs more than one regular expression
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def scrub_and_truncate_crm_data(raw_text: str, max_tokens: int = 4000, model_name: str = "gpt-5-mini") -> str:
    """
    Sanitizes raw CRM text by removing basic PII patterns (e.g., email addresses),
    and truncates the resulting text to fit within the token limit
    required by the specified language model.
    """
    # Step 1: Execute basic data scrubbing via regular expressions.
    # This replaces standard email patterns with a safe placeholder token.
    scrubbed_text = EMAIL_PATTERN.sub("[EMAIL]", raw_text)

    # Step 2: Initialize the correct Byte Pair Encoding for the target model
    try:
        # Attempt to load the specific encoding mapped to the requested model
        encoding = tiktoken.encoding_for_model(model_name)
    except KeyError:
        # Older tiktoken releases may not recognize newer model names.
        # o200k_base is assumed here as the fallback for current-generation models.
        encoding = tiktoken.get_encoding("o200k_base")

    # Step 3: Encode the sanitized text string into a list of integer tokens
    tokens = encoding.encode(scrubbed_text)

    # Step 4: Evaluate token count and truncate if the threshold is exceeded
    if len(tokens) > max_tokens:
        # Log the truncation event for internal system monitoring
        logging.warning("Truncating payload from %d tokens down to %d tokens.", len(tokens), max_tokens)
        # Slice the token array to the maximum allowable length
        tokens = tokens[:max_tokens]

    # Step 5: Decode the integer token array back into a human-readable string
    return encoding.decode(tokens)


# Example implementation within a backend pipeline
crm_historical_notes = "Customer john.smith@enterprise-corp.com called regarding the Q3 invoice discrepancies..."
safe_payload = scrub_and_truncate_crm_data(crm_historical_notes)
```

By predicting the exact token count locally, developers can forecast costs programmatically and gracefully handle oversized requests. Instead of allowing the application to crash, the backend can truncate the least relevant historical data, or gracefully return an error to the frontend, prompting the user to select a narrower date range for their summary request.
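One way to "truncate the least relevant historical data" is to treat the oldest messages as the least relevant and keep only the newest ones that fit the budget. The sketch below works under that assumption, which will not hold for every CRM. The function names are hypothetical, and the default counter uses the rough four-characters-per-token rule of thumb so the example runs without a tokenizer. In practice you would pass in a tokenizer-backed counter.

```python
from typing import Callable, List


def approximate_token_count(text: str) -> int:
    """Rough estimate based on the ~4 characters per token rule of thumb."""
    return max(1, len(text) // 4)


def keep_most_recent(
    messages: List[str],
    max_tokens: int,
    count_tokens: Callable[[str], int] = approximate_token_count,
) -> List[str]:
    """Keep the newest messages that fit the budget, preserving their order.

    Assumes `messages` is ordered from oldest to newest.
    """
    kept: List[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept
```

If the function returns an empty list, even the newest message is too large on its own. That is the case where returning an error and asking the user for a narrower date range makes more sense than silently cutting text.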

Prompt Engineering for Deterministic Data Extraction

Perhaps the most profound architectural shift in AI integration over the past two years is the migration away from unstructured text generation toward deterministic, structured data extraction.

When a typical consumer interacts with an artificial intelligence through a web interface, they expect fluid, conversational paragraphs. However, when an AI is integrated into the backend architecture of a CRM system, natural language output is generally useless. The output is intended to update specific relational database fields, trigger conditional automated workflows, or populate precise analytical dashboard widgets. To achieve this, you need structured data, almost universally formatted as JavaScript Object Notation (JSON).

JSON prompting creates a rigid, programmatic contract between your application code and the non-deterministic language model. By defining a precise, exhaustive schema within your system prompt, you drastically reduce ambiguity, minimize the risk of the model hallucinating conversational filler, and ensure that the response can be safely and consistently parsed by your backend logic.

The Customer Summarization Prompt Template

Imagine a common enterprise scenario where a highly paid customer success manager finishes a complex, forty-five-minute discovery call with a prospective client. Instead of requiring that manager to spend twenty minutes manually typing updates into the CRM database, an integrated workflow handles the task. The Voice over IP (VoIP) system generates a transcript and fires a payload to your backend.

To extract actionable, perfectly formatted data from this unstructured transcript, your prompt must instruct the AI to adopt a specific persona, execute analytical reasoning, and return a rigidly formatted JSON object.

```python
system_prompt_configuration = """
You are an expert, highly analytical Customer Relationship Management data extraction system.
Your primary function is to meticulously analyze the provided customer interaction transcript and extract key business insights.

CRITICAL INSTRUCTIONS:
1. You must respond ONLY with a valid, perfectly formatted JSON object.
2. Your response must strictly adhere to the exact schema provided below.
3. Under no circumstances should you include markdown formatting, conversational filler, preambles, or post-analysis explanations.
4. If a data point is not mentioned in the transcript, return a null value or an empty array as appropriate; do not invent information.

EXPECTED JSON SCHEMA:
{
  "executive_summary": "A concise, professional 2-sentence summary of the core interaction.",
  "overall_sentiment": "Must be exactly one of: 'Positive', 'Negative', or 'Neutral'.",
  "churn_risk_score": "A floating-point number between 0.0 (no risk) and 1.0 (imminent churn).",
  "action_items_for_rep": [
    "An array of specific, actionable follow-up tasks."
  ],
  "mentioned_competitors": [
    "An array of any competitor companies mentioned. Empty if none."
  ],
  "upsell_opportunity_identified": true/false
}
"""
```

When you combine this robust system prompt with the user’s raw transcript payload, the API is highly constrained to return a stringified JSON payload. Your backend application can immediately parse this string using standard libraries (such as json.loads() in Python or JSON.parse() in JavaScript) and map the resulting object properties directly to your CRM’s database columns. This seamless data translation is the cornerstone of truly effective automation.
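Parsing alone does not prove the model honored the schema, so it is worth validating the parsed object before it touches the database. The sketch below checks a few of the fields from the prompt above. The function name and the exact rules (for example, allowing a null churn score when the transcript gives no signal) are assumptions you should adapt to your own schema.

```python
import json

ALLOWED_SENTIMENTS = {"Positive", "Negative", "Neutral"}


def parse_crm_insights(raw_content: str) -> dict:
    """Parse the model's JSON string and reject anything off-schema."""
    data = json.loads(raw_content)  # raises json.JSONDecodeError (a ValueError) on bad JSON
    if not isinstance(data, dict):
        raise ValueError("Expected a JSON object at the top level")

    if data.get("overall_sentiment") not in ALLOWED_SENTIMENTS:
        raise ValueError("overall_sentiment is missing or not an allowed value")

    score = data.get("churn_risk_score")
    if score is not None:
        # bool is a subclass of int in Python, so exclude it explicitly
        is_number = isinstance(score, (int, float)) and not isinstance(score, bool)
        if not is_number or not 0.0 <= score <= 1.0:
            raise ValueError("churn_risk_score must be a number between 0.0 and 1.0")

    for field in ("action_items_for_rep", "mentioned_competitors"):
        if not isinstance(data.get(field), list):
            raise ValueError(f"{field} must be an array")

    return data
```

Because every failure surfaces as a `ValueError`, the calling code can catch one exception type and decide whether to retry the request or route the record to a human for review.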

Implementing Resilient API Calls and Handling Rate Limits

In any high-volume enterprise environment, external network reliability is never guaranteed. Language model providers enforce strict rate limits based on your organization’s billing tier and historical usage. These limits dictate both the maximum Requests Per Minute (RPM) and the maximum Tokens Per Minute (TPM) your application is permitted to consume.

For example, a standard mid-tier organizational account might be limited to 500 requests per minute and 1,000,000 tokens per minute. If a developer accidentally triggers a massive batch process to summarize 5,000 legacy CRM records simultaneously, the infrastructure will immediately hit the ceiling. The API provider will reject the overwhelming traffic with an HTTP 429 “Too Many Requests” status code. If your backend application lacks built-in resilience, the entire batch process will crash, leading to severe data loss and manual engineering intervention.

Exponential Backoff and Jitter

The absolute industry standard for handling rate limit rejections and transient network anomalies is an algorithmic strategy known as “exponential backoff with jitter.”

When an outgoing HTTP request fails due to a rate limit or a temporary server-side error (such as an HTTP 500 or 503 error), the application does not simply give up, nor does it immediately retry. Instead, it pauses the execution thread for a short baseline period before attempting the call again. If it fails a second time, the wait time is doubled. If it fails a third time, it is doubled again.

Crucially, developers must add “jitter” to this mathematical formula. Jitter introduces a randomized variation to the calculated wait time. Without jitter, if a widespread network event causes one hundred concurrent requests to fail simultaneously, the exponential backoff would cause all one hundred requests to retry at the exact same millisecond in the future, instantly triggering another catastrophic rate limit event—a phenomenon known in distributed systems as the “thundering herd” problem. Jitter disperses these retries over a wider temporal window, smoothing out the traffic spike.
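To see the arithmetic, the following sketch computes the wait times for the "full jitter" variant, in which each delay is drawn uniformly between zero and the exponentially growing ceiling. The function name, the one-second base, and the sixty-second cap are illustrative assumptions rather than values prescribed by any provider.

```python
import random
from typing import Callable, List


def backoff_delays(
    base_seconds: float = 1.0,
    cap_seconds: float = 60.0,
    attempts: int = 5,
    rng: Callable[[], float] = random.random,
) -> List[float]:
    """Return one randomized wait time per retry attempt (full jitter)."""
    delays = []
    for attempt in range(attempts):
        # The ceiling doubles on every attempt until it reaches the cap
        ceiling = min(cap_seconds, base_seconds * (2 ** attempt))
        # Full jitter: pick a random point between 0 and the ceiling
        delays.append(rng() * ceiling)
    return delays


# With the randomness pinned to its maximum, the doubling is visible
print(backoff_delays(rng=lambda: 1.0))
# [1.0, 2.0, 4.0, 8.0, 16.0]
```

With the default random source, one hundred clients calling this function get one hundred different schedules, which is what spreads the retries out instead of letting them collide.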

Our engineers at Tool1.app strongly recommend leveraging specialized, battle-tested libraries rather than attempting to write custom retry loop logic from scratch. In the Python ecosystem, the backoff library provides exceptionally powerful and elegant function decorators for this exact purpose.

```python
import json
import os

import backoff
import openai

# Read the API key from the environment rather than hard-coding it
client = openai.OpenAI(api_key=os.environ["OPENAI_API_KEY"])


# Decorator to apply exponential backoff on specific known exceptions
@backoff.on_exception(
    backoff.expo,  # Exponential wait generator; backoff applies full jitter by default
    (openai.RateLimitError, openai.APIConnectionError, openai.InternalServerError),
    max_time=120,  # Stop retrying once this many seconds have elapsed
    max_tries=6,  # Maximum total attempts, including the first call
)
def extract_crm_insights_with_resilience(transcript: str, system_instruction: str) -> dict:
    """
    Transmits the transcript payload to the external API with robust error handling,
    automatic retries, and jitter implementation.
    """
    response = client.chat.completions.create(
        model="gpt-5-mini",
        messages=[
            {"role": "system", "content": system_instruction},
            {"role": "user", "content": transcript},
        ],
        # Zero temperature reduces output variability. Some model families accept
        # only the default value, so drop this argument if the API rejects it.
        temperature=0.0,
        response_format={"type": "json_object"},  # Request JSON mode
    )

    # Extract the stringified JSON payload from the first choice in the response
    raw_content = response.choices[0].message.content

    # Parse the string into a native Python dictionary
    return json.loads(raw_content)


try:
    insights = extract_crm_insights_with_resilience(
        "Customer expressed severe frustration over alternative pricing...",
        system_prompt_configuration,
    )
    print(f"Calculated Churn Risk: {insights['churn_risk_score']}")
except Exception as e:
    # Reached when retries are exhausted or a non-retryable error occurs
    print(f"Critical System Failure: Unable to process transcript. Error: {e}")
```

For development teams building infrastructure in Node.js, the equivalent setup uses packages such as axios-retry. It intercepts failed responses before they surface as application errors and schedules the HTTP call to run again after a delay, without blocking the event loop. Depending on the version and configuration, it can also honor the wait time given in the Retry-After header that an overloaded API server sometimes returns.

Event-Driven Webhooks for Real-Time Synchronization

While traditional batch processing architectures are highly useful for analyzing vast amounts of historical data, modern sales, marketing, and support teams demand real-time, instantaneous intelligence. This requirement is where event-driven webhook architecture becomes essential, effectively bridging the gap between disparate communication platforms, the language model reasoning engine, and your central CRM database.

Consider a modern enterprise technology stack utilizing a cloud-based communication provider and a highly customized CRM dashboard. When a critical sales call concludes, the communication provider immediately fires an outbound webhook payload containing the raw audio URL or the fully transcribed text directly to your internal server’s listening endpoint.

Because LLM processing is computationally intensive—often taking several seconds, or even up to a minute for massive context windows—you cannot process this incoming webhook synchronously. If your receiving server keeps the HTTP connection open while it waits for the AI API to return a summary, the originating communication service will likely assume a network timeout has occurred. Believing the delivery failed, it will repeatedly retry sending the webhook, causing a massive cascade of redundant API calls that will swiftly consume your rate limits and inflate your operational costs.

Asynchronous Message Queue Architecture

To solve this architectural bottleneck, the integration must be decoupled and fundamentally asynchronous. When your backend endpoint receives the incoming webhook payload, it must immediately respond with an HTTP 202 “Accepted” status code, instantly closing the connection and acknowledging receipt without waiting for the data to be processed.

Simultaneously, the backend places the raw payload into an asynchronous message queue. Industry standards for this include technologies like RabbitMQ, AWS Simple Queue Service (SQS), or Redis-backed Celery workers.

Once the data is safely queued, independent background worker processes pick up the jobs sequentially. These workers execute the PII scrubbing algorithms, calculate the token thresholds, execute the external API call wrapped in the exponential backoff logic, and finally utilize the CRM’s internal API to update the specific customer contact record with the generated insights.

Furthermore, robust webhook systems must implement idempotency keys. Because network glitches can occasionally cause the originating system to fire the same webhook twice despite receiving a 202 Accepted response, your system must track the unique ID of every processed event. If a worker detects a duplicate ID, it simply discards the job, ensuring that the CRM is not updated twice with identical AI summaries.
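The acknowledge-then-queue flow and the duplicate check can be sketched with nothing but the standard library. Everything named here is hypothetical: the `event_id` and `transcript` fields, the function names, and the in-memory queue and set, which stand in for a durable broker and a shared store in a real deployment.

```python
import queue
from typing import Callable, Optional, Set

# In-memory stand-in for RabbitMQ, SQS, or a Celery broker
job_queue: "queue.Queue[dict]" = queue.Queue()


def receive_webhook(event: dict) -> int:
    """Enqueue the payload and return the HTTP status to send right away."""
    job_queue.put(event)
    return 202  # Accepted: processing happens later in a worker


def process_next_job(processed_ids: Set[str], summarize: Callable[[str], str]) -> Optional[str]:
    """Worker step: skip duplicates, otherwise summarize and record the ID."""
    event = job_queue.get()
    try:
        if event["event_id"] in processed_ids:
            return None  # duplicate delivery, discard
        summary = summarize(event["transcript"])
        processed_ids.add(event["event_id"])  # mark done only after success
        return summary
    finally:
        job_queue.task_done()
```

Recording the ID only after the summary succeeds means a job that fails partway can be retried safely. The `summarize` callable is where the scrubbing, token counting, and API call would plug in.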

When enterprise clients approach Tool1.app to design custom websites and complex Python automations, this highly resilient, asynchronous queue system is precisely the architecture we deploy to guarantee high availability, rapid response times, and absolute zero data loss during massive high-traffic events.

Forecasting the Economics of API Usage in 2026

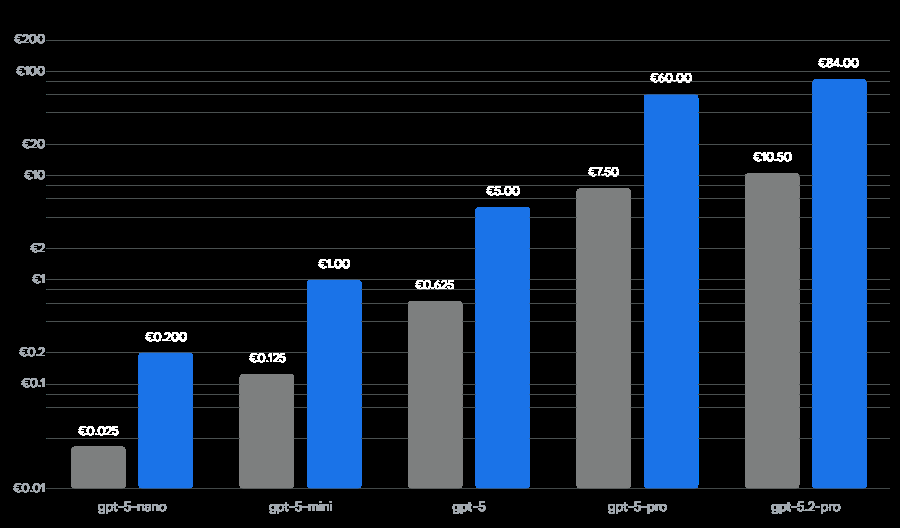

A persistent hesitation among technical leaders and chief financial officers regarding widespread LLM integration is the pervasive fear of unpredictable, skyrocketing operating expenses. However, the economics of API usage have matured and improved dramatically. As of early 2026, the introduction of highly optimized “mini” and “nano” tier models, alongside advanced architectural features like prompt caching and batch discounting, has driven the cost of inference down to near-zero for the vast majority of routine business tasks.

When calculating the potential Return on Investment (ROI) for an integration, architects must understand that API pricing is strictly bifurcated. You are charged separately for input tokens (the instructions, context, and historical data you send to the model) and output tokens (the novel text or JSON data the model generates in response). Output tokens are historically much more expensive due to the massive computational power required for autoregressive generation.

The following data outlines the estimated costs—converted to the Euro (€) for European market planning—per one million tokens for the current generation of enterprise-ready models:

| Language Model | Input Cost per 1M Tokens | Output Cost per 1M Tokens | Optimal Business Use Case |
| --- | --- | --- | --- |
| GPT-5-nano | €0.02 | €0.18 | Extreme high-volume micro-tasks, basic sentiment tagging, language detection. |
| GPT-5-mini | €0.12 | €0.92 | Standard CRM routing, JSON data extraction, fast conversational responses. |
| GPT-4o-mini | €0.14 | €0.55 | Legacy application workflows, balanced cost-to-performance summarization. |
| GPT-5 | €0.58 | €4.60 | Complex logical reasoning, deep contract analytics, nuanced negotiation analysis. |
| o3-pro | €9.20 | €77.28 | Advanced autonomous multi-step agents, complex financial or legal reasoning. |

Note: Pricing figures are approximate conversions based on standard 2026 currency exchange rates. Implementing advanced prompt caching features can further reduce the listed input costs by 50% to 90% for highly repetitive queries.

2026 OpenAI Model Cost Comparison per One Million Tokens

Cost efficiency varies drastically across models. For high-volume CRM tasks like data summarization, lightweight models like GPT-5-mini offer significant savings compared to flagship variants.

For the vast majority of CRM data summarization and ticket routing tasks, the mini class models are accurate enough that a larger model adds little. To contextualize this expense: if your enterprise CRM processes 10,000 unique customer interactions every single day, and each interaction utilizes roughly 1,500 input tokens of context and generates 200 output tokens of JSON insights, your daily consumption equates to 15 million input tokens and 2 million output tokens.

Utilizing the GPT-5-mini architecture, this massive scale of enterprise automation would cost your organization less than €4.00 per day. When weighed against the thousands of human hours saved from manual data entry and reading historical logs, the economic argument for integration is undeniable.
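The arithmetic behind that figure is simple enough to keep in a small helper, which also makes it easy to rerun with your own volumes. The function name is hypothetical, and the prices are the approximate GPT-5-mini figures quoted in this article, not live rates.

```python
def daily_cost_eur(
    interactions: int,
    input_tokens_each: int,
    output_tokens_each: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimate one day's spend from volume and per-million-token prices."""
    input_cost = interactions * input_tokens_each / 1_000_000 * input_price_per_million
    output_cost = interactions * output_tokens_each / 1_000_000 * output_price_per_million
    return input_cost + output_cost


# 10,000 interactions, 1,500 input and 200 output tokens each, GPT-5-mini prices
cost = daily_cost_eur(10_000, 1_500, 200, 0.12, 0.92)
print(f"€{cost:.2f} per day")
# €3.64 per day
```

The input side contributes €1.80 (15 million tokens at €0.12) and the output side €1.84 (2 million tokens at €0.92). Even with far fewer output tokens, generation accounts for about half the bill.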

Ensuring Long-Term Stability and Observability

To guarantee that your AI integration remains stable and economically viable as your business scales, you must treat your AI infrastructure like any other mission-critical microservice.

Implement rigorous observability and monitoring. Do not merely log application errors; aggressively log endpoint latency and token usage attributed to specific users or departments. If a specific sales team is suddenly triggering massive API requests due to a poorly optimized bulk process, your monitoring dashboards should flag the anomaly immediately. By tracking these metrics over time, you may discover optimization opportunities. For example, migrating certain non-urgent, highly repetitive nightly workflows to the asynchronous Batch API—which typically offers a 50% discount on token costs in exchange for a 24-hour processing window—can drastically cut your monthly infrastructure bill.

Furthermore, it is a strategic imperative to decouple your core business logic from the specific language model provider. While this comprehensive guide focuses on OpenAI’s highly capable ecosystem, the artificial intelligence landscape is evolving at a breakneck pace. By wrapping your API calls in internal, abstract service classes and interfaces, you maintain the agility to swap out underlying models or migrate entirely to competing providers in the future, without having to undergo a massive, costly rewrite of your entire CRM codebase.
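A minimal sketch of that decoupling might look like the following. All class and method names are hypothetical, and the stub provider exists only so the example runs without network access. A real provider class would wrap the actual API call behind the same method.

```python
from typing import Protocol


class InsightProvider(Protocol):
    """Anything that can turn a transcript into a dictionary of insights."""

    def extract_insights(self, transcript: str) -> dict:
        ...


class KeywordStubProvider:
    """Stand-in provider for tests; a real one would call an LLM API."""

    def extract_insights(self, transcript: str) -> dict:
        negative = "frustrat" in transcript.lower()
        return {"overall_sentiment": "Negative" if negative else "Neutral"}


class CrmInsightService:
    """Business logic depends on the interface, not on a specific vendor."""

    def __init__(self, provider: InsightProvider) -> None:
        self._provider = provider

    def insights_for(self, transcript: str) -> dict:
        return self._provider.extract_insights(transcript)


service = CrmInsightService(KeywordStubProvider())
result = service.insights_for("Customer expressed severe frustration over pricing.")
```

Swapping vendors then means writing one new class that satisfies `InsightProvider` and changing the line that constructs the service. The rest of the CRM code never imports a vendor SDK directly.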

The Future of the Intelligent Workspace

The deep integration of large language models into internal systems represents a profound paradigm shift in enterprise software development. We are rapidly moving away from legacy software that merely stores and retrieves passive data, and moving toward dynamic software that actively understands, analyzes, and contextualizes the information it holds.

By prioritizing a secure backend architecture, rigorously respecting data privacy and GDPR compliance, mastering the nuances of JSON schemas for deterministic outputs, and implementing robust retry logic, software developers can build CRM integrations that are not only highly innovative but incredibly resilient. The businesses that adopt these methodologies today will operate with a level of speed, personalization, and efficiency that organizations relying on manual workflows simply cannot match.

Elevate Your Business Operations Today

Want to make your CRM smarter, faster, and infinitely more capable? Tool1.app specializes in architecting custom LLM API integrations, building sophisticated Python automations, and developing end-to-end software solutions designed to eliminate operational bottlenecks and scale your business. Whether you are looking to construct a secure backend proxy infrastructure from the ground up, or you need expert guidance to optimize your current token expenditures and latency issues, our elite development team has the deep technical expertise required to deliver flawless, enterprise-grade results. Contact Tool1.app today to schedule a consultation and discover exactly how custom software development can revolutionize your internal workflows.
