The Future of Search: A Business Owner’s Guide to ALLAI, GEO, SAO, and VSO

Table of Contents

- Defining the Acronyms of the AI Era

- Navigating GEO vs SAO vs VSO Search Optimization

- The AI Paradigm Shift: How Large Language Models Retrieve Data

- Actionable Development Strategies for the AI-First Web

- Deep Dive into Search Agent Optimization (SAO)

- Voice Search Optimization in the Generative Era

- Python Automations for Tracking AI Visibility

- The Business Case: Costs, ROI, and Industry Impact

- Embracing the AI Discovery Era

- Secure Your Place in the Future of Search

Ranking number one on traditional search engine results pages used to be the holy grail of digital marketing success. If your website hit the top spot for your target keyword, you could reasonably expect a predictable, lucrative flood of organic traffic. However, in today’s digital landscape, that paradigm is no longer sufficient. The search ecosystem has evolved far beyond the traditional list of blue links. Users now find answers through voice assistants, complex AI chatbots, and generative search engines that summarize vast amounts of information instantly. This shift has driven a massive surge in zero-click searches, in which the user receives an answer directly on the search page and never actually visits your website.

As search shifts from traditional engines to AI-driven discovery tools like ChatGPT, Perplexity, Claude, and Google AI Overviews, modern marketers and business owners are searching for new optimization frameworks to ensure their custom web apps and digital platforms stay visible. This paradigm shift represents an existential threat to traditional Search Engine Optimization. If your content is not part of the AI-generated answer, your business effectively does not exist in the modern discovery funnel. The disruption threatens to upend a €73 billion SEO industry, forcing a complete architectural redesign of how information is formatted, stored, and retrieved on the internet.

At Tool1.app, we specialize in future-proofing our clients’ digital footprints through custom websites, mobile applications, and advanced AI/LLM solutions. We have witnessed firsthand how businesses that rapidly adapt to these new generative algorithms thrive, while those clinging to outdated SEO practices see their organic traffic evaporate. To ensure a holistic approach that keeps your brand visible everywhere across the digital spectrum, it is imperative to master the new acronyms of digital visibility and understand the underlying technical infrastructure required to support them.

Defining the Acronyms of the AI Era

To navigate this new terrain successfully, business owners must first familiarize themselves with the specialized optimization frameworks that dictate how artificial intelligence models retrieve, rank, and cite online information. Each of these acronyms represents a distinct approach to digital visibility, addressing different user interfaces and machine learning behaviors.

Generative Engine Optimization (GEO)

Generative Engine Optimization represents a fundamental philosophical and technical shift from a system built on backlinks and page rankings to one built on language models, digital authority, and AI citations. GEO focuses specifically on ensuring your content is cited and referenced in AI-powered search engines and generative overlays, such as Google AI Overviews, Perplexity, Gemini, and ChatGPT’s web search functionalities.

While traditional search optimization aims to drive organic clicks to landing pages, the primary goal of GEO is to earn visibility and brand mentions directly within AI-generated answers and summaries. AI models favor semantic authority: they reward factual accuracy, structural clarity, and high-quality data over keyword density. The content must be formatted in a way that large language models can easily parse, extract, and confidently present to the user as a reliable answer. Success in Generative Engine Optimization is not measured by click-through rates, but rather by citation frequency, reference rates, and the brand’s share of voice within generated conversational responses.

Search Agent Optimization (SAO)

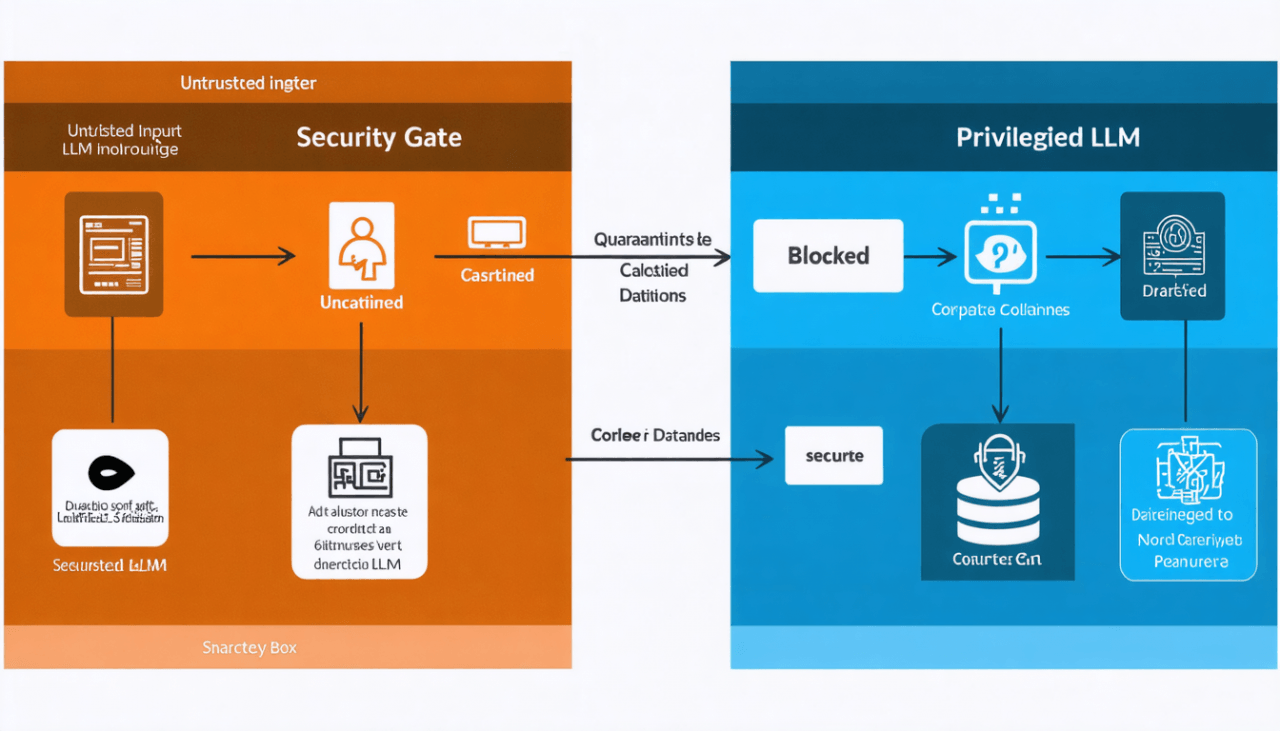

Search Agent Optimization focuses on how autonomous artificial intelligence agents access, interpret, and utilize your proprietary data. Unlike conversational chatbots that merely answer user queries, AI agents are designed to take autonomous action. They can book flights, compare software features across multiple disparate databases, audit financial reports, or execute complex multi-step workflows without human intervention.

SAO is highly technical and largely invisible to the average consumer. It extends far beyond basic web indexing to ensure that a company’s digital infrastructure features clean Application Programming Interfaces (APIs), machine-readable documentation, and proper agentic access controls. It is fundamentally about structuring your backend data and functional endpoints so that an AI agent can securely and seamlessly pull the required information, authenticate itself, and execute a function. If GEO is about getting your brand mentioned in a summary, SAO is about ensuring your software platform can be operated by a machine.

Voice Search Optimization (VSO)

Voice Search Optimization is the practice of tailoring your digital content to align with the conversational, natural-language queries used by voice assistants. While VSO has existed in the context of older technologies like Siri or Alexa, it has been revolutionized by the advanced generative voice modes found in ChatGPT and Gemini Live.

Spoken searches are fundamentally different from typed text searches. Linguistic data shows that spoken queries run considerably longer than typed ones; one commonly cited figure puts the average at around 4.2 words per query, and some estimates describe voice queries as three to five times longer than their text-based counterparts. They are almost always structured as complete, intent-rich questions, frequently beginning with Who, What, Where, When, Why, or How. Voice searches also exhibit strong local intent, with roughly twenty-two percent of all voice queries relating to local content or immediate geographic needs. VSO therefore requires businesses to provide single, definitive, authoritative answers that AI models can read aloud with confidence, phrased to match conversational questions rather than disjointed keywords.

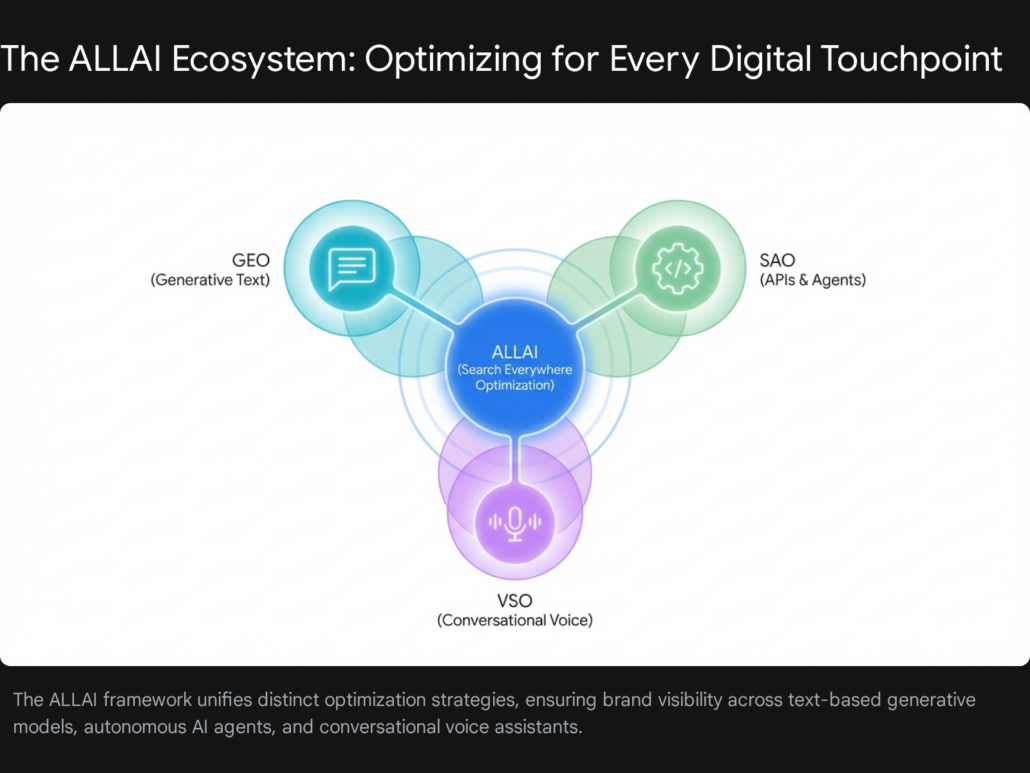

All AI Optimization (ALLAI)

All AI Optimization, or ALLAI, is the holistic, unified framework that brings all these individual strategies together. Because your target audience’s search behavior has fractured across traditional Google search bars, conversational AI chatbots, smart voice assistants, and autonomous software agents, optimizing for just one single channel leaves massive visibility gaps in your marketing strategy.

ALLAI is essentially a “Search Everywhere Optimization” strategy. It demands a unified approach to digital architecture, ensuring that whether a user types a query into a search bar, asks a smart speaker for a recommendation, or deploys an AI agent to research a market segment, your brand emerges as the verified, trusted source of truth. Implementing an ALLAI framework requires deep alignment between your marketing content, your technical web architecture, and your software development practices.

Navigating GEO vs SAO vs VSO Search Optimization

Understanding the nuances and strategic differences of GEO vs SAO vs VSO search optimization is no longer an optional intellectual exercise for business owners; it is critical to survival in the digital age. Each of these frameworks targets a different layer of the modern artificial intelligence ecosystem. Consequently, each requires different technical implementations and content formatting standards, and each measures success through different key performance indicators.

When optimizing for generative engines through GEO, your target interface is the conversational AI chatbot or the expansive AI-generated answer panel sitting prominently at the top of a traditional search engine results page. The content style designed for this environment must be incredibly concise, purely factual, densely informative, and easily citable by a machine. Success here is not measured in website sessions or page views. Instead, success is quantified by citation frequency, reference rates across multiple LLM platforms, and the brand’s overarching share of voice within AI-generated responses. If ChatGPT recommends your logistics software over a competitor when asked for supply chain solutions, your GEO strategy has succeeded, regardless of whether the user clicked a link to your homepage.

Conversely, Search Agent Optimization targets the hidden, programmatic layer of autonomous operations. The interface here is not a graphical screen that a human user reads, but rather an API endpoint, a structured data feed, or a webhook that a machine parses in milliseconds. Content for SAO does not consist of engaging blog posts, persuasive service pages, or cleverly designed landing pages. Instead, SAO content consists of rigorous OpenAPI specifications, flawless JSON-LD data structures, and highly structured machine-readable markdown files. Success in the realm of SAO is strictly binary and functional: either the external AI agent can successfully authenticate, comprehend your documentation, and execute a programmatic function using your data, or it throws an error and fails. There is no gray area in SAO.

Voice Search Optimization, meanwhile, targets the increasingly prevalent zero-click, audio-only environment. The content format relies heavily on short, highly natural-language answers, often extracted verbatim from FAQ schemas, direct summary blocks, or featured snippets. Because a voice assistant can only read one answer aloud, competition is fierce. There is no “page two” in voice search. Success in VSO means achieving total dominance for a specific conversational query, capturing the spoken intent of the user, and becoming the single preferred answer broadcast through the user’s smart device or mobile phone speaker.

The AI Paradigm Shift: How Large Language Models Retrieve Data

To effectively build an AI-optimized digital platform, one must first deeply understand the mechanics of how Large Language Models like ChatGPT, Claude, Perplexity, and Google’s underlying AI systems actually interact with the web. This retrieval process is fundamentally, structurally different from how traditional search engine crawlers have operated for the past two decades.

For decades, traditional search engines deployed basic web crawlers to index billions of web pages, storing them in vast databases and ranking them based on a relatively transparent formula of keyword relevance, on-page optimization, and backlink authority. If a user searched for a specific query, the engine’s algorithm served a ranked list of hyperlinks. The user clicked a link, read the page, and the business captured the resulting web traffic. The entire economy of the internet was built on this click-through traffic model.

Large Language Models, however, do not want to send users to your website. Their primary objective is to synthesize a comprehensive, accurate answer directly within their own chat interface, keeping the user engaged in their own ecosystem. To accomplish this, they rely on a sophisticated architectural process known as Retrieval-Augmented Generation (RAG).

When a user asks a complex or novel question, the AI performs a rapid, real-time web search in the background. It downloads the textual content of the top-ranking pages, parses that text, breaks it down into modular, semantic chunks, and then reconstructs a newly synthesized answer based on those fragments.
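To make the retrieval step concrete, here is a deliberately simplified sketch of chunk-and-retrieve. It assumes the pages have already been downloaded as plain text and ranks chunks by query-term overlap; production RAG systems typically use embedding similarity instead, and the generation step (sending the assembled prompt to an LLM) is left out. The URLs and the 50-word chunk size are illustrative assumptions.

```python
import re
from collections import Counter


def chunk(text, max_words=50):
    """Split text into fixed-size word windows (a crude stand-in for semantic chunking)."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query, passage):
    """Count how often the query's distinct terms appear in the passage."""
    terms = set(tokenize(query))
    counts = Counter(tokenize(passage))
    return sum(counts[t] for t in terms)


def retrieve(query, pages, k=2):
    """Return the k highest-scoring (url, chunk) pairs across all downloaded pages."""
    candidates = [(url, c) for url, text in pages.items() for c in chunk(text)]
    candidates.sort(key=lambda pair: score(query, pair[1]), reverse=True)
    return candidates[:k]


def build_prompt(query, passages):
    """Assemble the context an LLM would receive; keeping URLs is what makes citation possible."""
    sources = "\n".join(f"[{i}] {url}: {text}" for i, (url, text) in enumerate(passages, 1))
    return f"Answer using only these sources and cite them.\n{sources}\n\nQuestion: {query}"


pages = {
    "https://example.com/pricing": "Our plans start at 49 euros per month. Pricing includes support.",
    "https://example.com/about": "We are a small team founded in Lisbon.",
}
question = "How much does pricing cost per month?"
top = retrieve(question, pages, k=1)
print(build_prompt(question, top))
```

The practical takeaway is that only the chunks that win this selection reach the model, so a page whose key facts are scattered across long paragraphs competes poorly against one that states them compactly.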

AI models are notably impatient readers. They demand immediate clarity and clean structure. If your business’s website is built on heavy, unoptimized JavaScript frameworks, or if your core informational content is buried beneath intrusive pop-ups, slow-loading media, and unstructured, rambling paragraphs, the AI bot will simply move on and pull the necessary data from a competitor’s faster, clearer platform. At Tool1.app, we regularly find that beautifully designed modern websites are, ironically, invisible to AI search engines. Sites built with heavy client-side rendering often appear as blank, empty pages to AI crawlers like GPTBot or ClaudeBot, which generally read the raw HTML without executing JavaScript. Traditional search optimization tools and plugins do not solve this deep infrastructure problem; overcoming it requires a fundamental rethinking of technical web development from the ground up.
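A quick way to see what a non-rendering crawler sees is to count the visible words in the HTML exactly as your server sends it. The sketch below uses only Python’s standard library; it assumes the crawler does not run JavaScript, and the user-agent string in `fetch_raw_html` is a hypothetical label rather than any real bot’s identifier.

```python
import urllib.request
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collect text a non-rendering crawler would see, skipping script and style bodies."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.skip_depth = 0
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth and data.strip():
            self.parts.append(data.strip())


def visible_word_count(html):
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return len(" ".join(parser.parts).split())


def fetch_raw_html(url, user_agent="ai-visibility-check/0.1"):
    """Download the HTML as served, without executing any JavaScript (hypothetical UA label)."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


client_rendered = '<html><body><div id="root"></div><script src="/app.js"></script></body></html>'
server_rendered = ("<html><head><title>Pricing</title></head><body><h1>Pricing</h1>"
                   "<p>Plans start at 49 euros per month.</p></body></html>")
print(visible_word_count(client_rendered))  # 0
print(visible_word_count(server_rendered))  # 9
```

Running `visible_word_count(fetch_raw_html(url))` against your own pages gives a rough signal: a count near zero on a content-rich page suggests the text only exists after client-side rendering.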

The Critical Role of E-E-A-T and Semantic Authority

Furthermore, AI algorithms appear to prioritize different trust signals than traditional search algorithms. While traditional search historically rewarded an abundance of backlinks, generative engines lean more heavily on brand mentions, contextual sentiment, and verifiable, hard facts. The signals they reward closely mirror what Google’s Search Quality Rater Guidelines call “E-E-A-T”: Experience, Expertise, Authoritativeness, and Trustworthiness.

In the AI era, semantic authority is everything. If your brand is frequently mentioned alongside positive sentiment on highly authoritative, heavily moderated platforms like Reddit, Wikipedia, or industry-specific specialized forums, the AI internalizes your brand as a globally trusted entity for that specific topic. Conversely, if your website produces massive volumes of generic, low-quality content that lacks original research, expert author biographies, or transparent citations, the AI will confidently exclude your brand from its generative summaries. To bake E-E-A-T into your content strategy, businesses must aggressively showcase real-world case studies, include comprehensive author biographies with verifiable credentials, and maintain highly accurate representations in Google’s Knowledge Graph by utilizing robust Organization schema markup.
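As an illustration of the Organization markup mentioned above, the snippet builds a minimal JSON-LD object with Python’s standard `json` module. The company name, URLs, and founder details are placeholders; the `sameAs` links to profiles on other authoritative sites are one common way to help knowledge graphs tie your brand mentions to a single entity.

```python
import json

organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",  # placeholder values throughout
    "url": "https://www.example.com",
    "logo": "https://www.example.com/logo.png",
    "description": "Custom web, mobile, and AI/LLM development studio.",
    # Profiles on third-party sites that help disambiguate the entity.
    "sameAs": [
        "https://www.linkedin.com/company/example-co",
        "https://github.com/example-co",
    ],
    # A named expert with a verifiable role supports author credibility.
    "founder": {
        "@type": "Person",
        "name": "Jane Doe",
        "jobTitle": "Chief Technology Officer",
        "sameAs": "https://www.linkedin.com/in/jane-doe-example",
    },
}

json_ld = json.dumps(organization, indent=2, ensure_ascii=False)
print(f'<script type="application/ld+json">\n{json_ld}\n</script>')
```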

Actionable Development Strategies for the AI-First Web

Transitioning your digital presence to survive and thrive in the AI era requires deep, intentional technical implementation. It is no longer enough to simply hire a copywriter to produce keyword-rich blog posts; that content must be rigorously machine-readable, flawlessly structured, and programmatically accessible to a variety of autonomous agents.

Implementing Advanced Schema Markup

Schema markup, specifically utilizing the JSON-LD (JavaScript Object Notation for Linked Data) format, is the foundational structured data vocabulary that translates human-readable web content into machine-readable semantic context.

To understand the importance of schema, imagine walking into a massive, chaotic grocery store where there are no aisles, no department signs, and no product labels. The steaks are next to the laundry detergent, the fresh produce is mixed with automotive supplies, and the dairy products are scattered randomly across the floor. Finding a specific item would be deeply frustrating, if not impossible. That chaotic environment is roughly how an AI tool views a standard, unstructured website. The textual content might technically exist on the page, but without schema markup, there is no explicit organization, structural hierarchy, or semantic context, and search engines and AI platforms struggle to make accurate sense of it.

By injecting precise JSON-LD scripts directly into your website’s source code, you explicitly define the exact nature and meaning of your content for the machine. You can explicitly tag elements as Frequently Asked Questions, step-by-step How-To guides, detailed product specifications, comprehensive software reviews, or nuanced organizational hierarchies. For example, if your website contains a section answering common customer queries, wrapping that specific content in an FAQPage schema explicitly tells the AI model, “Here is a specific question, and here is the exact, definitive answer.”

Industry research suggests that structured formats like FAQ and HowTo schemas are pulled into AI summaries and generative overviews far more often than unstructured paragraph text. When developing custom websites at Tool1.app, we never treat schema markup as a post-launch afterthought. We build dynamic JSON-LD injection directly into the core web architecture, ensuring that every product listing, author biography, and service landing page sends clear semantic signals to generative engines.
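A minimal sketch of the FAQPage wrapping and dynamic injection described in this section, assuming your pages are rendered as HTML strings on the server. The `faq_page` and `inject_json_ld` helpers are hypothetical names; the FAQPage, Question, and Answer types and the `mainEntity` and `acceptedAnswer` properties come from the schema.org vocabulary.

```python
import json


def faq_page(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": q, "acceptedAnswer": {"@type": "Answer", "text": a}}
            for q, a in pairs
        ],
    }


def inject_json_ld(html, data):
    """Insert a JSON-LD script tag just before </head>."""
    # Escaping "</" keeps answer text from accidentally closing the script element.
    payload = json.dumps(data, ensure_ascii=False).replace("</", "<\\/")
    tag = f'<script type="application/ld+json">{payload}</script>'
    if "</head>" not in html:
        raise ValueError("page has no </head> to inject into")
    return html.replace("</head>", tag + "</head>", 1)


page = "<html><head><title>FAQ</title></head><body>...</body></html>"
faq = faq_page([("Do you build mobile apps?", "Yes, for both iOS and Android.")])
print(inject_json_ld(page, faq))
```

Generating the markup from the same data that renders the visible FAQ keeps the two from drifting apart, which is the main reason to build injection into the templates rather than pasting JSON-LD by hand.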

The Answer-First Content Structure

When AI models parse web content to formulate an answer, they typically extract information in very short, concise spans of roughly 40 to 60 words. They do not want to read a long, meandering introduction filled with marketing fluff and broad generalizations. To optimize your content for immediate AI retrieval, you must adopt the classic journalistic “Inverted Pyramid” structure.

In practice, this means you must start every single page or core content section with a direct, highly concise answer to the main topic or question. Immediately deliver the most vital information in the first 50 words. Follow this dense opening block with short, incredibly clear headings (formatted as H2s and H3s) that perfectly mirror the exact conversational questions users are asking.

Furthermore, you must keep your paragraphs exceptionally short, ideally to a maximum of two to three sentences per block. Utilize bullet points and bold text formatting strategically to highlight key statistics, unique data points, and absolute facts. If a human reader cannot visually scan your page and fully understand the core message and primary data points within five seconds, an AI model will similarly struggle to extract, summarize, and cite your content.
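The structure rules above are easy to check automatically. The sketch below lints a Markdown draft: it flags any section whose opening paragraph exceeds 60 words and any paragraph longer than three sentences. The thresholds are taken from this section; the regex-based sentence splitting is a rough assumption that will miscount abbreviations such as "e.g.".

```python
import re

MAX_OPENING_WORDS = 60  # upper end of the 40 to 60 word extraction span cited above
MAX_SENTENCES = 3       # "two to three sentences per block"


def paragraphs(block):
    """Split a section body into non-empty paragraphs on blank lines."""
    return [p.strip() for p in re.split(r"\n\s*\n", block) if p.strip()]


def sentence_count(paragraph):
    return len([s for s in re.split(r"(?<=[.!?])\s+", paragraph) if s.strip()])


def lint_answer_first(markdown):
    """Flag sections whose opening is too long or whose paragraphs run past three sentences."""
    parts = re.split(r"^(#{1,6} .*)$", markdown, flags=re.MULTILINE)
    # re.split keeps the captured headings: [preamble, heading1, body1, heading2, body2, ...]
    sections = [("(top)", parts[0])] + list(zip(parts[1::2], parts[2::2]))
    warnings = []
    for heading, body in sections:
        paras = paragraphs(body)
        if not paras:
            continue
        opening_words = len(paras[0].split())
        if opening_words > MAX_OPENING_WORDS:
            warnings.append(f"{heading}: opening paragraph has {opening_words} words")
        for i, para in enumerate(paras, 1):
            n = sentence_count(para)
            if n > MAX_SENTENCES:
                warnings.append(f"{heading}: paragraph {i} has {n} sentences")
    return warnings


page = """# What does GEO cost?

GEO retainers vary by scope. Most are billed monthly.

## Why does structure matter?

One. Two. Three. Four.
"""
for warning in lint_answer_first(page):
    print(warning)  # ## Why does structure matter?: paragraph 1 has 4 sentences
```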

Protocol Optimization: Navigating robots.txt and llms.txt

Controlling exactly how different AI agents and bots access your site is the new, complex frontier of technical search optimization. You must strategically configure your robots.txt file to carefully manage entirely different categories of AI crawlers.

In the modern landscape, there are generally two types of AI bots: training bots and retrieval bots. Training bots scour the internet to ingest massive amounts of raw data to build future iterations of large language models. Retrieval bots, on the other hand, search the live web in real-time to answer a specific user query right now. From a strategic standpoint, a business may wish to allow real-time retrieval bots (such as OAI-SearchBot for ChatGPT’s web search or Perplexity-User) full access to their site to ensure they are cited in live answers. Simultaneously, that same business might choose to explicitly block training bots (like GPTBot or ClaudeBot) to prevent their proprietary data, unique methodologies, and copyrighted content from being scraped, absorbed, and regurgitated by future models without proper attribution or compensation.
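Here is a sketch of the split described above: retrieval bots allowed, training bots blocked, everyone else allowed. The policy is checked with Python’s built-in `urllib.robotparser`, which matches on the bot’s product token. Treat the rule set as an illustrative assumption: crawler names change, so confirm them against each vendor’s current documentation, and remember that robots.txt is a voluntary convention that well-behaved bots choose to follow.

```python
from urllib.robotparser import RobotFileParser

# Illustrative policy: allow live-retrieval bots, block training bots.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: Perplexity-User
Allow: /

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

url = "https://www.example.com/services/custom-software"
for agent in ["GPTBot", "ClaudeBot", "OAI-SearchBot", "Perplexity-User", "Googlebot"]:
    print(f"{agent:16} {'allowed' if parser.can_fetch(agent, url) else 'blocked'}")
```

Testing the file this way before deploying it catches typos in bot names, which otherwise fail silently by falling through to the `*` rule.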

Furthermore, the technology industry is experimenting with a proposed new standard: the llms.txt file. Like a standard robots.txt file, an llms.txt file sits in your website’s root directory. However, instead of telling bots what not to crawl, the llms.txt file acts as an explicitly structured map, guiding Large Language Models directly to your most important, highest-quality, AI-ready content.

The llms.txt file uses plain Markdown: a single hash (#) for the H1 title, double hashes (##) for sub-sections, and standard syntax for hyperlinks and blockquotes. This file explicitly points AI agents toward canonical documentation, structured data exports, XML sitemaps, and high-priority business resources. Crucially, it strips away confusing site navigation menus, promotional banners, and website fluff, allowing the AI to ingest pure, highly structured knowledge. For highly complex websites, developers are also implementing llms-full.txt files, which go a step further by aggregating the full text of critical documentation into a single large file, allowing an AI model to ingest an entire knowledge base in a single request.
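A small generator makes the format concrete. This sketch follows the layout published with the llms.txt proposal (H1 title, blockquote summary, H2 sections of `- [title](url): note` links, with an "Optional" section for links that can be skipped when context is short). The site name and URLs are placeholders, and the `build_llms_full` layout is an assumption, since llms-full.txt has no fixed structure beyond being one large Markdown file.

```python
def build_llms_txt(site_name, summary, sections):
    """Render an llms.txt body: H1 title, blockquote summary, then H2 link lists."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        for title, url, note in links:
            lines.append(f"- [{title}]({url})" + (f": {note}" if note else ""))
        lines.append("")
    return "\n".join(lines).rstrip("\n") + "\n"


def build_llms_full(documents):
    """Concatenate full Markdown documents into one llms-full.txt body (layout assumed)."""
    return "\n\n".join(f"# {title}\n\n{body.strip()}" for title, body in documents.items()) + "\n"


sections = {
    "Docs": [
        ("API reference", "https://www.example.com/docs/api.md", "Endpoints, parameters, and auth"),
        ("Pricing", "https://www.example.com/pricing.md", ""),
    ],
    "Optional": [
        ("Case studies", "https://www.example.com/case-studies.md", "Client results by industry"),
    ],
}
llms_txt = build_llms_txt("Example Co", "Custom web, mobile, and AI/LLM development.", sections)
print(llms_txt)
```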

Deep Dive into Search Agent Optimization (SAO)

As we move from conversational AI interfaces to autonomous AI systems, the importance of Search Agent Optimization cannot be overstated. If your business relies on providing software, offering SaaS platforms, or distributing proprietary data feeds, optimizing for SAO requires an absolute mastery of advanced API design and integration patterns. AI agents are software programs, and they need standardized, highly secure ways to interact with your proprietary systems.

There are five primary API integration patterns that developers currently use to connect autonomous AI agents to external commercial systems. Understanding these patterns is essential for business owners looking to make their platforms agent-ready.

1. Direct API Calls: This is the most basic, foundational pattern. The agent’s underlying code directly generates and executes raw HTTP requests to your server. The agent is entirely responsible for the full lifecycle of the request, including acquiring proper authentication tokens, parsing complex JSON responses, and handling diverse server errors. While this offers maximum control for the developer, it is incredibly brittle. If you change a single parameter in your target API, the agent’s code breaks, leading to massive maintenance burdens and high security risks as sensitive API credentials are often handled directly in the application code.

2. Tool (Function) Calling: In this model-native pattern, widely supported by industry leaders like OpenAI, Google, and Anthropic, the Large Language Model does not generate raw, error-prone API calls itself. Instead, developers define specific “tools” using structured schemas, typically written in JSON Schema (the same schema language that OpenAPI specifications build on). The LLM analyzes a user’s request and simply outputs a structured JSON object specifying exactly which tool to call and the necessary arguments. The application backend then securely executes the actual function call. This is vastly more reliable than direct generation, but still requires significant developer overhead to manage authentication for every single tool.

3. Model Context Protocol (MCP) Gateway: MCP is an emerging open standard that gives autonomous agents and proprietary tools a common interface for communicating. An MCP Gateway serves as a centralized, secure intermediary where an agent can dynamically discover what tools are available on your platform. The gateway handles authentication and execution, abstracting the underlying API complexities away from the AI agent. This allows an enterprise agent to connect to a gateway and immediately understand how to query an employee directory or pull a sales report without needing to be hard-coded with the specific API details of the underlying HR or CRM system.

4. Unified API for Agent Integrations: In this pattern, a third-party platform provides a single, standardized API for an entire category of software, such as CRM, Marketing, or Accounting. Developers build one single integration against the Unified API, which then automatically translates those calls into the specific native formats for various distinct applications like Salesforce, HubSpot, or Zoho. This drastically reduces development time and outsources the maintenance burden, though it introduces slight latency and relies on a middleman.

5. Agent-to-Agent (A2A) Protocols: This is the most advanced, futuristic pattern, allowing decentralized, autonomous agents to communicate with each other and dynamically delegate tasks to highly specialized sub-agents. For example, a generalist Travel Planning Agent might autonomously delegate highly specific sub-tasks to a specialized Flight Booker Agent or a localized Hotel Booker Agent. While it enables incredibly complex, emergent software behavior, A2A protocols are highly complex to implement, and industry standards for discovery and communication remain nascent.
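Pattern 2 is the easiest of these to illustrate without any vendor SDK. The sketch below shows the backend half of tool calling: a registry pairing each function with the JSON Schema description you would send to the model, plus a dispatcher that validates the model’s JSON output before executing anything. The `get_order_status` tool and the `{"name": ..., "arguments": {...}}` message shape are simplified, hypothetical examples, not any specific provider’s wire format.

```python
import json

# JSON Schema type names mapped to Python types for a basic argument check.
JSON_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


def get_order_status(order_id):
    """Hypothetical backend function; a real one would query your order system."""
    return {"order_id": order_id, "status": "shipped"}


TOOLS = {
    "get_order_status": {
        "function": get_order_status,
        # This schema is what the model sees when deciding which tool to call.
        "schema": {
            "name": "get_order_status",
            "description": "Look up the fulfilment status of a customer order by its ID.",
            "parameters": {
                "type": "object",
                "properties": {"order_id": {"type": "string", "description": "Order ID, e.g. A-1001"}},
                "required": ["order_id"],
            },
        },
    },
}


def dispatch(model_output):
    """Validate a model-emitted tool call and execute it only if it matches the schema."""
    call = json.loads(model_output)
    tool = TOOLS.get(call.get("name"))
    if tool is None:
        return {"error": f"unknown tool: {call.get('name')}"}

    params = tool["schema"]["parameters"]
    props = params["properties"]
    args = call.get("arguments", {})
    missing = [p for p in params["required"] if p not in args]
    unexpected = [a for a in args if a not in props]
    wrong_type = [a for a, v in args.items() if a in props and not isinstance(v, JSON_TYPES[props[a]["type"]])]
    if missing or unexpected or wrong_type:
        return {"error": "invalid arguments", "missing": missing,
                "unexpected": unexpected, "wrong_type": wrong_type}
    return tool["function"](**args)


print(dispatch('{"name": "get_order_status", "arguments": {"order_id": "A-1001"}}'))
# {'order_id': 'A-1001', 'status': 'shipped'}
print(dispatch('{"name": "get_order_status", "arguments": {"order_id": 42}}'))
# error dict listing order_id under wrong_type
```

Because the model only ever proposes a call, the dispatcher is also the natural place to attach credentials, so API keys never pass through the model’s context.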

To ensure your platform is discoverable and usable by these agents, providing machine-readable documentation is critical. Human-readable API documentation hosted on a nicely designed webpage is of little use to an autonomous script. Your business must provide comprehensive OpenAPI specifications that formally define every endpoint, every required parameter, and every accepted authentication method. The documentation must be written with the explicit assumption that an intelligent system with zero human context, intuition, or background knowledge will be reading it completely autonomously. Building these secure, well-documented, agent-ready API gateways is a highly specialized service that our senior development teams at Tool1.app architect for modern enterprises seeking to integrate deeply into the AI ecosystem.
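To check whether a specification meets that bar, a short audit can walk an OpenAPI document and list the gaps an agent would otherwise have to guess around. This is a minimal sketch under stated assumptions: the spec is already loaded as a Python dict, the example API is hypothetical, and `$ref` parameters are skipped rather than resolved.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def audit_openapi(spec):
    """List gaps that would leave an autonomous agent guessing. $ref parameters are skipped."""
    problems = []
    if not spec.get("components", {}).get("securitySchemes"):
        problems.append("no securitySchemes declared")
    for path, item in spec.get("paths", {}).items():
        for method, op in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters" or "summary"
            where = f"{method.upper()} {path}"
            if "operationId" not in op:
                problems.append(f"{where}: missing operationId")
            if not (op.get("summary") or op.get("description")):
                problems.append(f"{where}: no description or summary")
            for param in op.get("parameters", []):
                if "$ref" in param:
                    continue
                if "schema" not in param and "content" not in param:
                    problems.append(f"{where}: parameter {param.get('name')!r} has no schema")
    return problems


spec = {
    "openapi": "3.1.0",
    "info": {"title": "Example Orders API", "version": "1.0.0"},
    "components": {"securitySchemes": {"bearerAuth": {"type": "http", "scheme": "bearer"}}},
    "security": [{"bearerAuth": []}],
    "paths": {
        "/orders/{orderId}": {
            "get": {
                "operationId": "getOrder",
                "summary": "Fetch one order by ID",
                "parameters": [
                    {"name": "orderId", "in": "path", "required": True, "schema": {"type": "string"}}
                ],
                "responses": {"200": {"description": "The order"}},
            }
        },
        "/orders": {"post": {"responses": {"201": {"description": "Created"}}}},
    },
}
for problem in audit_openapi(spec):
    print(problem)
```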

Voice Search Optimization in the Generative Era

While APIs govern how machines talk to machines, Voice Search Optimization governs how machines talk to humans. VSO has transcended the simple, rigid commands of early smart speakers. With the introduction of advanced multimodal voice features like ChatGPT’s Advanced Voice Mode and Google’s Gemini Live, voice search has become a fluid, deeply conversational, and highly dynamic experience.

Traditional voice search optimization strategies often focused on short, localized queries like “Where is the nearest coffee shop?” However, generative voice search completely changes this dynamic. Users are now having long, complex, multi-turn conversations with AI assistants. They are asking for detailed explanations of complex topics, brainstorming marketing ideas, and seeking highly nuanced product comparisons while driving their cars or cooking dinner.

When comparing the leading generative voice platforms, profound differences emerge in how they retrieve and cite web content. Industry analysis suggests that OpenAI’s Advanced Voice Mode often feels a generation ahead in terms of conversational naturalness. It possesses advanced interruption performance, allowing a user to pause and think without the AI rudely jumping in to finish a sentence. It also features robust memory capabilities, allowing a user to maintain a complex conversation over time. Google’s Gemini Live, while incredibly powerful due to its seamless integration with the Google ecosystem and real-time search dominance, has historically struggled more with conversational interruptions and periodic disconnections, sometimes making it difficult for users to execute complex information retrieval tasks over audio.

To optimize your business content to be cited by these advanced generative voice engines, you must completely abandon keyword stuffing. Instead, you must publish incredibly clear, highly structured, and well-sourced answers written in an engaging, conversational style. The text must flow naturally when read aloud. If your content sounds robotic, overly academic, or heavily corporate on paper, the AI voice assistant will likely avoid reading it to the user, opting instead for a source that provides a more natural, engaging audio experience.
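One rough way to test whether an answer will work when spoken is to estimate how long it takes to read aloud and to flag sentences a listener would struggle to follow. The 150-words-per-minute rate and the 20-word sentence limit below are assumptions chosen for illustration, not thresholds published by any voice platform.

```python
import re

WORDS_PER_MINUTE = 150   # assumed speaking rate for a synthetic voice
MAX_SENTENCE_WORDS = 20  # assumed limit for a sentence that is easy to follow by ear


def voice_report(answer):
    """Estimate read-aloud time and flag sentences likely too long to follow when heard."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    word_count = len(answer.split())
    return {
        "words": word_count,
        "est_seconds": round(word_count / WORDS_PER_MINUTE * 60, 1),
        "long_sentences": [s for s in sentences if len(s.split()) > MAX_SENTENCE_WORDS],
    }


print(voice_report("We are open from 9 to 5 on weekdays. Call ahead for weekend appointments."))
# {'words': 14, 'est_seconds': 5.6, 'long_sentences': []}
```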

Python Automations for Tracking AI Visibility

One of the most deeply frustrating and highly disruptive aspects of the massive shift toward AI search is the sudden, catastrophic loss of traditional marketing analytics. For decades, digital marketers have relied on platforms like Google Analytics and Google Search Console to meticulously track exactly how many users visited their site, what keywords they searched for, and how they behaved once they arrived.

In the zero-click AI era, that data goes dark. When ChatGPT, Perplexity, or Gemini successfully recommends your software product to a highly qualified enterprise buyer, it rarely generates a trackable click to your website. The user gets their answer directly in the chat window and makes their decision without ever visiting your domain. Consequently, your Google Analytics dashboard may show declining or stagnant traffic even while AI assistants are recommending your brand frequently. Traditional SEO metrics are completely blind to AI visibility.

To measure actual success in this new landscape, businesses must fundamentally shift their key performance indicators. Instead of tracking clicks, they must track “citation frequency,” “brand mentions,” and “brand share of voice” within specific AI responses. Furthermore, they must track the “sentiment” of those mentions—determining whether the AI is framing the brand in a positive, neutral, or negative context.

Because the major AI platforms currently do not natively provide this granular marketing data to external business owners, the most practical solution is custom software automation. Using the Python programming language, businesses can build automated monitoring systems that systematically and continuously query AI APIs to track their brand’s visibility.

At Tool1.app, we specialize in building these advanced Python automations. A typical AI visibility tracking architecture operates in several distinct stages:

First, the Python script relies on automated prompting. It is programmed to run hundreds of industry-specific, highly targeted prompts every single day through parallel API calls to platforms like OpenAI, Anthropic, and Perplexity (e.g., “What is the most reliable custom software development agency in Europe?”).

Second, the script utilizes advanced Natural Language Processing (NLP) to deeply parse the unstructured, conversational text returned by the AI models.

Third, the automation performs precise entity extraction. It scans the raw AI response to see if your specific brand name, your core products, or your direct competitors’ brand names were explicitly mentioned or formally cited as a source.

Finally, the script conducts sentiment analysis, scoring the context of each mention to determine whether the AI is speaking favorably about your brand.
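The four stages can be sketched end to end as follows. This is a minimal offline sketch: `query_model` is a stub returning canned answers where a real system would call the OpenAI, Anthropic, or Perplexity APIs; regex sentence splitting stands in for a proper NLP parser; the competitor names are hypothetical; and the tiny sentiment lexicon is an illustrative assumption in place of a trained sentiment model.

```python
import re
from concurrent.futures import ThreadPoolExecutor

BRAND = "Tool1.app"
COMPETITORS = ["ExampleDev", "SampleSoft"]  # hypothetical competitor names

# Tiny illustrative lexicon; a production system would use a trained sentiment model.
POSITIVE = {"reliable", "recommended", "excellent", "trusted", "leading"}
NEGATIVE = {"unreliable", "expensive", "slow", "avoid", "outdated"}

PROMPTS = [
    "What is the most reliable custom software development agency in Europe?",
    "Which agencies build Python automations for AI visibility tracking?",
]


def query_model(prompt):
    """Stub standing in for a real LLM API call; returns canned answers so the sketch runs offline."""
    canned = {
        PROMPTS[0]: "Tool1.app is a reliable option for custom web apps. ExampleDev is also recommended.",
        PROMPTS[1]: "SampleSoft offers dashboards, but it can be expensive. ExampleDev is another choice.",
    }
    return canned.get(prompt, "")


def count_mentions(text, name):
    """Case-insensitive whole-name match; re.escape handles the dot in names like Tool1.app."""
    return len(re.findall(r"(?<!\w)" + re.escape(name) + r"(?!\w)", text, flags=re.IGNORECASE))


def brand_sentiment(text, name):
    """Net lexicon score over sentences mentioning the brand; None if it is never mentioned."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text) if count_mentions(s, name)]
    if not sentences:
        return None
    words = re.findall(r"[a-z]+", " ".join(sentences).lower())
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def analyze(prompt):
    answer = query_model(prompt)
    return {
        "prompt": prompt,
        "brand_mentions": count_mentions(answer, BRAND),
        "competitor_mentions": {c: count_mentions(answer, c) for c in COMPETITORS},
        "sentiment": brand_sentiment(answer, BRAND),
    }


# Run prompts in parallel; pool.map preserves the input order of PROMPTS.
with ThreadPoolExecutor(max_workers=4) as pool:
    results = list(pool.map(analyze, PROMPTS))

for row in results:
    print(row["brand_mentions"], row["competitor_mentions"], row["sentiment"])
```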

By aggressively aggregating this complex data over time, business owners can build a proprietary “AI Visibility Index.” Our Python automation experts at Tool1.app regularly build and deploy these custom monitoring dashboards for our enterprise clients, allowing them to definitively prove the ROI of their Generative Engine Optimization efforts, aggressively monitor their competitors, and adapt their content strategies based on real-time LLM outputs, even in a completely zero-click environment.
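There is no standard definition of an “AI Visibility Index”, so the sketch below assumes the simplest one: daily share of voice, meaning your brand’s mentions divided by all tracked-brand mentions that day. The records mirror the per-prompt mention counts a tracker like the one above produces; weighting by sentiment or by platform would be natural extensions.

```python
from collections import defaultdict
from datetime import date


def visibility_index(records, brand):
    """Daily share of voice: brand mentions divided by all tracked-entity mentions that day.

    records: iterable of (day, {entity_name: mention_count}) rows, e.g. one row per prompt run.
    """
    daily = defaultdict(lambda: defaultdict(int))
    for day, counts in records:
        for name, n in counts.items():
            daily[day][name] += n

    index = {}
    for day in sorted(daily):
        total = sum(daily[day].values())
        index[day] = daily[day].get(brand, 0) / total if total else 0.0
    return index


records = [
    (date(2025, 1, 1), {"Tool1.app": 1, "ExampleDev": 1}),
    (date(2025, 1, 1), {"ExampleDev": 1, "SampleSoft": 1}),
    (date(2025, 1, 2), {"Tool1.app": 3, "ExampleDev": 1}),
]
for day, share in visibility_index(records, "Tool1.app").items():
    print(day.isoformat(), f"{share:.0%}")
# 2025-01-01 25%
# 2025-01-02 75%
```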

The Business Case: Costs, ROI, and Industry Impact

Adapting a business to survive the AI-driven search revolution is not a superficial marketing update or a minor budget reallocation; it is a fundamental, existential infrastructure investment that requires immediate, dedicated CEO-level attention. Organizations that mistakenly treat this massive shift purely as a marketing or copywriting challenge, rather than a deep technical transformation of their data architecture, will be swiftly left behind by competitors who understand the stakes.

The underlying economics of search visibility and digital customer acquisition are shifting dramatically. In the traditional SEO model, a small business might have spent anywhere between €1,380 and €2,760 monthly for standard retainer services, while complex enterprise campaigns scaled upward of €18,400 to €23,000 per month. However, successfully optimizing for the comprehensive ALLAI ecosystem requires vastly deeper technical infrastructure, multi-platform API citation tracking, the development of custom Python monitoring dashboards, and the continuous production of expert-verified, E-E-A-T compliant content.

Because of these infrastructure requirements and the need for specialized technical talent, AI Search Optimization services command a premium. Proposals for Generative Engine Optimization and Search Agent Optimization strategies often carry a 15% to 25% cost increase over comparable traditional SEO retainers, and comprehensive programs can cost considerably more. For example, a standard traditional optimization package might cost a mid-sized business €2,760 monthly. A comprehensive, multi-platform AI visibility framework could range from €3,680 to €13,800 monthly, depending on the complexity of the backend data integration and the global scale of the enterprise.
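Here is a quick arithmetic check on these pricing figures. Applying the quoted 15% to 25% premium to the €2,760 baseline gives a narrower band than the €3,680 to €13,800 range. The low end of that range already implies roughly a 33% uplift, which fits the range depending on integration complexity and enterprise scale rather than on a flat percentage. The helper names are hypothetical.

```python
def premium_band(base_eur: float, low_pct: float, high_pct: float) -> tuple[float, float]:
    """Apply a low/high percentage premium to a baseline monthly retainer."""
    return (round(base_eur * (1 + low_pct / 100), 2),
            round(base_eur * (1 + high_pct / 100), 2))

def implied_premium_pct(base_eur: float, quoted_eur: float) -> float:
    """Percentage uplift implied by a quoted price relative to the baseline."""
    return round((quoted_eur / base_eur - 1) * 100, 1)

print(premium_band(2760, 15, 25))        # (3174.0, 3450.0)
print(implied_premium_pct(2760, 3680))   # 33.3
print(implied_premium_pct(2760, 13800))  # 400.0
```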

Despite the higher upfront investment required to modernize this infrastructure, the return on investment (ROI) can be compelling for businesses that execute well. Some industry studies report that traffic referred by AI platforms converts at more than double the rate of traditional organic search: 11.4% for AI referrals versus 5.3% for standard organic search. A commonly cited explanation is that users querying AI models are typically further along in the buyer’s journey. They ask high-intent, complex questions to finalize purchasing decisions rather than browsing casually.
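To make the conversion gap concrete, the sketch below applies the quoted rates to a hypothetical 1,000 visits from each channel. The visit count and the function name are illustrative assumptions.

```python
def expected_conversions(visits: int, conversion_rate_pct: float) -> float:
    """Expected number of conversions from a given number of visits."""
    return visits * conversion_rate_pct / 100

visits = 1_000  # hypothetical: the same number of visits from each channel
ai = expected_conversions(visits, 11.4)       # AI-referred traffic
organic = expected_conversions(visits, 5.3)   # traditional organic search
print(f"AI: {ai:.0f}, organic: {organic:.0f}, ratio: {ai / organic:.2f}x")
```

At these rates, the same traffic yields 114 conversions from AI referrals versus 53 from organic search, a ratio of about 2.15.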

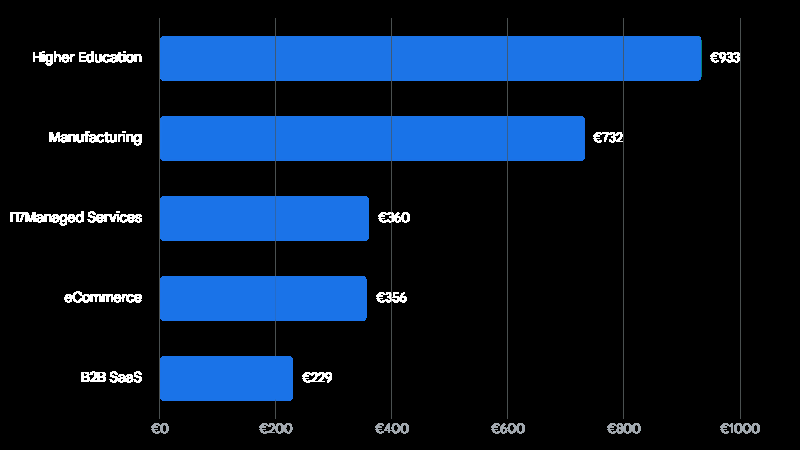

Generative Engine Optimization (GEO) Customer Acquisition Cost by Industry

While initial investment in AI infrastructure may be higher, the highly qualified nature of AI-referred traffic results in competitive customer acquisition costs, particularly in technical and structured data environments like B2B SaaS.

The industry data suggests that technical, highly structured industries benefit substantially from AI search optimization. The B2B SaaS sector, for instance, shows an average Customer Acquisition Cost (CAC) of just €229 under a GEO model. This efficiency likely reflects AI platforms’ strong preference for technical, well-structured documentation, clear feature comparisons, and clean APIs. These are the hallmarks of a natively integrated SAO and GEO strategy.

Similarly, the eCommerce sector maintains a competitive CAC of roughly €356, as AI agents become increasingly adept at parsing structured product schema to recommend specific items directly to consumers. The IT and Managed Services sector follows closely at €360. Industries that require extensive reputation management and longer, relationship-driven trust cycles see significantly higher acquisition costs. Higher Education, for example, averages €933. Even so, these high-touch institutions still need to be the primary cited authority in AI overviews to maintain their prestige.
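CAC is total acquisition spend divided by the number of new customers acquired. The sketch below encodes that formula. It then uses the industry averages quoted above to estimate how many customers a hypothetical €10,000 monthly budget would acquire in each sector. The budget and the function names are illustrative, and real CAC varies widely from business to business.

```python
# Average GEO customer acquisition costs quoted above, in euros.
AVERAGE_CAC_EUR = {
    "B2B SaaS": 229,
    "eCommerce": 356,
    "IT & Managed Services": 360,
    "Higher Education": 933,
}

def cac(total_spend_eur: float, new_customers: int) -> float:
    """Customer acquisition cost: total acquisition spend divided by customers won."""
    if new_customers <= 0:
        raise ValueError("new_customers must be positive")
    return total_spend_eur / new_customers

def customers_for_budget(budget_eur: float, cac_eur: float) -> int:
    """How many whole customers a budget buys at a given average CAC."""
    return int(budget_eur // cac_eur)

budget = 10_000  # hypothetical monthly budget
for industry, value in AVERAGE_CAC_EUR.items():
    print(f"{industry}: ~{customers_for_budget(budget, value)} customers per €{budget:,}")
```

At these averages, €10,000 acquires roughly 43 customers in B2B SaaS versus 10 in Higher Education.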

Real-world case studies further illustrate the financial impact of embracing the AI ecosystem. Organizations are not merely experimenting; they are deploying AI to change their revenue trajectories. For example, Dailymotion implemented AI search protocols for media discovery. It achieved a 17% increase in post-search click-through rates within the first week of testing. Improvements from its previous traditional optimization efforts had historically peaked at 2%.

Enterprise adoption is also accelerating. Recent surveys of global executives report that 52% of organizations already use AI agents in production environments. Among early adopters that have embedded AI agents across their operations, 88% report measurable, positive ROI from generative AI use cases, compared with a 74% average across all organizations.

Projections from leading consulting firms suggest that generative AI’s impact on productivity, marketing, and operational efficiency could add the equivalent of €2.39 trillion to €4.05 trillion annually to the global economy. The businesses positioned to capture this value are doing more than experimenting with off-the-shelf chatbots to write emails. They are systematically rebuilding their backend data architecture, documentation standards, and content strategies to become the authoritative source feeding those chatbots and autonomous agents.

Embracing the AI Discovery Era

The era in which ten blue links dominated the digital economy is ending. Generative Engine Optimization, Search Agent Optimization, and Voice Search Optimization are not fleeting marketing trends, minor algorithm updates, or industry buzzwords. They are the structural foundation of the future internet. As AI systems become the primary intermediaries between your business and your potential customers, your digital strategy must pivot. Instead of trying to game an algorithm for human clicks, you must supply accurate, highly structured, machine-readable information directly to autonomous systems.

Brands that adapt quickly to the comprehensive ALLAI framework will command outsized digital authority in the coming decade. They will become the default, implicitly trusted recommendations synthesized by AI agents and capture market share in a zero-click environment. Conversely, brands that fail to modernize their web infrastructure, ignore JSON-LD schema markup, and neglect API accessibility risk vanishing from the discovery ecosystem. Their businesses would become invisible to the machines that increasingly guide human decision-making.

Secure Your Place in the Future of Search

Don’t lose your search visibility to AI bots and forward-thinking competitors! The transition to an AI-first digital presence requires specialized technical expertise, from advanced JSON-LD schema implementation and rigorous API structuring to custom Python mention-tracking dashboards. Let Tool1.app build a fully AI-optimized web platform tailored to your business. You might need a custom website architected natively for Generative Engine Optimization, secure backend endpoints designed for Search Agent Optimization, or Python automations to reclaim your lost marketing analytics. Whatever the need, our team of expert developers and AI specialists is ready to future-proof your brand. Contact Tool1.app today to schedule a technical consultation and make your business the answer the world’s most powerful AI models are looking for.
