Integrating OpenAI’s Assistants API into Your SaaS App: Best Practices

Table of Contents

- The Evolution of Context Management and System State

- Designing the Copilot Architecture for Enterprise SaaS

- Core Engineering Patterns: A Practical Implementation

- Advanced Integration: Retrieval-Augmented Generation and File Handling

- Security, Privacy, and Zero-Trust Architecture

- Navigating the 2026 Regulatory Landscape: The EU AI Act and GDPR

- Token Economics and ROI Optimization Strategy

- The Future of SaaS is Agentic


The software-as-a-service landscape has undergone a profound transformation. Moving beyond static graphical user interfaces and rigid workflows, modern platforms are increasingly defined by their conversational intelligence. SaaS founders are in a relentless race to embed “Copilots”—highly capable, context-aware digital assistants—directly into their products. These AI agents do not merely answer questions; they orchestrate complex automated workflows, query proprietary databases, analyze vast troves of unstructured data, and exponentially enhance user productivity. However, deploying a production-ready AI assistant requires significantly more engineering rigor than simply forwarding text prompts to a language model. It demands sophisticated architectural planning, resilient state management, ironclad security perimeters, and strict adherence to global privacy regulations.

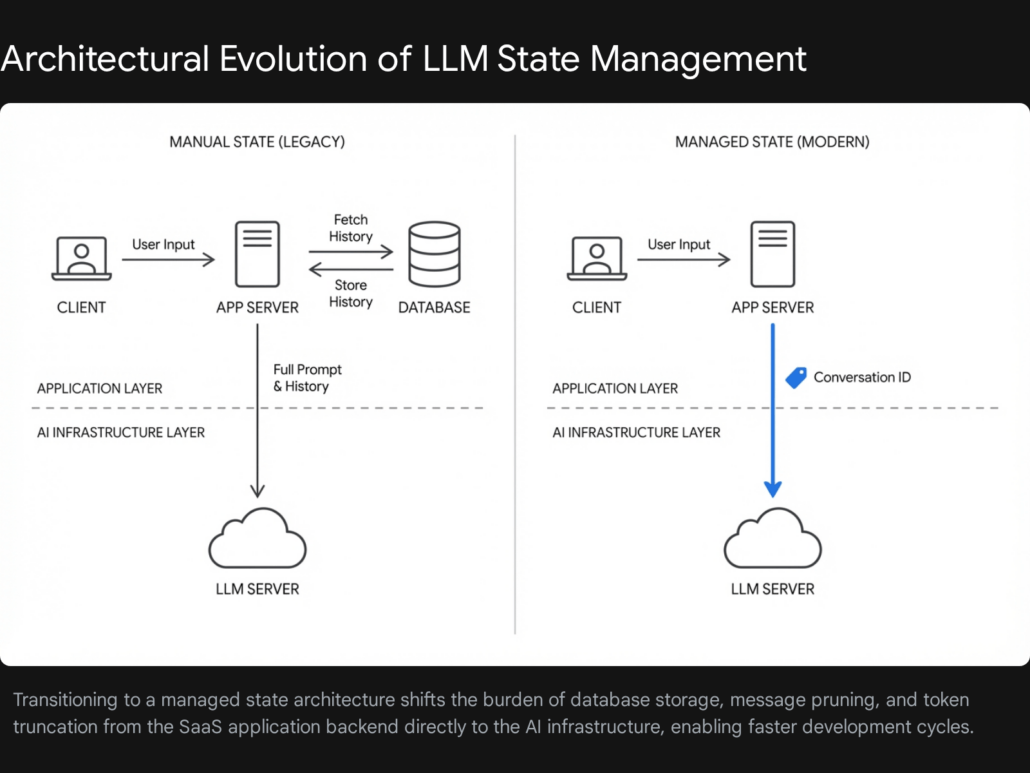

In the early days of generative AI integration, developers relying on raw chat completion endpoints faced a monumental hurdle: context management. Because large language models are inherently stateless, they retain no memory of previous interactions. To simulate an ongoing conversation, engineering teams had to build complex database architectures just to store, prune, summarize, and re-transmit entire chat logs with every single API call. The introduction of the OpenAI Assistants API revolutionized this paradigm by introducing persistent server-side threads, native retrieval-augmented generation (RAG), and streamlined function calling.

As we navigate the sophisticated AI ecosystem of 2026, the underlying technologies have evolved. OpenAI is actively migrating developers toward the more robust Responses API and Conversations API, preparing to sunset legacy Assistant constructs. Mastering these modern architectural patterns is no longer optional; it is critical for survival in the SaaS market. If you are searching for an exhaustive OpenAI Assistants API tutorial that bridges the gap between high-level theory and production-grade implementation, this comprehensive guide provides the blueprint. We will dissect the mechanics of persistent conversation state, the deployment of custom tools that bridge AI with internal systems, and the rigorous security measures required to maintain user privacy. At Tool1.app, we specialize in engineering scalable, secure AI and LLM solutions for modern businesses, and the strategies outlined in this report reflect the exact methodologies we deploy to build resilient SaaS Copilots.

The Evolution of Context Management and System State

To fully appreciate the architectural requirements of modern AI integration, one must first understand the foundational problem of context windows. A language model operates within a strict token limit—a maximum number of words or word fragments it can process in a single request. In traditional stateless architectures, developers were forced to implement manual sliding-window algorithms. As a conversation grew, the application backend had to calculate token counts using libraries like tiktoken, systematically dropping the oldest messages or passing them through a secondary summarization model before appending them to the new prompt. This approach was computationally expensive, highly prone to latency, and introduced significant points of failure.
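The manual sliding-window approach described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not a production implementation: `estimate_tokens` is a hypothetical stand-in that approximates token counts from word counts (a real system would call a tokenizer such as tiktoken), and `trim_history` and its budget parameter are names chosen for this example.

```python
from typing import Callable

Message = dict[str, str]


def estimate_tokens(text: str) -> int:
    """Rough stand-in for a tokenizer: assume about 4 tokens per 3 words."""
    words = len(text.split())
    return (words * 4 + 2) // 3  # integer ceiling of words * 4 / 3


def trim_history(
    messages: list[Message],
    max_tokens: int,
    count: Callable[[str], int] = estimate_tokens,
) -> list[Message]:
    """Keep system messages plus the newest messages that fit the token budget."""
    system = [m for m in messages if m["role"] == "system"]
    rest = [m for m in messages if m["role"] != "system"]

    budget = max_tokens - sum(count(m["content"]) for m in system)
    kept: list[Message] = []
    # Walk backwards from the newest message and stop at the first one that does not fit.
    for message in reversed(rest):
        cost = count(message["content"])
        if cost > budget:
            break
        kept.append(message)
        budget -= cost
    return system + list(reversed(kept))
```

Every request has to repeat this counting and pruning before it can be sent, which is the overhead that server-side conversation state removes.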

The original Assistants API solved this by offloading state management to OpenAI’s infrastructure. By introducing the concept of a persistent “Thread,” developers could simply append a new user message to an existing conversation object. The API’s backend automatically managed the context window, truncating older messages as needed so that the active prompt stayed within the model’s limits.

The Modern Paradigm: Conversations and Responses

As the ecosystem matured, the architectural demands of enterprise SaaS platforms necessitated a more flexible, performant, and agentic approach. The original construct—which required managing Assistants, Threads, and complex polling loops known as Runs—is being phased out. The industry standard has shifted to the Responses API combined with the Conversations API. This modern approach retains the seamless context management of the past while drastically simplifying the integration code and enabling multi-tool parallel execution within a single network request.

Instead of managing asynchronous polling loops, developers now combine durable conversation IDs with individual response requests. You create a conversation object once through the API, store its ID in your own database, and pass that identifier alongside each new input. This allows the AI to access historical context across different user sessions, mobile devices, or asynchronous background jobs without the overhead of manual state synchronization. For enterprise SaaS applications, this means your Copilot can recall a user’s specific workflow preferences from a session weeks ago and apply that context to a real-time data query today.

The transition also introduces the concept of “Prompts” replacing static “Assistant” objects. Previously, an Assistant was a persistent API object that bundled the model choice, system instructions, and tool declarations. If you needed to update the prompt, you had to execute an API call to mutate the object. Today, Prompts are managed configurations that separate application code from behavioral guidelines. Your SaaS application code handles the orchestration—managing history pruning, tool loops, and retries—while the Prompt focuses on high-level behavior, structured output schemas, and temperature defaults. This separation of concerns allows product teams to version control their AI behaviors independently of the core deployment pipeline.

Designing the Copilot Architecture for Enterprise SaaS

Before writing a single line of Python or configuring an API key, technical leadership must determine how the AI agent will interface with the SaaS application’s backend. The selected architectural pattern dictates the system’s latency, scalability, and, most importantly, its security posture. Depending on your specific use case, constraints, and scale, there are three dominant design patterns in modern SaaS AI development.

Embedded Flow Architecture

In the embedded architecture, the AI Copilot lives directly within the client interface, such as the frontend web application or native mobile app. The client application handles user inputs, makes direct calls to the AI service, and renders the output on the screen. This approach is highly responsive and excellent for simple, low-risk tasks like text summarization, grammar correction, or basic content generation. However, it is fundamentally flawed for enterprise SaaS. Because the client application coordinates the flow, exposing internal databases or proprietary backend business logic directly to the AI poses catastrophic security risks. Furthermore, storing API keys in client-side code, even when obfuscated, is a critical vulnerability.

API-Based Orchestration

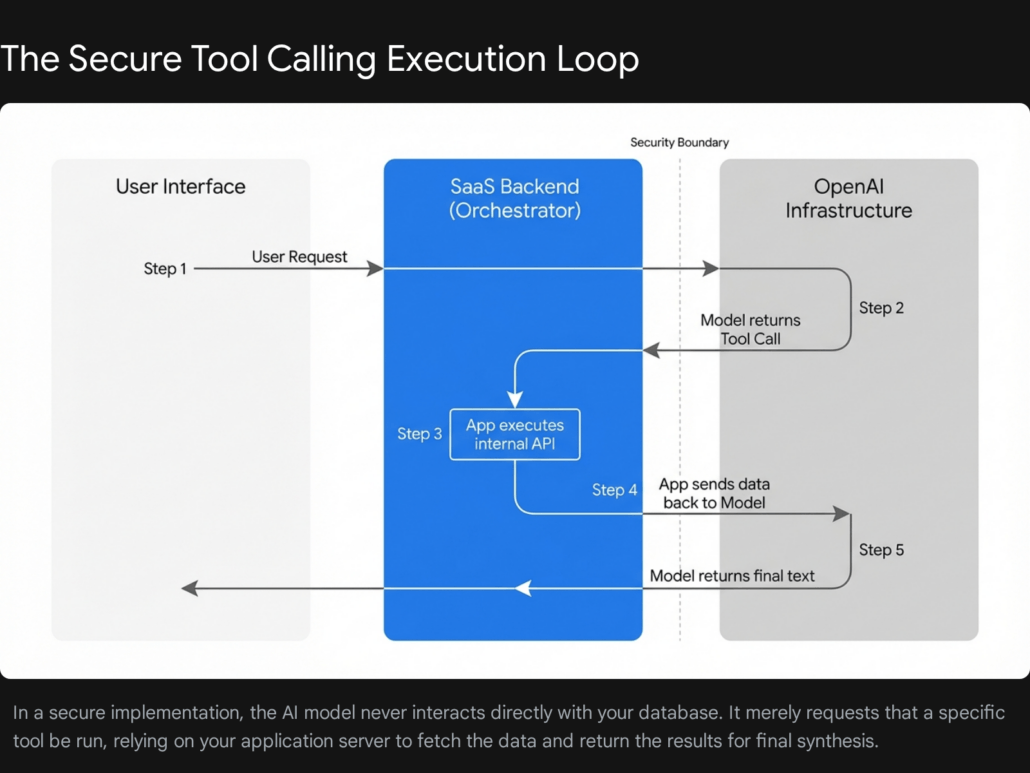

This is the enterprise gold standard for data-rich SaaS platforms. In this pattern, the user interfaces with the client app, which sends a standard HTTP request to your secure backend server. Your backend acts as the central Orchestrator. It authenticates the user, retrieves necessary contextual data from your internal databases, and securely constructs the payload to send to the AI provider.

When the AI model determines it needs more information to fulfill a user’s request, it sends a structured “tool call” back to your Orchestrator. Your server executes the requested function internally—meaning the AI never directly touches your database—and returns the resulting data for the model to synthesize. This hybrid flow allows for deterministic, highly secure data access combined with the dynamic reasoning capabilities of the large language model. It ensures that role-based access control (RBAC) is strictly enforced; if a user does not have permission to view a specific financial record in the SaaS platform, the Orchestrator will simply refuse to execute the tool call on their behalf.
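The RBAC check described above can live in a single dispatch function that every tool call passes through. The following is a minimal sketch under stated assumptions: `USER_ACCOUNT_ACCESS`, `fetch_billing_status`, and `execute_tool_call` are hypothetical names, and the in-memory permission table stands in for whatever authorization service your platform already uses.

```python
import json
from typing import Any, Callable

# Hypothetical permission table: user ID -> account IDs that user may view.
USER_ACCOUNT_ACCESS: dict[str, set[str]] = {
    "usr_99824": {"ACC-8492"},
}


def fetch_billing_status(account_id: str) -> dict[str, Any]:
    """Placeholder for a real, parameterized database query."""
    return {"account_id": account_id, "status": "Past Due"}


TOOL_REGISTRY: dict[str, Callable[..., dict[str, Any]]] = {
    "get_account_billing_status": fetch_billing_status,
}


def execute_tool_call(user_id: str, tool_name: str, raw_arguments: str) -> str:
    """Run a model-requested tool only if the authenticated user is allowed to."""
    handler = TOOL_REGISTRY.get(tool_name)
    if handler is None:
        return json.dumps({"error": "unknown_tool"})

    args = json.loads(raw_arguments)
    account_id = args.get("account_id", "")
    # Authorization uses the server-side session identity (user_id),
    # never an identity claimed inside the model's arguments.
    if account_id not in USER_ACCOUNT_ACCESS.get(user_id, set()):
        return json.dumps({"error": "forbidden"})

    return json.dumps(handler(account_id=account_id))
```

Returning a structured error instead of raising lets the model explain to the user that the record is unavailable, without revealing whether it exists.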

Agentic Ecosystems and Autonomous Workflows

For highly complex, multi-step scenarios, applications utilize independent, autonomous agents. These agents operate in the background, untethered from a synchronous user interface session. They continuously poll systems, trigger internal workflows, monitor external data streams, and make decisions based on predefined operational rules. For example, an agentic system in a CRM platform might detect a negative sentiment email from a high-value client, automatically query the billing database for recent invoices, cross-reference the support ticketing system for open issues, and draft a comprehensive briefing document for the account manager before sending an alert via Slack.

This architecture requires robust safety guardrails. Because agents can take action without direct human supervision, implementing “human-in-the-loop” approvals for critical actions—such as issuing refunds, sending emails, or modifying database records—is essential. It also demands sophisticated telemetry and logging mechanisms to maintain auditability and track the exact chain of logic that led to a specific autonomous action.
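One way to picture the approval guardrail is a queue that holds critical actions until a person releases them. This is only a sketch under stated assumptions: `CRITICAL_ACTIONS`, `ApprovalQueue`, and the status strings are hypothetical, and a real system would persist the queue and the audit trail rather than keep them in memory.

```python
import logging
from dataclasses import dataclass, field
from typing import Any

logger = logging.getLogger("agent.audit")

# Hypothetical set of actions that must never run without a human decision.
CRITICAL_ACTIONS = {"issue_refund", "send_email", "modify_record"}


@dataclass
class ApprovalQueue:
    pending: list[dict[str, Any]] = field(default_factory=list)

    def submit(self, action: str, params: dict[str, Any], reason: str) -> str:
        """Classify an agent's proposed action and queue it if it is critical."""
        # Record the agent's stated reasoning so the decision chain can be audited later.
        logger.info("agent proposed %s with %s because: %s", action, params, reason)
        if action in CRITICAL_ACTIONS:
            self.pending.append({"action": action, "params": params, "reason": reason})
            return "pending_approval"
        return "auto_approved"

    def approve(self, index: int, reviewer: str) -> dict[str, Any]:
        """A human reviewer releases one queued action so the caller can execute it."""
        item = self.pending.pop(index)
        logger.info("%s approved %s", reviewer, item["action"])
        return item
```

The queue only decides whether an action may proceed; executing it stays in your own backend code, behind the same authorization checks as any other request.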

At Tool1.app, we primarily advocate for and implement the API-Based Orchestration model for our enterprise SaaS clients. It perfectly balances the generative power and flexibility of the AI with the rigid security and compliance requirements of modern enterprise software.

Core Engineering Patterns: A Practical Implementation

To illustrate these concepts practically, we will construct the foundational logic for a SaaS Copilot using Python. This implementation serves as an applied OpenAI Assistants API tutorial, utilizing the modern Conversations and Responses patterns to demonstrate how persistent memory and tool calling operate in tandem. We will build an AI financial analyst embedded within a B2B SaaS platform that can answer user queries and dynamically pull live billing metrics from a secure internal PostgreSQL database.

Step 1: Initializing the Environment and Conversation State

The first step is establishing a secure connection to the API and initializing a persistent state. Instead of managing complex run loops and constantly polling for status updates, we generate a durable conversation object the moment a user initiates a new support ticket or chat session.

```python
import os
import json

from openai import OpenAI

# Initialize the client with environment variables to ensure secrets are kept out of source control
client = OpenAI(api_key=os.environ.get("OPENAI_API_KEY"))


def initialize_user_session(user_id: str) -> str:
    """
    Creates a new persistent conversation object.

    In a production SaaS environment, you would store conversation.id in your database
    mapped to the specific user's session ID and tenant ID.
    """
    conversation = client.conversations.create()
    print(f"Initialized secure conversation session: {conversation.id} for user: {user_id}")
    return conversation.id


# Example usage during a user login or chat initialization event
active_conversation_id = initialize_user_session("usr_99824")
```

By storing this active_conversation_id in your application’s database (e.g., Redis or PostgreSQL), you can instantly resume the user’s context even if they close their browser and return three days later.
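As a rough illustration of that mapping, the sketch below keeps one conversation ID per tenant and user. It uses SQLite from the standard library purely so the example is self-contained; the table name, column names, and helper functions are assumptions for this example, and the same shape carries over to PostgreSQL or Redis.

```python
import sqlite3
from typing import Optional


def open_session_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open the store and create the mapping table if it does not exist yet."""
    db = sqlite3.connect(path)
    db.execute(
        """
        CREATE TABLE IF NOT EXISTS copilot_sessions (
            tenant_id TEXT NOT NULL,
            user_id TEXT NOT NULL,
            conversation_id TEXT NOT NULL,
            PRIMARY KEY (tenant_id, user_id)
        )
        """
    )
    return db


def save_conversation(db: sqlite3.Connection, tenant_id: str, user_id: str, conversation_id: str) -> None:
    """Insert or overwrite the conversation ID for this tenant and user."""
    db.execute(
        "INSERT OR REPLACE INTO copilot_sessions VALUES (?, ?, ?)",
        (tenant_id, user_id, conversation_id),
    )
    db.commit()


def load_conversation(db: sqlite3.Connection, tenant_id: str, user_id: str) -> Optional[str]:
    """Return the stored conversation ID, or None if the user has no session yet."""
    row = db.execute(
        "SELECT conversation_id FROM copilot_sessions WHERE tenant_id = ? AND user_id = ?",
        (tenant_id, user_id),
    ).fetchone()
    return row[0] if row else None
```

Keying on the tenant as well as the user means a lookup scoped to one tenant cannot return a conversation that belongs to another.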

Step 2: Defining Custom Tools and Strict Schemas

The true power of a Copilot lies in its ability to take action. Language models cannot access your live SaaS data natively; they are isolated reasoning engines. To bridge this gap, you must provide the model with a precise JSON schema defining what tools are available, what they do, and exactly what data parameters they require to function. This is known as Function Calling.

With the advent of Structured Outputs, developers can now enforce strict adherence to these schemas, eliminating the historical issue where models would occasionally hallucinate parameter names or return invalid JSON structures. Here, we define a custom tool that allows the AI to request live revenue data for a specific account.

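```python
# Define the tools available to the Copilot Orchestrator.
# Function tools in the Responses API use a flat shape, with the name and
# parameters at the top level. The description string here is illustrative.
tools = [
    {
        "type": "function",
        "name": "get_account_billing_status",
        "description": "Retrieve the current billing status for a customer account.",
        "strict": True,
        "parameters": {
            "type": "object",
            "properties": {"account_id": {"type": "string"}},
            "required": ["account_id"],
            "additionalProperties": False,
        },
    }
]
```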

Step 3: Executing the Orchestration Loop

When a user asks a natural language question, the Orchestrator must send this input, along with the defined tools schema, to the API. The AI evaluates the prompt and determines whether it can answer the question using its internal knowledge or if it needs to invoke a tool.

If the user asks, “What is the current billing status for account ACC-8492, and are they behind on payments?”, the API will not return a plain text answer. Instead, it recognizes that to fulfill the request, it must first use the get_account_billing_status tool. It will return a payload indicating a tool call is required, complete with the parsed arguments.

Your application server must intercept this response, run the actual internal Python function against your secure database, and send the raw JSON data back to the model for final synthesis.

```python
def handle_user_query(user_input: str, conversation_id: str) -> str:
    """
    Orchestrates the back-and-forth communication between the user,
    the internal database, and the AI model.
    """
    # 1. The Orchestrator sends the user query and the tool schema to the AI
    response = client.responses.create(
        model="gpt-4o",
        input=[{"role": "user", "content": user_input}],
        conversation=conversation_id,
        tools=tools,
    )

    # 2. Intercept and handle the model's tool calls. response.output is a list
    # of items; a tool request is an item whose type is "function_call".
    tool_outputs = []
    for item in response.output:
        if item.type == "function_call" and item.name == "get_account_billing_status":
            # Extract the arguments the AI parsed from the user's natural language
            args = json.loads(item.arguments)
            target_account = args.get("account_id")

            # --- SECURITY BOUNDARY ---
            # Execute your secure internal logic here. The AI has NO direct database access.
            # In production: Verify the user has RBAC permissions to view 'target_account'
            print(f"Executing internal database query for {target_account}...")

            # Simulated database response:
            db_result = {
                "status": "Past Due",
                "balance_due_eur": 450.00,
                "tier": "Enterprise",
                "last_payment_date": "2025-12-15",
            }
            tool_outputs.append({
                "type": "function_call_output",
                "call_id": item.call_id,
                "output": json.dumps(db_result),
            })

    # Fallback if no tool was called
    if not tool_outputs:
        return response.output_text

    # 3. Return the factual data back to the model to generate the final human-readable text
    final_response = client.responses.create(
        model="gpt-4o",
        conversation=conversation_id,
        input=tool_outputs,
        tools=tools,
    )

    # 4. Deliver the synthesized, conversational answer back to the user frontend
    return final_response.output_text


# Simulating the frontend request
frontend_input = "Can you check if account ACC-8492 is behind on their bills?"
answer = handle_user_query(frontend_input, active_conversation_id)
print(f"Copilot: {answer}")
```

This multi-step tool-calling loop forms the robust backbone of any sophisticated SaaS Copilot. By abstracting the database layer securely behind your proprietary application logic, you empower the AI to behave intelligently without compromising systemic security or bypassing your application’s authorization protocols.

Advanced Integration: Retrieval-Augmented Generation and File Handling

Beyond calling discrete functions, modern SaaS platforms often require AI to synthesize information from massive repositories of unstructured data—such as PDF manuals, historical support tickets, or expansive knowledge bases. This is accomplished through Retrieval-Augmented Generation (RAG).

While OpenAI provides built-in File Search and Vector Store tools, relying blindly on default settings often yields suboptimal results for enterprise use cases. When a developer uploads a file to an OpenAI vector store, the document is automatically parsed and split into “chunks.” If these chunks are split arbitrarily—for instance, slicing a paragraph in half with zero contextual awareness—the semantic search ranker may return irrelevant or incomplete data.

Consider a legal SaaS application querying a dense contract. If a critical liability clause is split across two separate vector chunks, the retrieval engine might only inject the first half into the model’s context window. The model, lacking the full picture, could generate a severely inaccurate legal summary.

To mitigate this, sophisticated development teams often build bespoke RAG pipelines. Instead of relying entirely on the built-in file search, they utilize advanced semantic chunking algorithms to process documents locally, preserving header hierarchies and paragraph integrity. These pre-processed chunks are then embedded using models like text-embedding-3-large and stored in a dedicated vector database such as Pinecone or Weaviate. The Copilot is then given a custom tool—e.g., search_internal_knowledge_base—that executes a highly tuned hybrid search (combining dense vector search with sparse keyword search) to retrieve the exact relevant passages before passing them to the AI for synthesis.
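A structure-aware chunker of the kind described above might look roughly like the sketch below. It is a deliberately simple stand-in for a real semantic chunking algorithm: it assumes markdown-style `#` headings and blank-line paragraph breaks, and `Chunk`, `chunk_document`, and the character budget are names and values chosen for this example.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    heading: str
    text: str


def chunk_document(document: str, max_chars: int = 800) -> list[Chunk]:
    """Group whole paragraphs into chunks and tag each chunk with its section heading.

    Paragraphs are never split, so a single paragraph longer than max_chars
    becomes its own oversized chunk rather than being cut in half.
    """
    chunks: list[Chunk] = []
    heading = ""
    buffer: list[str] = []

    def flush() -> None:
        if buffer:
            chunks.append(Chunk(heading=heading, text="\n\n".join(buffer)))
            buffer.clear()

    for block in document.split("\n\n"):
        block = block.strip()
        if not block:
            continue
        if block.startswith("#"):
            flush()  # close the previous section before switching headings
            heading = block.lstrip("#").strip()
            continue
        current_size = sum(len(paragraph) for paragraph in buffer)
        if buffer and current_size + len(block) > max_chars:
            flush()
        buffer.append(block)
    flush()
    return chunks
```

Because each chunk carries its heading, a retrieved liability paragraph arrives in the prompt labelled with the section it came from, which helps the model interpret it even when neighbouring paragraphs landed in a different chunk.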

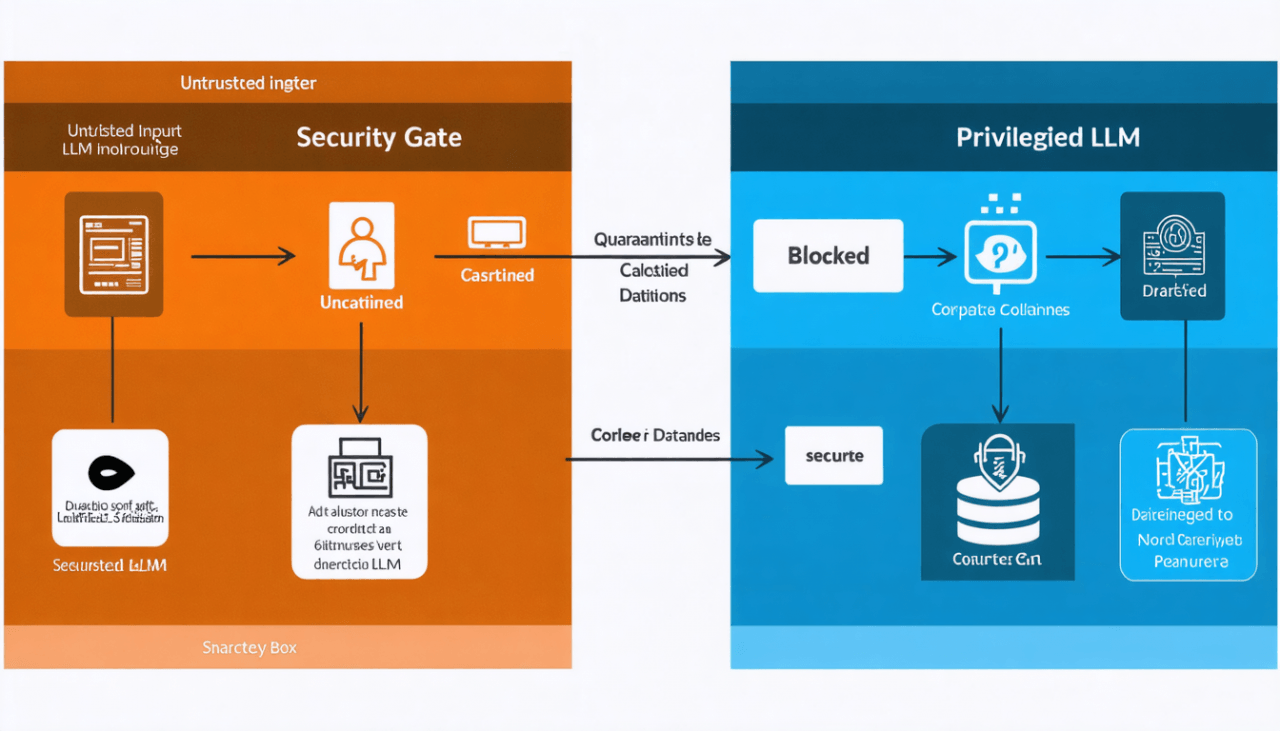

Security, Privacy, and Zero-Trust Architecture

When integrating AI into enterprise software, functionality is entirely secondary to data security. SaaS applications process a wealth of highly sensitive information, from Personally Identifiable Information (PII) and Protected Health Information (PHI) to confidential financial records and proprietary source code. Pumping raw, unredacted tenant data into an external language model API is a massive compliance violation and a critical breach of customer trust.

Data Masking and Tokenization Vaults

A Zero Trust approach dictates that no external service should be blindly trusted with raw sensitive data. Before any context is sent to the API, it must pass through a strict data masking or tokenization layer within your Orchestrator.

Data masking involves intercepting the outgoing payload and replacing sensitive entities with synthetic but structurally identical placeholders, or with opaque format-preserving tokens. For example, a real customer name like “Jane Doe” is replaced with a randomized string or a generalized placeholder label, and a corporate credit card number is replaced with a vaulted token. The language model performs its reasoning and formatting using these tokens. When the generated response is received, your application intercepts it, maps the tokens back to their original values via a secure in-memory lookup table, and delivers the unredacted text to the user. Provided every sensitive field is detected and masked, the underlying PII never leaves your controlled environment.
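The mask-then-unmask round trip can be sketched as below. This is an illustration under stated assumptions rather than a complete PII solution: the two regular expressions only catch simple email addresses and card-like digit runs, the tokens are opaque labels rather than format-preserving values, and `MaskingVault` is a hypothetical in-memory class where a real deployment would use a secured vault and a proper PII detection step.

```python
import re

# Simplistic patterns for illustration; real detectors need far broader coverage.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


class MaskingVault:
    """In-memory token vault mapping placeholder tokens to original values."""

    def __init__(self) -> None:
        self._token_to_value: dict[str, str] = {}
        self._value_to_token: dict[str, str] = {}

    def _tokenize(self, kind: str, value: str) -> str:
        # Reuse the same token when a value repeats so the model sees consistent references.
        if value not in self._value_to_token:
            token = f"[{kind}_{len(self._token_to_value) + 1}]"
            self._value_to_token[value] = token
            self._token_to_value[token] = value
        return self._value_to_token[value]

    def mask(self, text: str) -> str:
        """Replace detected card numbers and emails before the text leaves the backend."""
        text = CARD_PATTERN.sub(lambda m: self._tokenize("CARD", m.group()), text)
        return EMAIL_PATTERN.sub(lambda m: self._tokenize("EMAIL", m.group()), text)

    def unmask(self, text: str) -> str:
        """Restore original values in the model's response."""
        for token, value in self._token_to_value.items():
            text = text.replace(token, value)
        return text
```

In use, the Orchestrator would call `mask` on every outgoing message and `unmask` on every model response, keeping one vault per request or per conversation so that tokens from different tenants never mix.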

Furthermore, it is critical to use the official API or enterprise offerings rather than consumer-facing web interfaces (like the public ChatGPT interface). Under OpenAI’s API and enterprise terms, customer data is not used to train models by default, and Zero Data Retention can be arranged for eligible endpoints. However, relying solely on contractual promises is insufficient; masking or tokenizing sensitive fields before they leave your infrastructure is the only way to ensure the provider never receives them in the first place.

At Tool1.app, we implement robust, layered security frameworks for our custom software deployments, ensuring that every AI transaction is sanitized, logged, and fully compliant with the highest enterprise security standards.

Navigating the 2026 Regulatory Landscape: The EU AI Act and GDPR

For SaaS companies operating globally, compliance is rapidly shifting from the confines of the General Data Protection Regulation (GDPR) to the expansive and rigorous requirements of the European Union Artificial Intelligence Act. By 2026, the EU AI Act enforces a strict, risk-based tiered system for all software employing artificial intelligence, fundamentally altering how SaaS platforms must architect their Copilots.

If your SaaS Copilot is deemed a “High-Risk AI System”—a classification that encompasses AI used in biometric identification, critical infrastructure management, recruitment and HR software, or tools that determine access to essential private services like credit scoring—you are subject to stringent obligations before the software can be legally deployed in the European market.

These high-risk obligations mandate:

- Comprehensive Risk Assessments: Execution of detailed Data Protection Impact Assessments (DPIAs) specifically tailored to the AI’s autonomous capabilities.

- Dataset Quality Control: Flawless documentation of the datasets used to formulate system behavior to actively minimize and prevent discriminatory outcomes or algorithmic bias.

- Immutable Activity Logging: Granular logging of all AI activity, tool calls, and prompt evaluations to ensure absolute traceability in the event of an investigative audit.

- Human Oversight: The implementation of mandatory “human-in-the-loop” interfaces for any critical decision-making processes.
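For the activity-logging obligation in the list above, one common way to approximate immutability is a hash chain, in which each entry commits to the one before it. The sketch below is an assumption about how such a log could be built, not a statement of what the regulation prescribes; `AuditLog` is a hypothetical class, and a hash chain makes tampering detectable rather than impossible.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64


class AuditLog:
    """Append-only log where every entry stores the hash of its predecessor."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(previous_hash: str, event: dict) -> str:
        payload = json.dumps(event, sort_keys=True)
        return hashlib.sha256((previous_hash + payload).encode("utf-8")).hexdigest()

    def append(self, event: dict) -> dict:
        """Add an event, chaining it to the hash of the last entry."""
        previous_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry = {
            "event": event,
            "previous_hash": previous_hash,
            "hash": self._digest(previous_hash, event),
        }
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the whole chain; any edited or reordered entry breaks it."""
        previous_hash = GENESIS_HASH
        for entry in self.entries:
            expected = self._digest(previous_hash, entry["event"])
            if entry["previous_hash"] != previous_hash or entry["hash"] != expected:
                return False
            previous_hash = entry["hash"]
        return True
```

Logging each prompt, tool call, and approval decision through a structure like this gives an auditor a way to confirm that the recorded sequence has not been altered after the fact.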

Even for systems classified under “Limited Risk,” transparency remains a stringent legal imperative. The AI Act mandates that developers must ensure end-users are unequivocally aware that they are interacting with an AI system, eliminating any risk of digital deception. Implementing transparent user consent workflows, clearly labeling AI-generated content or summaries with detectable watermarks where technically feasible, and providing users with intuitive controls to opt-out of AI processing are absolute necessities.

Under the intersecting rules of the GDPR, the “Right to Erasure” (Right to be Forgotten) presents a unique technical challenge for AI SaaS platforms. If a user requests their data be deleted, simply removing their profile from your SQL database is insufficient. You must also ensure their data is purged from any vector databases used for RAG, and that their historical conversation threads are permanently deleted from the API provider’s servers. This requires building automated data lifecycle management scripts that synchronize deletion requests across your entire microservice and external API architecture.
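The synchronized deletion described above can be organized as a small coordinator that fans one erasure request out to every system holding the user's data. This is a sketch under stated assumptions: `erase_user_everywhere` and the system names in the usage note are hypothetical, and each deleter would wrap the real call for your SQL database, vector store, or API provider.

```python
from typing import Callable

Deleter = Callable[[str], None]


def erase_user_everywhere(user_id: str, deleters: dict[str, Deleter]) -> dict[str, str]:
    """Run every registered deleter and report a per-system result.

    A failure in one system does not stop the others, so the caller can
    retry only the systems that still hold data.
    """
    results: dict[str, str] = {}
    for system, delete in deleters.items():
        try:
            delete(user_id)
            results[system] = "deleted"
        except Exception as exc:  # record the failure so the request can be retried
            results[system] = f"failed: {exc}"
    return results
```

A caller might register entries such as `"sql_profile"`, `"vector_store"`, and `"provider_conversations"`, then persist the returned report as evidence that the erasure request was completed or is still pending in specific systems.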

Token Economics and ROI Optimization Strategy

A persistent and often underestimated challenge in SaaS AI integration is managing the deceptive nature of token pricing. A single API call may cost a fraction of a cent, which feels negligible during local development and prototyping. However, in a production environment processing tens of thousands of automated interactions per day, every wasted token is multiplied by the full request volume, and inefficient usage can rapidly erode profit margins and turn a profitable SaaS feature into a financial liability.

Tokens represent the fundamental units of text the model processes—roughly equivalent to word fragments. Pricing is heavily dependent on the chosen model tier, the volume of text sent to the model (input tokens), and the volume of text generated by the model (output tokens).

2026 Cost Benchmarks

To understand the financial implications, it is necessary to examine the cost structure of leading models. The following comparison illustrates the dramatic cost differences across OpenAI’s modern model hierarchy, converted to approximate Euros (€) based on 2026 enterprise pricing models per one million tokens.

| Model Tier | Classification | Approximate Input Cost (€ per 1M tokens) | Approximate Output Cost (€ per 1M tokens) | Ideal SaaS Application Use Case |
| --- | --- | --- | --- | --- |
| GPT-5 / o1-pro | Advanced Reasoning | €0.60 – €6.90 | €4.60 – €27.60 | Complex process orchestration, deep proprietary code analysis, high-stakes unstructured data extraction. |
| GPT-4o (Omni) | High-Fidelity Flagship | €1.15 – €2.30 | €4.60 – €6.90 | General customer support chat, multimodal image reasoning, reliable tool calling execution. |
| o3 / o4-mini | Efficient Reasoning | €0.50 – €0.92 | €2.00 – €3.68 | Mid-tier logic tasks, data formatting, standard text summarization. |
| GPT-4o-mini | Ultra-Low Cost | €0.07 | €0.28 | Massive-volume RAG background tasks, fast semantic routing, real-time typing completion. |

The Compounding Cost of Inefficiency

Consider a seemingly simple customer support Copilot that utilizes RAG to retrieve relevant documentation to help answer user queries. A standard, unoptimized workflow involves:

- Sending a detailed system prompt defining the AI’s persona and constraints (approx. 500 tokens).

- Fetching and attaching several long articles from the knowledge base as context (approx. 2,500 tokens).

- Inserting the user’s specific conversational question (150 tokens).

- Generating a detailed, formatted response (400 tokens).

This results in a total of 3,150 input tokens and 400 output tokens, or 3,550 tokens processed for a single support ticket. If your application processes 10,000 tickets a month (31.5 million input tokens and 4 million output tokens) using a flagship model like GPT-4o, the cost at the rates listed above is roughly €55 to €100 per month. However, if a development team mistakenly routes these standard queries to a premium reasoning model priced at the top of the advanced-reasoning range, that same workload costs roughly €330 per month, and “pro” tiers priced above that range cost more still.
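The arithmetic behind an estimate like this is easy to reproduce, which makes it worth scripting before committing to a model tier. The sketch below uses the lower and upper bounds of the GPT-4o row in the table above as assumed rates; `monthly_cost_eur` is a hypothetical helper, and the result shifts with whichever rates you plug in.

```python
def monthly_cost_eur(
    tickets: int,
    input_tokens_per_ticket: int,
    output_tokens_per_ticket: int,
    input_rate_per_million: float,
    output_rate_per_million: float,
) -> float:
    """Monthly cost in euros for a fixed per-ticket token profile."""
    total_input = tickets * input_tokens_per_ticket
    total_output = tickets * output_tokens_per_ticket
    return (
        total_input * input_rate_per_million
        + total_output * output_rate_per_million
    ) / 1_000_000


INPUT_TOKENS = 500 + 2_500 + 150  # system prompt + retrieved articles + user question
OUTPUT_TOKENS = 400

# Assumed rates: bounds of the GPT-4o row in the benchmark table.
low = monthly_cost_eur(10_000, INPUT_TOKENS, OUTPUT_TOKENS, 1.15, 4.60)
high = monthly_cost_eur(10_000, INPUT_TOKENS, OUTPUT_TOKENS, 2.30, 6.90)
print(f"GPT-4o estimate: EUR {low:.2f} to EUR {high:.2f} per month")
```

At those bounds the workload comes to roughly €55 to €100 per month. Input tokens account for most of the bill in this profile, which is why trimming retrieved context tends to save more than shortening responses.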

Strategies for Architectural Optimization

To protect your operational margins without sacrificing the quality of the AI output, engineering teams must implement aggressive token optimization strategies:

1. Aggressive RAG Chunking and Truncation: Do not pass entire documents into the context window. As discussed earlier, utilize advanced vector databases to parse documents into highly granular chunks. Retrieve and inject only the top two or three most semantically relevant paragraphs into the prompt. Setting tight retrieval caps is the fastest way to reduce input token bloat.

2. Dynamic Model Routing: Not every query requires advanced cognitive reasoning. Implement a lightweight semantic router—often a small, highly tuned classification model—that assesses the complexity of an incoming prompt before sending it to OpenAI. Route simple FAQ retrieval queries or basic formatting tasks to highly efficient models like gpt-4o-mini. Reserve the expensive cognitive power of top-tier models exclusively for complex, multi-tool analytical workflows that require deep reasoning.

3. Prompt Caching Utilization: Modern APIs offer significant discounts for cached input tokens. By structuring your SaaS application to reuse identical system prompts, standardized tool schemas, and common contextual guidelines across thousands of requests, you can drastically reduce the cost of redundant input processing. Ensure your system instructions are front-loaded and static, appending dynamic user data only at the very end of the prompt payload to maximize cache hit rates.
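The three strategies above can be combined in a thin layer in front of the API call. The following sketch makes several simplifying assumptions: the keyword list in `COMPLEX_HINTS` is a crude stand-in for the trained classifier the text describes, the model names are the ones used elsewhere in this guide, and `choose_model` and `build_input` are hypothetical helpers.

```python
STATIC_SYSTEM_PROMPT = "You are a support Copilot. Answer only from the provided context."

# Hypothetical keyword heuristic standing in for a trained routing classifier.
COMPLEX_HINTS = ("analyze", "compare", "forecast", "reconcile")


def choose_model(user_query: str, needs_tools: bool) -> str:
    """Send tool-using or analytical queries to the larger model, the rest to the small one."""
    query = user_query.lower()
    if needs_tools or any(hint in query for hint in COMPLEX_HINTS):
        return "gpt-4o"
    return "gpt-4o-mini"


def build_input(
    user_query: str, retrieved_chunks: list[str], max_chunks: int = 3
) -> list[dict[str, str]]:
    """Put the static prompt first and cap the retrieved context.

    Keeping the unchanging system prompt at the front gives prompt caching an
    identical prefix to reuse; the dynamic context and question come last.
    """
    context = "\n\n".join(retrieved_chunks[:max_chunks])
    return [
        {"role": "system", "content": STATIC_SYSTEM_PROMPT},
        {"role": "user", "content": f"Context:\n{context}\n\nQuestion: {user_query}"},
    ]
```

The retrieval cap and the routing rule are the two values worth tuning against real traffic, since they trade answer quality directly against input token volume and model price.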

At Tool1.app, our engineering teams conduct rigorous token audits for all custom software development projects. We implement semantic routing and caching layers that ensure our clients’ AI integrations operate at peak financial efficiency from day one, turning AI from a cost center into a sustainable revenue driver.

The Future of SaaS is Agentic

Integrating an AI Copilot into your SaaS application is no longer a peripheral feature; it is a transformative endeavor that completely redefines how users interact with your software. The evolution from manual context management to sophisticated, durable conversation architectures allows development teams to focus their energy on high-value business logic rather than algorithmic state tracking and token counting.

However, success in this highly competitive space requires immense technical rigor. Mastering the nuances of secure tool calling, building impenetrable data masking layers to satisfy evolving regulations like the EU AI Act, and continuously optimizing token economics are the factors that separate robust, enterprise-grade AI platforms from fragile, costly prototypes. As the ecosystem accelerates toward more autonomous, agentic frameworks—where AI operates independently in the background to solve complex problems—laying a solid, secure, and financially viable architectural foundation today is paramount to long-term scalability.

Ready to Build Your SaaS Copilot?

Navigating the complexities of secure LLM integration, custom orchestrators, vector database management, and rigorous data compliance requires specialized, battle-tested expertise. Attempting to bolt an AI layer onto a legacy codebase without a proper architectural strategy often leads to security vulnerabilities and runaway cloud costs.

Want an AI copilot in your SaaS product? Tool1.app delivers seamless OpenAI integrations, advanced Python automations, and scalable web architectures tailored to your exact business needs. We bridge the gap between cutting-edge artificial intelligence and rock-solid enterprise software engineering. Contact Tool1.app today to schedule a consultation, and let us discuss how we can engineer an intelligent, secure, and highly cost-effective AI solution that drives tangible, compounding value for your users.
