Scaling NestJS to 1 Million Users: A Microservices Architecture Guide

Table of Contents

- The Monolith Bottleneck and the Distributed Imperative

- Executing the Migration: The Strangler Fig Pattern

- Establishing the Core Configuration of NestJS Microservices

- Selecting the Right Message Broker: RabbitMQ vs. Apache Kafka

- Advanced Data Architecture and the CQRS Pattern

- Database Sharding and Horizontal Scaling Strategies

- Ensuring Observability: Distributed Tracing with OpenTelemetry

- Cloud Economics and the Financial Reality of Massive Scale

- Architecting for Operational Resilience


Handling one million active users represents a profound watershed moment for any digital product. At this operational scale, the fundamental constraints of monolithic software architectures and single-threaded execution environments become aggressively apparent. While the Node.js runtime offers exceptional non-blocking I/O capabilities, a single monolithic codebase attempting to process thousands of concurrent requests will eventually succumb to event loop saturation, memory heap exhaustion, and debilitating deployment friction.

Transitioning to a NestJS microservices architecture provides a robust, heavily structured, and future-proof blueprint. NestJS, leveraging an Angular-inspired modularity system, out-of-the-box TypeScript support, and elegant dependency injection, is specifically engineered to untangle the complexities of distributed systems. It seamlessly abstracts the transport layer, allowing engineering teams to pivot between TCP, RabbitMQ, Apache Kafka, or gRPC without requiring fundamental rewrites of core business logic.

This comprehensive technical guide breaks down the architectural strategies, messaging protocols, database scaling patterns, and cloud economics required to successfully scale a NestJS application to one million users and beyond.

The Monolith Bottleneck and the Distributed Imperative

Before the popularization of cloud-native paradigms, monolithic architectures were the undeniable standard for backend development. A single, unified codebase encapsulated user interfaces, business logic, data access layers, and background processing. While monoliths are highly efficient for rapid prototyping, early-stage growth, and teams with limited operational bandwidth, they introduce severe operational hazards at massive scale.

The primary danger of a monolithic architecture is its expansive “blast radius.” In a tightly coupled system, a memory leak in a peripheral PDF generation module can crash the entire payment processing engine, because all components share the same memory space and runtime environment. Scaling a monolith is also fundamentally inefficient. If an application experiences a massive surge in authentication requests, the infrastructure team must replicate the entire monolithic application across multiple server instances, wasting compute resources on modules (like user settings or email parsing) that do not need additional capacity.

A NestJS microservices architecture resolves these systemic bottlenecks by dividing the overarching application into discrete bounded contexts. Each microservice acts as a self-contained unit strictly responsible for a single business domain, such as order processing, user management, or push notifications. This strict isolation ensures that if a third-party API brings down the notification service, the core e-commerce checkout flow remains fully operational. Furthermore, microservices unlock absolute technology freedom. A CPU-intensive data aggregation service can be written in Rust or Go, while the high-concurrency API gateway and business logic layers remain entirely within the NestJS ecosystem.

When evaluating infrastructure modernization, the architectural team at Tool1.app consistently notes that the primary catalyst for migrating away from a monolith is not merely performance degradation, but rather deployment friction. Large teams working on a single codebase experience constant merge conflicts, slow testing pipelines, and an inherent fear of deploying, which stifles innovation and severely lengthens the time-to-market for new features.

Executing the Migration: The Strangler Fig Pattern

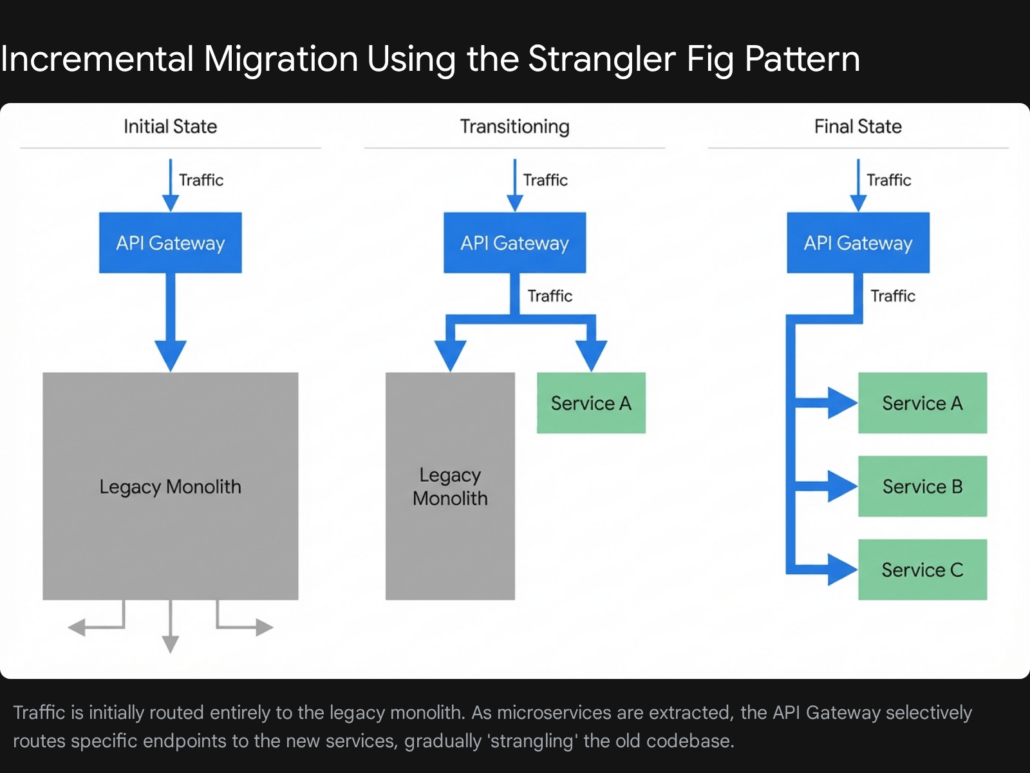

Rewriting a massive monolithic application from scratch in a secluded “version two” branch is a notoriously dangerous endeavor that almost inevitably leads to project paralysis and failure. The most successful, risk-averse transition strategy is the Strangler Fig pattern. This architectural approach takes its inspiration from a species of tree that naturally seeds in the upper branches of a host tree and slowly grows roots down to the ground, eventually enveloping and replacing the original structure entirely.

Instead of executing a high-risk “big bang” deployment where the entire system is swapped overnight, the Strangler Fig pattern advocates for an incremental, methodical migration. An advanced API Gateway or an ingress proxy is placed directly in front of the existing monolithic application. As the business dictates the need for new features, or as specific legacy domains become unscalable, they are built or extracted as independent NestJS microservices.

The proxy intelligently intercepts all incoming HTTP requests. If a user attempts to access the legacy /api/legacy-checkout route, the gateway seamlessly forwards the request to the underlying monolith. However, if the user accesses the newly architected /api/users route, the gateway routes the traffic to the newly provisioned NestJS user microservice. Over time, as more functional domains are extracted from the monolith, the older system is gradually “strangled” of its responsibilities. The legacy codebase shrinks until it can be safely decommissioned without ever causing a widespread system outage.
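The routing decision at the heart of the pattern can be sketched as a small lookup. This is a minimal illustration, not a production gateway: the upstream URLs and the `resolveUpstream` helper are hypothetical names, and a real deployment would usually express the same table in the proxy's own configuration.

```typescript
// Hypothetical upstream addresses; substitute the real service locations.
const MONOLITH_URL = 'http://legacy-monolith.internal:8080';

// Route prefixes that have already been extracted from the monolith.
const extractedRoutes: Record<string, string> = {
  '/api/users': 'http://user-service.internal:3001',
};

// Returns the upstream that should serve the given request path.
function resolveUpstream(path: string): string {
  for (const [prefix, target] of Object.entries(extractedRoutes)) {
    // Match the prefix itself or any sub-path, but not look-alikes such as /api/users-export.
    if (path === prefix || path.startsWith(prefix + '/')) {
      return target;
    }
  }
  // Anything not yet migrated falls through to the legacy application.
  return MONOLITH_URL;
}
```

Each time a domain is extracted, one entry is added to `extractedRoutes`; when the table covers every route, the fallback to the monolith is no longer exercised and the legacy system can be retired.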

Establishing the Core Configuration of NestJS Microservices

NestJS provides a native, highly sophisticated @nestjs/microservices package that dramatically simplifies distributed communication. Rather than tightly coupling the application logic to a specific, hardcoded message queue API, NestJS leverages clean TypeScript decorators that effectively abstract the underlying transport layer.

To instantiate a microservice, the traditional HTTP-based NestFactory.create() initialization method is bypassed. Instead, the application utilizes the NestFactory.createMicroservice() method, defining the specific transport strategy required for the given node.

```typescript
import { NestFactory } from '@nestjs/core';
import { Transport, MicroserviceOptions } from '@nestjs/microservices';
import { AppModule } from './app.module';

async function bootstrap() {
  const app = await NestFactory.createMicroservice<MicroserviceOptions>(
    AppModule,
    {
      transport: Transport.TCP,
      options: {
        host: '127.0.0.1',
        port: 8877,
      },
    },
  );
  await app.listen();
}

bootstrap();
```

Within this distributed architecture, communication patterns are broadly divided into two primary operational categories:

Synchronous Request-Response: In this pattern, the calling client sends a specific message to another microservice and explicitly waits for a response before proceeding. NestJS manages this complexity under the hood by dynamically creating two logical channels—one for transmitting the initial data and a secondary return channel to capture the response. Developers implement this by utilizing the @MessagePattern() decorator within their controller classes.

Asynchronous Event-Based Messaging: Conversely, the event-based pattern is used when a microservice simply needs to publish a notification that a specific event has occurred, without waiting for a downstream service to reply. This “fire-and-forget” approach is critical for maintaining high throughput: the publisher's response time no longer depends on how quickly, or whether, downstream services process the event. Handlers are registered with the @EventPattern() decorator, and multiple distinct microservices can subscribe to and react to the same event in parallel.
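The difference between the two styles is easier to see in a dependency-free sketch. The `InMemoryBus` class below is purely illustrative and is not the NestJS transport; in NestJS the caller side corresponds to `ClientProxy.send()` and `ClientProxy.emit()`, and the handler side to `@MessagePattern()` and `@EventPattern()`.

```typescript
type Handler = (payload: unknown) => unknown | Promise<unknown>;

class InMemoryBus {
  private readonly requestHandlers = new Map<string, Handler>();
  private readonly eventHandlers = new Map<string, Handler[]>();

  // Request-response: one handler per pattern, and it must produce a reply.
  onMessage(pattern: string, handler: Handler): void {
    this.requestHandlers.set(pattern, handler);
  }

  // Event-based: any number of subscribers per pattern, no reply expected.
  onEvent(pattern: string, handler: Handler): void {
    const handlers = this.eventHandlers.get(pattern) ?? [];
    handlers.push(handler);
    this.eventHandlers.set(pattern, handlers);
  }

  // The caller awaits the reply, so its latency includes the handler's work.
  async send(pattern: string, payload: unknown): Promise<unknown> {
    const handler = this.requestHandlers.get(pattern);
    if (!handler) throw new Error(`No handler for pattern "${pattern}"`);
    return handler(payload);
  }

  // The caller returns immediately; subscribers run later and independently.
  emit(pattern: string, payload: unknown): void {
    for (const handler of this.eventHandlers.get(pattern) ?? []) {
      Promise.resolve()
        .then(() => handler(payload))
        .catch((err) => console.error(`Handler for "${pattern}" failed:`, err));
    }
  }
}
```

Note that a failing subscriber never reaches the publisher in the event-based path. That is the source of the decoupling, and also the reason real brokers add acknowledgments and retries.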

Selecting the Right Message Broker: RabbitMQ vs. Apache Kafka

When preparing an infrastructure to scale to one million users, relying on direct HTTP/REST communication between internal microservices rapidly becomes a critical anti-pattern. Synchronous HTTP calls inherently create tight architectural coupling; if the receiving inventory service experiences downtime, the requesting order service will time out and fail, potentially triggering cascading failures across the entire platform. Instead, asynchronous communication facilitated by robust message brokers guarantees fault tolerance, enables seamless load leveling, and ensures a truly decoupled ecosystem.

The two dominant message brokers utilized in modern enterprise architectures are RabbitMQ and Apache Kafka. Choosing the correct broker between the two is a defining architectural decision that dictates the scalability ceiling of the entire system.

RabbitMQ: The Intelligent Routing Broker

RabbitMQ operates on a well-established “smart broker, dumb consumer” model. It is a highly versatile, general-purpose message broker that excels at complex message routing, background job processing, and scenarios where each task should be handed to a single worker and removed from the queue once it has been acknowledged. Because a message can be redelivered if a consumer crashes before acknowledging it, handlers should be idempotent.

RabbitMQ actively manages the state of the messages, retaining them in queues and actively pushing them to connected consumer microservices. Once a NestJS consumer successfully processes the payload and issues an explicit acknowledgment back to the broker, RabbitMQ permanently deletes the message. It provides exceptionally granular routing capabilities through a system of exchanges and bindings, empowering developers to route traffic based on intricate headers, exact matches, or complex wildcard topic patterns.
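To make the wildcard topic patterns concrete, the sketch below reimplements the matching rule of an AMQP topic exchange in plain TypeScript: routing keys are dot-separated words, `*` matches exactly one word, and `#` matches zero or more words. The `topicMatches` function is a hypothetical helper for illustration only; the broker performs this matching itself.

```typescript
// Returns true when an AMQP topic binding pattern matches a routing key.
function topicMatches(pattern: string, routingKey: string): boolean {
  const p = pattern.split('.');
  const k = routingKey.split('.');

  const match = (pi: number, ki: number): boolean => {
    // Pattern exhausted: succeed only if the key is exhausted too.
    if (pi === p.length) return ki === k.length;
    if (p[pi] === '#') {
      // "#" may absorb zero words (skip it) or one more word (stay on it).
      return match(pi + 1, ki) || (ki < k.length && match(pi, ki + 1));
    }
    if (ki === k.length) return false;
    // "*" matches any single word; otherwise the words must be identical.
    return (p[pi] === '*' || p[pi] === k[ki]) && match(pi + 1, ki + 1);
  };

  return match(0, 0);
}
```

With a binding of `order.*.created`, a message keyed `order.eu.created` is delivered, while `order.created` is not, because `*` requires exactly one word in that position.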

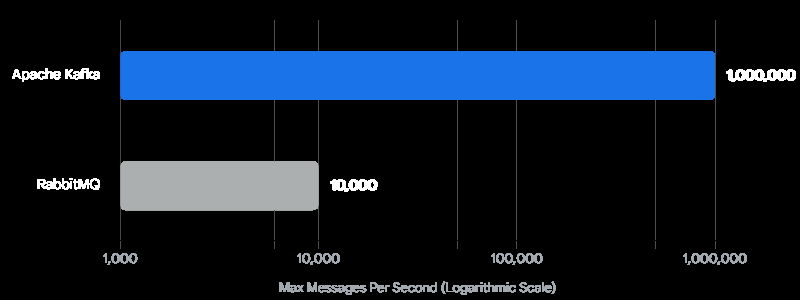

However, RabbitMQ has performance limitations inherent to its design. As a rough ballpark, a typical deployment processes between 4,000 and 10,000 messages per second, although the real number depends heavily on message size, persistence settings, acknowledgment mode, and cluster topology. While this capacity is more than sufficient for the vast majority of standard applications, it can become a severe bottleneck when an application reaches one million highly active users generating massive volumes of real-time telemetry, live chat messages, or continuous financial transaction logs.

Configuring a NestJS microservice to connect to a RabbitMQ cluster requires precise queue and durability options:

```typescript
import { NestFactory } from '@nestjs/core';
import { Transport, MicroserviceOptions } from '@nestjs/microservices';
import { AppModule } from './app.module';

async function bootstrap() {
  const app = await NestFactory.createMicroservice<MicroserviceOptions>(AppModule, {
    transport: Transport.RMQ,
    options: {
      urls: ['amqp://localhost:5672'],
      queue: 'notification_queue',
      // Required for manual channel.ack()/nack(); NestJS defaults to automatic acknowledgment
      noAck: false,
      queueOptions: {
        // The queue survives broker restarts (messages must also be published as persistent)
        durable: true,
      },
    },
  });
  await app.listen();
}

bootstrap();
```

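To consume messages via RabbitMQ within a NestJS controller, developers inject the context and acknowledge each message manually, a critical practice for ensuring no data is lost during sudden container crashes. Manual acknowledgment only takes effect when the transport is configured with noAck: false; in the default automatic mode the broker treats a message as handled as soon as it is delivered: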

```typescript
import { Controller } from '@nestjs/common';
import { Ctx, EventPattern, Payload, RmqContext } from '@nestjs/microservices';

@Controller()
export class NotificationController {
  @EventPattern('user_created')
  async handleUserCreated(
    @Payload() data: { userId: string },
    @Ctx() context: RmqContext,
  ) {
    const channel = context.getChannelRef();
    const originalMsg = context.getMessage();

    try {
      // Process the heavy notification logic here
      console.log(`Processing notification for user: ${data.userId}`);

      // Manually acknowledge the message upon successful completion
      channel.ack(originalMsg);
    } catch (error) {
      // Reject and requeue (third argument) so the message is retried;
      // route to a dead-letter queue instead if the failure may be permanent
      channel.nack(originalMsg, false, true);
    }
  }
}
```

Apache Kafka: The High-Throughput Distributed Log

For enterprise systems demanding unprecedented scale—such as real-time analytics dashboards, immense stream processing platforms, or high-frequency automated trading applications—Apache Kafka is the undisputed industry standard. Unlike RabbitMQ, Kafka operates on a distinct “dumb broker, smart consumer” architectural model.

Instead of relying on traditional memory-heavy queues, Kafka functions as an append-only distributed commit log. It leverages sequential disk I/O, a storage access method that writes data contiguously across physical storage sectors. Sequential I/O is far faster than the random disk access patterns typical of relational databases or traditional message queues. This design allows a properly tuned and partitioned Kafka cluster to ingest and process over one million messages per second.

Crucially, Kafka does not delete messages immediately after they are consumed. Messages remain durably stored within the distributed log according to a pre-configured retention policy, whether that is seven days, thirty days, or indefinitely. Instead of the broker tracking who has read what, each consumer group tracks its own reading progress by maintaining an “offset” value. This architecture allows entirely new NestJS microservices to be deployed into production and “replay” historical events from the past month to rebuild their internal state. Classic RabbitMQ queues cannot offer this, because they delete each message once it has been acknowledged.
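The offset mechanism can be illustrated with a small in-memory model. This is a deliberately simplified sketch, assuming a single partition and no retention limit; `TopicLog` and its methods are hypothetical names and not part of any Kafka client library.

```typescript
interface LogRecord {
  offset: number;
  value: string;
}

// An append-only log: records are never removed when they are read.
class TopicLog {
  private readonly records: LogRecord[] = [];
  // Committed position per consumer group: the next offset that group will read.
  private readonly committed = new Map<string, number>();

  append(value: string): number {
    const offset = this.records.length;
    this.records.push({ offset, value });
    return offset;
  }

  // Returns up to `max` unread records for the group without advancing its position.
  // A group with no committed offset starts from the beginning of the log.
  poll(groupId: string, max = 100): LogRecord[] {
    const from = this.committed.get(groupId) ?? 0;
    return this.records.slice(from, from + max);
  }

  // The consumer, not the broker, decides when progress is recorded.
  commit(groupId: string, nextOffset: number): void {
    this.committed.set(groupId, nextOffset);
  }
}
```

A service deployed months later simply polls with a new group ID and receives every retained record from offset 0, which is the replay behavior described above. Committing only after successful processing means a crash leads to reprocessing rather than data loss.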

Configuring a robust Kafka consumer within NestJS requires assigning appropriate client identifiers and consumer group IDs. The group ID is critical: Kafka divides a topic's partitions among the instances that share a group ID, so each message is processed by only one instance when the NestJS microservice is scaled horizontally across multiple Docker containers.

```typescript
import { NestFactory } from '@nestjs/core';
import { Transport, MicroserviceOptions } from '@nestjs/microservices';
import { AppModule } from './app.module';

async function bootstrap() {
  const app = await NestFactory.createMicroservice<MicroserviceOptions>(AppModule, {
    transport: Transport.KAFKA,
    options: {
      client: {
        clientId: 'billing-engine-client',
        brokers: ['kafka-broker-1:9092', 'kafka-broker-2:9092'],
      },
      consumer: {
        groupId: 'billing-consumer-group',
      },
      run: {
        autoCommit: false, // Disable auto-commit for explicit error handling
      },
    },
  });
  await app.listen();
}

bootstrap();
```

To provide absolute clarity on the architectural capabilities of both technologies, the following comparison highlights the core distinctions:

| Feature Dimension | RabbitMQ | Apache Kafka |
| --- | --- | --- |
| Primary Architecture | Smart Broker, Dumb Consumer | Dumb Broker, Smart Consumer |
| Peak Throughput | ~4,000 to 10,000 messages/sec | Up to 1,000,000+ messages/sec |
| Message Persistence | Deleted upon acknowledgment | Retained based on time/size policy |
| Routing Flexibility | High (Complex Exchanges, Bindings) | Low (Direct routing to Topics) |
| Historical Replay | Not natively supported | Natively supported via offsets |
| Optimal Use Case | Task queues, complex routing logic | Massive event streaming, data pipelines |

For enterprise applications aiming to reliably support one million active users, architecture teams generally implement a highly resilient hybrid approach. Kafka is deployed as the central nervous system, handling all high-throughput domain events and maintaining an immutable ledger of system state. Concurrently, RabbitMQ is utilized alongside it to execute targeted, lower-latency background jobs like PDF generation or targeted email dispatching.

Advanced Data Architecture and the CQRS Pattern

A microservices architecture is fundamentally flawed if the engineering team simply points all newly created microservices to a single, massive monolithic database. A shared database immediately reintroduces the tight architectural coupling the team was trying to escape, creates a catastrophic single point of failure, and guarantees severe database lock contention under high transactional load. The golden rule of microservices is strict data sovereignty: each service must exclusively own and manage its own database instance.

However, decentralized data storage introduces highly complex querying challenges. If a client application needs to render a comprehensive dashboard showing a user’s profile information, their recent historical orders, and their real-time billing status, the API Gateway must independently query three entirely separate microservices. The gateway must then perform complex, memory-intensive data aggregations before returning the finalized response payload to the client. Over thousands of concurrent requests, this synchronous scattering and gathering process creates massive latency spikes.

To resolve this pervasive distributed systems issue, engineering teams rely heavily on the CQRS (Command Query Responsibility Segregation) pattern. CQRS dictates that the models used to update data (Commands) should be strictly separated from the models used to read data (Queries).

Engineers at Tool1.app frequently implement the CQRS pattern to ensure that highly complex, multi-table analytical read queries do not block or degrade the performance of high-throughput transactional write operations.

In a highly scaled CQRS setup:

- Commands (Writes): Operations that alter system state (Create, Update, Delete) are routed exclusively to a Write Database. This database is typically a fully normalized relational database engine (such as PostgreSQL) optimized for strict ACID compliance and fast, localized transactions.

- Queries (Reads): Operations that request data are routed exclusively to a highly optimized Read Database. The Read Database is often a completely denormalized NoSQL document store (such as MongoDB) or a high-speed search index (like Elasticsearch). The data is pre-calculated and stored in the exact format the client application requires, removing the need for slow, computationally expensive database JOIN operations.

When a write operation successfully completes on the Command side, the service immediately publishes a domain event (typically via Kafka) broadcasting that the specific entity’s data has mutated. The Query service listens intently for this exact event and asynchronously updates its read-optimized denormalized database.
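The read-side update described above is usually called a projection. The following is a minimal sketch under stated assumptions: the event shape, the `UserOrderSummary` document, and the function names are all hypothetical, and a `Map` stands in for the read database.

```typescript
// Hypothetical event shape published by the write side.
interface OrderCreatedEvent {
  orderId: string;
  userId: string;
  totalAmount: number;
}

// Denormalized document shaped for the dashboard, stored per user.
interface UserOrderSummary {
  userId: string;
  orderCount: number;
  lifetimeSpend: number;
  lastOrderId: string | null;
}

// A Map stands in for the read database (a document store or search index).
const readStore = new Map<string, UserOrderSummary>();

// Projection: applies one event to the read model.
function applyOrderCreated(event: OrderCreatedEvent): void {
  const current: UserOrderSummary = readStore.get(event.userId) ?? {
    userId: event.userId,
    orderCount: 0,
    lifetimeSpend: 0,
    lastOrderId: null,
  };
  readStore.set(event.userId, {
    ...current,
    orderCount: current.orderCount + 1,
    lifetimeSpend: current.lifetimeSpend + event.totalAmount,
    lastOrderId: event.orderId,
  });
}

// Query side: a single key lookup with no joins.
function getUserOrderSummary(userId: string): UserOrderSummary | undefined {
  return readStore.get(userId);
}
```

Because the read model is updated asynchronously, it is eventually consistent with the write database. This sketch also counts a redelivered event twice, so a production projection would typically deduplicate by event ID.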

NestJS provides a dedicated, lightweight @nestjs/cqrs module to facilitate this pattern. Developers create explicit Command and Query classes, which the module's CommandBus and QueryBus dispatch to dedicated handler classes; events raised by those handlers travel over a separate EventBus.

```typescript
import { CommandHandler, ICommandHandler, EventBus } from '@nestjs/cqrs';

// Hypothetical persistence port; an abstract class can double as a NestJS injection token
export abstract class OrderRepository {
  abstract createOrder(userId: string, totalAmount: number): Promise<{ id: string }>;
}

// Hypothetical event shape consumed by the Read Model handler
export class OrderCreatedEvent {
  constructor(public readonly orderId: string, public readonly userId: string, public readonly totalAmount: number) {}
}

// The explicit definition of the action intent
export class CreateOrderCommand {
  constructor(public readonly userId: string, public readonly totalAmount: number) {}
}

// The isolated business logic that handles the command
@CommandHandler(CreateOrderCommand)
export class CreateOrderHandler implements ICommandHandler<CreateOrderCommand> {
  constructor(
    private readonly repository: OrderRepository,
    private readonly eventBus: EventBus,
  ) {}

  async execute(command: CreateOrderCommand) {
    // 1. Execute strict business validation and persistence logic
    const order = await this.repository.createOrder(command.userId, command.totalAmount);

    // 2. Publish an internal event that the Read Model handler will intercept
    this.eventBus.publish(new OrderCreatedEvent(order.id, command.userId, command.totalAmount));

    return order;
  }
}
```

This clear segregation ensures that if the system is suddenly bombarded with a massive influx of read requests (e.g., users refreshing a product catalog during a major flash sale), only the Query infrastructure needs to scale up. The critical Write infrastructure remains insulated from the read-heavy load, so users attempting to complete a checkout are largely shielded from the resulting latency.

Database Sharding and Horizontal Scaling Strategies

Beyond conceptual architectural patterns, physical data scaling strategies remain paramount. When an application supports one million active users generating thousands of queries per second, even the most robust single database server will eventually exhaust its physical disk I/O, memory limits, and CPU capabilities. While vertical scaling (provisioning larger, vastly more expensive hardware) is a viable initial strategy, it inevitably hits a hard ceiling.

Horizontal scaling at the database layer is achieved through database sharding. Sharding involves systematically partitioning the global dataset across multiple independent database clusters.

For instance, utilizing a List or Range sharding strategy, user data could be distributed geographically. Users originating from the European Union might be stored securely on Database Cluster A, while users originating from North America are routed to Database Cluster B. Alternatively, a Hash sharding algorithm can distribute data evenly across ten different clusters based on a cryptographic hash of the user’s unique ID.
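Both strategies reduce to a function from a record attribute to a shard. The sketch below is illustrative only: the cluster names and function names are hypothetical, and FNV-1a, a non-cryptographic hash, is swapped in to keep the example dependency-free. Even distribution does not require a cryptographic hash, though SHA-256 would serve equally well.

```typescript
// Hypothetical cluster names; a real deployment would map these to connection strings.
const REGION_SHARDS: Record<string, string> = {
  EU: 'cluster-a',
  NA: 'cluster-b',
};

// List sharding: an explicit lookup from a region attribute to a cluster.
function shardForRegion(region: string): string {
  const shard = REGION_SHARDS[region];
  if (!shard) throw new Error(`No shard configured for region "${region}"`);
  return shard;
}

// FNV-1a over UTF-16 code units: a fast, non-cryptographic 32-bit hash.
function fnv1a(input: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return hash >>> 0; // force an unsigned 32-bit result
}

// Hash sharding: the same user ID always maps to the same shard index.
function shardForUser(userId: string, shardCount: number): number {
  return fnv1a(userId) % shardCount;
}
```

One design caveat: with plain modulo hashing, changing `shardCount` remaps most keys, so adding a cluster implies a large data migration. Consistent hashing or a fixed number of logical shards mapped onto physical clusters are common ways to soften that.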

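Object-Relational Mappers (ORMs) commonly used with NestJS, such as Prisma and TypeORM, can connect to multiple databases and read replicas, but they do not shard data on their own. The logic that decides which shard a query targets lives in application code or in a sharding-aware database layer.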

However, implementing database sharding introduces immense, often underestimated operational complexity. Executing a standard SQL JOIN query across data that lives on two entirely different physical servers is immensely slow and technically fraught. Furthermore, maintaining strict ACID transaction integrity across multiple shards requires complex two-phase commit protocols that drastically impact write speeds. Because of this inherent complexity, extensive database sharding should generally only be implemented as a last resort, pursued only after vertical scaling, extensive connection pooling, heavy indexing, and robust read-replicas have been completely exhausted.

To mitigate database load before resorting to sharding, a caching layer is strongly recommended. An in-memory datastore like Redis fundamentally changes the performance profile of a NestJS application. With the Cache-Aside pattern, the application checks Redis for the requested data first. If the data is present (a cache hit), it is returned without touching the database, usually in about a millisecond or less. If absent (a cache miss), the application queries the database, returns the data to the user, and populates the Redis cache for subsequent requests, drastically reducing the total volume of expensive SQL queries hitting the primary database.
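The Cache-Aside flow fits in a single helper. In this sketch the `Cache` interface, the `MemoryCache` stand-in, and `getOrLoad` are hypothetical names chosen for illustration; the in-memory class exists only so the example runs without a Redis server, and a real Redis client would be adapted to the same two-method shape.

```typescript
// Minimal async key-value interface; a Redis client could be wrapped to match it.
interface Cache {
  get(key: string): Promise<string | null>;
  set(key: string, value: string, ttlSeconds: number): Promise<void>;
}

// In-memory stand-in for Redis so the sketch runs without a server.
class MemoryCache implements Cache {
  private readonly entries = new Map<string, { value: string; expiresAt: number }>();

  async get(key: string): Promise<string | null> {
    const entry = this.entries.get(key);
    if (!entry || entry.expiresAt <= Date.now()) {
      this.entries.delete(key); // drop missing or expired entries
      return null;
    }
    return entry.value;
  }

  async set(key: string, value: string, ttlSeconds: number): Promise<void> {
    this.entries.set(key, { value, expiresAt: Date.now() + ttlSeconds * 1000 });
  }
}

// Cache-aside: read the cache first, fall back to the loader on a miss, then populate.
async function getOrLoad<T>(
  cache: Cache,
  key: string,
  ttlSeconds: number,
  load: () => Promise<T>,
): Promise<T> {
  const cached = await cache.get(key);
  if (cached !== null) {
    return JSON.parse(cached) as T; // cache hit
  }
  const fresh = await load(); // cache miss: query the source of truth
  await cache.set(key, JSON.stringify(fresh), ttlSeconds);
  return fresh;
}
```

A call such as `getOrLoad(cache, 'user:42', 60, () => repo.findUser('42'))`, where `repo` is whatever data-access object the service already has, hits the database once and serves later reads from the cache until the TTL expires. The TTL bounds staleness; writes that must be visible immediately should also delete or overwrite the cached key.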

Ensuring Observability: Distributed Tracing with OpenTelemetry

A massive, often overlooked drawback of distributed microservice systems is the total loss of request traceability. In a legacy monolith, diagnosing an error is straightforward: a failed request generates a single, unified stack trace in the application logs.

In a distributed environment, a single user request might traverse an API Gateway, an authentication microservice, a message broker, an inventory service, and finally an order service. If the request ultimately fails, pinpointing the exact microservice responsible, or identifying which specific database query caused a massive latency spike, is nearly impossible without highly specialized observability tooling. The system devolves into a diagnostic murder mystery.

This is precisely where OpenTelemetry and Distributed Tracing become completely non-negotiable requirements for production readiness.

OpenTelemetry serves as the industry-standard, vendor-agnostic framework for unified observability. It enables developers to inject standardized trace contexts into every single request. When a new HTTP request hits the initial NestJS API Gateway, OpenTelemetry generates a universally unique Trace ID. As the request is passed to downstream microservices—even when flowing asynchronously through message brokers like RabbitMQ or Kafka—this specific Trace ID is automatically injected into the HTTP headers or the internal message metadata.
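What actually travels between services is a small header. OpenTelemetry propagates context using the W3C Trace Context format by default, where the `traceparent` value has the form `version-traceid-spanid-flags`. The sketch below hand-rolls that format to show the mechanics; the type and function names are hypothetical, and in practice the OpenTelemetry SDK generates and propagates these values.

```typescript
interface TraceContext {
  traceId: string; // 32 lowercase hex characters, shared by every hop
  spanId: string; // 16 lowercase hex characters, unique per operation
  sampled: boolean;
}

// Random lowercase hex of the given length. Math.random keeps the sketch
// dependency-free; real SDKs use a stronger random source.
function randomHex(length: number): string {
  let out = '';
  for (let i = 0; i < length; i++) {
    out += Math.floor(Math.random() * 16).toString(16);
  }
  return out;
}

// Called once at the edge, when a request arrives without trace headers.
function startTrace(): TraceContext {
  return { traceId: randomHex(32), spanId: randomHex(16), sampled: true };
}

// Called for each downstream hop: same trace ID, new span ID.
function childOf(parent: TraceContext): TraceContext {
  return { traceId: parent.traceId, spanId: randomHex(16), sampled: parent.sampled };
}

// Serializes to the W3C traceparent format: version-traceid-spanid-flags.
function toTraceparent(ctx: TraceContext): string {
  return `00-${ctx.traceId}-${ctx.spanId}-${ctx.sampled ? '01' : '00'}`;
}

// Parses a traceparent value taken from an HTTP header or message header.
function fromTraceparent(header: string): TraceContext | null {
  const m = /^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$/.exec(header);
  if (!m) return null;
  return { traceId: m[1], spanId: m[2], sampled: (parseInt(m[3], 16) & 1) === 1 };
}
```

The gateway would call `startTrace()`, attach `toTraceparent(ctx)` to the outgoing request or message headers, and each receiving service would parse it and continue with `childOf(...)`. Because the trace ID never changes, a tracing backend can stitch the spans from every service into a single timeline.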

The development team at Tool1.app strictly advocates for integrating OpenTelemetry from day one of any microservices build. Open-source tracing backends such as Jaeger or SigNoz capture these telemetry streams and visualize the entire lifecycle of a request. Engineers can view a detailed waterfall chart showing how many milliseconds were spent in each microservice and how long each database query took to resolve.

Integrating OpenTelemetry correctly into a NestJS application requires starting the telemetry SDK before the NestJS application context initializes. If the SDK starts later, critical Express and HTTP modules will be missing their required instrumentation hooks.

```typescript
import { NodeSDK } from '@opentelemetry/sdk-node';
import { OTLPTraceExporter } from '@opentelemetry/exporter-trace-otlp-http';
import { HttpInstrumentation } from '@opentelemetry/instrumentation-http';
import { ExpressInstrumentation } from '@opentelemetry/instrumentation-express';
import { Resource } from '@opentelemetry/resources';
import { SemanticResourceAttributes } from '@opentelemetry/semantic-conventions';

// Configure the exporter to send traces to a collector (e.g., Jaeger)
const traceExporter = new OTLPTraceExporter({
  url: 'http://localhost:4318/v1/traces',
});

// Initialize the core Node SDK with required instrumentations
const otelSDK = new NodeSDK({
  resource: new Resource({
    [SemanticResourceAttributes.SERVICE_NAME]: 'core-billing-microservice',
  }),
  traceExporter,
  instrumentations: [
    new HttpInstrumentation(),
    new ExpressInstrumentation(),
  ],
});

// CRITICAL: The SDK must be started before NestFactory is invoked
otelSDK.start();

// Graceful shutdown handling to ensure final traces are exported
process.on('SIGTERM', () => {
  otelSDK.shutdown()
    .then(() => console.log('OpenTelemetry SDK shut down successfully'))
    .finally(() => process.exit(0));
});

// Deliberately placed after start(). This ordering is kept when compiling to CommonJS;
// native ES module imports are hoisted, so there the SDK setup belongs in a separate file loaded first.
import { NestFactory } from '@nestjs/core';
import { AppModule } from './app.module';

async function bootstrap() {
  const app = await NestFactory.create(AppModule);
  await app.listen(3000);
}

bootstrap();
```

By ensuring that OpenTelemetry initializes first, every inbound and outbound HTTP call handled by the NestJS application is automatically intercepted, recorded, and exported. Database queries and broker messages are captured in the same way once the corresponding instrumentation packages are registered alongside the HTTP and Express ones. This proactive instrumentation dramatically reduces the mean time to resolution (MTTR) required to diagnose and remediate catastrophic production outages.

Cloud Economics and the Financial Reality of Massive Scale

Successfully architecting and scaling a distributed microservices infrastructure to support one million highly active users involves a significant and ongoing financial commitment. The global public cloud market, dominated by Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP), is rapidly expanding, with total end-user spending projected to exceed an astounding €665 billion globally by the end of 2025.

While the exact operational cost of hosting an application catering to one million users fluctuates wildly based on specific computational requirements, average payload sizes, intensive media streaming demands, and complex machine learning inferences, a standard transactional web platform (such as a B2B SaaS product, fintech platform, or e-commerce marketplace) requires rigorous and proactive financial budgeting.

To provide concrete perspective, the table below outlines a typical estimated monthly expenditure baseline for a high-traffic microservices cluster, utilizing standard conversion estimates to provide clarity for European enterprises.

| Cloud Infrastructure Category | Estimated Monthly Cost | Key Architectural Optimization Strategies |
| --- | --- | --- |
| Compute (Kubernetes / ECS) | €2,750 – €7,350 | Utilize aggressive Horizontal Pod Autoscaling (HPA) to scale down idle NestJS instances during low-traffic nights. Leverage deeply discounted spot instances for non-critical stateless background queue workers. |
| Managed Databases (SQL/NoSQL) | €2,300 – €5,500 | Implement widespread Redis caching to drastically reduce expensive database read operations. Utilize specialized read-replicas exclusively for heavy analytical reporting queries. |
| Data Egress & Load Balancing | €1,380 – €3,680 | Offload all static asset delivery, caching, and edge logic to a Content Delivery Network (CDN) like Cloudflare to systematically minimize highly punitive cloud provider data egress fees. |
| Message Brokers (Kafka/RMQ) | €735 – €1,840 | Strictly optimize event message payload sizes. Avoid transmitting full object states; instead, transmit only lightweight entity IDs and force consumers to query data only when strictly necessary. |
| Total Estimated Baseline | €7,165 – €18,370 | Assumes standard transactional API utilization devoid of intensive media streaming or massive data warehousing requirements. |

Cost predictability remains a paramount concern for technology leadership. AWS provides unparalleled global scale and an incredibly deep ecosystem, but requires specialized architectural expertise to navigate its complex, opaque pricing structures to avoid devastating bill shock. Google Cloud Platform is highly favored for data-heavy analytics and provides what is widely considered the superior managed Kubernetes service (GKE), which pairs perfectly with heavily containerized NestJS microservices. Microsoft Azure remains the dominant, logical choice for massive enterprise organizations already deeply integrated with legacy Microsoft ecosystems and compliance requirements.

Regardless of the selected cloud provider, underlying software architecture directly dictates the final cost. A poorly optimized Node.js monolith that forces an engineering team to continuously provision massive, highly expensive compute servers simply to absorb unpredictable peak loads will almost always cost significantly more than a well-orchestrated, loosely coupled microservices cluster that automatically scales specific, lightweight NestJS components independently based on exact real-time demand.

Financial modeling is a critical component of high-level system design. Tool1.app has observed that enterprises operating at this immense scale can eliminate substantial infrastructure waste simply by ensuring their codebase is modular enough to utilize granular autoscaling. When average enterprise downtime costs exceed an astonishing €92,000 per hour, the initial engineering investment in a resilient, decoupled, and observable microservices architecture is ultimately a massive, long-term cost-saving measure.

Architecting for Operational Resilience

Transitioning away from a monolithic application to a deeply distributed microservices architecture utilizing NestJS is not merely a technological upgrade; it is a fundamental strategic business decision explicitly designed to guarantee operational resilience, accelerate developer velocity, and unlock infinite horizontal scalability.

By embracing the Strangler Fig pattern to mitigate migration risks, decoupling business domains with enterprise-grade message brokers like RabbitMQ or Apache Kafka, separating read and write data loads with the CQRS pattern, and securing system-wide visibility through OpenTelemetry distributed tracing, engineering teams can build robust platforms that handle the demands of one million active users.

Partner With Experts for Your Infrastructure Transformation

Planning to scale your backend or seeking to modernize a struggling legacy application? Tool1.app specializes in designing high-performance NestJS microservice architectures, crafting custom Python automations, and deploying scalable AI and LLM solutions optimized for profound business efficiency. Whether you need to seamlessly transition an unscalable monolith to a modern distributed infrastructure, or you are building an ambitious, high-traffic software product completely from scratch, our elite software development agency possesses the deep technical expertise required to make your vision a reality. Contact Tool1.app today to discuss your complex project requirements and definitively future-proof your digital infrastructure.
