RAG vs. Fine-Tuning: Which Approach Fits Your Enterprise Data Strategy?

Table of Contents

- The Mechanics of Foundational LLMs: Parametric vs. Non-Parametric Memory

- Deep Dive: Retrieval-Augmented Generation (RAG)

- Deep Dive: LLM Fine-Tuning

- RAG vs Fine-tuning LLM: The Ultimate Enterprise Comparison

- Advanced Architectures: The Hybrid Approach (RA-FT)

- Decision Matrix for Enterprise Leaders

- Architecting Your AI Future

In the rapidly evolving landscape of artificial intelligence, adopting Large Language Models (LLMs) is no longer a futuristic experiment—it is a baseline requirement for maintaining a competitive edge in the modern business environment. However, as enterprise leaders move beyond generic AI chatbots and attempt to integrate generative AI into their core operations, they inevitably encounter a massive roadblock: foundational models know absolutely nothing about their proprietary, behind-the-firewall data.

An off-the-shelf model might understand the complexities of quantum physics or the syntax of every major programming language, but it does not know your company’s internal human resources policies, your unreleased product catalogs, or the highly specific way your customer service agents are trained to respond to billing disputes. To bridge this critical knowledge gap, businesses must customize these models with their own data.

This brings technology officers, lead developers, and product managers to the most important architectural debate in modern AI deployment: RAG vs Fine-tuning LLM.

Understanding the profound technical and financial distinctions between Retrieval-Augmented Generation (RAG) and model fine-tuning is paramount. Choosing the wrong methodology can lead to skyrocketing compute costs, unacceptable application latency, and AI systems that confidently hallucinate false information. At Tool1.app, a software development agency specializing in mobile and web applications, custom websites, Python automations, and bespoke AI/LLM solutions for business efficiency, we consistently see organizations struggle with this exact architectural choice.

In this comprehensive, deep-dive guide, we will dissect both approaches, explore their underlying mathematical mechanics, compare their Total Cost of Ownership (TCO), examine real-world use cases, and provide a definitive strategic framework to help you choose the right data strategy for your enterprise.

The Mechanics of Foundational LLMs: Parametric vs. Non-Parametric Memory

To truly understand the RAG vs Fine-tuning LLM debate, we must first examine how neural networks store and retrieve information. Large Language Models process language using what is known as “parametric memory.” During their initial, multi-million-dollar pre-training phase, they ingest massive datasets from the public internet. The relationships between words, concepts, and facts are encoded into billions (or trillions) of mathematical parameters (weights and biases).

This parametric memory is powerful, but it suffers from three critical enterprise limitations:

- The Knowledge Cutoff: A model’s knowledge is frozen at the exact moment its training concludes. If a model was trained in 2023, it has zero awareness of market events, newly released software frameworks, or your company’s latest quarterly financial reports.

- The Hallucination Problem: When forced to answer a question outside its parametric memory, an LLM’s predictive engine does not naturally say, “I do not know.” Instead, it calculates the most statistically probable sequence of words, resulting in confident, plausible-sounding, but entirely fabricated answers. In legal, medical, or financial contexts, hallucinations are catastrophic liabilities.

- Behavioral Rigidity: Standard foundational models are aligned via reinforcement learning to be universally helpful, polite, and chatty. If your business requires an AI to output terse, highly structured JSON payloads for a Python automation script, the model will often wrap the JSON in conversational filler (e.g., “Here is the JSON you requested: …”), which fundamentally breaks downstream application logic.
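The third limitation is easy to reproduce. The sketch below uses two made-up model replies (both strings are illustrative, not real model output) and a hypothetical helper, `parse_model_output`, to show how a single sentence of conversational filler makes an otherwise valid payload unparseable.

```python
import json


def parse_model_output(raw: str):
    """Return the parsed JSON payload, or None if the reply is not pure JSON."""
    try:
        return json.loads(raw)
    except json.JSONDecodeError:
        return None


# Two made-up model replies carrying the same payload
chatty_reply = 'Here is the JSON you requested: {"status": "ok", "items": 3}'
strict_reply = '{"status": "ok", "items": 3}'

print(parse_model_output(chatty_reply))  # filler makes the whole reply unparseable
print(parse_model_output(strict_reply))  # the bare payload parses cleanly
```

A downstream script that calls `json.loads` directly, without the guard, would raise an exception on the first reply.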

To solve these issues, we must augment the AI. We must either change the prompt dynamically by giving the model the exact information it needs at runtime (utilizing external “non-parametric memory”), or we must change the model internally by updating its mathematical parameters to permanently alter its behavior. These two paths define the core of our discussion.

Deep Dive: Retrieval-Augmented Generation (RAG)

Retrieval-Augmented Generation (RAG) is a revolutionary software architecture that connects an LLM to your external, proprietary data sources. Instead of forcing the LLM to permanently memorize your data during a costly training phase, RAG treats the LLM as a highly intelligent reasoning engine and gives it an incredibly efficient search engine to find the exact documents needed to answer a specific query.

To use an analogy, RAG is like giving a brilliant student an open-book exam. They may not have memorized the textbook, but because you provided a meticulously organized index, they can instantly locate the exact paragraph needed to synthesize a perfect, fact-based answer.

The Technical Architecture of a RAG Pipeline

Building a robust, production-grade RAG system requires orchestrating a complex data pipeline. At Tool1.app, we typically build these using Python, leveraging frameworks like LangChain or LlamaIndex. The process is divided into two phases: Data Ingestion and Query Retrieval.

Phase 1: Data Ingestion and Vectorization

- Document Parsing: Your raw enterprise data—PDFs from SharePoint, tickets from Jira, records from PostgreSQL—is extracted, cleaned, and normalized.

- Chunking Strategies: LLMs have strict “context window” limits. We cannot pass a 500-page manual into a single prompt. Therefore, documents are algorithmically split into smaller chunks. Advanced systems use semantic chunking, ensuring that paragraphs are not split mid-sentence, and applying an overlap (e.g., 150 characters) to preserve contextual continuity between adjacent chunks.

- Embedding: Each chunk is passed through an embedding model (such as OpenAI’s text-embedding-3-large or open-source alternatives like BAAI’s bge-large-en). The model translates human text into a dense, high-dimensional vector. For instance, with text-embedding-3-small a chunk of text becomes an array of 1,536 floating-point numbers.

- Vector Database Storage: These vectors are stored in a Vector Database (like Pinecone, Milvus, Qdrant, or ChromaDB). In this mathematical space, concepts that are semantically related are positioned close to one another.
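To make the chunking step concrete, here is a minimal sketch of fixed-size chunking with overlap. It is a simplified stand-in for what a library splitter does (it splits on raw character offsets and ignores sentence boundaries), and the function name `chunk_text` is our own, not part of any framework.

```python
def chunk_text(text: str, chunk_size: int = 1000, overlap: int = 150) -> list[str]:
    """Split text into fixed-size character windows that overlap their neighbours."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    step = chunk_size - overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the current window already reaches the end of the text
    return chunks


# Synthetic 2,500-character document
sample = "".join(str(i % 10) for i in range(2500))
pieces = chunk_text(sample, chunk_size=1000, overlap=150)
print(len(pieces), [len(p) for p in pieces])
```

Each chunk repeats the last 150 characters of the previous one, so a sentence that straddles a boundary still appears whole in at least one chunk.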

Phase 2: Retrieval and Generation

When a user submits a query (e.g., “What is the deductible for the premium health plan?”), the system acts in milliseconds:

- The user’s query is vectorized using the exact same embedding model.

- The vector database performs a similarity search. It compares the query vector to the document vectors, typically calculating the Cosine Similarity. The mathematical formula is: Cosine Similarity = (A · B) / (||A|| × ||B||). A score closer to 1.0 indicates a highly relevant document.

- The database retrieves the top K most relevant text chunks.

- These chunks are dynamically injected into a hidden system prompt alongside the user’s question.

- The LLM reads the compiled prompt and generates an answer grounded in the retrieved text.
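The similarity search in the steps above can be sketched in a few lines of plain Python. The three-dimensional vectors and document names below are toy values chosen for illustration (real embeddings have hundreds or thousands of dimensions), and production vector databases use approximate indexes rather than this brute-force scan.

```python
import math


def cosine_similarity(a, b):
    """(A . B) / (||A|| * ||B||), returning 0.0 for a zero-length vector."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec, doc_vecs, k=2):
    """Score every stored vector against the query and return the k best IDs."""
    scored = [(cosine_similarity(query_vec, vec), doc_id) for doc_id, vec in doc_vecs.items()]
    scored.sort(reverse=True)
    return [(doc_id, score) for score, doc_id in scored[:k]]


# Toy index: hypothetical document IDs mapped to made-up embeddings
toy_index = {
    "health_plan": [0.9, 0.1, 0.0],
    "travel_policy": [0.1, 0.9, 0.1],
    "it_security": [0.0, 0.2, 0.9],
}
results = top_k([1.0, 0.2, 0.0], toy_index, k=2)
for doc_id, score in results:
    print(doc_id, round(score, 3))
```

The query vector points in almost the same direction as the `health_plan` vector, so that document ranks first.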

Python Implementation: A Foundational RAG Script

For technical teams evaluating how to start, here is a foundational Python example demonstrating a RAG retrieval chain. This represents the kind of backend automation Tool1.app engineers build for custom web applications:

Python

import os

from langchain_community.document_loaders import PyPDFLoader
from langchain_text_splitters import RecursiveCharacterTextSplitter
from langchain_openai import OpenAIEmbeddings, ChatOpenAI
from langchain_community.vectorstores import Chroma
from langchain.chains import create_retrieval_chain
from langchain.chains.combine_documents import create_stuff_documents_chain
from langchain_core.prompts import ChatPromptTemplate

# The OpenAI clients below read the API key from the environment
if "OPENAI_API_KEY" not in os.environ:
    raise RuntimeError("Set OPENAI_API_KEY before running this script")

# 1. Ingest and Chunk Proprietary Enterprise Data
# Using RecursiveCharacterTextSplitter to maintain paragraph integrity
loader = PyPDFLoader("enterprise_secure_policies_2026.pdf")
docs = loader.load()

text_splitter = RecursiveCharacterTextSplitter(
    chunk_size=1000,
    chunk_overlap=150,
)
chunks = text_splitter.split_documents(docs)

# 2. Embed and Store in a Vector Database
embeddings = OpenAIEmbeddings(model="text-embedding-3-small")
vector_store = Chroma.from_documents(documents=chunks, embedding=embeddings)

# Configure the retriever to fetch the top 4 most relevant chunks
retriever = vector_store.as_retriever(search_kwargs={"k": 4})

# 3. Define the LLM and the Strict Prompt Template
# A temperature of 0.0 makes output more deterministic; it does not by itself guarantee factual answers
llm = ChatOpenAI(model="gpt-4o", temperature=0.0)

system_prompt = (
    "You are a strict corporate AI assistant. Answer the user's question "
    "using ONLY the provided context below. If the answer is not explicitly "
    "stated in the context, strictly reply: 'I do not have this information.' "
    "Do not attempt to guess.\n\n"
    "Context: {context}"
)

prompt = ChatPromptTemplate.from_messages([
    ("system", system_prompt),
    ("human", "{input}"),
])

# 4. Create the RAG Chain
document_chain = create_stuff_documents_chain(llm, prompt)
rag_chain = create_retrieval_chain(retriever, document_chain)

# 5. Execute a query against the proprietary data
user_query = "What is the maximum reimbursement for remote office hardware?"
response = rag_chain.invoke({"input": user_query})
print(response["answer"])

Advanced RAG Techniques

Standard RAG is powerful, but enterprise deployments often require advanced techniques to reach near-perfect accuracy:

- Query Transformation (HyDE): If a user’s prompt is too brief, an LLM first drafts a hypothetical answer document for it. That draft, rather than the raw query, is embedded and used to search the vector database, which often improves retrieval relevance.

- Re-Ranking: Retrieving 20 documents from the vector database and passing them through a specialized Cross-Encoder model to rigorously score and re-order them, ensuring only the absolute most relevant context reaches the generation LLM.
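The re-ranking flow can be sketched as a second scoring pass over the candidates returned by the vector search. In the sketch below, `toy_overlap_score` is a deliberately crude stand-in (plain word overlap) for a real Cross-Encoder model, and the candidate passages are invented for illustration. Only the two-stage structure carries over to a production system.

```python
def rerank(query, candidates, score_fn, top_n=3):
    """Re-score first-stage candidates with score_fn and keep the top_n best."""
    scored = [(score_fn(query, text), text) for text in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:top_n]]


def toy_overlap_score(query, text):
    """Stand-in scorer: fraction of query words that also appear in the text."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    return len(query_words & text_words) / len(query_words) if query_words else 0.0


# Pretend these came back from the vector database (made-up passages)
candidates = [
    "Parking permits are renewed every January.",
    "Remote office hardware is reimbursed up to the policy limit.",
    "The cafeteria menu changes weekly.",
    "Hardware purchases for a remote office require manager approval.",
]
best = rerank("remote office hardware reimbursement", candidates, toy_overlap_score, top_n=2)
print(best)
```

Swapping in a real Cross-Encoder means replacing only `score_fn`. The retrieve-many, keep-few structure stays the same.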

Deep Dive: LLM Fine-Tuning

If RAG is about giving the AI an open book, fine-tuning is about sending the AI to an intensive, specialized training academy. Fine-tuning involves taking a pre-trained foundational model and running a secondary training phase using a highly curated dataset. This process physically alters the neural network’s internal mathematical weights.

While RAG injects knowledge via the prompt (non-parametric memory), fine-tuning ingrains knowledge and behavior directly into the model’s core architecture (parametric memory).

The Mechanics of Parameter-Efficient Fine-Tuning (PEFT)

Historically, fine-tuning a Large Language Model required “Full Fine-Tuning,” which meant updating every single parameter in a network of billions. This demanded massive clusters of high-end cloud GPUs running for weeks, costing hundreds of thousands of dollars. It was an insurmountable capital expenditure for most businesses.

Today, the enterprise standard is Parameter-Efficient Fine-Tuning (PEFT), achieved primarily through a technique called Low-Rank Adaptation (LoRA), or its quantized variant, QLoRA.

Instead of modifying the massive original weight matrix W, LoRA freezes the original model. It then injects two much smaller low-rank matrices (A and B) into selected layers, most commonly the attention projections. During the training loop, only these small matrices are updated. The effective weights are then computed as: W<sub>new</sub> = W<sub>original</sub> + (A × B), where the product A × B has the same shape as W but is built from far fewer trainable values.

Because the rank of these matrices is incredibly small, LoRA reduces the number of trainable parameters by up to 99%. This breakthrough allows engineering teams to fine-tune powerful open-source models (like Llama 3 or Mistral) on a single commercial GPU in a matter of hours, making custom AI highly accessible.
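A quick back-of-the-envelope calculation shows where the savings come from. The sketch below assumes a single, hypothetical 4096 × 4096 projection matrix and rank 16. Actual layer shapes vary by model, so treat the numbers as an illustration of the arithmetic rather than a figure for any specific model.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters in the two low-rank factors: (d_out x rank) plus (rank x d_in)."""
    return rank * (d_out + d_in)


# Assumed shapes for illustration only
d_out = d_in = 4096
rank = 16

full = d_out * d_in                              # parameters in the frozen matrix W
lora = lora_trainable_params(d_out, d_in, rank)  # parameters actually trained
reduction = 1 - lora / full

print(f"full: {full:,}  lora: {lora:,}  reduction: {reduction:.2%}")
# prints: full: 16,777,216  lora: 131,072  reduction: 99.22%
```

Because the trainable count grows with `rank * (d_out + d_in)` rather than `d_out * d_in`, the relative saving increases as the layers get wider.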

Preparing Data for Fine-Tuning

The most critical aspect of fine-tuning is data quality. The adage “garbage in, garbage out” applies exponentially here. You cannot dump raw PDFs into a fine-tuning pipeline. You must provide highly structured training data, typically formatted in JSON Lines (JSONL), which demonstrates exactly how the model should respond to specific inputs.

Here is an example of what a fine-tuning dataset looks like. In this scenario, Tool1.app is training a model for a client to act as a strict, automated database architect that converts natural language into proprietary SQL queries:

JSON

{"messages": [{"role": "system", "content": "You are a senior database architect. Output only raw SQL without markdown formatting."}, {"role": "user", "content": "Fetch all active premium users who signed up in Q1 2026."}, {"role": "assistant", "content": "SELECT user_id, email FROM users WHERE status = 'active' AND tier = 'premium' AND signup_date BETWEEN '2026-01-01' AND '2026-03-31';"}]}

{"messages": [{"role": "system", "content": "You are a senior database architect. Output only raw SQL without markdown formatting."}, {"role": "user", "content": "Count the total revenue from the enterprise tier this month."}, {"role": "assistant", "content": "SELECT SUM(revenue) FROM subscriptions WHERE tier = 'enterprise' AND payment_date >= DATE_TRUNC('month', CURRENT_DATE);"}]}
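Because a single malformed record can derail a training run, it is worth validating the file before spending GPU time. The following is a minimal sketch of such a check for the chat-style records shown above. The function name `validate_record` and the specific rules are our own assumptions about what a sensible check looks like, not a requirement of any particular training framework.

```python
import json

ALLOWED_ROLES = {"system", "user", "assistant"}


def validate_record(line: str) -> list[str]:
    """Return a list of problems found in one JSONL training record (empty if none)."""
    try:
        record = json.loads(line)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]

    messages = record.get("messages") if isinstance(record, dict) else None
    if not isinstance(messages, list) or not messages:
        return ["missing or empty 'messages' list"]

    problems = []
    for i, msg in enumerate(messages):
        if not isinstance(msg, dict):
            problems.append(f"message {i}: not an object")
            continue
        if msg.get("role") not in ALLOWED_ROLES:
            problems.append(f"message {i}: unexpected role {msg.get('role')!r}")
        if not isinstance(msg.get("content"), str) or not msg["content"].strip():
            problems.append(f"message {i}: empty or non-string content")

    # The model learns from the final assistant turn, so it must be present
    if isinstance(messages[-1], dict) and messages[-1].get("role") != "assistant":
        problems.append("last message should be the assistant's target reply")
    return problems


good = ('{"messages": [{"role": "system", "content": "Output only raw SQL."}, '
        '{"role": "user", "content": "Count all users."}, '
        '{"role": "assistant", "content": "SELECT COUNT(*) FROM users;"}]}')
bad = '{"messages": [{"role": "user", "content": "Count all users."}]}'

print(validate_record(good))
print(validate_record(bad))
```

Running this over every line of the JSONL file and refusing to train while any record reports problems is a cheap safeguard.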

Python Implementation: Fine-Tuning with Hugging Face

For backend developers, executing a LoRA fine-tuning job typically involves the Hugging Face ecosystem (transformers, peft, trl). Below is a conceptual Python script illustrating how an enterprise model is configured for Supervised Fine-Tuning (SFT):

Python

import torch
from transformers import AutoModelForCausalLM, AutoTokenizer, TrainingArguments
from peft import LoraConfig, get_peft_model
from trl import SFTTrainer
from datasets import load_dataset

# 1. Load the Base Open-Source Model and Tokenizer
model_id = "meta-llama/Meta-Llama-3-8B-Instruct"
tokenizer = AutoTokenizer.from_pretrained(model_id)
tokenizer.pad_token = tokenizer.eos_token

# Load model in bfloat16 for memory efficiency
base_model = AutoModelForCausalLM.from_pretrained(
    model_id,
    device_map="auto",
    torch_dtype=torch.bfloat16,
)

# 2. Configure Low-Rank Adaptation (LoRA)
lora_config = LoraConfig(
    r=16,                                 # Rank of the update matrices
    lora_alpha=32,                        # Scaling factor
    target_modules=["q_proj", "v_proj"],  # Target attention layers to apply LoRA
    lora_dropout=0.05,
    bias="none",
    task_type="CAUSAL_LM",
)

# 3. Apply LoRA to the Base Model
peft_model = get_peft_model(base_model, lora_config)

# 4. Load Dataset and Initialize Training Arguments
dataset = load_dataset("json", data_files="enterprise_sql_training_data.jsonl")

training_args = TrainingArguments(
    output_dir="./enterprise_custom_sql_model",
    per_device_train_batch_size=4,
    gradient_accumulation_steps=4,
    learning_rate=2e-4,
    num_train_epochs=3,
    logging_steps=10,
    optim="paged_adamw_32bit",  # Paged optimizers require the bitsandbytes package
)

# 5. Execute the Supervised Fine-Tuning (SFT) Run
# Note: recent TRL releases expect dataset_text_field and the sequence-length
# limit on SFTConfig rather than on SFTTrainer; check your installed version.
trainer = SFTTrainer(
    model=peft_model,
    train_dataset=dataset["train"],
    args=training_args,
    # Assumes a pre-formatted "text" column; chat-style "messages" records
    # like those shown earlier need a chat template applied first
    dataset_text_field="text",
    max_seq_length=1024,
)

trainer.train()

# 6. Save the custom adapter weights
trainer.model.save_pretrained("tool1_custom_sql_adapter")

The Strategic Power of Fine-Tuning

Fine-tuning shines when you need absolute behavioral mastery. If you need a model to inherently understand the highly specific vernacular of aerospace engineering, or if you need a lightweight model to flawlessly output deeply nested JSON for a Python automation webhook 99.9% of the time, fine-tuning is unparalleled. It teaches the model how to process information, allowing you to use incredibly short prompts at inference time, which drastically cuts down on API token costs and reduces system latency.

RAG vs Fine-tuning LLM: The Ultimate Enterprise Comparison

With the technical foundations established, we can objectively evaluate the RAG vs Fine-tuning LLM debate across six critical business dimensions. Technology leaders must weigh these factors heavily when designing their AI data strategy.

Factual Accuracy and Hallucination Mitigation

Winner: Retrieval-Augmented Generation (RAG)

There is a massive misconception in the corporate world that fine-tuning is how you teach a model new facts. This is dangerously incorrect. While a model might memorize some facts during fine-tuning, neural networks are not relational databases. They suffer from “catastrophic forgetting,” where learning new data frequently overwrites previous knowledge. Furthermore, if you ask a fine-tuned model a factual question, it still relies on probabilistic generation, meaning the hallucination risk remains high.

RAG decouples knowledge from reasoning. By anchoring the model’s response to explicitly retrieved, verifiable text documents, RAG substantially reduces factual hallucinations, although the model can still misread or overextend the retrieved text. If factual accuracy is your priority, RAG is the stronger choice.

Tone, Style, and Format Adherence

Winner: Fine-Tuning

If your enterprise requires an AI to write marketing emails that closely mimic your top copywriter’s cadence, or if you need it to autonomously generate proprietary Python code without adding conversational pleasantries, RAG will struggle. While you can provide formatting examples in a RAG prompt (few-shot prompting), models often succumb to “lost in the middle” syndrome as context windows grow, forgetting their persona instructions. Fine-tuning alters the model’s weights directly, which makes stylistic and structural compliance far more consistent without relying on long prompt instructions.

Handling Dynamic and Changing Data

Winner: Retrieval-Augmented Generation (RAG)

Business data is volatile. Inventory levels change by the minute; HR policies update quarterly. If a product’s price changes, a fine-tuned model is instantly obsolete. To update its parametric memory, you must curate a new dataset and initiate a computationally expensive retraining loop. With RAG, you simply delete the outdated vector from your database and upload the new pricing document. The AI’s knowledge is updated globally in milliseconds.
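The update path for RAG can be illustrated with a tiny in-memory store. `TinyVectorStore` is a hypothetical stand-in for a real vector database, and the vectors and prices are made up. The point is only that replacing a record is a data operation, with no training step involved.

```python
class TinyVectorStore:
    """In-memory stand-in for a vector database, keyed by document ID."""

    def __init__(self):
        self._records = {}

    def upsert(self, doc_id, vector, text):
        # Insert a new record or overwrite the existing one with the same ID
        self._records[doc_id] = {"vector": vector, "text": text}

    def delete(self, doc_id):
        # Removing an unknown ID is a no-op
        self._records.pop(doc_id, None)

    def texts(self):
        return [record["text"] for record in self._records.values()]


store = TinyVectorStore()
store.upsert("pricing-widget", [0.2, 0.8], "Widget price: 40 EUR")

# The price changes: overwrite the stale record, no retraining involved
store.upsert("pricing-widget", [0.25, 0.75], "Widget price: 45 EUR")
print(store.texts())
```

The next query that retrieves this record sees the new price, while the model weights never change.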

Cost Dynamics: CapEx vs. OpEx

It Depends (Setup vs. Ongoing Costs)

The financial models for these architectures are inverse.

- Fine-Tuning is CapEx heavy. You must invest heavily upfront in human capital to curate thousands of flawless training examples, and you must pay for cloud GPU time to train the model. However, once deployed, your OpEx is incredibly low. Because the behavior is baked in, you use very short prompts, saving massive amounts on API token costs. Furthermore, you can host a fine-tuned open-source model on your own servers, eliminating third-party API fees entirely.

- RAG is OpEx heavy. Building a vector database pipeline is relatively cheap and fast. However, at runtime, you are sending thousands of words of retrieved context to the LLM for every single user query. If you are using a commercial API (like GPT-4), these massive context windows drive up your per-query token costs significantly as your user base scales.
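The runtime side of this trade-off is simple arithmetic. In the sketch below every number is a placeholder: the query volume, token counts, and per-1,000-token prices are assumptions chosen to make the calculation easy to follow, not quotes from any provider. Substitute your own figures before drawing conclusions.

```python
def monthly_token_cost(queries_per_month, prompt_tokens, output_tokens,
                       price_in_per_1k, price_out_per_1k):
    """Monthly API spend for a workload with a fixed prompt and output size."""
    per_query = ((prompt_tokens / 1000) * price_in_per_1k
                 + (output_tokens / 1000) * price_out_per_1k)
    return queries_per_month * per_query


# Placeholder workload and prices (assumptions, not real price quotes)
QUERIES = 100_000
PRICE_IN, PRICE_OUT = 0.005, 0.015  # per 1,000 tokens

# RAG: long prompt carrying retrieved context; fine-tuned: short prompt
rag_cost = monthly_token_cost(QUERIES, 3_000, 300, PRICE_IN, PRICE_OUT)
ft_cost = monthly_token_cost(QUERIES, 200, 300, PRICE_IN, PRICE_OUT)

print(f"RAG: {rag_cost:,.2f}  fine-tuned: {ft_cost:,.2f}")
```

With these placeholder inputs the retrieved context dominates the bill. The fine-tuned figure leaves out the upfront data curation and GPU training spend, which is exactly the CapEx side described above.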

Latency and Inference Speed (TTFT)

Winner: Fine-Tuning

In user-facing web applications, milliseconds matter. Time-To-First-Token (TTFT) is a critical metric. A RAG pipeline inherently introduces network latency: it must embed the user query, search the vector database, retrieve chunks, compile a massive prompt, and wait for the LLM to read the entire context window before generating the first word.

A fine-tuned model skips the retrieval step entirely. With a short prompt and baked-in behavior, the model begins streaming its response almost instantaneously, making it vastly superior for real-time applications like voice AI agents.

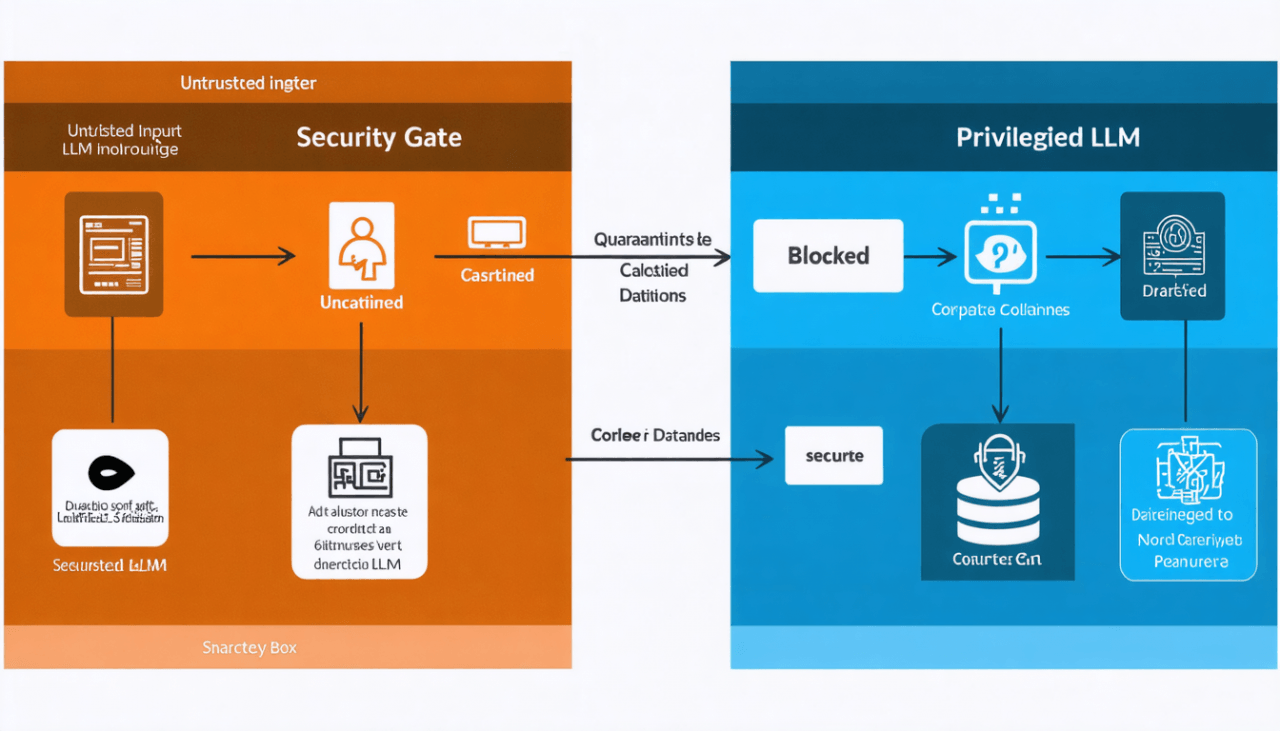

Data Security and Access Control

Winner: Retrieval-Augmented Generation (RAG)

In a RAG system, security is handled cleanly via standard Role-Based Access Control (RBAC) at the database level. If a junior analyst asks the AI a question, the vector database simply filters out documents tagged “Executive Only,” ensuring the LLM never sees confidential data. In a fine-tuned model, all training data is permanently baked into the neural weights. There is no reliable mechanism to prevent a clever prompt-injection attack from tricking the model into revealing the sensitive data it was trained on.
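The access-control idea can be sketched as a metadata filter applied before any similarity search runs. The `Chunk` class, role names, and sample texts below are hypothetical. Real vector databases expose their own metadata-filter syntax, which this sketch does not attempt to reproduce.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_roles: frozenset  # roles permitted to read this chunk


def visible_chunks(chunks, user_role):
    """Keep only the chunks this role may read; run this before similarity search."""
    return [chunk for chunk in chunks if user_role in chunk.allowed_roles]


# Made-up corpus with per-chunk access tags
corpus = [
    Chunk("Office opening hours are 8:00 to 18:00.", frozenset({"analyst", "executive"})),
    Chunk("Draft acquisition terms for next quarter.", frozenset({"executive"})),
]

analyst_view = visible_chunks(corpus, "analyst")
print([chunk.text for chunk in analyst_view])
```

Because filtering happens before retrieval, a restricted chunk can never reach the prompt, regardless of how the user phrases the question.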

Advanced Architectures: The Hybrid Approach (RA-FT)

At Tool1.app, we frequently advise our enterprise clients that the RAG vs Fine-tuning LLM debate does not have to be a mutually exclusive choice. For highly advanced, production-grade applications, the most resilient architecture is a hybrid: Retrieval-Augmented Fine-Tuning (RA-FT).

Enterprise requirements are rarely black and white. Consider a multinational corporate law firm. They need an AI that perfectly mimics their highly authoritative, distinct legal writing style and outputs flawlessly formatted legal briefs (requiring Fine-Tuning). Simultaneously, this AI must reference the absolute latest case law and the specific, up-to-the-minute details of a client’s ongoing contract negotiation (requiring RAG).

In a RA-FT architecture, the pipeline is built in two stages:

- The Fine-Tuning Stage: We take an open-source model and fine-tune it specifically on how to utilize retrieved documents. We train the model on thousands of examples where it is given a prompt, a set of retrieved facts, and a perfect response. Crucially, we also train it to recognize and ignore “distractor” documents—irrelevant information that might accidentally be retrieved by the vector database. We also fine-tune it to adopt the firm’s strict legal tone.

- The RAG Deployment: Once the model is a master of tone and document synthesis, we deploy it inside a highly optimized RAG pipeline connected to the firm’s live secure databases.
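A training record for the fine-tuning stage might be assembled along these lines. This is a sketch under our own assumptions: the helper name `build_raft_example`, the "[Document N]" labelling, and the sample texts are all illustrative, and the output simply reuses the chat-style JSONL layout shown earlier in this guide.

```python
import json
import random


def build_raft_example(question, answer, oracle_doc, distractor_docs,
                       system_prompt, num_distractors=2, seed=0):
    """Mix the relevant document with distractors and wrap it as a chat record."""
    rng = random.Random(seed)
    picked = rng.sample(distractor_docs, k=min(num_distractors, len(distractor_docs)))
    context_docs = picked + [oracle_doc]
    rng.shuffle(context_docs)  # the relevant document should not sit in a fixed slot
    context = "\n\n".join(
        f"[Document {i + 1}] {doc}" for i, doc in enumerate(context_docs)
    )
    return {
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": f"Context:\n{context}\n\nQuestion: {question}"},
            {"role": "assistant", "content": answer},
        ]
    }


# Made-up sample data
example = build_raft_example(
    question="What notice period applies to termination?",
    answer="The agreement requires 30 days of written notice.",
    oracle_doc="Either party may terminate with 30 days of written notice.",
    distractor_docs=[
        "Invoices are payable within 45 days.",
        "The office is closed on public holidays.",
        "All disputes are subject to arbitration.",
    ],
    system_prompt="Answer in the firm's formal tone using only the relevant documents.",
)
print(json.dumps(example)[:120])
```

Each record teaches two things at once: the target tone (through the assistant reply) and the habit of answering from the relevant document while ignoring the distractors.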

The result combines the strengths of both approaches. The system speaks your brand’s language consistently, formats outputs as your downstream software requires, keeps instruction prompts short because the behavior is baked in, and pulls its facts from an up-to-date, secure vector database. Grounding answers in retrieved documents greatly reduces factual hallucinations, while fine-tuning keeps the style consistent.

Building a hybrid system demands deep expertise in data curation, GPU orchestration, and vector search heuristics, but for mission-critical deployments, it is an investment that yields unparalleled ROI.

Decision Matrix for Enterprise Leaders

To simplify your AI architecture strategy, we have distilled this complex technical landscape into a rapid diagnostic framework. Gather your product managers and engineering leads, and evaluate your project against these criteria:

You should choose a RAG Architecture if:

- Your primary goal is to build an internal knowledge base, a Q&A chatbot, or an enterprise document search engine.

- Your proprietary data is vast and changes frequently (daily, weekly, or monthly).

- Factual accuracy is paramount, and AI hallucinations pose a severe legal or financial risk.

- You need the AI to cite its sources with direct hyperlinks to the original enterprise documents.

- You require strict, user-level access controls (RBAC) on the data the AI can reference.

You should choose a Fine-Tuning Strategy if:

- Your primary goal is workflow automation, text generation, or code creation rather than factual knowledge retrieval.

- You need the model to strictly adhere to a specific brand persona, corporate voice, or niche industry vernacular.

- You require the model to output highly rigid programmatic formats (like deep JSON, XML, or proprietary Python syntax).

- You want to radically reduce inference latency for real-time user experiences.

- You want to deploy a smaller, highly efficient open-source model on your own private servers to eliminate recurring commercial API token costs.

You should pursue a Hybrid (RA-FT) Strategy if:

- You are building a flagship, mission-critical application that demands both domain-specific behavioral mastery and up-to-the-minute dynamic factual accuracy.
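As a rough aid for workshops, the matrix can be condensed into two yes/no questions. The function below is our own coarse simplification, not a substitute for the full checklists above, and the wording of the fallback branch is an assumption on our part.

```python
def recommend_architecture(needs_fresh_facts: bool, needs_strict_style_or_format: bool) -> str:
    """Map the two dominant requirements onto the three strategies in the matrix."""
    if needs_fresh_facts and needs_strict_style_or_format:
        return "hybrid (RA-FT)"
    if needs_fresh_facts:
        return "RAG"
    if needs_strict_style_or_format:
        return "fine-tuning"
    return "neither criterion applies; revisit the requirements"


# Example: an internal Q&A chatbot over frequently changing policies
print(recommend_architecture(needs_fresh_facts=True, needs_strict_style_or_format=False))
```

Factors such as access control, latency targets, and budget still need to be weighed separately.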

Architecting Your AI Future

The integration of generative artificial intelligence into the enterprise is no longer an option; it is an operational imperative. However, as the RAG vs Fine-tuning LLM debate illustrates, true AI maturity requires moving beyond basic API calls. It demands a sophisticated understanding of data pipelines, embedding mathematics, neural weight manipulation, and cloud infrastructure optimization.

Retrieval-Augmented Generation is your enterprise’s ultimate research assistant—delivering verified facts dynamically to ensure accuracy and mitigate hallucinations. Fine-tuning is your enterprise’s intensive training program—molding the fundamental behavior, efficiency, and structural precision of the neural network itself. Aligning the right architecture with your specific business goals, security requirements, and budget is what will separate industry leaders from those left grappling with failed proof-of-concepts.

Attempting to build these complex systems internally without specialized architectural guidance often leads to stalled projects and bloated cloud computing bills. Your proprietary data is your most valuable asset; deploying it securely requires expert precision.

Not sure how to leverage your data? Let’s architect the perfect AI solution for you.

At Tool1.app, our dedicated teams of software developers, Python automation experts, and AI engineers specialize in transforming raw enterprise data into measurable strategic advantages. Whether you need a secure RAG system to navigate your corporate archives, a precisely fine-tuned LLM deployed on private infrastructure, or a custom web application to house your advanced AI tools, we build scalable solutions tailored to your unique workflows. Reach out to Tool1.app today for a comprehensive consultation, and let us help you turn your complex challenges into highly efficient, automated reality.
