How to Create an Internal Knowledge Base Search Engine Using RAG and Node.js

Table of Contents

- The Business Case and Return on Investment for Intelligent Internal Search

- Deconstructing Retrieval-Augmented Generation Architecture

- The Technical Foundations of Document Processing

- Strategic Selection of Vector Database Infrastructure

- Evaluating Orchestration Frameworks in Node.js

- Step-by-Step Implementation: The RAG Node.js Tutorial

- Ensuring Data Privacy: Local RAG and On-Premise Execution

- Advanced Paradigms for 2026: The Shift to Agentic Workflows

- Navigating European Regulations: GDPR and the Artificial Intelligence Act

- Conclusion: Activating Your Corporate Intelligence

The modern enterprise is drowning in a deluge of unstructured information but remains critically starved for accessible, actionable knowledge. Across vast corporate networks, crucial insights, standard operating procedures, and historical data are scattered across countless PDF manuals, fragmented onboarding documents, dense Confluence pages, and siloed internal databases. Extensive research into enterprise efficiency indicates that employees frequently spend one to two hours every single day merely hunting for the information they need to execute their core responsibilities. This friction leads to profound employee frustration, redundant work, and massive losses in aggregate productivity. The solution to this modern operational crisis is conceptually clear: companies desperately need an “internal Google” tailored specifically to their proprietary, secure documents.

Historically, building a functional, semantically aware search engine capable of understanding the nuances of human language was a monumental task reserved exclusively for tech giants with massive engineering teams. Keyword-based search engines of the past were rigid, requiring users to guess the exact phrasing used in a document to yield a successful query. Today, however, the convergence of Large Language Models and high-performance vector search algorithms has democratized this capability. Retrieval-Augmented Generation is now the definitive architectural standard for achieving this, allowing businesses to ground advanced AI models securely in their own verified, private data.

This comprehensive guide serves as an exhaustive RAG Node.js tutorial. It explores the business imperatives driving this technology, deconstructs the underlying architectural theories, and provides the practical, step-by-step implementation required to build a highly accurate, privacy-compliant internal search engine capable of transforming organizational efficiency.

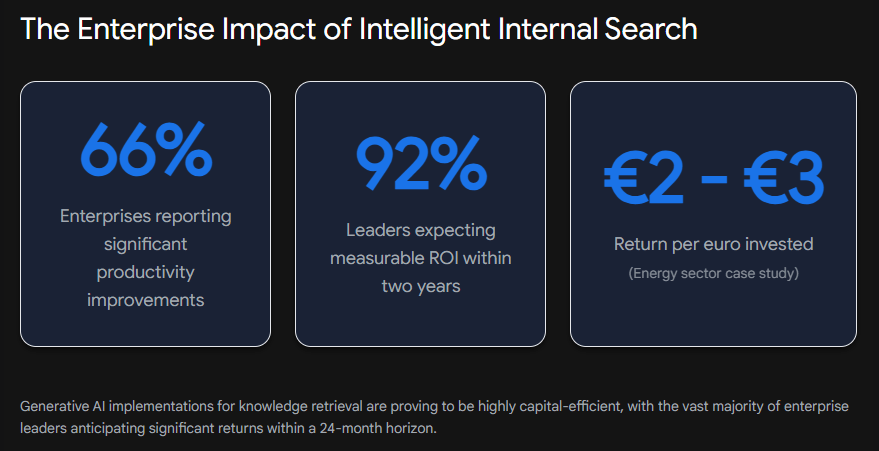

The Business Case and Return on Investment for Intelligent Internal Search

Before diving into vector mathematics, embedding models, and code execution, it is absolutely critical to understand the return on investment that justifies the allocation of capital to build a custom AI search engine. In the current economic landscape, the paradigm has decisively shifted from viewing artificial intelligence as a simple, experimental technological upgrade to recognizing it as a strategic imperative for fundamental operational redesign.

According to comprehensive economic surveys of European businesses conducted by leading consultancies, the integration of generative AI for knowledge retrieval is already delivering highly measurable productivity gains. When employees can instantly query an internal database using natural language and receive synthesized, highly accurate answers, the operational bottleneck of information discovery is completely eliminated. In demanding sectors such as energy, resources, and industrials, corporate executives report highly tangible and rapid returns; numerous organizations note that for every single euro invested in AI initiatives, they recover ongoing operational benefits of two to three euros annually. Furthermore, studies encompassing over three thousand senior executives across the EMEA region reveal that a staggering majority have already achieved significant operational productivity improvements specifically through AI deployment.

These gains are not theoretical. For example, large media agencies and global consulting firms are actively using generative AI to construct internal knowledge centers for their customer service and sales teams, allowing representatives to synthesize complex client histories and product manuals in seconds rather than hours. However, achieving this high level of return on investment requires moving far beyond generic, off-the-shelf chatbot wrappers. True enterprise value is unlocked only when the artificial intelligence is deeply, securely integrated into the company’s specific workflows and proprietary data silos.

For enterprises looking to accelerate this digital transformation without diverting their internal IT resources, partnering with specialized development agencies like Tool1.app ensures that the architecture is not only highly scalable and secure but perfectly aligned with bespoke business objectives. High-performing organizations are increasingly classifying themselves as AI return-on-investment leaders, frequently allocating more than ten percent of their entire technology budgets to these intelligent, autonomous systems. They expect measurable returns across cost reduction, time savings, and elevated employee satisfaction within strict twelve to twenty-four-month horizons.

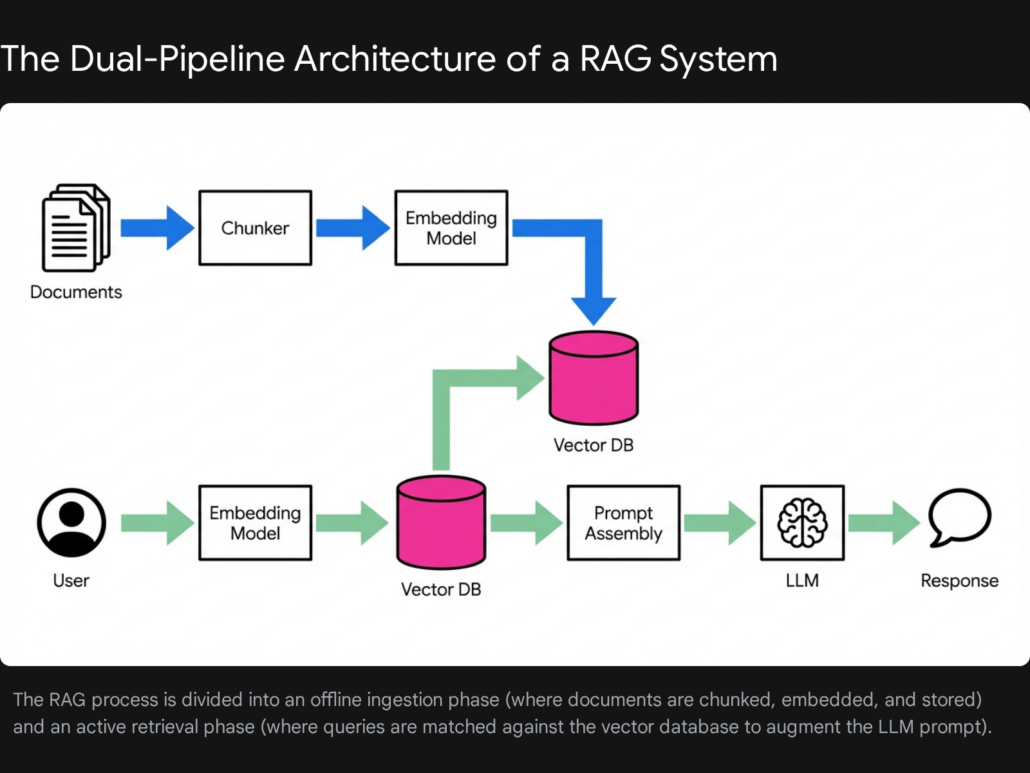

Deconstructing Retrieval-Augmented Generation Architecture

Large Language Models are undeniably powerful text synthesizers, but they suffer from fundamental flaws when deployed naively in an enterprise context. First, their knowledge is strictly limited by their pre-training cutoff dates, rendering them entirely oblivious to recent company events or shifting market dynamics. Second, and more critically, they inherently lack access to your company’s private, proprietary data. If an employee asks an off-the-shelf model about your company’s highly specific travel reimbursement policy or a newly released internal product architecture, the model will either fail to answer or confidently hallucinate an entirely incorrect response, presenting fiction as fact.

Retrieval-Augmented Generation solves this critical problem by separating the knowledge repository from the reasoning engine. Conceptually, it is akin to providing the artificial intelligence with an open-book examination.

When a user submits a query to the system, the application does not immediately send that prompt to the language model. Instead, it intercepts the query and performs a lightning-fast semantic search across a highly specialized vector database containing all of your company’s parsed documents. It identifies and retrieves the most relevant paragraphs, tables, or sections that directly pertain to the user’s question. The system then packages those specific retrieved excerpts alongside the user’s original question, wrapping them in a strict instructional prompt before sending the entire package to the language model. The prompt explicitly commands the model to answer the user’s question using only the provided document excerpts, forbidding it from relying on its outside training data.

This workflow substantially reduces the risk of hallucinations, lets the system cite the source passages behind each answer, and keeps the model’s output grounded in current company policies. It does not remove the risk entirely: an answer can only be as good as the excerpts that were retrieved.

The Technical Foundations of Document Processing

To build this sophisticated architecture in a Node.js environment, developers must first prepare the raw data. Enterprise knowledge bases overwhelmingly consist of unstructured data formats, including dense PDF financial reports, sprawling Word documents, intricate Markdown files, and deeply nested HTML web pages. This raw, human-readable data must be transformed into a standardized, mathematical format that a computational engine can rapidly search for contextual meaning.

Intelligent Data Ingestion and Parsing

The absolute foundational step is extracting raw text from source files with high fidelity. In the robust Node.js ecosystem, developers have access to a wide array of parsing libraries, but choosing the correct tool is vital. For simple, continuous text extraction from basic PDFs, lightweight JavaScript libraries such as the popular pdf-parse or pdfjs-dist are highly efficient and easy to implement. They process streams of data quickly and require very little memory overhead.

However, modern enterprise documents are rarely simple blocks of text. They contain complex multi-column layouts, nested financial tables, embedded diagrams, and crucial headers and footers. When basic parsers attempt to read these complex documents, they frequently scramble the reading order, reading straight across a two-column page rather than down each column. This catastrophic failure destroys the semantic meaning of the text before the AI even sees it.

For advanced enterprise use cases, layout-aware parsers are usually required. Tools like the Unstructured API, or optical character recognition paired with machine learning heuristics, analyze the visual layout of the page. They identify where a table begins and ends, so that the structured data within it is converted into clean Markdown or HTML tables rather than a jumbled string of numbers. Preserving this structure means that when an employee asks about specific financial metrics from a quarterly report, the model can still interpret the tabular relationships.

The Critical Science of Text Chunking

Once the text is extracted and cleaned, a whole document usually cannot be passed to an embedding model in one piece. Embedding models have fixed input limits, ranging from a few hundred to a few thousand tokens depending on the model. More importantly, a long passage mixes many overlapping, contradictory, or unrelated ideas into a single vector, which dilutes the signal the search algorithm relies on.

Consequently, the text must be meticulously broken down into smaller segments known as chunks. The strategy used to chunk documents is arguably the single most critical factor determining a system’s retrieval accuracy. A poorly chosen chunking strategy will fracture concepts and create a massive drop in retrieval performance, turning a potentially helpful tool into a frustrating liability.

The most rudimentary approach is fixed-size character splitting. Under this method, a script simply slices the text into equal segments, such as every five hundred characters, completely disregarding the content. While computationally inexpensive and incredibly fast, it frequently slices crucial sentences or complex ideas precisely in half, destroying the context necessary for the AI to understand the fragment.
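To make the failure mode concrete, here is a minimal sketch of fixed-size splitting. The function name `splitFixed` and the sample policy text are illustrative assumptions, and the chunk size is deliberately tiny so the mid-sentence cut is easy to see.

```typescript
// Naive fixed-size splitter: cuts every `size` characters and ignores content.
function splitFixed(text: string, size: number): string[] {
  if (size <= 0) throw new Error("size must be positive");
  const chunks: string[] = [];
  for (let start = 0; start < text.length; start += size) {
    chunks.push(text.slice(start, start + size));
  }
  return chunks;
}

// Hypothetical sample text, 72 characters long.
const policy =
  "Expenses above 50 euros require a receipt. Submit claims within 30 days.";
const fixedChunks = splitFixed(policy, 40);
// The first chunk stops inside the word "receipt":
// "Expenses above 50 euros require a receip"
```

The second chunk begins with a stray "t." and carries no hint of what must be submitted, which is exactly the kind of context loss described above.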

The current industry standard for general text applications is recursive character splitting. This algorithm takes a highly structural approach, attempting to split text using a hierarchical list of natural language separators. It first looks to split the document by double newlines to separate discrete paragraphs. If a paragraph remains too large for the token limit, it falls back to single newlines, then to periods to separate individual sentences, and finally to individual words if absolutely necessary. This method respects the natural, human-authored structure of the document, ensuring that chunks remain semantically cohesive and that related ideas are not unnecessarily divorced. Extensive industry benchmarking demonstrates that utilizing a recursive splitting strategy aimed at roughly five hundred tokens, coupled with a deliberate overlap of fifty to sixty tokens to preserve the transitional context between chunks, yields excellent baseline accuracy.
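The separator hierarchy can be sketched in a few lines. This is a simplified illustration written for this article, not the implementation used by LangChain or any other library: it measures length in characters rather than tokens, and it adds overlap in a separate pass by prefixing each chunk with the tail of the previous one. All function names are assumptions.

```typescript
// Separators ordered from coarsest (paragraphs) to finest (words).
const SEPARATORS = ["\n\n", "\n", ". ", " "];

// Split on `sep` but keep it attached to the preceding part,
// so nothing is lost when neighbouring parts are merged again.
function splitKeep(text: string, sep: string): string[] {
  const parts = text.split(sep);
  return parts
    .map((part, i) => (i < parts.length - 1 ? part + sep : part))
    .filter((part) => part.length > 0);
}

function splitRecursive(text: string, maxLen: number, seps: string[] = SEPARATORS): string[] {
  if (maxLen <= 0) throw new Error("maxLen must be positive");
  if (text.length <= maxLen) {
    const trimmed = text.trim();
    return trimmed ? [trimmed] : [];
  }
  if (seps.length === 0) {
    // No separators left: fall back to a hard cut.
    const cut: string[] = [];
    for (let i = 0; i < text.length; i += maxLen) cut.push(text.slice(i, i + maxLen));
    return cut;
  }
  const [sep, ...finer] = seps;
  const chunks: string[] = [];
  let current = "";
  for (const part of splitKeep(text, sep)) {
    if ((current + part).length <= maxLen) {
      current += part; // keep merging small neighbours
    } else {
      if (current) chunks.push(...splitRecursive(current, maxLen, finer));
      current = part; // may still be too long; handled by the recursion
    }
  }
  if (current) chunks.push(...splitRecursive(current, maxLen, finer));
  return chunks;
}

// Prefix each chunk with the last `overlap` characters of the previous chunk.
function withOverlap(chunks: string[], overlap: number): string[] {
  return chunks.map((chunk, i) =>
    i === 0 || overlap <= 0 ? chunk : (chunks[i - 1].slice(-overlap) + " " + chunk).trim()
  );
}

const sampleDoc = "Alpha one. Alpha two.\n\nBeta one. Beta two.";
const byParagraph = splitRecursive(sampleDoc, 25); // splits on the blank line
const bySentence = splitRecursive(sampleDoc, 12); // falls back to sentences
const overlapped = withOverlap(byParagraph, 5);
```

With a limit of 25 characters the sample splits cleanly at the paragraph break; with a limit of 12 the splitter falls through to the sentence separator. Note that the overlap pass lets chunks exceed `maxLen` by up to the overlap length, a trade-off a production splitter would handle during merging instead.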

However, the vanguard of document processing relies on semantic chunking. This is an advanced, highly precise strategy where mathematical embedding models are actually deployed during the chunking phase itself. The system calculates the semantic similarity between consecutive sentences as it reads the document. It dynamically determines chunk boundaries by identifying points where the topic naturally shifts, ensuring that each generated chunk contains a perfectly encapsulated, unified idea. While this method requires significantly more computational power and increases processing time during the ingestion phase, it drastically improves the precision of the final search engine. When architecting complex solutions at Tool1.app, we consistently observe that tailoring the exact chunking strategy to the specific nature of the client’s documents yields the most profound, measurable improvements in search accuracy and user satisfaction.
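A rough sketch of the boundary detection follows. It assumes the sentences have already been embedded (one vector per sentence) and uses a fixed similarity threshold; real implementations often derive the threshold from the distribution of similarities in the document. The function names and the toy two-dimensional vectors are illustrative assumptions.

```typescript
function cosine(a: number[], b: number[]): number {
  let dot = 0, normA = 0, normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  const denom = Math.sqrt(normA) * Math.sqrt(normB);
  return denom === 0 ? 0 : dot / denom;
}

// Group consecutive sentences; start a new chunk whenever the similarity
// to the previous sentence drops below `threshold`.
function semanticChunks(sentences: string[], vectors: number[][], threshold: number): string[] {
  if (sentences.length !== vectors.length) {
    throw new Error("one vector per sentence is required");
  }
  const groups: string[][] = [];
  sentences.forEach((sentence, i) => {
    const startsNew = i === 0 || cosine(vectors[i - 1], vectors[i]) < threshold;
    if (startsNew) groups.push([sentence]);
    else groups[groups.length - 1].push(sentence);
  });
  return groups.map((group) => group.join(" "));
}

// Toy vectors: the first two sentences point the same way, the third does not.
const topicChunks = semanticChunks(
  ["Payroll runs monthly.", "Salaries are paid on the 25th.", "The VPN requires 2FA."],
  [[1, 0], [0.9, 0.1], [0, 1]],
  0.5
);
```

Here the two payroll sentences stay together and the VPN sentence starts a new chunk, because its vector diverges sharply from its predecessor.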

Vector Embeddings and the Logic of Semantic Search

With the unstructured text cleanly parsed and intelligently chunked, the next fundamental step is generating vector embeddings. An embedding model is a specialized neural network that takes a chunk of text and translates its underlying meaning into a high-dimensional array of floating-point numbers, commonly referred to as a vector.

To visualize this concept, imagine a vast, multi-dimensional graph. An axis might represent the concept of finance, another technology, and another human resources. A paragraph detailing a new corporate payroll software would be plotted high on both the finance and technology axes. Modern, enterprise-grade embedding models, such as those provided by industry leaders or powerful open-source alternatives like the all-MiniLM-L6-v2 model, do not plot text across merely three dimensions. They map language across hundreds or even thousands of dimensions, capturing incredibly nuanced linguistic, tonal, and semantic relationships that traditional computing cannot grasp.

When an employee searches for a specific procedure, such as requesting time off for a medical procedure, the query is converted into a vector using the same embedding model that produced the document vectors. The backend then measures how close the query vector is to the stored document vectors, typically by cosine similarity or dot product, and retrieves the closest chunks as the most relevant answers. Because the comparison operates on meaning rather than spelling, a chunk can match even when it shares no exact keywords with the query. The matching is not infallible, however: exact identifiers such as product codes or names are often better served by keyword matching, which is why hybrid search exists.
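The distance calculation can be illustrated with a brute-force search over a handful of vectors. This sketch uses cosine similarity and the three toy axes from the analogy above (finance, technology, human resources); the chunk contents and vector values are invented for illustration, and a real vector database would use an approximate index instead of scanning every record.

```typescript
interface StoredChunk {
  id: string;
  text: string;
  vector: number[];
}

function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("dimension mismatch");
  let dot = 0, normA = 0, normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  const denom = Math.sqrt(normA) * Math.sqrt(normB);
  return denom === 0 ? 0 : dot / denom;
}

// Score every chunk against the query and return the k most similar.
function topK(query: number[], chunks: StoredChunk[], k: number): StoredChunk[] {
  return chunks
    .map((chunk) => ({ chunk, score: cosineSimilarity(query, chunk.vector) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((scored) => scored.chunk);
}

// Axes: [finance, technology, human resources]
const corpus: StoredChunk[] = [
  { id: "payroll", text: "New payroll software rollout", vector: [0.8, 0.7, 0.1] },
  { id: "leave", text: "Medical leave policy", vector: [0.1, 0.0, 0.9] },
];
const timeOffQuery = [0.0, 0.1, 0.9]; // hypothetical embedding of "time off for surgery"
const bestMatch = topK(timeOffQuery, corpus, 1)[0];
```

The query shares no keywords with "Medical leave policy", yet that chunk ranks first because the two vectors point in nearly the same direction.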

Strategic Selection of Vector Database Infrastructure

A vector database is specialized infrastructure designed to store, manage, and rapidly query these high-dimensional floating-point arrays. Standard relational databases are not, on their own, optimized for computing similarity across millions of dense arrays in milliseconds. Vector databases rely on approximate nearest neighbor indexes, most notably Hierarchical Navigable Small World (HNSW) graphs, which trade a small amount of recall for very large gains in query speed.

The Node.js ecosystem is richly supported by various commercial and open-source vector database providers. Selecting the right infrastructure depends heavily on an enterprise’s specific scale, budgetary constraints, data privacy requirements, and long-term deployment preferences.

| Vector Database Platform | Primary Deployment Model | Architectural Strengths | Optimal Enterprise Use Case |
| --- | --- | --- | --- |
| Pinecone | Fully Managed Serverless Cloud | Requires zero operational overhead, features an exceptional developer experience, and offers massive, instant scalability without manual sharding. | Ideal for rapid prototyping and modern enterprise deployments where fully managed, low-maintenance infrastructure is highly preferred over self-hosting. |
| Milvus | Open-Source or Managed Cloud | Highly cost-effective at massive scale, fully capable of handling billions of high-dimensional vectors, and highly tunable for specific algorithmic performance. | Suited for massive enterprise knowledge bases and deep tech companies requiring highly customized, scalable, and potentially self-hosted architecture. |
| Weaviate | Open-Source or Managed Cloud | Provides exceptional hybrid search capabilities out of the box, elegantly combining semantic vector search with traditional keyword-based scoring mechanisms. | Perfect for complex applications requiring highly nuanced filtering and precise, exact keyword matching deployed alongside advanced semantic understanding. |
| pgvector | Native PostgreSQL Extension | Offers a unified data model, allowing developers to keep traditional relational data and new vector data within the exact same database ecosystem. | Best for engineering teams already heavily invested in PostgreSQL infrastructure looking to incrementally add artificial intelligence features without adopting entirely new systems. |

For the practical implementation in this tutorial, we will utilize Pinecone due to its seamless integration with the Node.js environment and its highly accessible serverless tier, which allows developers to initiate a robust project without incurring immediate, heavy infrastructure costs.

Evaluating Orchestration Frameworks in Node.js

Building a Retrieval-Augmented Generation system requires stringing together multiple distinct operations: loading documents, chunking text, calling embedding APIs, connecting to databases, formatting complex prompts, and finally invoking the language model. Writing the connective code for all of this from scratch is highly inefficient and prone to errors. Orchestration frameworks abstract this complexity, providing modular, reusable components.

In the JavaScript and Node.js ecosystem, developers primarily choose between two dominant orchestration frameworks: LangChain.js and LlamaIndex.TS. Both frameworks are exceptionally powerful, but they approach the architecture from slightly different philosophies.

LangChain.js is a sprawling, highly modular framework designed to build a vast array of artificial intelligence applications. It excels in chaining multiple different tools, models, and agents together into complex, multi-step workflows. Its flexibility allows developers to seamlessly swap out different language models or vector databases with minimal code refactoring. If your ultimate goal is to build an autonomous agent that can search a vector database, query an external API, and then send an email, LangChain.js is the superior choice.

Conversely, LlamaIndex.TS was historically laser-focused specifically on data ingestion and retrieval. It provides incredibly sophisticated abstractions for indexing complex data structures and performing advanced querying techniques, such as hierarchical node parsing or document-summary indexing. While it has recently expanded its agentic capabilities, its core strength remains in highly optimized data retrieval.

In our custom software deployments at Tool1.app, we frequently leverage LangChain.js for our Node.js applications due to its unparalleled flexibility and massive community ecosystem, which provides reliable integrations for virtually every enterprise software tool on the market. Consequently, this tutorial will utilize LangChain.js as the primary orchestration engine.

Step-by-Step Implementation: The RAG Node.js Tutorial

With the extensive theoretical framework established, we can transition to constructing the actual application. This section provides a comprehensive, technically rigorous implementation guide for building the core ingestion and retrieval pipelines.

Initializing the Project Environment and Dependencies

First, it is necessary to establish a clean development environment. Open a terminal interface, create a dedicated project directory, and initialize a new Node.js package. It is critical to ensure that all environment variables and API keys are managed securely and never committed to public version control repositories.

Ensure your machine has Node.js installed, then initialize the project with npm init -y and install the dependencies with npm install.

The required packages are the core LangChain library (langchain), the OpenAI and Pinecone integration packages (@langchain/openai and @langchain/pinecone), the official Pinecone database client (@pinecone-database/pinecone), the dotenv package for environment management, and the pdf-parse utility for basic document extraction. After installation, create a .env file in the root directory of your project to store your API credentials, and add that file to .gitignore. You will need active API keys from both OpenAI and Pinecone, along with the name of the index you created in the Pinecone dashboard.
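Failing fast on missing credentials saves debugging time later. The sketch below validates the three values described above; the variable names OPENAI_API_KEY, PINECONE_API_KEY, and PINECONE_INDEX are assumptions chosen for this example, so use whatever names your .env file actually defines.

```typescript
interface RagConfig {
  openAiApiKey: string;
  pineconeApiKey: string;
  pineconeIndex: string;
}

// Reads required settings from an environment-like object and throws
// a descriptive error if any of them is missing or empty.
function loadConfig(env: Record<string, string | undefined>): RagConfig {
  const required = (name: string): string => {
    const value = env[name];
    if (!value) throw new Error(`Missing environment variable: ${name}`);
    return value;
  };
  return {
    openAiApiKey: required("OPENAI_API_KEY"), // assumed variable name
    pineconeApiKey: required("PINECONE_API_KEY"), // assumed variable name
    pineconeIndex: required("PINECONE_INDEX"), // assumed variable name
  };
}
```

In the application entry point, load the .env file first (for example with `import "dotenv/config"`) and then call `loadConfig(process.env)`. Taking the environment as a parameter keeps the function easy to test.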

Constructing the Data Ingestion Pipeline

The ingestion pipeline is the critical backend process responsible for transforming raw corporate documents into searchable mathematical vectors. This script is typically run asynchronously, completely separate from the user-facing application, often triggered automatically via a webhook whenever a new file is uploaded to a secure corporate server or a designated cloud storage bucket.

The ingestion process begins by using a document loader to read the unstructured file from the local file system. Once the raw text is extracted into memory, it is passed through a configured text splitter. A recursive character text splitter respects the natural paragraph and sentence boundaries of the original document. A chunk size of approximately five hundred characters with an overlap of roughly sixty characters is a reasonable starting point that helps context carry over from one chunk to the next. Note that LangChain’s recursive splitter measures chunk size in characters by default, not tokens, so these values produce considerably smaller chunks than the five-hundred-token baseline discussed earlier. If you want to match that baseline, scale the values up (English text averages roughly four characters per token) or use a token-based length function.

After the document is successfully split into an array of searchable chunks, the script initializes a secure connection to the vector database. The orchestration framework simplifies the subsequent step immensely; it automatically iterates over the array of chunks, seamlessly transmits each chunk to the embedding API to generate the corresponding vector, and finally pushes both the vector and the original text payload into the remote vector database index.

Executing this script populates the database, effectively turning a static, unsearchable document into a live, dynamic knowledge base ready for instantaneous querying.
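The shape of the pipeline can be sketched independently of any particular framework. In the code below, the `Embedder` and `VectorIndex` types are stand-ins invented for this example: in a real project the embedding call would go to your embedding provider and `upsert` to your vector database client, typically through the LangChain integrations. The batch size and the ID scheme are also assumptions.

```typescript
interface VectorRecord {
  id: string;
  values: number[];
  metadata: { source: string; text: string };
}

// Stand-in types: any embedding API and any vector store can fill these roles.
type Embedder = (texts: string[]) => Promise<number[][]>;
interface VectorIndex {
  upsert(records: VectorRecord[]): Promise<void>;
}

// Embed chunks in batches and write vector plus original text to the index.
async function ingestDocument(
  source: string,
  chunks: string[],
  embed: Embedder,
  index: VectorIndex,
  batchSize = 100
): Promise<number> {
  let written = 0;
  for (let start = 0; start < chunks.length; start += batchSize) {
    const batch = chunks.slice(start, start + batchSize);
    const vectors = await embed(batch);
    const records = batch.map((text, i) => ({
      id: `${source}#${start + i}`, // stable ID: re-ingesting overwrites, not duplicates
      values: vectors[i],
      metadata: { source, text },
    }));
    await index.upsert(records);
    written += records.length;
  }
  return written;
}
```

Storing the source file name in the metadata is what later makes citations possible, and deriving IDs from the source and chunk position means that re-running the script for an updated document replaces its old vectors rather than piling up duplicates.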

Developing the Retrieval and Generation Chain

With the database fully populated and indexed, the next phase involves building the active application logic that accepts a user’s natural language question, retrieves the highly relevant context, and generates the final, synthesized answer.

The retrieval script begins by reconnecting to the existing vector index and initializing the large language model. For enterprise applications that demand high-quality reasoning and precise factual synthesis, a capable model such as GPT-4 is a reasonable choice. Set the model’s temperature parameter to zero. This makes the output as repeatable as the provider allows and discourages creative embellishment. It does not by itself prevent hallucination; that is the job of the prompt and the retrieved context.

The core of the security and accuracy in this system relies on the construction of a strict prompt template. The prompt must explicitly command the language model to act as an expert internal assistant and to formulate its answer utilizing only the context retrieved from the database. Furthermore, it must include a strict failsafe instruction: if the retrieved context does not contain the necessary information to answer the user’s question, the model must explicitly state its inability to answer rather than attempting to guess or hallucinate an unverified response.
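One possible wording of such a template is sketched below. The exact phrasing, the numbered-excerpt format, and the fallback sentence are assumptions for illustration; expect to tune them against your own documents and model.

```typescript
const FALLBACK_ANSWER = "I cannot answer this from the available documents.";

// Builds a prompt that restricts the model to the retrieved excerpts
// and gives it an explicit way out when the excerpts are insufficient.
function buildGroundedPrompt(question: string, contextChunks: string[]): string {
  const context = contextChunks
    .map((chunk, i) => `[${i + 1}] ${chunk}`) // numbered so answers can cite excerpts
    .join("\n\n");
  return [
    "You are an expert internal assistant.",
    "Answer the question using ONLY the context below.",
    "Cite the excerpt numbers you relied on.",
    `If the context does not contain the answer, reply exactly: "${FALLBACK_ANSWER}"`,
    "",
    "Context:",
    context,
    "",
    `Question: ${question}`,
  ].join("\n");
}
```

Using a fixed fallback sentence has a practical benefit: the application can detect it with a simple string comparison and, for instance, offer to route the question to a human instead.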

The orchestration framework is then used to construct a sophisticated retrieval chain. This chain links the language model, the strict prompt template, and the vector store retriever together. The retriever is configured to fetch a specific number of the most semantically similar document chunks—typically the top four or five results—to ensure the model has ample context without exceeding token limitations.

When a query is executed, the chain automatically converts the user’s string into a vector, queries the database, retrieves the textual context, injects that context into the prompt template alongside the user’s original question, and invokes the language model to generate the final, grounded response.
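Stripped of framework details, the chain is a short sequence of steps. The sketch below takes the retriever and the language model as plain functions so the flow is visible; the `Retriever` and `Llm` types and the inline prompt are assumptions for this example and do not mirror LangChain’s own interfaces.

```typescript
type Retriever = (question: string, k: number) => Promise<string[]>;
type Llm = (prompt: string) => Promise<string>;

const NO_CONTEXT_ANSWER = "I cannot answer this from the available documents.";

// Retrieve the top-k chunks, inject them into the prompt, and ask the model.
async function answerQuestion(
  question: string,
  retrieve: Retriever,
  llm: Llm,
  k = 4
): Promise<{ answer: string; sources: string[] }> {
  const sources = await retrieve(question, k);
  if (sources.length === 0) {
    // Nothing relevant was found: skip the model call entirely.
    return { answer: NO_CONTEXT_ANSWER, sources };
  }
  const prompt =
    "Answer using only the context below.\n\n" +
    `Context:\n${sources.join("\n---\n")}\n\n` +
    `Question: ${question}`;
  return { answer: await llm(prompt), sources };
}
```

Returning the retrieved chunks alongside the answer lets the user interface display them as citations. Short-circuiting on an empty result is a design choice: it saves a model call and removes any opportunity for the model to improvise.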

Ensuring Data Privacy: Local RAG and On-Premise Execution

For many European businesses, transmitting highly sensitive, proprietary corporate data to external cloud APIs—even those with robust enterprise data agreements—remains a fundamental violation of internal security protocols or strict regulatory mandates. In these highly secure environments, the standard architecture relying on external providers must be adapted to ensure absolute data sovereignty.

To mitigate data transmission risks entirely, enterprises are aggressively pivoting toward localized, on-premise deployments. Instead of sending sensitive internal documents to external servers for embedding and synthesis, companies can run powerful open-weights models completely locally on their own dedicated hardware infrastructure.

Using the Node.js ecosystem in tandem with local model management tools like Ollama, developers can orchestrate an entirely private Retrieval-Augmented Generation system. Under this paradigm, the document parsing, the complex vector embedding generation using local models like Nomic-Embed-Text, and the final large language model reasoning all occur exclusively within the secure, air-gapped perimeter of the company intranet. The vector database itself can be hosted locally utilizing open-source solutions like Milvus or an on-premise PostgreSQL instance augmented with the pgvector extension.
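As a rough illustration, the function below requests embeddings from a locally running Ollama server over its REST API. It assumes Ollama is listening on its default port 11434, that the nomic-embed-text model has already been pulled, and that the server exposes the `/api/embed` endpoint; check these against the Ollama version you deploy. The HTTP function is passed in as a parameter, so the `HttpPost` type is a stand-in defined for this example.

```typescript
interface HttpResponse {
  ok: boolean;
  status: number;
  json(): Promise<unknown>;
}
type HttpPost = (
  url: string,
  init: { method: string; headers: Record<string, string>; body: string }
) => Promise<HttpResponse>;

// Requests one embedding per input text from a local Ollama server.
async function embedLocally(
  texts: string[],
  post: HttpPost,
  baseUrl = "http://localhost:11434", // assumed default Ollama address
  model = "nomic-embed-text"
): Promise<number[][]> {
  const res = await post(`${baseUrl}/api/embed`, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ model, input: texts }),
  });
  if (!res.ok) throw new Error(`Ollama request failed with status ${res.status}`);
  const data = (await res.json()) as { embeddings: number[][] };
  return data.embeddings;
}
```

On Node.js 18 or later, the built-in `fetch` can be passed as the `post` argument: `await embedLocally(chunks, fetch)`. No text leaves the machine, because the request goes to localhost.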

While this privacy-first approach typically requires upfront investment in GPU hardware to keep inference latency low, it removes third-party APIs as a data leakage path. It also simplifies compliance audits, because proprietary algorithms, classified financial data, and sensitive employee records never traverse the public internet.

Advanced Paradigms for 2026: The Shift to Agentic Workflows

Implementing a standard, linear Retrieval-Augmented Generation pipeline is merely the starting point in the modern AI landscape. As enterprise technology has rapidly matured, the inherent limitations of standard workflows—the simple, straight line from user query to single-pass database retrieval to final generation—have become increasingly apparent. If an employee asks a highly complex, multi-part, or vaguely worded question, a single retrieval step will frequently miss critical, interconnected information spanning multiple distinct documents.

The next evolution of this enterprise architecture is known as Agentic RAG. In an agentic workflow, the large language model is no longer treated merely as a passive, final synthesizer of provided text. Instead, it is elevated to act as an autonomous reasoning engine that actively dictates and manages the flow of the entire application.

Instead of blindly accepting the very first set of retrieved documents as the absolute and final truth, an agentic system evaluates its own retrieved context before answering the user. If the retrieved documents are deemed insufficient, contradictory, or irrelevant by the reasoning engine, the agent autonomously formulates a new, refined search query and triggers a multi-hop search across entirely different data silos to actively fill the knowledge gap. It can be equipped with various tools, allowing it to decide whether to search the vector database for a policy document, query an SQL database for real-time inventory levels, or trigger an internal API to check the status of a specific support ticket.

Implementing a production-ready agentic system requires the careful, fault-tolerant orchestration of dynamic tool-calling, continuous state memory management, and rigorous programmatic guardrails to prevent infinite reasoning loops. This intense architectural complexity is precisely why leading organizations consistently rely on Tool1.app to bridge the massive gap between experimental, local scripts and highly robust, fault-tolerant enterprise software ecosystems capable of driving real business value. Moving from a static, single-pass pipeline to an asynchronous, self-correcting knowledge runtime ensures maximum factual integrity and unparalleled user trust.
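The self-correcting loop and its guardrail can be sketched as follows. The four tool functions are placeholders supplied by the caller (in practice each would wrap a retriever or a language model call), and the hop limit of three is an arbitrary assumption.

```typescript
interface AgentTools {
  retrieve(query: string): Promise<string[]>;
  isSufficient(question: string, context: string[]): Promise<boolean>;
  refineQuery(question: string, context: string[]): Promise<string>;
  answer(question: string, context: string[]): Promise<string>;
}

// Retrieve, evaluate, and refine until the context is judged sufficient
// or the hop limit is reached. The limit prevents infinite reasoning loops.
async function agenticAnswer(
  question: string,
  tools: AgentTools,
  maxHops = 3
): Promise<{ answer: string; hops: number }> {
  const context: string[] = [];
  let query = question;
  for (let hop = 1; hop <= maxHops; hop++) {
    context.push(...(await tools.retrieve(query)));
    if (await tools.isSufficient(question, context)) {
      return { answer: await tools.answer(question, context), hops: hop };
    }
    query = await tools.refineQuery(question, context); // try a different angle
  }
  return { answer: "I could not find enough information to answer.", hops: maxHops };
}
```

The hard cap on hops is the simplest possible guardrail. A production system would usually add timeouts, cost budgets, and logging of each intermediate query for auditability.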

Navigating European Regulations: GDPR and the Artificial Intelligence Act

For European businesses, immense technological capability must always be carefully balanced against strict, legally binding regulatory frameworks. Building an internal knowledge base inherently involves processing vast amounts of proprietary data, which almost inevitably includes the Personally Identifiable Information of current employees, past contractors, or active clients.

The General Data Protection Regulation intersects heavily with the implementation and maintenance of artificial intelligence systems. Two specific principles must be rigorously managed by engineering teams. First, the principle of data minimization dictates that AI systems should not indiscriminately ingest entire, unfiltered human resources databases if the stated goal of the application is merely to answer general corporate policy questions. Prior to the embedding and indexing phase, unstructured data pipelines must utilize sanitization scripts to actively strip out unnecessary personal identifiers, ensuring the system only learns the policy, not the individuals involved.
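A sanitization pass can start as simply as the sketch below, which masks email addresses and phone-like number sequences before chunking. The two regular expressions are deliberately naive assumptions: they will miss names, addresses, and national ID formats, and the phone pattern will also catch some dates and reference numbers. Treat this as a first filter, not as a compliance control.

```typescript
// Naive patterns, for illustration only.
const EMAIL_PATTERN = /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g;
const PHONE_PATTERN = /\+?\d[\d\s().-]{7,}\d/g;

// Replace obvious personal identifiers with placeholders before embedding.
function redactPii(text: string): string {
  return text.replace(EMAIL_PATTERN, "[EMAIL]").replace(PHONE_PATTERN, "[PHONE]");
}

const redacted = redactPii(
  "Contact anna.meyer@example.com or +49 30 1234567 for approval."
);
// redacted === "Contact [EMAIL] or [PHONE] for approval."
```

For production use, dedicated PII detection tooling with named-entity recognition is a more reliable choice than hand-written patterns.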

Second, the right to erasure (the “right to be forgotten”) presents a particular technical challenge for vector databases. If an employee leaves the organization and requests the deletion of their personal data, removing it from a relational database is a simple SQL command. In a vector index, each chunk is stored as an opaque array of numbers, so there is no practical way to inspect a vector and tell whose data it encodes. Finding and deleting the right vectors therefore depends on metadata recorded during the very first ingestion phase, such as the source document and identifiers of the people it concerns. Without that tagging, the only safe option may be to rebuild the index.
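The idea can be shown with a small in-memory stand-in for a vector index. The `subjectIds` metadata field and the `deleteBySubject` method are hypothetical names invented for this sketch; real vector databases offer their own mechanisms for deleting by ID or by metadata filter, and their capabilities differ.

```typescript
interface TaggedVector {
  id: string;
  values: number[];
  metadata: { source: string; subjectIds: string[] };
}

// In-memory stand-in for a vector index, used only to show the principle.
class InMemoryIndex {
  private records = new Map<string, TaggedVector>();

  upsert(record: TaggedVector): void {
    this.records.set(record.id, record);
  }

  // Delete every vector whose metadata references the given data subject.
  deleteBySubject(subjectId: string): number {
    let removed = 0;
    this.records.forEach((record, id) => {
      if (record.metadata.subjectIds.includes(subjectId)) {
        this.records.delete(id);
        removed++;
      }
    });
    return removed;
  }

  get size(): number {
    return this.records.size;
  }
}

const erasureIndex = new InMemoryIndex();
erasureIndex.upsert({
  id: "review-2023.pdf#0",
  values: [0.1, 0.9],
  metadata: { source: "review-2023.pdf", subjectIds: ["emp-42"] },
});
erasureIndex.upsert({
  id: "travel-policy.pdf#0",
  values: [0.7, 0.2],
  metadata: { source: "travel-policy.pdf", subjectIds: [] },
});
const removedCount = erasureIndex.deleteBySubject("emp-42");
```

After the call, only the travel policy chunk remains. The erasure request is answered without touching unrelated vectors, which is only possible because the subject identifier was attached when the chunk was ingested.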

Furthermore, the European Union Artificial Intelligence Act entered into force on 1 August 2024, and most of its provisions are scheduled to apply from 2 August 2026. The framework uses a graduated, risk-based structure to regulate technology. While internal corporate search engines generally do not fall under the “prohibited” practices, such as social scoring or certain forms of biometric surveillance, they may be classified under tiers requiring transparency, ongoing human oversight, and verifiable data governance standards.

Corporate legal teams and regulatory bodies will increasingly expect enterprises to produce thorough, auditable documentation, including comprehensive Technical Documentation Files and rigorous Data Protection Impact Assessments, to definitively prove that the deployed artificial intelligence system is structurally secure, free from systemic bias, and fundamentally respects the privacy rights of all individuals represented within the training and retrieval data.

Conclusion: Activating Your Corporate Intelligence

The strategic transition from managing scattered, isolated files to operating a fully integrated, conversational internal knowledge base represents one of the most significant and financially rewarding operational upgrades available to the modern enterprise. By intelligently leveraging the power of Retrieval-Augmented Generation, the flexibility of the Node.js ecosystem, and the immense speed of specialized vector databases, organizations can empower their entire workforce with instant, highly accurate, and deeply context-aware answers, ultimately reclaiming millions of euros in historically lost productivity and operational friction.

However, moving from a basic, conceptual tutorial script to a secure, infinitely scalable, and agentic enterprise platform requires deep technical expertise and rigorous architectural planning. The subtle nuances of layout-aware document parsing, the mathematical optimization of semantic chunking strategies, and the strict adherence to evolving European regulatory compliance demand a highly professional, experienced approach to software engineering.

Unlock the immense, untapped value hidden within your company’s internal data silos. Tool1.app can rapidly design, architect, and build custom RAG search engines perfectly tailored to the unique workflows and security requirements of your team. Whether your organization requires a seamlessly integrated solution deployed globally to the cloud, or a completely private, air-gapped artificial intelligence ecosystem hosted on-premise to ensure maximum data sovereignty, our dedicated experts are ready to turn your static, siloed documents into a dynamic, revenue-driving intelligence engine. Contact us today to discuss your next big operational innovation.
