Automating Multilingual SEO with Claude and Python: A Step-by-Step Guide

Table of Contents

- The Financial Reality of Global Expansion

- Why Traditional SEO Tools Fail in Global Markets

- Evaluating the Engine: Why Claude Dominates SEO Localization

- Architecting the Python Automation Pipeline

- Phase One: Secure Data Extraction via SQLAlchemy

- Phase Two: Advanced Prompt Engineering for SEO Context

- Phase Three: Asynchronous Database Injection

- Automating Technical SEO: Hreflang Matrices and Multilingual Schema

- Enterprise Scaling: Slashing API Costs with Advanced Features

- Ready to Conquer Global Search?

Expanding into global markets is one of the most reliable pathways to exponential business growth, yet it remains one of the most difficult to execute effectively. For years, digital businesses and enterprise platforms have been constrained by the exorbitant costs and logistical friction associated with traditional translation workflows. Attempting to localize a comprehensive web application, a sprawling e-commerce catalog, or an extensive technical blog meant enduring endless email chains, spreadsheet management, and vendor minimums.

Today, the landscape has fundamentally shifted. The emergence of highly capable Large Language Models (LLMs) allows businesses to replace manual localization friction with programmatic data pipelines. AI automated SEO translation is no longer a theoretical concept; it is a highly practical, deployable engineering solution that provides massive return on investment for international businesses. By treating translation as a continuous data engineering workflow rather than a disconnected vendor service, organizations can scale their search engine visibility across dozens of languages simultaneously.

The Financial Reality of Global Expansion

To appreciate the necessity of automation, one must first look at the economics of traditional localization. Using professional human translators and post-editors typically costs between €0.04 and €0.08 per word. For a mid-sized enterprise platform featuring 100,000 words of core interface text, documentation, and foundational marketing content, translating into just five new languages requires an upfront budget of €20,000 to €40,000.

Furthermore, this is not a one-time cost. Software development is iterative; new features, blog posts, and landing pages are published weekly. Maintaining parity across five languages means incurring a persistent, high-cost operational tax on every single piece of content your team produces.

By transitioning to an AI automated SEO translation workflow built on modern APIs, specifically Anthropic’s Claude Sonnet 4.5, the financial model is inverted. Instead of paying per word, you pay per token processed. Generating a contextually aware translation with Claude costs a fraction of a cent per word at typical API rates. For the raw translation step, that is a cost reduction of well over 95% compared with the per-word rates above, which turns global expansion from a prohibitive luxury into a baseline operational standard.
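The comparison can be made concrete with a back-of-the-envelope calculation. The sketch below reuses the 100,000-word, five-language scenario and the human per-word rates from this section. Everything else is an assumption chosen for illustration: the tokens-per-word ratio, the prompt overhead, and the per-million-token prices. It also ignores currency conversion and any human review you keep in the loop.

```python
# Back-of-the-envelope comparison. Every constant marked "assumed" is an
# illustration value; substitute your vendor quotes and current API pricing.
WORDS = 100_000
LANGUAGES = 5
HUMAN_RATE_PER_WORD = (0.04, 0.08)   # low and high quotes from the text above

TOKENS_PER_WORD = 2.0                # assumed average; varies by language
PROMPT_OVERHEAD = 1.5                # assumed: instructions add 50% to input
INPUT_PRICE_PER_MTOK = 3.00          # assumed price per million input tokens
OUTPUT_PRICE_PER_MTOK = 15.00        # assumed price per million output tokens


def human_cost(words, languages, rate_per_word):
    """Cost of translating every word into every language at a per-word rate."""
    return words * languages * rate_per_word


def api_cost(words, languages):
    """Token-based cost: source text plus prompt in, translated text out."""
    input_tokens = words * TOKENS_PER_WORD * PROMPT_OVERHEAD * languages
    output_tokens = words * TOKENS_PER_WORD * languages
    return (input_tokens * INPUT_PRICE_PER_MTOK
            + output_tokens * OUTPUT_PRICE_PER_MTOK) / 1_000_000


low, high = (human_cost(WORDS, LANGUAGES, r) for r in HUMAN_RATE_PER_WORD)
api = api_cost(WORDS, LANGUAGES)
print(f"Human translation: {low:,.0f} to {high:,.0f}")
print(f"API estimate:      {api:,.2f}")
print(f"Reduction vs. low human quote: {1 - api / low:.1%}")
```

Even if the assumed token ratio or prices are off by a factor of ten, the API estimate stays far below the low human quote, which is why the exact figures matter less than the order of magnitude.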

Why Traditional SEO Tools Fail in Global Markets

Many marketing teams attempt to solve the localization problem by relying on legacy SEO platforms. Historically, a specialist would export an English keyword list, run it through a basic translation API, and map those literal translations to target pages. In the modern search ecosystem, this approach is disastrous.

Search engines have evolved significantly. Queries submitted to AI-powered search systems and generative answer engines are often full sentences, by some estimates averaging over twenty words, compared to the short fragments typical of traditional search a decade ago. Search algorithms now prioritize semantic understanding and user intent over literal keyword matching.

When expanding globally, a keyword phrase that signals high commercial intent in the United Kingdom might translate perfectly into Spanish, yet carry absolutely zero commercial context for a user in Madrid. Traditional keyword research tools frequently struggle with error rates exceeding 50% when estimating search volumes for niche, low-traffic B2B markets in secondary languages.

This is exactly where AI automated SEO translation diverges from basic programmatic translation. Advanced LLMs do not simply swap vocabulary; they perform real-time cultural adaptation. An automated Python pipeline can instruct the AI to analyze the entity relationships within your content, adapt idioms to fit local market nuances, and generate highly personalized meta tags that traditional keyword tools simply cannot conceptualize. At Tool1.app, we consistently see that clients who shift from literal translation to AI-driven semantic adaptation experience drastically faster indexing and higher engagement rates in foreign markets.

Evaluating the Engine: Why Claude Dominates SEO Localization

When building a mission-critical automation pipeline that interacts directly with your production database, the choice of the underlying AI model dictates the stability and safety of the entire system. While there are many formidable models on the market, Anthropic’s Claude Sonnet 4.5 is well suited to technical SEO automation and Python code generation.

The primary requirement for database automation is structural adherence. The LLM must return data in a strict, parsable format like JSON. If the model adds conversational text outside the requested JSON object, a naive Python parser will raise an error and the pipeline will stall. Claude shows strong structural adherence and instruction following, and scores well on complex software engineering benchmarks. In practice this means it reliably formats HTML meta tags, preserves nested schema markup, and returns valid data structures, although the pipeline should still validate every response before writing to the database.
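Because a single stray sentence around the JSON is enough to break a strict parser, a defensive extraction step is a cheap safeguard. The sketch below is one possible approach, not part of any SDK: the helper name `extract_json_object` is hypothetical, and it relies only on the standard library's `json.JSONDecoder.raw_decode`.

```python
import json


def extract_json_object(raw_text):
    """Return the first parsable JSON object embedded in raw_text, or None.

    Tolerates leading or trailing chatter and markdown fences by scanning
    for each "{" and attempting to decode from that position.
    """
    decoder = json.JSONDecoder()
    start = raw_text.find("{")
    while start != -1:
        try:
            # raw_decode parses one JSON value starting at index `start`
            # and ignores whatever follows it.
            obj, _end = decoder.raw_decode(raw_text, start)
            return obj
        except json.JSONDecodeError:
            start = raw_text.find("{", start + 1)
    return None
```

A clean response parses exactly as it would with `json.loads`, so the helper only changes behavior in the failure cases.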

Additionally, SEO translation requires deep, multi-layered reasoning. Claude exhibits robust multilingual capabilities alongside graduate-level analytical reasoning. This allows the model to balance multiple conflicting constraints simultaneously. For example, Claude can translate a title tag, adapt the cultural phrasing for the German market, work in a localized target keyword, and keep the entire string under the roughly 60-character length at which search engines tend to truncate titles. Balancing all of these at once is a task on which weaker models often fail or cut essential information.

Finally, enterprise websites possess vast amounts of context. To ensure the AI maintains a consistent brand voice across thousands of pages, the automation pipeline must feed the model comprehensive brand glossaries and existing translation memories. Claude offers a large context window: 200,000 tokens as standard, and up to one million tokens on supported models and usage tiers. This allows your Python application to inject hundreds of pages of SEO guidelines directly into the prompt, so the model has a broad view of your brand terminology and site structure before generating a single localized word.

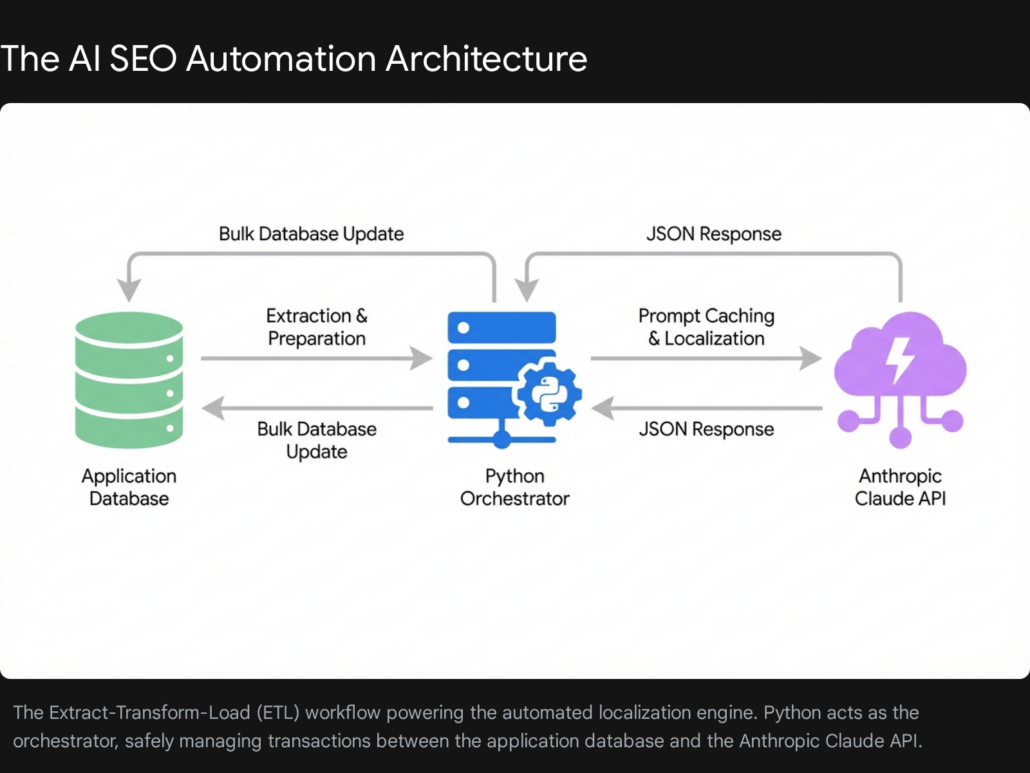

Architecting the Python Automation Pipeline

Building a resilient AI automated SEO translation system requires treating the process as a rigorous Extract, Transform, and Load (ETL) operation. The architecture consists of three distinct phases managed entirely by Python.

First is the extraction phase, pulling the source content such as meta titles, descriptions, and URL slugs directly from your application’s database. Second is the transformation phase, where the data is passed to the Claude API with highly specific prompt engineering to localize the content while adhering to strict SEO constraints. Finally, the injection phase parses the localized response, validates the data, and executes bulk database updates to push the new language variations live.

When we engineer these custom ETL pipelines at Tool1.app, we prioritize system stability and data governance above all else. Direct database manipulation requires robust error handling and transactional safety to ensure that a failed API call or a network timeout does not corrupt a live production environment.
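The three phases can be sketched as one small orchestration function before any database or API code is written. This is a minimal illustration under stated assumptions: `run_batch`, `BatchReport`, and the dict-shaped records are hypothetical names, and the three phases are passed in as plain callables so each can be tested or swapped independently.

```python
from dataclasses import dataclass, field


@dataclass
class BatchReport:
    loaded: int = 0
    failed_ids: list = field(default_factory=list)


def run_batch(extract, transform, load):
    """Run one extract -> transform -> load cycle.

    extract()       returns a list of source records (dicts with an "id")
    transform(rec)  returns a localized record, or None on failure
    load(records)   persists all localized records in one transaction
    """
    report = BatchReport()
    localized = []
    for record in extract():
        try:
            result = transform(record)
        except Exception:
            # A failed API call must not abort the batch or reach the database
            result = None
        if result is None:
            report.failed_ids.append(record["id"])
        else:
            localized.append(result)
    if localized:
        load(localized)  # the only step that touches production data
        report.loaded = len(localized)
    return report
```

Keeping the load step as a single call at the end means a failed transformation never leaves a half-written batch behind.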

Phase One: Secure Data Extraction via SQLAlchemy

The first technical hurdle is safely retrieving the content that needs translation. While web scraping tools are useful for gathering external competitor data, an internal automation tool should always interact directly with your database. Scraping your own site introduces unnecessary network latency and is highly prone to breakage if the frontend markup changes.

We highly recommend using SQLAlchemy, the premier Object-Relational Mapper (ORM) for Python. SQLAlchemy allows you to query your database securely, avoiding SQL injection vulnerabilities, and prepares the relational data as manipulatable Python objects.

Below is a foundational example of how to set up a secure database connection and extract English pages that currently lack a localized equivalent.

Python

import os

from sqlalchemy import Column, ForeignKey, Integer, String, Text, create_engine, select
from sqlalchemy.orm import declarative_base, sessionmaker

# Database configuration read from an environment variable, never hard-coded
DATABASE_URL = os.getenv("DATABASE_URL")
engine = create_engine(DATABASE_URL, pool_size=10, max_overflow=20)
SessionLocal = sessionmaker(bind=engine)
Base = declarative_base()


# ORM model mapped to the "pages" table
class Page(Base):
    __tablename__ = "pages"

    id = Column(Integer, primary_key=True)
    slug = Column(String, unique=True)
    lang = Column(String)
    title_tag = Column(String)
    meta_description = Column(String)
    h1_heading = Column(String)
    content_body = Column(Text)
    # Translated rows point back at their English original
    parent_id = Column(Integer, ForeignKey("pages.id"), nullable=True)


def get_untranslated_pages(target_lang="es", batch_size=50):
    """
    Fetches English pages that do not have a translation in the target language.
    Uses a NOT IN subquery so the filtering happens inside the database.
    """
    session = SessionLocal()
    try:
        # IDs of English pages already translated into target_lang.
        # A select() is passed to notin_() directly; wrapping it in
        # .subquery() triggers a coercion warning in SQLAlchemy 1.4+.
        translated_parent_ids = select(Page.parent_id).where(
            Page.lang == target_lang,
            Page.parent_id.isnot(None),
        )
        # English pages whose IDs are NOT in that subquery, in a stable order
        pages_to_translate = (
            session.query(Page)
            .filter(Page.lang == "en", Page.id.notin_(translated_parent_ids))
            .order_by(Page.id)
            .limit(batch_size)
            .all()
        )
        return pages_to_translate
    finally:
        session.close()

This script ensures we only process data that actually requires localization. By limiting the extraction to a specific batch size, we prevent memory overload and prepare the data for chunked, asynchronous processing.

Phase Two: Advanced Prompt Engineering for SEO Context

Passing a raw string of text to an API and simply requesting a “translation” yields amateur results that fail to rank. For enterprise-grade SEO, the LLM must operate under strict technical constraints.

Effective prompt engineering for Claude starts with a specific persona in the system prompt. Instructing the AI to act as an “Elite Native SEO Specialist” steers its output toward professional terminology rather than casual translation. You must also give strict formatting instructions, requiring Claude to return a single JSON object that matches a stated schema so the Python parser can consume it directly.

When localizing meta tags, treat character limits as hard constraints. Google truncates titles by pixel width rather than by character count, so keeping title tags under 60 characters and meta descriptions under 160 characters is a practical rule of thumb for avoiding truncation in search engine results pages.

Below is an implementation utilizing the Anthropic Python SDK, demonstrating how to construct a robust, context-rich prompt.

Python

import json
import os

import anthropic

client = anthropic.Anthropic(api_key=os.getenv("ANTHROPIC_API_KEY"))

# Model alias for Claude Sonnet 4.5; confirm the current ID in Anthropic's model list
MODEL = "claude-sonnet-4-5"


def translate_seo_metadata(page_data, target_language, target_country, target_keywords):
    """
    Sends page data to Claude and returns localized SEO metadata as a parsed
    JSON object (dict), or None if the API call or the parsing fails.
    """
    system_prompt = f"""
    You are an elite, native-speaking SEO Specialist and localization engineer for the {target_language} market in {target_country}.
    Your objective is to translate and adapt website metadata from English into {target_language}.

    CRITICAL CONSTRAINTS:
    1. Title Tags MUST be strictly under 60 characters.
    2. Meta Descriptions MUST be strictly under 160 characters.
    3. Adapt the phrasing to match high-volume, natural search queries in {target_country}.
    4. Seamlessly integrate the provided target keywords.
    5. You MUST respond ONLY with a valid JSON object. Do not include markdown formatting or explanations.

    REQUIRED JSON SCHEMA:
    {{
        "localized_title": "string (< 60 chars)",
        "localized_description": "string (< 160 chars)",
        "localized_h1": "string",
        "url_slug": "string (lowercase, hyphenated, URL safe)"
    }}
    """

    user_prompt = f"""
    Localize the following SEO data into {target_language}:

    Target Keywords to Integrate: {', '.join(target_keywords)}
    Original Title: {page_data.title_tag}
    Original Description: {page_data.meta_description}
    Original H1: {page_data.h1_heading}
    Original Slug: {page_data.slug}
    """

    try:
        response = client.messages.create(
            model=MODEL,
            max_tokens=1000,
            temperature=0.1,
            system=system_prompt,
            messages=[
                {"role": "user", "content": user_prompt}
            ],
        )
        # response.content is a list of content blocks; the text is in the first one
        localized_data = json.loads(response.content[0].text)
        return localized_data
    except json.JSONDecodeError:
        print(f"Critical Error: Claude failed to return valid JSON for page {page_data.id}")
        return None
    except Exception as e:
        print(f"Anthropic API Error: {e}")
        return None

The key to this implementation is the very low temperature parameter (0.1). Higher temperatures produce more varied output, which suits creative copywriting but works against database automation, where predictable structure matters most. Combining low temperature with explicit formatting instructions gives a high parsing success rate, and the JSONDecodeError handler catches the responses that still fail.
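Valid JSON is not the same as valid SEO metadata: the model can return a well-formed object whose title is 70 characters long. A post-parse check along the following lines can enforce the constraints stated in the prompt. This is a sketch, not part of the original pipeline: `validate_seo_payload` is a hypothetical helper, and the ASCII-only slug rule is an assumption that you may want to relax for languages whose URLs keep non-Latin characters.

```python
import re

TITLE_MAX = 60          # exclusive upper bounds, matching the prompt's wording
DESCRIPTION_MAX = 160
REQUIRED_KEYS = ("localized_title", "localized_description",
                 "localized_h1", "url_slug")
# Assumption: slugs are lowercase ASCII words separated by single hyphens
SLUG_PATTERN = re.compile(r"[a-z0-9]+(?:-[a-z0-9]+)*")


def validate_seo_payload(payload):
    """Return a list of problems; an empty list means the payload is usable."""
    if not isinstance(payload, dict):
        return ["payload is not a JSON object"]
    problems = [
        f"{key}: missing or empty"
        for key in REQUIRED_KEYS
        if not isinstance(payload.get(key), str) or not payload[key].strip()
    ]
    if problems:
        return problems
    if len(payload["localized_title"]) >= TITLE_MAX:
        problems.append("localized_title: 60 characters or more")
    if len(payload["localized_description"]) >= DESCRIPTION_MAX:
        problems.append("localized_description: 160 characters or more")
    if not SLUG_PATTERN.fullmatch(payload["url_slug"]):
        problems.append("url_slug: not lowercase, hyphenated and URL safe")
    return problems
```

Returning a list of problems rather than raising makes it easy to log every violation for a page and, if desired, feed them back to the model in a retry prompt.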

Phase Three: Asynchronous Database Injection

Once the Python script has successfully received and parsed the JSON payload, the final step is to save the newly localized pages back into the production database.

Executing individual insert statements inside a standard loop incurs massive network overhead and drastically increases execution time. Instead, leveraging SQLAlchemy’s bulk operation capabilities ensures maximum performance and safeguards database stability.

Python

def save_localized_pages(original_pages, target_lang, target_keywords, target_country="Spain"):
    """
    Processes translations via the AI pipeline and performs a bulk insert
    for the new localized pages using SQLAlchemy.
    """
    session = SessionLocal()
    new_pages = []

    for page in original_pages:
        seo_data = translate_seo_metadata(page, target_lang, target_country, target_keywords)
        # Pages whose translation failed (None) are skipped, not written
        if seo_data:
            new_page = Page(
                slug=seo_data['url_slug'],
                lang=target_lang,
                title_tag=seo_data['localized_title'],
                meta_description=seo_data['localized_description'],
                h1_heading=seo_data['localized_h1'],
                content_body="HTML content translation logic goes here...",
                parent_id=page.id
            )
            new_pages.append(new_page)

    try:
        # Execute a bulk save in a single database transaction.
        # bulk_save_objects is a legacy API in SQLAlchemy 2.0; session.add_all
        # is the current equivalent.
        session.bulk_save_objects(new_pages)
        session.commit()
        print(f"Successfully localized {len(new_pages)} pages into {target_lang}.")
    except Exception as e:
        session.rollback()
        print(f"Database error during bulk injection. Transaction rolled back: {e}")
    finally:
        session.close()

This bulk architecture allows you to localize thousands of URLs unattended. At Tool1.app, this transactional safety net is a mandatory standard. Pages whose API call fails are skipped and picked up again by the next extraction run, and if the database write fails midway through the batch, the entire transaction rolls back, preventing incomplete or orphaned data from polluting the live database.
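The loop in save_localized_pages calls the API once per page, sequentially, so most of the run time is spent waiting on the network. One way to overlap those waits while keeping the database write as a single transaction is to run the blocking calls in worker threads behind a concurrency cap. The sketch below assumes Python 3.9 or later for `asyncio.to_thread`; `translate_concurrently` and its `max_concurrency` default are illustrative choices, not values from the original pipeline.

```python
import asyncio


async def translate_concurrently(pages, translate, max_concurrency=5):
    """Call the blocking translate(page) for every page, running at most
    max_concurrency calls at once, and return results in input order."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def worker(page):
        async with semaphore:
            # to_thread moves the blocking SDK call off the event loop
            return await asyncio.to_thread(translate, page)

    return await asyncio.gather(*(worker(page) for page in pages))


# Usage with any blocking function; here a stand-in that upper-cases text
if __name__ == "__main__":
    results = asyncio.run(translate_concurrently(["uno", "dos"], str.upper))
    print(results)
```

The cap matters because API rate limits apply per account; start low and raise it only while responses stay free of rate-limit errors. The Anthropic SDK also ships an asynchronous client, which avoids threads entirely if you prefer to rewrite the translation function as a coroutine.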

Automating Technical SEO: Hreflang Matrices and Multilingual Schema

Translating text solves the user experience equation, but to ensure Google serves the correct regional page to the correct user, highly complex technical SEO elements must also be aligned.

Hreflang annotations are attributes on link elements that communicate the linguistic and geographical relationship between alternate versions of a web page. If you have an English page and a Spanish page, both must carry the same reciprocal set of hreflang tags, each listing itself and the other. Manual implementation of these matrices across large sites is notoriously error-prone, leading to silent indexing failures. Because our Python database architecture uses a parent ID foreign key to link translated pages back to their English original, generating these matrices dynamically becomes straightforward. Your web application can query the English original together with all rows whose parent ID points at it, then render the reciprocal links on every page in that family.
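As an illustration of how little code this takes once the parent ID relationship exists, the sketch below renders the tag set for one page family. The function name `build_hreflang_tags` and the `/{lang}/{slug}` URL layout are assumptions; adapt them to your routing. The `x-default` entry is the standard hreflang value for the fallback version, mapped here to the English original.

```python
from html import escape


def build_hreflang_tags(variants, base_url, default_lang="en"):
    """Render <link rel="alternate"> tags for one page family.

    variants: list of (lang, slug) tuples, i.e. the English original plus
    every row whose parent_id points at it.
    """
    tags = []
    for lang, slug in sorted(variants):
        # Assumed URL layout: https://example.com/<lang>/<slug>
        href = escape(f"{base_url.rstrip('/')}/{lang}/{slug}", quote=True)
        tags.append(f'<link rel="alternate" hreflang="{lang}" href="{href}" />')
        if lang == default_lang:
            tags.append(f'<link rel="alternate" hreflang="x-default" href="{href}" />')
    return tags


if __name__ == "__main__":
    family = [("es", "zapatillas-running"), ("en", "running-shoes")]
    print("\n".join(build_hreflang_tags(family, "https://example.com")))
```

Rendering the identical list on every member of the family, including a self-referencing entry, is what makes the annotations reciprocal.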

Translating JSON-LD schema markup (the structured data powering rich snippets) is equally precarious. Breaking a single bracket or translating a reserved schema property key will invalidate the entire script. When applying AI automated SEO translation to schema, you must feed the entire JSON-LD block to Claude and explicitly instruct it to translate only the text string values (such as descriptions or review content) while leaving the JSON structural keys untouched. Parsing the output with Python’s built-in json module before injection confirms that it is still well-formed JSON; comparing its keys against the original confirms that the structure search engines rely on for rich snippets has not changed.
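Both safeguards can be expressed in a few lines. The sketch below shows a structural comparison that can be run on the model's output, plus a stricter alternative to the approach described above: instead of sending the whole block to the model, walk the document in Python and pass only allow-listed string values to a translation function, so keys never leave your code. The names `translate_jsonld`, `same_structure`, and the `TRANSLATABLE_KEYS` allow-list are hypothetical.

```python
# Assumed allow-list of properties whose string values are safe to translate
TRANSLATABLE_KEYS = {"description", "headline", "reviewBody"}


def translate_jsonld(node, translate, keys=TRANSLATABLE_KEYS):
    """Return a copy of node with only allow-listed string values translated.

    translate is any callable str -> str, e.g. a wrapper around an API call.
    """
    if isinstance(node, dict):
        return {
            key: translate(value) if key in keys and isinstance(value, str)
            else translate_jsonld(value, translate, keys)
            for key, value in node.items()
        }
    if isinstance(node, list):
        return [translate_jsonld(item, translate, keys) for item in node]
    return node  # numbers, booleans, None and non-listed strings pass through


def same_structure(a, b):
    """True when both documents share identical keys, nesting and list lengths."""
    if isinstance(a, dict) and isinstance(b, dict):
        return a.keys() == b.keys() and all(same_structure(a[k], b[k]) for k in a)
    if isinstance(a, list) and isinstance(b, list):
        return len(a) == len(b) and all(same_structure(x, y) for x, y in zip(a, b))
    return type(a) is type(b)
```

If you keep the whole-block approach, `same_structure(original, json.loads(model_output))` is a cheap gate to run before anything is written to the database.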

Enterprise Scaling: Slashing API Costs with Advanced Features

Once the pipeline is functional, the final objective is cost optimization. Scaling an enterprise website with millions of words requires strategic utilization of Anthropic’s advanced developer features to keep ongoing localization expenses negligible.

In a robust workflow, your system prompt will inevitably grow large, containing extensive brand guidelines and negative keyword lists. Sending this identical 3,000-token prompt alongside every translation request is highly inefficient. Anthropic’s prompt caching feature is designed to solve this. You mark the static prefix of the prompt as cacheable; the first request writes it to the cache at a small premium, and subsequent requests that reuse the identical prefix within the cache lifetime read it at a discount of up to 90% compared to base-rate input tokens.
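In the Messages API, caching is requested by attaching a `cache_control` marker to a content block. The sketch below builds such a request as a plain dictionary so it can be inspected without network access; `build_cached_request` and `send` are hypothetical helper names, and the model alias is an assumption to check against Anthropic's current model list. Note that only an identical prefix is reused, so per-language details belong in the user message rather than in the cached system block.

```python
def build_cached_request(brand_guidelines, user_prompt, model="claude-sonnet-4-5"):
    """Build Messages API arguments with the static guidelines marked cacheable."""
    return {
        "model": model,  # assumed alias; confirm the current model ID
        "max_tokens": 1000,
        "system": [
            {
                "type": "text",
                "text": brand_guidelines,  # identical on every request
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # Anything that varies per page or per language goes here
        "messages": [{"role": "user", "content": user_prompt}],
    }


def send(request):
    """Send the request and report how many input tokens hit the cache."""
    import anthropic  # imported lazily so the builder works without the SDK

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment
    response = client.messages.create(**request)
    usage = response.usage
    return response, usage.cache_creation_input_tokens, usage.cache_read_input_tokens
```

Logging the two usage counters on the first few requests is the quickest way to confirm the cache is actually being hit; prompts shorter than the model's minimum cacheable length are processed normally without caching.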

Furthermore, if your localization effort does not require synchronous, real-time responses, you should integrate the Message Batches API. This allows you to package thousands of discrete requests into a single batch that is processed asynchronously, with results returned within 24 hours. The financial reward for this scheduling flexibility is a 50% discount on both input and output tokens. By combining prompt caching with the Batches API discount, the per-page cost of expanding your platform globally becomes very small.
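A batch is submitted as a list of requests, each pairing a `custom_id` of your choosing with ordinary Messages API parameters. The sketch below assembles that list from page data; `build_batch_requests`, `submit`, and the dict shape of `pages` are hypothetical, and the model alias is an assumption. Using the page ID in `custom_id` lets you match each result back to its source row when the batch completes.

```python
def build_batch_requests(pages, system_prompt, model="claude-sonnet-4-5"):
    """Build the request list for the Message Batches API.

    pages: iterable of dicts with "id" and "prompt" keys (assumed shape).
    """
    return [
        {
            "custom_id": f"page-{page['id']}",  # must be unique within a batch
            "params": {
                "model": model,  # assumed alias; confirm the current model ID
                "max_tokens": 1000,
                "system": system_prompt,
                "messages": [{"role": "user", "content": page["prompt"]}],
            },
        }
        for page in pages
    ]


def submit(requests):
    """Submit the batch and return its ID for later polling."""
    import anthropic  # imported lazily so the builder works without the SDK

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment
    batch = client.messages.batches.create(requests=requests)
    return batch.id
```

Results arrive out of order, so the database write step should key on `custom_id` rather than on position in the list.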

Ready to Conquer Global Search?

The transition from manual translation to an automated Python and AI architecture represents one of the highest-leverage engineering investments a modern business can make. By treating localization as a dynamic data engineering challenge, companies can instantly unlock global markets that were previously cost-prohibitive.

Navigating the intersection of artificial intelligence, search engine algorithms, and complex database management is a highly specialized discipline. If your current localization strategy still relies on exporting messy spreadsheets and waiting weeks for manual agency returns, you are leaving substantial global revenue on the table. Tool1.app builds custom AI localization tools, robust Python automations, and high-performance web applications designed specifically to eliminate operational friction. Our team of expert developers is ready to architect and deploy an automated pipeline tailored to your exact database schema and business goals. Contact Tool1.app today, and let’s engineer your global expansion.
