Analyzing On-Chain Data: A Python Toolkit for Ethereum Smart Contracts

Table of Contents

- The Business Imperative for On-Chain Data Analysis

- Understanding the Ethereum Data Architecture

- Evaluating Node Infrastructure and RPC Providers

- Establishing the Python Environment with Web3.py

- Demystifying the Application Binary Interface

- The Power of Event Logs in On-Chain Analytics

- Building an Enterprise-Grade Whale Alert System

- Transforming and Visualizing Blockchain Data

- Python vs. The Graph vs. Dune Analytics

- Automating MiCA Compliance and Regulatory Reporting

- Handling Reorganizations and Ensuring Data Redundancy

- Advanced Applications: DeFi Risk Management

- Integrating Artificial Intelligence with On-Chain Data

- Building the Future of Decentralized Intelligence

- Secure Your Edge in the Web3 Economy


The decentralized web operates as an immutable, globally distributed ledger, processing millions of transactions and executing complex logic through smart contracts every single day. For modern enterprises, decentralized finance platforms, and regulatory compliance entities, the Ethereum blockchain represents an unprecedented goldmine of publicly accessible data. However, the sheer volume and highly unstructured nature of this data pose a massive technical challenge. Synchronizing a full Ethereum archive node currently requires upward of 21 terabytes of storage, and raw on-chain data is encoded in complex hexadecimal formats that offer little immediate business value in their native state.

To extract actionable business intelligence, perform comprehensive risk management, and adhere to strict new regulatory frameworks, organizations must deploy specialized analytical pipelines. Python, with its rich ecosystem of data science libraries and the Web3.py toolkit, has become one of the most widely used languages for this task.

Whether you are building custom trading algorithms, monitoring liquidity pools, or ensuring regulatory compliance, understanding how to interact programmatically with the Ethereum blockchain is a critical operational advantage. At Tool1.app, we specialize in building these precise types of custom web applications, Python automations, and AI solutions to drive business efficiency. This comprehensive guide will explore the technical architecture, practical code implementations, and business applications of Python Ethereum on-chain analysis, providing you with a complete toolkit to navigate the Web3 data landscape.

The Business Imperative for On-Chain Data Analysis

Before diving into the codebase and architectural design, it is essential to understand why on-chain data analysis is driving massive investments in enterprise Web3 infrastructure. The transition from purely speculative cryptocurrency trading to robust, utility-driven decentralized financial services has necessitated enterprise-grade analytics.

First, decentralized finance (DeFi) platforms require real-time, precision-driven risk management. Smart contracts govern billions of euros in total value locked across lending protocols, decentralized exchanges, and yield aggregators. Monitoring critical metrics such as collateralization ratios, market slippage thresholds, and historical vault performance can mean the difference between profitable protocol operations and catastrophic algorithmic liquidations.

Second, the regulatory landscape across global jurisdictions is rapidly maturing. The European Union’s Markets in Crypto-Assets (MiCA) regulation has fundamentally altered the compliance requirements for Crypto Asset Service Providers. Under these new legislative frameworks, exchanges, stablecoin issuers, and custodial service providers are legally mandated to implement continuous transaction monitoring, robust anti-money laundering controls, and real-time reporting mechanisms. Automated Python scripts that parse on-chain data are no longer just an analytical luxury; they are a legal necessity for operating within the European market and beyond.

Finally, competitive market intelligence relies heavily on understanding capital flows. Identifying large token movements—often referred to as whale alerts—allows businesses to anticipate market volatility, track institutional adoption, and analyze the behavior of competing protocols. Building custom software to track these metrics offers a distinct competitive edge. This is a domain where proactive data extraction directly translates to strategic market positioning.

Understanding the Ethereum Data Architecture

To effectively analyze Ethereum data, one must first understand how the Ethereum Virtual Machine processes and stores information. Unlike traditional relational databases (like PostgreSQL or MySQL) that store data in neat rows and columns, Ethereum stores data in a chain of linked blocks and commits to account state, transactions, and receipts through cryptographic structures known as Merkle Patricia Tries.

When a user or a decentralized application interacts with the Ethereum network, they submit a transaction. This transaction can be a simple transfer of native Ether, or it can be a complex execution of a smart contract function. Once the transaction is validated by the network’s decentralized nodes, it is grouped with other transactions into a block.

Within these blocks, data is primarily categorized into three distinct layers:

- State Data, which represents the current balances and variable values of all accounts and smart contracts at a specific point in time.

- Transaction Data, which records the inputs, sender, receiver, value, and gas parameters of the action.

- Receipt and Log Data, which acts as a side-channel recording system. When smart contracts execute successfully, they can be programmed to emit “events.” These events are stored as logs in the transaction receipt. Because accessing historical state data is computationally expensive and requires massive archive nodes, reading these event logs is the most efficient and cost-effective method for conducting historical on-chain analysis.

The challenge for data analysts is that all of this information is stored in compact binary encodings. A standard transaction input appears as a long string of hexadecimal characters. It is the job of the Python developer to connect to a node, retrieve this hex data, and use the correct interface definitions, known as Application Binary Interfaces (ABIs), to decode it into human-readable business intelligence.
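As a concrete illustration of that decoding step, the minimal sketch below decodes the calldata of an ERC-20 `transfer(address,uint256)` call by hand, using only the Python standard library. It relies on two properties of the ABI encoding: the first four bytes are the function selector (`0xa9059cbb` for `transfer(address,uint256)`), and each static argument follows as a 32-byte big-endian word. The calldata is constructed for the example rather than taken from a real transaction, and the helper name `decode_erc20_transfer` is hypothetical; once Web3.py has a contract's ABI, it performs this decoding for you.

```python
# Function selector of ERC-20 transfer(address,uint256): first 4 bytes of its Keccak-256 hash
TRANSFER_SELECTOR = bytes.fromhex("a9059cbb")
WORD = 32  # every static ABI argument occupies one 32-byte word


def decode_erc20_transfer(calldata_hex: str) -> dict:
    """Decode calldata for transfer(address,uint256) into a recipient and a raw amount."""
    data = bytes.fromhex(calldata_hex.removeprefix("0x"))
    selector, args = data[:4], data[4:]
    if selector != TRANSFER_SELECTOR:
        raise ValueError(f"not an ERC-20 transfer call: selector 0x{selector.hex()}")
    if len(args) != 2 * WORD:
        raise ValueError("expected exactly two 32-byte arguments")
    # An address is left-padded to 32 bytes: keep the last 20 bytes of the first word
    recipient = "0x" + args[12:WORD].hex()
    # uint256 is a big-endian unsigned integer in the second word
    amount = int.from_bytes(args[WORD:2 * WORD], "big")
    return {"recipient": recipient, "amount": amount}


# Illustrative calldata: send 1,000,000 base units to 0x1111...1111
example = (
    "0xa9059cbb"
    + "00" * 12 + "11" * 20                   # recipient address, padded to 32 bytes
    + (1_000_000).to_bytes(32, "big").hex()   # amount, padded to 32 bytes
)
print(decode_erc20_transfer(example))
```

The recipient comes back in lowercase; pass it through `Web3.to_checksum_address` if you need the mixed-case checksum form.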

Evaluating Node Infrastructure and RPC Providers

Your Python application cannot analyze the blockchain without a connection to an Ethereum node. This communication occurs via the JSON-RPC (Remote Procedure Call) interface that Ethereum clients expose. While an enterprise could choose to provision and maintain its own local Ethereum node, doing so requires significant hardware resources, massive bandwidth, and complex ongoing synchronization management. For most enterprise-grade applications, utilizing a specialized Web3 infrastructure provider is the most efficient, secure, and scalable approach.

Providers operate globally distributed, load-balanced node networks that you can query via standard HTTP requests or persistent WebSocket connections. When architecting a data ingestion solution, it is vital to evaluate these providers based on request limits, geographical latency, and cost structures.

The pricing models in the Web3 infrastructure space generally fall into two categories: credit-based systems and request-per-second (RPS) tiered systems. At the time of writing, typical starter configurations and their approximate costs, converted to euros, look like this:

| Infrastructure Provider | Primary Pricing Model | Estimated Starter Tier Cost | Performance Characteristics |
| --- | --- | --- | --- |
| Infura | Credit-based (method-weighted) | €46 / month | Enterprise-grade reliability, 99.9% uptime SLA, excellent for basic RPC calls. |
| Alchemy | Compute Units (CUs) | Pay-as-you-go | Rich developer tooling, specialized Notify and Transact APIs, supports over 40 chains. |
| QuickNode | Credit-based with RPS limits | €45 – €450 / month | Ultra-fast response times globally, SOC 2 Type II certified, ideal for high-frequency trading. |
| Chainstack | RPS tiers with unlimited add-ons | €0 – Custom Enterprise | Highly flexible, allows deployment of dedicated nodes, excellent for heavy analytical scraping. |
| GetBlock | Pay-as-you-go or RPS tiers | €36 / month | Cost-effective production nodes, great for startups building early-stage analytics. |

For highly resilient applications—such as real-time compliance monitors or algorithmic trading bots—relying on a single provider introduces an unacceptable single point of failure. Best practices dictate deploying a multi-node architecture, subscribing to multiple RPC endpoints concurrently to ensure uninterrupted data flow even during massive network congestion events.
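A simplified way to avoid a single point of failure is to try each configured endpoint in turn until one answers. The sketch below is a sequential failover helper rather than the concurrent multi-subscription design described above, and `call_with_failover` is a hypothetical name. It accepts any callable that performs a request against one endpoint URL, so it can wrap Web3.py calls without depending on a particular provider; the endpoint URLs are placeholders and the failing "primary" node is simulated.

```python
from typing import Callable, List, Sequence, TypeVar

T = TypeVar("T")


def call_with_failover(endpoints: Sequence[str], request: Callable[[str], T]) -> T:
    """
    Run the same read-only request against each RPC endpoint in order and return the
    first successful result. Raises RuntimeError if every endpoint fails.
    """
    errors: List[str] = []
    for url in endpoints:
        try:
            return request(url)
        except Exception as exc:  # in production, catch only network/timeout errors
            errors.append(f"{url}: {exc}")
    raise RuntimeError("all RPC endpoints failed: " + "; ".join(errors))


# With Web3.py, the request could look like this (requires live endpoints):
#   from web3 import Web3
#   latest = call_with_failover(
#       ["https://primary.example/rpc", "https://backup.example/rpc"],
#       lambda url: Web3(Web3.HTTPProvider(url)).eth.block_number,
#   )


def simulated_request(url: str) -> int:
    """Stand-in for a real RPC call: the primary endpoint times out, the backup answers."""
    if "primary" in url:
        raise ConnectionError("request timed out")
    return 19_000_000


print(call_with_failover(["https://primary.example/rpc", "https://backup.example/rpc"], simulated_request))
```

Ordering the list by preference (lowest latency or cheapest plan first) keeps most traffic on the primary provider while the backups absorb outages.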

Establishing the Python Environment with Web3.py

The foundation of our analytical toolkit is the web3.py library, a comprehensive Python library for interacting with Ethereum that was originally derived from the JavaScript web3.js library. It provides all the necessary cryptographic functions, encoding utilities, and network connection protocols required to interface with the Ethereum Virtual Machine.

To begin, create an isolated Python virtual environment so the project's dependencies do not conflict with other software on the machine:

```bash
python3 -m venv web3_analytics_env
source web3_analytics_env/bin/activate
pip install web3 requests pandas plotly pycoingecko
```

This technology stack provides everything needed: web3 for blockchain interaction, requests for fetching external metadata, pandas for structuring the extracted data into manageable dataframes, plotly for advanced visualization, and pycoingecko for cross-referencing on-chain token amounts with real-world Euro fiat values.

Connecting to the Network via HTTP

The most straightforward method to connect to an Ethereum node is via an HTTP Provider. This is ideal for script-based analytical tasks that run on a schedule, pull historical data, and terminate.

```python
import os

from web3 import Web3

# web3.py v7 renamed the PoA middleware; fall back to the v6 name on older installs
try:
    from web3.middleware import ExtraDataToPOAMiddleware as poa_middleware
except ImportError:
    from web3.middleware import geth_poa_middleware as poa_middleware

# Define your secure RPC endpoint provided by Alchemy, Infura, etc.
# In production, load it from an environment variable (ETH_RPC_URL is an arbitrary name).
RPC_URL = os.environ.get("ETH_RPC_URL", "https://mainnet.infura.io/v3/YOUR_SECURE_API_KEY")

# Initialize the Web3 connection instance
w3 = Web3(Web3.HTTPProvider(RPC_URL))

# Optional: inject the Proof of Authority (PoA) middleware.
# Ethereum Mainnet does not need it, but it becomes necessary if your analysis expands
# to networks such as Polygon, BNB Chain, or testnets whose block headers carry
# extended extraData fields.
w3.middleware_onion.inject(poa_middleware, layer=0)

# Validate the connection to the network
if w3.is_connected():
    current_block = w3.eth.block_number
    print("Successfully connected to the Ethereum Mainnet.")
    print(f"The latest synchronized block is: {current_block}")
else:
    print("Critical Error: Failed to establish a connection to the Ethereum node.")
```

If your business use case requires reacting to events as soon as they are included in a block, substitute the HTTP provider with a WebSocket provider (wss://...). WebSockets maintain a persistent connection over which the node pushes new data to your Python application through subscriptions, which is the usual architecture for latency-sensitive operations like arbitrage bots. In web3.py v7, this is the asynchronous WebSocketProvider, used together with AsyncWeb3.

Demystifying the Application Binary Interface

Ethereum smart contracts are written in high-level languages like Solidity or Vyper, but before they are deployed to the blockchain, they are compiled down into EVM bytecode. To interact with a contract—whether to call a function, check a balance, or read an event log—Python needs a translation map that defines the contract’s methods, inputs, outputs, and event signatures.

This critical translation map is known as the Application Binary Interface (ABI), usually distributed as a JSON array that describes each function and event. Without the ABI, the compiled bytecode on the blockchain is practically indecipherable to an external application. It serves as the bridge between off-chain Python logic and on-chain decentralized execution.
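To show what this translation map looks like, here is a trimmed ABI entry for the standard ERC-20 `Transfer` event, plus two small helpers. The first rebuilds the canonical signature string, `Transfer(address,address,uint256)`, which is what gets hashed with Keccak-256 to form the event's identifier (see the section on event logs); the second lists which parameters are indexed. The helper names are hypothetical, and this sketch only handles elementary types: tuple (struct) parameters would need to be expanded into their component types first.

```python
import json

# Trimmed ABI entry for the standard ERC-20 Transfer event (illustrative, not a full contract ABI)
ERC20_TRANSFER_EVENT_ABI = json.loads("""
{
  "type": "event",
  "name": "Transfer",
  "anonymous": false,
  "inputs": [
    {"name": "from", "type": "address", "indexed": true},
    {"name": "to", "type": "address", "indexed": true},
    {"name": "value", "type": "uint256", "indexed": false}
  ]
}
""")


def canonical_signature(abi_entry: dict) -> str:
    """Build the 'Name(type1,type2,...)' string whose Keccak-256 hash identifies the event."""
    types = ",".join(param["type"] for param in abi_entry["inputs"])
    return f"{abi_entry['name']}({types})"


def indexed_params(abi_entry: dict) -> list:
    """Names of parameters stored in topics (indexed) rather than in the data field."""
    return [param["name"] for param in abi_entry["inputs"] if param.get("indexed")]


print(canonical_signature(ERC20_TRANSFER_EVENT_ABI))  # Transfer(address,address,uint256)
print(indexed_params(ERC20_TRANSFER_EVENT_ABI))  # ['from', 'to']
```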

When developing internal enterprise tools at Tool1.app, we often build automated, resilient scrapers that fetch these ABIs dynamically from blockchain explorers to streamline the data ingestion pipeline, rather than hardcoding thousands of lines of JSON into our repositories.

Programmatically Fetching Contract ABIs

For verified smart contracts, block explorers like Etherscan provide APIs to retrieve the ABI programmatically. Below is a practical implementation of how to fetch the ABI for a specific smart contract, in this case the widely used USD Coin (USDC) stablecoin contract, and instantiate that contract object in Python.

```python
import json

import requests
from web3 import Web3

# Establish connection
w3 = Web3(Web3.HTTPProvider("https://mainnet.infura.io/v3/YOUR_API_KEY"))

# USDC smart contract address on Ethereum Mainnet (deployed behind an upgradeable proxy)
CONTRACT_ADDRESS = "0xA0b86991c6218b36c1d19D4a2e9Eb0cE3606eB48"

# Etherscan API key for querying contract metadata
ETHERSCAN_API_KEY = "YOUR_ETHERSCAN_API_KEY"

# Etherscan's V2 multichain endpoint; chainid=1 selects Ethereum Mainnet
ETHERSCAN_URL = "https://api.etherscan.io/v2/api"


def etherscan_contract_query(action, contract_address):
    """Call an action of Etherscan's 'contract' module and return its 'result' field."""
    params = {
        "chainid": 1,
        "module": "contract",
        "action": action,
        "address": contract_address,
        "apikey": ETHERSCAN_API_KEY,
    }
    response = requests.get(ETHERSCAN_URL, params=params, timeout=10)
    response.raise_for_status()
    data = response.json()
    # Etherscan returns a status of "1" for successful queries
    if data.get("status") != "1":
        raise ValueError(f"Etherscan API Error: {data.get('message')} - {data.get('result')}")
    return data["result"]


def fetch_verified_abi(contract_address):
    """
    Retrieve the verified ABI for a contract. When Etherscan has identified the contract
    as a proxy (as with USDC), fetch the implementation's ABI instead: the proxy's own
    ABI only exposes its upgrade and admin functions, not totalSupply or balanceOf.
    """
    try:
        source_info = etherscan_contract_query("getsourcecode", contract_address)[0]
        abi_address = source_info.get("Implementation") or contract_address
        # The ABI is returned as a stringified JSON array, so it must be parsed
        return json.loads(etherscan_contract_query("getabi", abi_address))
    except (requests.exceptions.RequestException, ValueError) as e:
        print(f"Could not retrieve the ABI from Etherscan: {e}")
        return None


# Execute the fetch and instantiate the contract
contract_abi = fetch_verified_abi(CONTRACT_ADDRESS)

if contract_abi:
    # Web3.py expects checksum-formatted addresses, whose mixed case also catches typos
    checksum_address = w3.to_checksum_address(CONTRACT_ADDRESS)

    # Calls go to the proxy address; the implementation ABI describes the functions it forwards
    usdc_contract = w3.eth.contract(address=checksum_address, abi=contract_abi)
    print("USDC Contract successfully instantiated.")

    # Introspect the contract to view available functions
    available_functions = [func.fn_name for func in usdc_contract.all_functions()]
    print(f"Total callable functions discovered: {len(available_functions)}")
    print(f"Sample functions: {available_functions[:5]}")
```

With the usdc_contract object instantiated, your Python application knows the contract's functions and events, and you can execute read-only calls directly through Python. For example, querying the total supply of USDC becomes a single line of Python code, usdc_contract.functions.totalSupply().call(), and checking the balance of a specific enterprise treasury wallet is just as short with balanceOf. Both calls return raw integers expressed in the token's smallest unit.
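Because those calls return raw integers, a small conversion step is usually needed before the numbers reach a report. The sketch below, with the hypothetical helper `to_token_units`, uses `decimal.Decimal` so that large 18-decimal values are not distorted by floating-point rounding. USDC uses 6 decimals; for other tokens, read the value from the contract's `decimals()` function rather than assuming it.

```python
from decimal import Decimal


def to_token_units(raw_amount: int, decimals: int) -> Decimal:
    """Convert an integer amount in a token's smallest unit into whole-token units."""
    # Decimal's default context keeps 28 significant digits; raise it for larger values.
    return Decimal(raw_amount).scaleb(-decimals)


# USDC uses 6 decimals: a raw value of 2,500,000 means 2.5 USDC
print(to_token_units(2_500_000, 6))

# With the contract object from above (requires a live RPC connection):
#   raw_supply = usdc_contract.functions.totalSupply().call()
#   decimals = usdc_contract.functions.decimals().call()
#   print(to_token_units(raw_supply, decimals))
```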

The Power of Event Logs in On-Chain Analytics

While querying individual state variables (like a user’s current balance) is useful for point-in-time checks, true Python Ethereum on-chain analysis relies on tracking historical activities over time. This is achieved through event logs.

Smart contracts are programmed to emit specific logs during their execution. For example, every time an ERC-20 token is transferred from one user to another, the contract emits a Transfer event. This event contains the sender’s address, the recipient’s address, and the precise amount of tokens transferred.

Why do analysts rely on logs instead of just reading state changes? The answer lies in the fundamental economics of the Ethereum blockchain. Storing data permanently in the EVM’s active state variables is incredibly expensive in terms of gas fees. Emitting a log, however, is significantly cheaper. Therefore, developers use logs as a highly efficient, searchable historical ledger of everything a contract has ever done.
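A back-of-the-envelope calculation makes the cost difference concrete. The sketch below uses the gas constants defined in the Ethereum Yellow Paper for the `LOG` opcodes (375 gas base, 375 per topic, 8 per byte of data) and the 20,000 gas charged for writing a non-zero value into an empty storage slot. It deliberately ignores memory expansion, EIP-2929 cold-access surcharges, and refunds, so treat the output as an order-of-magnitude comparison rather than an exact fee quote; the function names are hypothetical.

```python
# Gas constants from the Ethereum Yellow Paper
G_LOG = 375             # base cost of any LOGn opcode
G_LOGTOPIC = 375        # additional cost per topic
G_LOGDATA = 8           # additional cost per byte of non-indexed data
G_SSTORE_SET = 20_000   # writing a non-zero value into an empty storage slot


def log_gas(num_topics: int, data_bytes: int) -> int:
    """Gas charged by a LOGn opcode, ignoring memory expansion."""
    return G_LOG + G_LOGTOPIC * num_topics + G_LOGDATA * data_bytes


def storage_gas(new_slots: int) -> int:
    """Gas for filling empty storage slots, ignoring EIP-2929 cold-access surcharges."""
    return G_SSTORE_SET * new_slots


# An ERC-20 Transfer log: 3 topics (signature, from, to) and 32 bytes of data (value)
print(log_gas(num_topics=3, data_bytes=32))  # 1756
# Persisting the same three values in three fresh storage slots instead
print(storage_gas(new_slots=3))  # 60000
```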

Topics, Indexing, and Keccak Hashes

Event logs are organized and filtered using “topics.” A log can have up to four topics. For every non-anonymous event, the first topic (Topic 0) is the Keccak-256 hash of the event’s signature.

For a standard ERC-20 transfer, the human-readable signature is Transfer(address,address,uint256). If you hash this string using the Keccak-256 algorithm, you get the unique identifier that the EVM uses to categorize the log.

Subsequent topics (Topic 1, Topic 2, etc.) are used to store “indexed” parameters. In our Transfer example, the sender and receiver addresses are usually indexed. This is a crucial architectural feature because Ethereum nodes utilize Bloom filters to allow off-chain applications to search for these specific indexed topics across millions of blocks with incredible speed.
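Putting topics and data together, the minimal sketch below decodes a raw ERC-20 `Transfer` log in the shape returned by the `eth_getLogs` JSON-RPC method, where topics and data are hex strings (Web3.py returns `HexBytes` objects instead). The constant is the well-known Keccak-256 hash of `Transfer(address,address,uint256)`, which you can reproduce with `Web3.keccak(text="Transfer(address,address,uint256)")`. The function name and sample log are illustrative.

```python
from typing import Optional

# keccak256("Transfer(address,address,uint256)"): topic 0 of every ERC-20 Transfer log
TRANSFER_TOPIC = "0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef"


def decode_transfer_log(log: dict) -> Optional[dict]:
    """
    Decode one raw eth_getLogs entry (hex strings, as returned over JSON-RPC) for an
    ERC-20 Transfer. Returns None for any other event.
    """
    topics = log["topics"]
    # ERC-721 Transfer shares this signature hash but also indexes tokenId (4 topics)
    if len(topics) != 3 or topics[0].lower() != TRANSFER_TOPIC:
        return None
    return {
        # Indexed addresses are left-padded to 32 bytes: keep the last 40 hex characters
        "from": "0x" + topics[1][-40:].lower(),
        "to": "0x" + topics[2][-40:].lower(),
        # The non-indexed value is a single 32-byte word in the data field
        "value": int(log["data"], 16),
    }


sample_log = {
    "topics": [
        TRANSFER_TOPIC,
        "0x" + "00" * 12 + "aa" * 20,  # sender, padded to 32 bytes
        "0x" + "00" * 12 + "bb" * 20,  # recipient, padded to 32 bytes
    ],
    "data": "0x" + (5 * 10**18).to_bytes(32, "big").hex(),  # 5 tokens with 18 decimals
}
print(decode_transfer_log(sample_log))
```

Because topic matching in `eth_getLogs` is positional, a filter such as `[transfer_topic, None, padded_address]` restricts results to transfers received by one address, with `None` acting as a wildcard for the sender.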

Building an Enterprise-Grade Whale Alert System

To demonstrate the immense practical value of Python Ethereum on-chain analysis, we will build a sophisticated “Whale Alert” monitoring system. This system will scan the blockchain for massive transfers of Wrapped Ether (WETH).

To make the data contextually relevant for European financial analysts, the script will integrate the CoinGecko API to fetch the real-time Euro (€) price of Ethereum. We will configure the analytical filter to ignore network noise and only flag transactions that exceed a massive €500,000 threshold.

This type of script forms the baseline for institutional market surveillance and competitive intelligence tools.

```python
from pycoingecko import CoinGeckoAPI
from web3 import Web3

# Initialize the Web3 provider
w3 = Web3(Web3.HTTPProvider("https://mainnet.infura.io/v3/YOUR_API_KEY"))

# Initialize the CoinGecko API client for fiat conversions
cg = CoinGeckoAPI()

# The verified contract address for Wrapped Ether (WETH)
WETH_ADDRESS = Web3.to_checksum_address("0xC02aaA39b223FE8D0A0e5C4F27eAD9083C756Cc2")

# Minimum alert threshold, in euros
EURO_THRESHOLD = 500_000

# Number of recent blocks to scan in this one-off example
BLOCK_WINDOW = 50


def get_eth_euro_price():
    """
    Fetch the current price of ETH in euros from CoinGecko.
    Returns None if the request fails (for example, when rate-limited).
    """
    try:
        price_data = cg.get_price(ids="ethereum", vs_currencies="eur")
        return price_data["ethereum"]["eur"]
    except Exception as e:
        print(f"Pricing API Error: Unable to fetch EUR conversion rate. {e}")
        return None


def analyze_institutional_transfers():
    """Scan recent Ethereum blocks for WETH transfers at or above the euro threshold."""
    eth_price_eur = get_eth_euro_price()
    if not eth_price_eur:
        return

    print("--- Market Intelligence Initialization ---")
    print(f"Current ETH Market Price: €{eth_price_eur:,.2f}")

    # WETH is redeemable 1:1 for ETH and, like ETH, uses 18 decimal places:
    # 1 WETH is represented on-chain as 10**18 base units (wei).
    weth_threshold_wei = int((EURO_THRESHOLD / eth_price_eur) * 10**18)

    # Topic 0: Keccak-256 hash of the ERC-20 Transfer event signature, as a 0x-prefixed string
    transfer_topic_hash = Web3.to_hex(Web3.keccak(text="Transfer(address,address,uint256)"))

    # Search window: the last BLOCK_WINDOW blocks. In production this would be a continuous loop.
    latest_block = w3.eth.block_number
    from_block = latest_block - BLOCK_WINDOW

    # JSON-RPC filter parameters for eth_getLogs
    event_filter_params = {
        "fromBlock": from_block,
        "toBlock": latest_block,
        "address": WETH_ADDRESS,
        "topics": [transfer_topic_hash],
    }

    print(f"Scanning blocks {from_block} through {latest_block}...")
    print(f"Targeting transfers of at least €{EURO_THRESHOLD:,.2f}\n")

    # Request the logs from the node
    logs = w3.eth.get_logs(event_filter_params)
    anomalies_detected = 0

    for log in logs:
        # The amount is not indexed, so it sits in 'data' as one 32-byte big-endian word
        amount_wei = int.from_bytes(log["data"], "big")
        if amount_wei < weth_threshold_wei:
            continue

        anomalies_detected += 1

        # Convert wei back to WETH and calculate the euro value
        amount_eth = amount_wei / 10**18
        fiat_value_eur = amount_eth * eth_price_eur

        # topics[0] is the event signature, topics[1] the sender, topics[2] the recipient.
        # Addresses in topics are padded to 32 bytes, so keep the last 40 hex characters (20 bytes).
        sender = Web3.to_checksum_address("0x" + log["topics"][1].hex()[-40:])
        recipient = Web3.to_checksum_address("0x" + log["topics"][2].hex()[-40:])
        tx_hash = Web3.to_hex(log["transactionHash"])

        print("⚠ INSTITUTIONAL WHALE ALERT DETECTED ⚠")
        print(f"Transaction Hash: {tx_hash}")
        print(f"Capital Flow: {sender} → {recipient}")
        print(f"Volume: {amount_eth:,.2f} WETH")
        print(f"Estimated Fiat Value: €{fiat_value_eur:,.2f}")
        print("-" * 50)

    if anomalies_detected == 0:
        print("No institutional transfers detected in the current block window.")


# Execute the intelligence script
analyze_institutional_transfers()
```

This script effectively bridges the gap between obscure, low-level blockchain cryptography and actionable, high-level financial intelligence. By transforming abstract base-16 integer values into localized Euro amounts, modern businesses can apply traditional financial logic, accounting standards, and risk parameters to decentralized networks.
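One refinement worth considering: the script computes the wei threshold with floating-point arithmetic, which is acceptable for a rough alert but can introduce rounding error in amounts that feed accounting or compliance records. A minimal alternative, assuming prices are passed in as strings (for example `str(eth_price_eur)`), is to do the conversion with `decimal.Decimal`. The function name is hypothetical, and rounding up is a design choice: it ensures that no transfer worth less than the exact euro threshold is flagged.

```python
from decimal import ROUND_CEILING, Decimal

WEI_PER_ETH = 10**18


def eur_threshold_to_wei(threshold_eur: str, eth_price_eur: str) -> int:
    """
    Convert a euro threshold into a WETH amount in wei with exact decimal arithmetic.
    Prices are passed as strings (e.g. str(price)) to avoid inheriting float error.
    Rounds up, so no transfer worth less than the exact threshold is flagged.
    """
    eth_amount = Decimal(threshold_eur) / Decimal(eth_price_eur)
    return int((eth_amount * WEI_PER_ETH).to_integral_value(rounding=ROUND_CEILING))


print(eur_threshold_to_wei("500000", "2500"))  # 200000000000000000000
```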

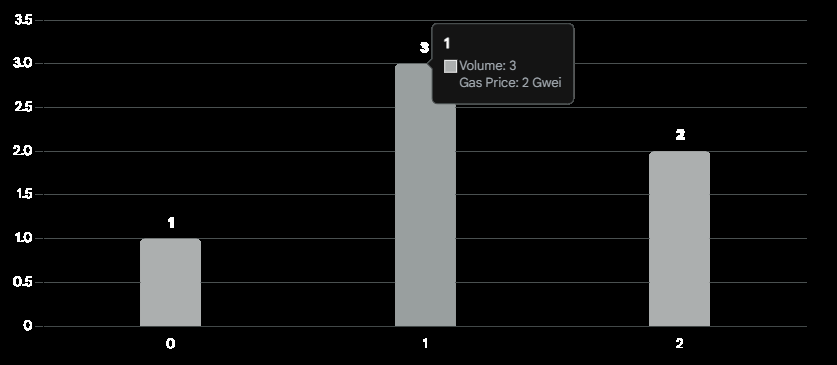

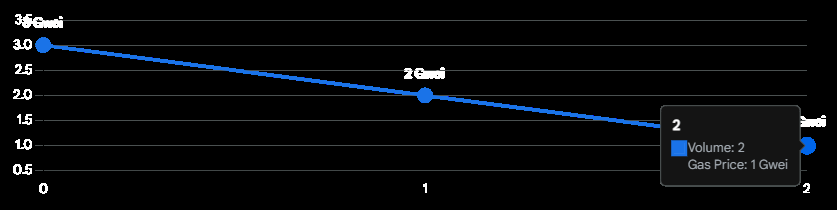

Transforming and Visualizing Blockchain Data

Extracting raw hex data from the blockchain is only the first half of the data science equation; structuring, cleaning, and visualizing it is where true, actionable business intelligence is fully realized. A data stream is essentially useless to a Chief Financial Officer or a Compliance Director if it cannot be interpreted at a glance.

In enterprise software development—a core competency at Tool1.app—we regularly engineer comprehensive pipelines that extract high-volume data via Web3.py, transform that data using the Pandas library, and visualize it using modern frontend frameworks or powerful Python backends like Plotly.

Pandas DataFrames are perfectly suited for manipulating time-series blockchain data. Because Ethereum produces a block approximately every twelve seconds, financial data is inherently chronological. By loading transaction receipts, block timestamps, and log data into Pandas, analysts can calculate moving averages, track volatility indices, and identify outlier events with incredible efficiency.

Furthermore, by setting the Pandas plotting backend directly to Plotly (pd.options.plotting.backend = "plotly"), developers can generate highly interactive, visually striking charts directly from their data structures without needing to export the data to external business intelligence software.

Consider a practical scenario where an enterprise needs to deeply analyze the historical gas fees and transaction volumes of a specific smart contract to optimize their automated interaction times. Executing smart contracts during peak network congestion can cost thousands of Euros in wasted gas fees. By pulling historical logs into a Pandas DataFrame, converting the Unix timestamps into localized datetime objects, and visualizing the data, organizations can actively forecast network congestion patterns and deploy their capital much more efficiently.
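A minimal sketch of that workflow with pandas is shown below. It assumes you have already collected one row per block containing the block timestamp (Unix seconds) and the block's base fee in gwei; with Web3.py, both are available from `w3.eth.get_block(n)` as `timestamp` and `baseFeePerGas` (the latter in wei). The eight rows are made-up values for illustration, and a real analysis would use thousands of blocks spread over several weeks.

```python
import pandas as pd

# Made-up sample: one row per block with its Unix timestamp and base fee in gwei.
# Real rows could come from w3.eth.get_block(n)["timestamp"] and ["baseFeePerGas"] / 1e9.
rows = [
    {"timestamp": 1_700_000_000 + i * 3_600, "base_fee_gwei": fee}
    for i, fee in enumerate([30, 42, 55, 18, 12, 25, 60, 48])
]
df = pd.DataFrame(rows)

# Convert Unix timestamps into timezone-aware datetimes (UTC).
# For local business hours, append e.g. .dt.tz_convert("Europe/Berlin").
df["time"] = pd.to_datetime(df["timestamp"], unit="s", utc=True)

# Median base fee for each hour of the day, and the cheapest hour to schedule transactions
hourly = df.groupby(df["time"].dt.hour)["base_fee_gwei"].median()
cheapest_hour = int(hourly.idxmin())

print(hourly)
print(f"Cheapest hour (UTC): {cheapest_hour:02d}:00")

# Optional interactive chart, if plotly is installed:
#   pd.options.plotting.backend = "plotly"
#   hourly.plot(kind="bar").show()
```

Using the median rather than the mean keeps a handful of extreme blocks (for example during a popular token launch) from distorting the hourly profile.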

Analysis of on-chain data reveals peak network congestion windows. Organizations utilize these data pipelines to schedule automated smart contract interactions during low-fee periods, significantly reducing operational overhead.

This approach is fundamentally reshaping how institutions interact with decentralized ledgers. Visual dashboards elevate blockchain technology from a backend infrastructure component to a frontline business strategy tool.

Python vs. The Graph vs. Dune Analytics

When designing an analytical stack, Python is not the only option on the market. It is crucial to evaluate Web3.py against other popular ecosystem tools to ensure you are deploying the right architecture for your specific business requirements.

Dune Analytics has become highly popular for its SQL-based interface. It allows analysts to write standard SQL queries against pre-indexed blockchain datasets and generate visual dashboards rapidly. However, Dune is primarily a read-only, retrospective tool. It is excellent for high-level reporting, and query results can be pulled through its API, but it works on indexed data that trails the chain tip and cannot act on what it finds. Triggering a live trading bot or an automated compliance freeze directly from a Dune SQL query is not practical.

The Graph is a decentralized protocol for indexing and querying data via GraphQL. Developers build “subgraphs” that dictate how blockchain data should be organized. While incredibly powerful and efficient for frontend decentralized applications (dApps) that need to display user balances or token histories, creating and deploying a subgraph requires a significant engineering lift. Furthermore, querying The Graph involves relying on a network of decentralized indexers, which introduces unique economic and latency considerations.

Python and Web3.py, by contrast, offer the greatest degree of flexibility and execution power. While it requires you to manage your own node connection and handle the raw data parsing manually, it allows for real-time, programmatic execution. A Python script can detect an on-chain event, run a machine learning model to assess the risk, and immediately sign and broadcast a new transaction back to the blockchain to hedge a position, all within a matter of seconds. For businesses building proprietary algorithms, automated workflows, and deeply integrated backend systems, Python is usually the most practical choice.

Automating MiCA Compliance and Regulatory Reporting

For businesses operating within or providing services to the European Union, on-chain data analysis has firmly transitioned from an optional operational optimization tool into a strict, non-negotiable compliance mandate. The Markets in Crypto-Assets (MiCA) regulation has established comprehensive oversight and rigorous new standards for Crypto Asset Service Providers (CASPs), stablecoin issuers (Electronic Money Tokens and Asset-Referenced Tokens), and trading platforms.

Under the MiCA framework, systemic transparency and absolute market integrity are paramount. CASPs must demonstrate robust corporate governance, stringent safeguarding of client funds, and the advanced technological capacity to detect market manipulation, wash trading, or insider activity. This is precisely where automated Python analytics play a pivotal, mission-critical role.

Implementing On-Chain Transaction Monitoring

Traditional financial institutions have long relied on deeply integrated, monolithic transaction monitoring systems to comply with standard Anti-Money Laundering (AML) directives. In the Web3 space, executing this level of oversight requires continuous, programmatic surveillance of the blockchain layer itself.

Alongside MiCA, CASPs must comply with the EU's Travel Rule, introduced for crypto-assets by the recast Transfer of Funds Regulation (Regulation (EU) 2023/1113), and align their data practices with the EU’s General Data Protection Regulation (GDPR). When a user transfers funds from a hosted exchange wallet to a self-hosted private wallet, particularly when transactions exceed the €1,000 threshold, the service provider must be able to verify, record, and securely store the origin and destination data of those digital assets.

Using Web3.py, forward-thinking compliance teams can build modular, automated systems designed to satisfy these exact regulatory demands:

- Real-Time Address Screening: Python scripts can continuously monitor incoming and outgoing transactions, cross-referencing sender and receiver addresses against known global blacklists, sanctioned entity databases, or high-risk smart contracts in real-time.

- Capital Flow Traceability: Sophisticated recursive algorithms can trace the origin of funds through multiple transactional hops on the blockchain. This allows compliance officers to detect potential money laundering techniques, such as the use of decentralized mixing services (like Tornado Cash) or complex cross-chain asset bridges.

- Automated Audit Trail Generation: Scripts can automatically compile execution reports, detailed transaction logs, and internal risk assessments into tamper-evident, formatted digital records ready for immediate regulatory review.
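As an illustration of the screening and traceability points above, the sketch below walks backwards from a customer address through incoming transfers and reports any flagged addresses found within a bounded number of hops. It assumes the transfers have already been extracted from event logs into `(sender, recipient)` pairs and that the flagged set comes from your screening provider; the function names and placeholder labels such as `"0xMixer"` are hypothetical stand-ins for real addresses. It uses an iterative breadth-first search instead of recursion, which records the shortest hop distance for each address, and it ignores amounts and timing, both of which a production tracing engine must take into account.

```python
from collections import deque


def trace_upstream(transfers, target, max_hops=3):
    """
    Breadth-first search backwards from `target` through incoming transfers.
    Returns {address: hops} for every address that sent funds to `target`,
    directly or through intermediaries, within `max_hops` hops.
    """
    incoming = {}
    for sender, recipient in transfers:
        incoming.setdefault(recipient.lower(), []).append(sender.lower())

    start = target.lower()
    hops_from_target = {start: 0}
    queue = deque([start])
    while queue:
        address = queue.popleft()
        hops = hops_from_target[address]
        if hops == max_hops:
            continue  # do not expand beyond the hop limit
        for sender in incoming.get(address, []):
            if sender not in hops_from_target:
                hops_from_target[sender] = hops + 1
                queue.append(sender)

    del hops_from_target[start]
    return hops_from_target


def screen_sources(transfers, target, flagged, max_hops=3):
    """Return flagged addresses found upstream of `target`, with their hop distance."""
    flagged_lower = {address.lower() for address in flagged}
    upstream = trace_upstream(transfers, target, max_hops)
    return {addr: hops for addr, hops in upstream.items() if addr in flagged_lower}


# Placeholder labels stand in for real 0x addresses
transfers = [
    ("0xMixer", "0xA"),
    ("0xA", "0xB"),
    ("0xB", "0xCustomer"),
    ("0xOther", "0xCustomer"),
]
print(screen_sources(transfers, "0xCustomer", flagged={"0xMixer"}))
```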

The reality of modern crypto regulation is that failing to implement effective AML systems can lead regulators to withdraw a CASP’s authorization. Building these massive compliance systems entirely in-house using traditional enterprise software vendors can be prohibitively expensive and dangerously inflexible.

Instead, utilizing agile, modular Python architectures allows startups and established exchanges alike to deploy tailored, highly specific compliance engines. At Tool1.app, we leverage our deep expertise in custom Python automations to construct these exact types of modular, audit-ready compliance pipelines. We ensure that our clients meet the rigorous MiCA data standards seamlessly, without crippling their operational budgets or slowing down their transaction processing speeds.

Handling Reorganizations and Ensuring Data Redundancy

A critical technical challenge in on-chain data analysis that is frequently overlooked by novice developers is the phenomenon of chain reorganizations (reorgs). Until a block is finalized, it is not guaranteed to remain part of the canonical chain. Occasionally, due to network latency or late block proposals, validators briefly disagree about the head of the chain. The fork-choice rule eventually settles on one branch as canonical, and blocks on the abandoned branch are discarded, or “orphaned.” Since the Merge, deep reorgs are rare, but short reorgs of one or two blocks still occur.

If your Python script processes a block that is subsequently orphaned in a reorg, your database will contain false information. If you trigger a business action—such as releasing escrowed fiat funds—based on an orphaned block, your enterprise will suffer a direct financial loss.

Robust Python applications must account for this. When pulling critical data, scripts should wait for a certain number of block confirmations before treating a transaction as final, or read from the node’s “finalized” block tag, which marks blocks that cannot be reverted without at least one third of all staked ether being slashed. Furthermore, implementing an asyncio loop to subscribe to multiple different RPC nodes concurrently ensures that if one node falls out of sync with the main network, your application continues to receive accurate, up-to-date state information from redundant sources.
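The confirmation rule and a basic reorg check can be sketched in a few lines. `confirmed_range` only releases blocks that sit a chosen depth below the chain head, and `find_fork_point` compares the block hashes you stored at ingestion time with those the node currently reports, returning the first block that must be re-ingested. Both names are hypothetical, the hash strings are placeholders, and the depth of 12 blocks is an example value rather than a protocol constant; for the strongest guarantee, use the `finalized` block tag instead (for instance `w3.eth.get_block("finalized")` in Web3.py), which under normal conditions trails the head of the chain by two to three epochs, roughly 13 to 19 minutes.

```python
from typing import Dict, Optional, Tuple


def confirmed_range(last_processed: int, latest: int, confirmations: int = 12) -> Optional[Tuple[int, int]]:
    """
    Return the (start, end) range of unprocessed blocks that lie at least
    `confirmations` blocks below the chain head, or None if nothing is ready yet.
    """
    start = last_processed + 1
    end = latest - confirmations
    return (start, end) if start <= end else None


def find_fork_point(stored_hashes: Dict[int, str], canonical_hashes: Dict[int, str]) -> Optional[int]:
    """
    Compare the block hashes saved at ingestion time with the hashes the node reports now.
    Returns the lowest block number whose hash changed (everything from there on must be
    deleted and re-ingested), or None if the stored history still matches.
    """
    for number in sorted(stored_hashes):
        if canonical_hashes.get(number) != stored_hashes[number]:
            return number
    return None


print(confirmed_range(last_processed=100, latest=120))  # (101, 108)
stored = {100: "0xaa", 101: "0xbb", 102: "0xcc"}
reported = {100: "0xaa", 101: "0xb2", 102: "0xc2"}  # blocks 101+ were replaced by a reorg
print(find_fork_point(stored, reported))  # 101
```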

Designing these fault-tolerant, asynchronous systems is a complex engineering task, but it is an absolute necessity for enterprise-grade Web3 infrastructure.

Advanced Applications: DeFi Risk Management

Beyond simple transaction monitoring and regulatory compliance, the absolute frontier of Python Ethereum on-chain analysis involves dynamic risk management and predictive modeling.

The Decentralized Finance ecosystem is a highly interconnected web of smart contracts. A sudden price drop in a major asset like Ethereum can trigger a massive cascade of automated algorithmic liquidations across lending platforms such as Aave, Compound, or MakerDAO. For institutions participating in DeFi—whether as liquidity providers, borrowers, or yield farmers—monitoring this systemic risk is critical.

By continuously extracting historical vault performance, analyzing collateral balances in real-time, and measuring the depth of decentralized exchange liquidity pools, data scientists can engineer powerful features for predictive models. Python’s native integration with industry-standard machine learning libraries like Scikit-Learn, PyTorch, or TensorFlow makes it a natural environment for this evolution.

Analysts can build systems that simulate market stress tests. For example, a Python script could query a lending protocol’s smart contract to retrieve the current Loan-to-Value (LTV) ratios of all major borrowers. It could then run a localized simulation: “If the price of ETH drops by 15% in the next hour, which of these collateralized debt positions will fall below the liquidation threshold?”
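The 15% scenario can be sketched with an Aave-style health factor: risk-adjusted collateral value divided by debt, where a value below 1.0 means the position can be liquidated. This sketch relies on several simplifying assumptions. The Position records are hypothetical sample inputs rather than data read from a contract, non-ETH collateral is treated as price-stable, and each position carries a single blended liquidation threshold. Note that the liquidation threshold is distinct from the maximum LTV at which a user may borrow. On Aave V3, the Pool contract’s getUserAccountData reports a weighted-average threshold and the health factor per user.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Position:
    """Hypothetical borrower snapshot; in practice built from the lending protocol's contracts."""
    borrower: str
    eth_collateral: float          # collateral held in ETH
    other_collateral_usd: float    # non-ETH collateral, assumed price-stable in this sketch
    debt_usd: float
    liquidation_threshold: float   # e.g. 0.825: liquidatable once debt exceeds 82.5% of collateral value


def health_factor(p: Position, eth_price_usd: float) -> float:
    """Aave-style health factor: risk-adjusted collateral divided by debt (below 1.0 = liquidatable)."""
    if p.debt_usd == 0:
        return float("inf")
    collateral_usd = p.eth_collateral * eth_price_usd + p.other_collateral_usd
    return collateral_usd * p.liquidation_threshold / p.debt_usd


def stress_test(
    positions: List[Position], eth_price_usd: float, drop: float
) -> List[Tuple[str, float, float]]:
    """Return (borrower, HF now, HF after shock) for healthy positions pushed below 1.0."""
    shocked_price = eth_price_usd * (1 - drop)
    at_risk = []
    for p in positions:
        before = health_factor(p, eth_price_usd)
        after = health_factor(p, shocked_price)
        if before >= 1.0 > after:
            at_risk.append((p.borrower, before, after))
    return at_risk
```

For example, with ETH at 3,000 USD, a position holding 10 ETH against 23,000 USD of debt at an 82.5% threshold has a health factor of about 1.08 today but about 0.91 after a 15% drop, so stress_test would report it.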

Armed with this predictive intelligence, a business can automatically execute transactions to top up collateral, unwind risky positions, or actively participate in liquidations for profit, ideally before the broader market reacts.

Integrating Artificial Intelligence with On-Chain Data

The convergence of Web3 data analytics and advanced Artificial Intelligence represents the next major technological leap for business intelligence. While writing custom Web3.py scripts is incredibly powerful, it still requires dedicated engineering resources. The ultimate goal for executive decision-makers is to interact with this vast ocean of blockchain data seamlessly and intuitively.

Integrating Large Language Models (LLMs) with robust on-chain data pipelines—another core architectural service offered at Tool1.app—enables natural language querying of complex decentralized networks.

Imagine a sophisticated corporate risk dashboard where a Chief Compliance Officer does not need to understand hexadecimal encoding, contract ABIs, or Python syntax. Instead, they can simply type a request to an AI agent: “Analyze the last 72 hours of transaction history for our main corporate wallet, flag any transfers exceeding €10,000 sent to unverified contracts, and generate a compliance summary.”

Behind the scenes, the AI agent interprets this request, dynamically writes the necessary Web3.py filter parameters, communicates with the Ethereum RPC node to fetch the logs, processes the raw hex data into a structured Pandas dataframe, and returns a cleanly formatted, localized report directly to the officer’s screen.
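One reasonable design, sketched here as an assumption rather than a description of any specific product, is to have the agent emit structured parameters (wallet, time window, threshold) that deterministic Python code turns into an eth_getLogs filter. This avoids letting the model write arbitrary code. The sketch filters ERC-20 Transfer events by their well-known topic0 hash, decodes the raw hex fields, and flags large transfers to recipients outside an allow-list. The token_info price and decimals table and the verified_contracts set are hypothetical inputs that would come from a pricing feed and a contract-verification source. The block window assumes 12-second slots, so missed slots make it slightly wider than the requested 72 hours.

```python
from __future__ import annotations

# keccak256("Transfer(address,address,uint256)"), topic0 of every ERC-20 Transfer event
TRANSFER_TOPIC = "0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef"
SECONDS_PER_SLOT = 12  # post-Merge slot time; missed slots make this an approximation


def address_topic(address: str) -> str:
    """Left-pad a 20-byte address to the 32-byte form used in indexed topics."""
    hex_part = address.lower()
    if hex_part.startswith("0x"):
        hex_part = hex_part[2:]
    return "0x" + hex_part.rjust(64, "0")


def build_outgoing_transfer_filter(wallet: str, latest_block: int, hours: int) -> dict:
    """eth_getLogs filter for ERC-20 Transfers sent from `wallet`, across all token contracts."""
    blocks_back = hours * 3600 // SECONDS_PER_SLOT
    return {
        "fromBlock": max(0, latest_block - blocks_back),
        "toBlock": latest_block,
        # topic0 = event signature, topic1 = indexed `from`; `to` is left unconstrained
        "topics": [TRANSFER_TOPIC, address_topic(wallet)],
    }


def decode_transfer(log: dict) -> dict | None:
    """Decode one raw log (hex-string fields) into a transfer record, or None if not ERC-20."""
    topics = [t.lower() for t in log["topics"]]
    # ERC-721 reuses the same signature but indexes tokenId, giving 4 topics instead of 3.
    if len(topics) != 3 or topics[0] != TRANSFER_TOPIC:
        return None
    return {
        "token": log["address"].lower(),
        "from": "0x" + topics[1][-40:],
        "to": "0x" + topics[2][-40:],
        "raw_value": int(log["data"], 16),  # uint256 amount in the token's base units
        "block": log["blockNumber"],
    }


def flag_large_transfers(
    logs: list[dict],
    token_info: dict[str, tuple[int, float]],
    verified_contracts: set[str],
    threshold_eur: float = 10_000.0,
) -> list[dict]:
    """Flag transfers above threshold_eur whose recipient is not in verified_contracts.

    token_info maps a lowercase token address to (decimals, EUR price per token),
    both assumed to come from a hypothetical pricing/metadata source.
    """
    verified = {a.lower() for a in verified_contracts}
    flagged = []
    for log in logs:
        transfer = decode_transfer(log)
        if transfer is None or transfer["token"] not in token_info:
            continue  # skip non-ERC-20 logs and tokens we cannot price
        decimals, eur_price = token_info[transfer["token"]]
        transfer["value_eur"] = transfer["raw_value"] / 10**decimals * eur_price
        if transfer["value_eur"] > threshold_eur and transfer["to"] not in verified:
            flagged.append(transfer)
    return flagged
```

With Web3.py, the filter dict can be passed to w3.eth.get_logs. The returned topics and data typically arrive as HexBytes and need converting to 0x-prefixed hex strings before decoding. Many RPC providers cap the block range of a single eth_getLogs call, so a 21,600-block window may need to be split into chunks. The flagged records can be loaded into a pandas DataFrame with pd.DataFrame(flagged) for the report.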

This fusion of generative AI and deterministic blockchain data processing drastically reduces the friction of interacting with Web3. It democratizes access to on-chain intelligence, allowing non-technical stakeholders to leverage the full transparency of the blockchain for strategic decision-making.

Building the Future of Decentralized Intelligence

The Ethereum blockchain is much more than a platform for digital currency; it is an open, permissionless ledger containing the financial, operational, and transactional history of the world’s most robust decentralized economy. However, as we have explored, accessing, decoding, and interpreting that raw data requires highly specialized technical proficiency and a robust architectural strategy.

By leveraging the versatility of Python, the power of the Web3.py library, and modern data visualization techniques, businesses can transform raw hexadecimal on-chain data into actionable financial insights, real-time risk alerts, and automated, audit-ready compliance reports.

Whether your organization is navigating the complexities of the EU’s Markets in Crypto-Assets Regulation (MiCA), tracking institutional capital flows across decentralized exchanges, or optimizing algorithmic DeFi trading strategies, an automated, Python-driven infrastructure is a highly valuable strategic asset. As the Web3 ecosystem continues to mature and intertwine with traditional finance, the organizations that can read, analyze, and act upon on-chain data quickly and accurately will hold a decisive competitive advantage.

Secure Your Edge in the Web3 Economy

Need deep insights from the blockchain? Tool1.app offers advanced Web3 data analysis, bespoke Python automation, and enterprise-grade LLM integrations tailored specifically to your operational requirements. Do not let the complexity of on-chain data slow your innovation. Contact our engineering team today to build the custom infrastructure that will securely power your business into the decentralized future.
