7 Essential Security Measures to Protect Your WordPress Site from AI Bots

Table of Contents

- The Silent Siege: The Economics of AI Scraping and Server Exhaustion

- Implement Intelligent, Dynamic Rate Limiting at the Edge

- Ruthlessly Block Rogue AI User Agents at the Server Level

- Terminate XML-RPC to Prevent Brute-Force Amplification

- Deploy a Modern, Behavior-Based Web Application Firewall (WAF)

- Shield the WordPress REST API from Public Data Extraction

- Enforce Bot-Resistant, Invisible Behavioral Authentication

- Continuous Log Monitoring and Threat Intelligence via Python Automations

- Conclusion: Future-Proof Your Digital Infrastructure


The digital landscape has undergone a radical and irreversible transformation. As we navigate the complexities of the modern web in 2026, business owners and IT administrators face an entirely new class of automated threats. The internet is no longer merely a space where human users interact with content; it has become an expansive feeding ground for artificial intelligence crawlers, large language model (LLM) data miners, and incredibly aggressive scraper bots. Because WordPress powers a massive portion of the global internet, it is inherently the primary target for these advanced automated networks. Adapting to this reality is not optional—it is a critical operational necessity for survival.

In the past, the primary automated traffic on the web consisted of polite search engine crawlers indexing content for search results, or rudimentary malicious scripts looking for outdated plugins. Today, a new, far more aggressive entity dominates global bandwidth. AI bots do not just quietly index your site. They hammer servers with tens of thousands of concurrent requests, aggressively siphon proprietary data to train commercial models, rapidly drain server resources, and intelligently probe web applications for architectural vulnerabilities.

If you are a business leader searching for the most effective WordPress security tips 2026 has to offer, you must prioritize defense against these intelligent bots above all else. Relying on outdated security plugins, basic passwords, or legacy firewalls is no longer sufficient. AI bots operate with a scale, sophistication, and evasion capability that easily bypasses traditional static defenses.

At Tool1.app, a software development agency specializing in mobile/web applications, custom websites, Python automations, and advanced AI/LLM solutions for business efficiency, we frequently rescue enterprises whose digital infrastructure has buckled under the sheer weight of unregulated bot traffic. We have seen firsthand how failing to adapt to this new threat vector can result in catastrophic server outages, severely degraded user experiences, and devastating intellectual property theft. In this comprehensive, technical guide, we will dissect the nature of the AI bot threat, analyze its economic impact, and provide seven essential, enterprise-grade security measures to safeguard your WordPress site.

The Silent Siege: The Economics of AI Scraping and Server Exhaustion

Before implementing complex technical solutions and firewalls, it is imperative to deeply understand exactly what you are defending against and why these bots target your specific infrastructure. Not all bots are inherently malicious. Standard search engine crawlers like Googlebot or Bingbot remain essential for your site’s visibility, bringing in valuable organic search traffic. However, the new generation of AI bots exists primarily to serve the commercial interests of massive tech conglomerates or rogue data brokers, often at a direct and heavy financial cost to your business.

AI models, particularly advanced LLMs, require astronomically large datasets to function. To gather this data, companies deploy distributed networks of headless browsers and crawlers to traverse the web. These bots systematically scrape articles, product catalogs, customer reviews, pricing matrices, and proprietary research. While the companies training these models stand to make millions, the businesses hosting the scraped content are left footing the bill for the massive server bandwidth and computing power consumed by the bots.

AI bots do not browse like humans. When an LLM data-gathering bot targets your WordPress site, it attempts to download every single page, image, PDF, and text document as rapidly as your server’s hardware will allow. For a medium-sized e-commerce site, a SaaS platform, or a content-heavy digital publisher, this aggressive behavior can result in terabytes of unnecessary outbound data transfer in a matter of hours.

Consider the direct financial cost of bandwidth and dynamic cloud infrastructure. Let us examine the mathematics of a severe bot attack. If your enterprise cloud hosting provider dynamically scales resources and charges €0.08 per gigabyte of outbound data egress, a relentless botnet scraping 10 terabytes of data will add an unexpected €800 to your monthly hosting bill. Worse still, this massive influx of concurrent requests rapidly exhausts your server’s CPU and RAM allocation. Because WordPress dynamically generates pages using PHP and MySQL, every single bot request forces the database to work.

When legitimate, paying human customers attempt to access your site during a scraping event, they are met with agonizingly slow load times or immediate 503 Service Unavailable errors. In the e-commerce sector, website performance is directly tied to revenue. A mere one-second delay in page load time can lead to a drastic drop in conversion rates. For a business generating €10,000 a day, even a few hours of bot-induced sluggishness can result in thousands of euros in lost sales.

To calculate the potential financial damage of ignoring these threats, we can observe a simple business impact formula:

Total Monthly Loss = ( Excess Bandwidth in GB × €0.08 ) + ( CPU Auto-Scaling Surcharges ) + ( Downtime in Hours × Average Hourly Revenue )
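The formula above can be turned into a small calculator. The following is a minimal sketch: the function and parameter names are our own, the €0.08 egress price is the illustrative figure used earlier rather than any provider's actual tariff, and the sample inputs are made up for demonstration.

```python
def total_monthly_loss(excess_bandwidth_gb, autoscaling_surcharge_eur,
                       downtime_hours, avg_hourly_revenue_eur,
                       egress_price_per_gb_eur=0.08):
    """Estimate the monthly cost of bot traffic in euros."""
    bandwidth_cost = excess_bandwidth_gb * egress_price_per_gb_eur
    downtime_cost = downtime_hours * avg_hourly_revenue_eur
    return bandwidth_cost + autoscaling_surcharge_eur + downtime_cost


if __name__ == "__main__":
    # Illustrative inputs: 10 TB (10,000 GB) of scraped data, EUR 450 in
    # auto-scaling surcharges, and 3 hours of downtime for a shop that
    # earns EUR 10,000 per day (spread evenly over 24 hours).
    loss = total_monthly_loss(10_000, 450, 3, 10_000 / 24)
    print(f"Estimated monthly loss: EUR {loss:,.2f}")
```

Plugging in your own hosting invoice and revenue figures gives a rough number to weigh against the cost of the defenses described below.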

Beyond merely scraping content, bad actors are deploying AI-driven bots to actively hunt for security flaws. Traditional automated scanners used a static list of known exploits. Modern AI bots, however, can dynamically analyze your site’s HTTP responses, identify the specific plugins and themes you are running, and string together complex, multi-step attacks to bypass rudimentary firewalls. They are also highly efficient at attacking login forms: they replay username and password pairs from leaked databases (credential stuffing) and use AI to guess administrative passwords based on context and user behavior.

To prevent catastrophic financial loss and reputational damage, modern businesses must pivot from passive defense to proactive, intelligent security architectures. Here are the seven critical measures you need to implement immediately.

Implement Intelligent, Dynamic Rate Limiting at the Edge

Rate limiting is the fundamental process of restricting the number of requests a single user or IP address can make to your server within a specific timeframe. While standard rate limiting has been a web security staple for over a decade, protecting a site in the current landscape requires intelligent, behavior-based rate limiting applied explicitly at the server edge.

AI bots often utilize sprawling, decentralized residential proxy networks to disguise their true origin. They distribute their requests across thousands of unique IP addresses to evade basic, single-IP bans. However, even highly distributed bots often exhibit unnatural request speeds per connection—such as requesting a new URL every 0.2 seconds without ever requesting the associated CSS stylesheets or JavaScript files.

Many website owners make the critical mistake of relying on PHP-based WordPress plugins to handle rate limiting. This is an architectural flaw. By the time a PHP script processes the incoming request, connects to the database to check the IP’s history, and decides to block it, your server resources have already been consumed. To truly protect your infrastructure, rate limiting must happen at the web server level (Nginx or Apache) before the request ever reaches the WordPress application layer. By dropping abusive traffic at the edge, you save vital CPU cycles and RAM.

Practical Implementation (Nginx)

If your high-performance WordPress infrastructure relies on Nginx, you can use the limit_req_zone and limit_req directives to throttle aggressive scrapers. Nginx implements this limit with a “leaky bucket” algorithm. The configuration below limits the baseline rate of requests but uses the burst parameter to ensure that legitimate human users loading a complex page (which simultaneously requests dozens of images and scripts) are not accidentally blocked.

Nginx

# Define the rate limit memory zone in the HTTP block

# This allocates 10MB of RAM for state tracking (roughly 80,000 IPs on 64-bit platforms, 160,000 on 32-bit)

# The base rate is strictly limited to 2 requests per second per IP

limit_req_zone $binary_remote_addr zone=ai_bot_limit:10m rate=2r/s;

server {

listen 443 ssl http2;

server_name yourbusiness.com;

# Apply standard processing to static files to ensure fast delivery for humans

# Bots scraping images will not easily trigger the strict PHP rate limit

location ~* \.(jpg|jpeg|png|gif|ico|css|js)$ {

expires 365d;

access_log off;

}

# Apply strict rate limiting to dynamic PHP processing (where bots hit the database)

location ~ \.php$ {

# Allow a rapid burst of 15 requests to accommodate legitimate human browsing

# The 'nodelay' parameter serves the burst immediately; requests beyond it are rejected (503 by default)

limit_req zone=ai_bot_limit burst=15 nodelay;

include snippets/fastcgi-php.conf;

fastcgi_pass unix:/var/run/php/php8.3-fpm.sock;

}

}
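To build intuition for how a rate of 2 requests per second combines with a burst of 15, the throttling logic can be simulated in a few lines. This is a simplified model of leaky-bucket accounting with a burst allowance and no delay. The class and method names are our own, and it is not Nginx's actual implementation.

```python
class LeakyBucket:
    """Simplified per-client limiter: steady rate plus a burst allowance."""

    def __init__(self, rate_per_sec, burst):
        self.rate = rate_per_sec   # how fast the bucket drains
        self.burst = burst         # how many excess requests may queue up
        self.excess = 0.0          # current backlog, in requests
        self.last = None           # timestamp of the last accepted request

    def allow(self, now):
        """Return True if a request arriving at time `now` (seconds) passes."""
        if self.last is None:
            # First request from this client: start tracking with no backlog
            self.last = now
            return True
        elapsed = now - self.last
        # Drain the backlog for the elapsed time, then add this request
        excess = max(0.0, self.excess - self.rate * elapsed) + 1.0
        if excess > self.burst:
            return False  # over the limit: reject without updating state
        self.excess = excess
        self.last = now
        return True


if __name__ == "__main__":
    bucket = LeakyBucket(rate_per_sec=2, burst=15)
    # A scraper firing 20 requests in the same instant
    accepted = sum(bucket.allow(0.0) for _ in range(20))
    print(f"Accepted {accepted} of 20 simultaneous requests")
```

In this model, the first request plus the burst of 15 pass and the remaining four are rejected. After that the client regains capacity only at the steady rate, which is what makes sustained scraping slow while a normal page load passes untouched.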

Business Context and ROI: A prominent B2B wholesale client recently approached our agency after their custom platform repeatedly crashed during peak European business hours. Analyzing their server telemetry, we found AI scrapers pulling their deeply paginated product catalog and pricing matrix every two hours to feed a competitor’s dynamic pricing algorithm. By implementing strict Nginx rate limiting on dynamic endpoints, the database CPU usage instantly dropped by 75%. The site stabilized, the competitor was starved of data, and the client saved over €450 per month in emergency server auto-scaling costs.

Ruthlessly Block Rogue AI User Agents at the Server Level

When AI companies and data aggregators launch crawlers, they typically identify themselves via their HTTP “User-Agent” string. Prominent examples in the wild include GPTBot, ChatGPT-User, ClaudeBot, CCBot (Common Crawl), Amazonbot, Diffbot, and Bytespider.

Historically, website administrators relied on the robots.txt file located in the site’s root directory to ask bots politely not to scrape certain directories or to limit their crawl rate. You might add a User-agent: GPTBot line followed by a Disallow: / line. However, in 2026, robots.txt is strictly an honor system, a gentleman’s agreement for the web. It provides absolutely no technical barrier to an incoming network request.

While some highly reputable AI companies claim to respect it, countless rogue bots, customized corporate scrapers, secondary data brokers, and malicious clones simply ignore robots.txt instructions altogether. Relying on it is equivalent to putting a “Please Do Not Enter” sign on an unlocked bank vault. To truly protect your WordPress site, your proprietary content, and your server bandwidth, you must block these User-Agents directly at the web server level.

When the server detects an incoming request containing an AI bot’s signature, it forcefully terminates the connection immediately, returning a 403 Forbidden status before WordPress even boots up.

Practical Implementation (Apache and Nginx)

Here is how you can effectively block known AI data-mining bots at the infrastructure level. This list should be updated regularly as new bots emerge.

For Apache Servers (using .htaccess): If your WordPress installation uses Apache, you can leverage the mod_rewrite engine. Add these rules to the very top of your .htaccess file:

Apache

<IfModule mod_rewrite.c>

RewriteEngine On

# Define the user agents associated with known AI crawlers and LLM scrapers

# The [NC] flag ensures the match is case-insensitive

RewriteCond %{HTTP_USER_AGENT} (GPTBot|ChatGPT-User|ClaudeBot|Claude-Web|CCBot|anthropic-ai|Omgili|OmgiliBot|FacebookBot|Diffbot|Bytespider|PerplexityBot|DeepThink) [NC]

# The [F] flag immediately returns a 403 Forbidden status

# The [L] flag stops processing further rules to save server time

RewriteRule ^.* - [F,L]

</IfModule>

For Nginx Servers: In Nginx, you can achieve the exact same highly efficient result using an if statement within your primary server block:

Nginx

server {

listen 443 ssl http2;

server_name yourbusiness.com;

# Intercept and block known AI data scrapers instantly

if ($http_user_agent ~* (GPTBot|ChatGPT-User|ClaudeBot|Claude-Web|CCBot|anthropic-ai|Omgili|OmgiliBot|FacebookBot|Diffbot|Bytespider|PerplexityBot|DeepThink)) {

return 403;

}

# ... remainder of your server configuration ...

}
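Before deploying a block list like the ones above, it is worth checking the pattern against a handful of User-Agent strings so that you do not lock out real browsers or search engines. The sketch below mirrors the same case-insensitive substring match in Python. The function name is our own, the list is a subset of the one above, and the sample strings are illustrative rather than captured from live traffic.

```python
import re

# Subset of the crawler names used in the server rules above
BLOCKED_AGENTS = [
    "GPTBot", "ChatGPT-User", "ClaudeBot", "Claude-Web", "CCBot",
    "anthropic-ai", "Diffbot", "Bytespider", "PerplexityBot",
]

# Case-insensitive match anywhere in the header, like [NC] in Apache or ~* in Nginx
BLOCK_PATTERN = re.compile(
    "|".join(re.escape(name) for name in BLOCKED_AGENTS), re.IGNORECASE
)


def is_blocked(user_agent):
    """Return True if the User-Agent header matches the block list."""
    return bool(BLOCK_PATTERN.search(user_agent or ""))


if __name__ == "__main__":
    samples = [
        "Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.0)",
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
        "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",
        "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)",
    ]
    for ua in samples:
        print("BLOCK" if is_blocked(ua) else "allow", "-", ua)
```

Keep in mind that this only catches crawlers that identify themselves honestly. A bot that spoofs a browser User-Agent passes this check, which is why the later measures focus on behavior.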

Business Context and ROI: A prominent legal blog, generating substantial revenue through premium consultations, found that an AI company was scraping their highly researched, proprietary articles to train a specialized legal LLM. The AI company was monetizing the firm’s intellectual property without citing or compensating them. By auditing their access logs, the firm identified the specific User-Agents of the AI crawlers and instituted a blanket server ban. This instantly halted the intellectual property leakage, preserving the uniqueness of their brand and reducing their monthly outbound data transfer by 22%.

Terminate XML-RPC to Prevent Brute-Force Amplification

XML-RPC (xmlrpc.php) is a legacy remote procedure call protocol that was built into WordPress over a decade ago. Its original purpose was to allow remote publishing from third-party desktop blogging clients and to enable inter-blog communication features like trackbacks and pingbacks. In modern web development, the WordPress REST API has superseded XML-RPC for almost every use case, so most sites no longer need it. A few integrations, such as Jetpack and some older publishing clients, may still depend on it, so confirm that nothing on your site uses it before switching it off.

Despite this obsolescence, XML-RPC remains enabled by default on millions of WordPress installations to maintain backward compatibility. For AI bots and malicious automated scripts, this file is a glaring, massive vulnerability.

AI-driven botnets aggressively exploit xmlrpc.php because it allows for “amplification attacks.” Instead of attempting one password guess per HTTP request on your standard wp-login.php page (which would quickly trigger a standard rate limiter), a bot can use the system.multicall method within XML-RPC. This method allows the bot to submit hundreds, or even thousands, of username and password combinations in a single, consolidated XML payload. This drastically accelerates brute-force attacks and easily bypasses basic security plugins that only count one failed login per HTTP request.

Furthermore, attackers frequently exploit the pingback feature within XML-RPC to force your server to send requests to other servers, effectively weaponizing your WordPress installation to participate in Distributed Denial of Service (DDoS) attacks against third parties. This can quickly result in your server’s IP address being blacklisted by major ISPs and spam monitoring networks.

Leaving XML-RPC open is an unacceptable operational risk. Disabling it is a non-negotiable step in hardening your environment.
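To make the amplification concrete from the defender's side, the sketch below counts how many method calls are packed into a single XML-RPC request body. It is for illustration only. The function name and the example.login method are hypothetical, and Python's standard XML-RPC parser is not hardened against maliciously crafted XML, so a production log or WAF rule would need a safer parser.

```python
import xmlrpc.client


def count_multicall_calls(request_body):
    """Return how many method calls one XML-RPC request body carries."""
    params, method = xmlrpc.client.loads(request_body)
    if method == "system.multicall" and params and isinstance(params[0], list):
        # system.multicall takes a single array of {methodName, params} structs
        return len(params[0])
    return 1


if __name__ == "__main__":
    # Build a sample body that bundles 500 calls (hypothetical method name)
    calls = [
        {"methodName": "example.login", "params": ["admin", f"guess-{i}"]}
        for i in range(500)
    ]
    body = xmlrpc.client.dumps((calls,), methodname="system.multicall")
    print(f"One HTTP request, {count_multicall_calls(body)} bundled calls")
```

A rate limiter or security plugin that counts HTTP requests sees one event here, while the application may process hundreds of attempts. That gap is the reason to block the endpoint outright rather than throttle it.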

Practical Implementation

You must block access to the file at the server level to prevent the request from initiating a PHP process, and disable it within the application logic as a fail-safe.

Server Level (Apache .htaccess):

Apache

# Block all external network access to the XML-RPC endpoint

<Files xmlrpc.php>

# Apache 2.4+ syntax (Apache 2.2 used "Order Allow,Deny" with "Deny from all")

Require all denied

</Files>

Server Level (Nginx):

Nginx

# Deny all access to xmlrpc.php and turn off logging for these drops to save disk I/O

location = /xmlrpc.php {

deny all;

access_log off;

log_not_found off;

}

Application Level (WordPress functions.php): To ensure that plugins or legacy themes do not inadvertently rely on parts of the XML-RPC system, add the following snippet to your custom theme’s functions.php file or a dedicated security utility plugin:

PHP

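// Disable the XML-RPC methods that require authentication (this filter alone does not switch off the whole endpoint, which is why the server-level block above still matters)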

add_filter('xmlrpc_enabled', '__return_false');

// Remove the X-Pingback HTTP header from server responses to stop broadcasting its availability

add_filter('wp_headers', function($headers) {

unset($headers['X-Pingback']);

return $headers;

});

By completely sealing off this outdated endpoint, you eliminate one of the most frequently abused attack vectors on the platform, dramatically reducing the success rate of automated, AI-enhanced password guessing algorithms.

Deploy a Modern, Behavior-Based Web Application Firewall (WAF)

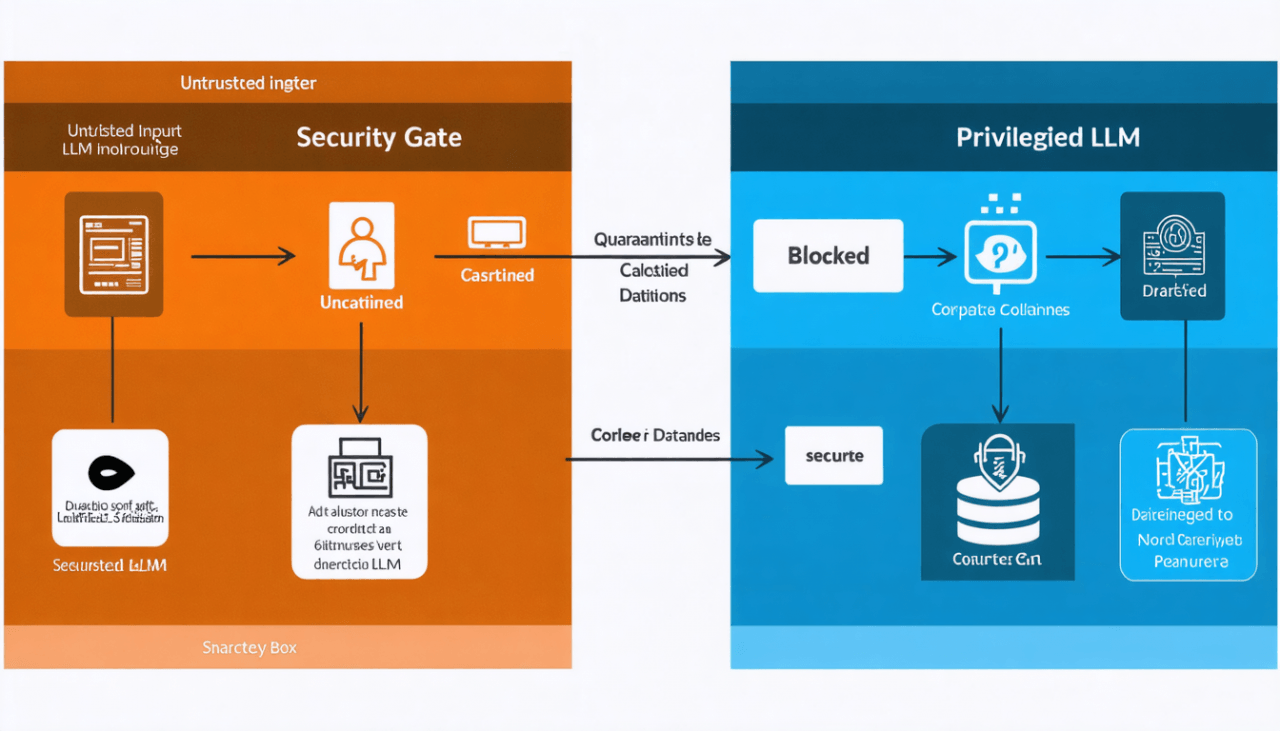

Traditional security plugins installed directly inside the WordPress dashboard (often called Endpoint Firewalls) operate on PHP. While they offer a baseline level of security, they suffer from a fatal architectural flaw: by the time the firewall script inspects the malicious traffic, the HTTP request has already reached your origin server, executed PHP code, and consumed precious CPU and RAM resources.

To defend against highly distributed, advanced AI bots, you need a cloud-based Web Application Firewall (WAF) sitting in front of your server, at the network edge. More importantly, you must utilize a next-generation, AI-powered WAF.

Legacy firewalls operate on static, signature-based rule sets. They inspect incoming traffic for specific SQL injection strings, known cross-site scripting (XSS) patterns, or blacklisted IP addresses. However, modern generative AI bots are highly dynamic. They rapidly rotate through hundreds of thousands of clean residential proxy IP addresses, randomize their attack vectors, alter their request pacing, and perfectly spoof their HTTP headers to simulate legitimate human traffic.

To counter AI, you must leverage AI. Modern edge-based WAFs use machine learning to establish a behavioral baseline of normal human traffic on your website. They ask dynamic questions:

- Are the mouse movements and scroll speeds human-like, or perfectly linear and robotic?

- Does the client execute JavaScript at the pace of a real browser, or with the instant, uniform timing of a script?

- Does the navigation pattern through the site match human reading speeds?

If an entity deviates from this behavioral baseline—for example, by perfectly navigating through 50 pages of your product catalog at exact 1.1-second intervals from a residential IP—the WAF intercepts the connection at the network edge, thousands of miles away from your actual server.
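One of the simplest behavioral signals, the regularity of request timing, can be sketched in a few lines. The example below flags a client whose intervals between requests are almost perfectly uniform. The function name and thresholds are our own illustrative choices, and a commercial WAF combines many more signals than this one.

```python
from statistics import mean, pstdev


def looks_automated(timestamps, min_requests=10, max_cv=0.1):
    """Flag a client whose request intervals are suspiciously regular.

    timestamps: request times in seconds for one client
    max_cv: highest coefficient of variation (stdev / mean) still treated
            as machine-like; human browsing is far more irregular
    """
    if len(timestamps) < min_requests:
        return False  # not enough data to judge
    ordered = sorted(timestamps)
    intervals = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    average = mean(intervals)
    if average == 0:
        return True  # every request arrived at the same instant
    return pstdev(intervals) / average <= max_cv


if __name__ == "__main__":
    bot = [i * 1.1 for i in range(50)]  # 50 pages at exact 1.1-second intervals
    human = [0, 4.2, 31.0, 38.5, 95.1, 120.7, 121.9, 180.3, 240.0, 262.4]
    print("bot flagged:", looks_automated(bot))
    print("human flagged:", looks_automated(human))
```

Sophisticated scrapers add random jitter to defeat exactly this check, so treat it as one input to a score rather than a verdict.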

When configuring a WAF for our clients at Tool1.app, we focus heavily on custom behavioral mitigation. For instance, if a regional e-commerce business only ships products within the European Union, we implement strict Geo-Blocking and Autonomous System Number (ASN) filtering rules. There is rarely a legitimate business reason for automated data center traffic originating from another continent to continuously scrape the site. Applying aggressive blocks to non-target regions drastically reduces automated scraping overhead.

Investing in a robust, enterprise-grade edge WAF typically costs between €50 and €300 per month. However, the Return on Investment (ROI) is substantial when you consider that it sharply reduces the risk of a catastrophic data breach, protects your intellectual property from LLM ingestion, and helps prevent crippling DDoS downtime that could cost thousands of euros an hour.

Shield the WordPress REST API from Public Data Extraction

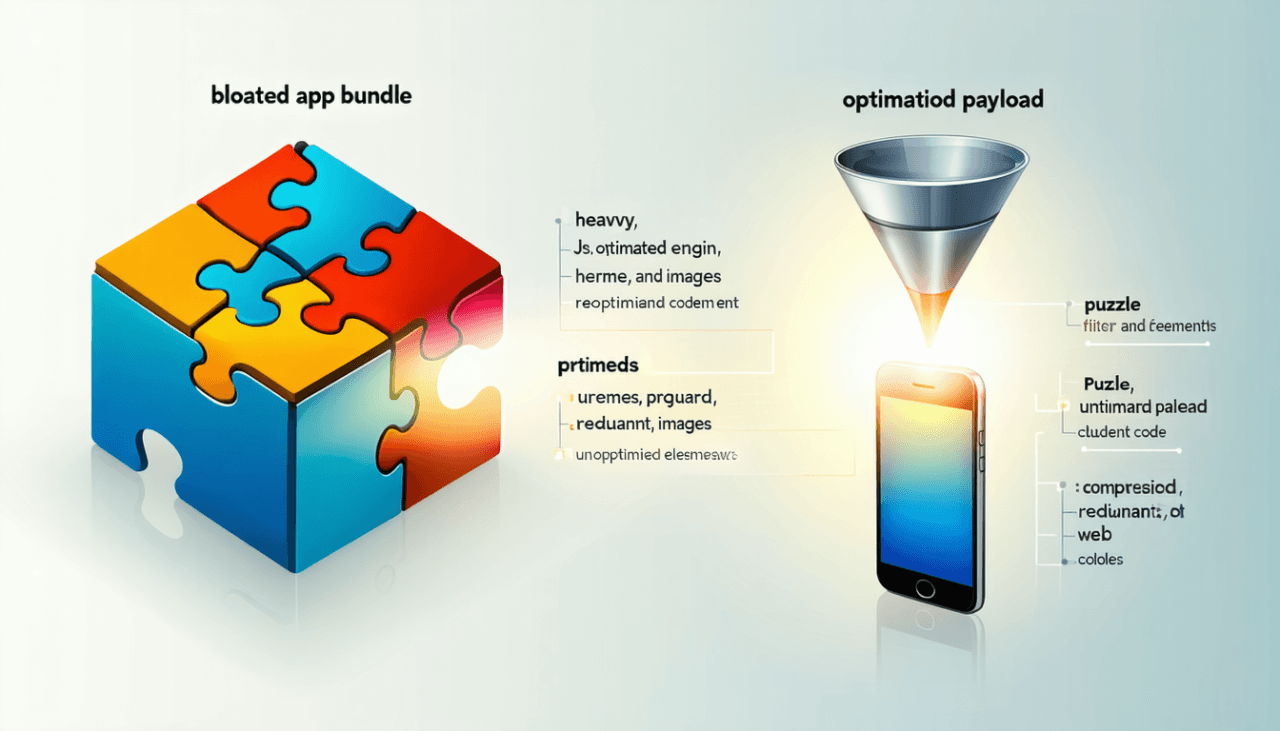

The introduction of the WordPress REST API (/wp-json/) revolutionized the platform, transforming it from a simple blogging CMS into a robust framework capable of powering modern decoupled (headless) web applications and custom mobile apps. However, out of the box, the REST API is an absolute goldmine for AI data scrapers, as it leaves an immense amount of internal data exposed to the public without requiring any authentication whatsoever.

Bots do not need to parse your complex HTML structure, download CSS, or execute your heavy JavaScript to read your content. They can simply send a lightweight HTTP GET request to endpoints like yourwebsite.com/wp-json/wp/v2/posts and instantly receive your entire content catalog. This includes publishing dates, tags, categories, and full article text, all beautifully formatted in structured JSON. This clean, raw data format is exactly what AI training pipelines prefer.

Furthermore, malicious scrapers can query /wp-json/wp/v2/users to enumerate the exact usernames of your site’s authors and administrators. Providing an attacker with a verified list of usernames gives them half of the credentials needed to execute a successful targeted brute-force attack.
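You can audit your own site for this leak by requesting the users endpoint anonymously and inspecting the response. The helper below is a minimal sketch that interprets such a response. The function name is ours, fetching the response is left to whatever HTTP client you prefer, and it assumes each user object carries a slug field, as on a default installation.

```python
import json


def exposed_usernames(status_code, body):
    """Return the user slugs leaked by an anonymous /wp-json/wp/v2/users response."""
    if status_code != 200:
        return []  # endpoint is restricted or unavailable
    try:
        data = json.loads(body)
    except json.JSONDecodeError:
        return []  # not JSON, e.g. a WAF challenge page
    if not isinstance(data, list):
        return []  # an error object rather than a user list
    return [user["slug"] for user in data
            if isinstance(user, dict) and "slug" in user]


if __name__ == "__main__":
    # Illustrative response body, not taken from a real site
    sample = '[{"id": 1, "name": "Site Admin", "slug": "admin"}]'
    leaked = exposed_usernames(200, sample)
    print("Exposed usernames:", leaked if leaked else "none")
```

An empty result after hardening is the outcome you want. Any slugs returned are usernames an attacker can feed straight into a login attack.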

Practical Implementation

Unless your business architecture actively utilizes a headless frontend that absolutely requires public, anonymous API access, you must rigorously restrict the REST API so that sensitive endpoints are only available to securely authenticated (logged-in) users.

You can implement this defense by adding a custom function to your WordPress architecture:

PHP

// Hook into the REST API authentication pipeline

add_filter( 'rest_authentication_errors', 'secure_restrict_rest_api_access' );

function secure_restrict_rest_api_access( $result ) {

// If a previous security check has already produced an error, pass it through unchanged

if ( ! empty( $result ) ) {

return $result;

}

// Check if the current entity making the request is logged into WordPress

if ( ! is_user_logged_in() ) {

// Return a standard HTTP 401 Unauthorized status for anonymous requests

return new WP_Error(

'rest_unauthorized',

'Critical Security Notice: Access to the REST API is restricted to authenticated users only.',

array( 'status' => 401 )

);

}

// If the user is authenticated, allow the API request to proceed

return $result;

}

Business Context and ROI: Consider a publishing client of ours who operates a premium, subscription-based financial news outlet. They realized that their deeply researched, paywalled articles were appearing on AI aggregator tools almost instantly after publication. Upon auditing their infrastructure, we discovered that while their HTML frontend had a robust, impenetrable paywall, their WordPress REST API was fully exposed. Bots were fetching the raw article content in JSON format, bypassing the paywall entirely. By locking down the REST API to authenticated subscribers, we immediately stopped the data hemorrhage and preserved their €30,000+ monthly recurring subscription revenue.

Enforce Bot-Resistant, Invisible Behavioral Authentication

Forms are the most vulnerable interaction points on your WordPress site. Login pages (wp-login.php), WooCommerce checkout forms, user registration portals, and comment sections are prime targets for AI bots executing credential stuffing and spam campaigns. Credential stuffing occurs when bots take massive lists of leaked usernames and passwords from unrelated corporate data breaches and rapidly test them against your login portal.

For years, the standard industry response to automated form submissions was the visual CAPTCHA, which forces users to identify traffic lights, crosswalks, motorcycles, or distorted text. Unfortunately, in 2026, CAPTCHAs are effectively obsolete as a bot barrier. Modern AI computer vision models can solve complex image-based puzzles quickly, often with a higher degree of accuracy than human beings. Furthermore, traditional visual CAPTCHAs create severe friction, frustrating legitimate customers, reducing digital accessibility, and increasing shopping cart abandonment rates on e-commerce platforms.

To secure your entry points, you must upgrade to bot-resistant, invisible behavioral authentication systems.

Implementing Cryptographic Proof-of-Work Challenges

Solutions like Cloudflare Turnstile, or custom silent validation systems, operate almost entirely in the background. In most cases they do not ask the user to click anything or solve frustrating puzzles. Instead, when a browser requests your page or attempts to submit a form, the security layer injects a highly obfuscated piece of JavaScript. This script forces the visitor’s device to perform a cryptographic calculation, such as a Proof-of-Work challenge, before the server accepts the HTTP request.

For a human user on a standard smartphone or laptop, this calculation takes roughly 50 to 100 milliseconds. The user notices absolutely no delay, resulting in a flawless, frictionless experience. However, for a malicious botnet operator trying to aggressively scrape 20,000 pages concurrently or test 50,000 passwords, forcing their automated servers to perform heavy cryptographic math for every single request spikes their CPU usage to unsustainable levels.

By forcing the bots to expend massive computational resources, you completely alter their economic incentives. If attacking your site costs the bot operator €100 in cloud compute power but the extracted data is only worth €5, they will abandon your domain and move on to a softer, unprotected target.
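The asymmetry behind this economic argument is easy to demonstrate. The sketch below implements a generic hashcash-style Proof-of-Work: the client must search for a nonce, while the server verifies the answer with a single hash. The function names, the challenge string, and the difficulty are our own assumptions for illustration, and this is not how Turnstile or any specific product works internally.

```python
import hashlib
from itertools import count


def leading_zero_bits(digest):
    """Count the leading zero bits of a hash digest."""
    return len(digest) * 8 - int.from_bytes(digest, "big").bit_length()


def verify(challenge, nonce, difficulty_bits):
    """Server side: a single hash confirms the client did the work."""
    digest = hashlib.sha256(f"{challenge}:{nonce}".encode()).digest()
    return leading_zero_bits(digest) >= difficulty_bits


def solve(challenge, difficulty_bits):
    """Client side: brute-force a nonce, about 2**difficulty_bits hashes on average."""
    for nonce in count():
        if verify(challenge, nonce, difficulty_bits):
            return nonce


if __name__ == "__main__":
    challenge = "session-abc123"  # hypothetical per-request token from the server
    nonce = solve(challenge, difficulty_bits=16)
    print("nonce found:", nonce, "valid:", verify(challenge, nonce, 16))
```

Each extra difficulty bit doubles the client's expected work while the server's verification cost stays constant. A single visitor barely notices, but an operator issuing tens of thousands of requests pays the cost every time.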

Furthermore, for all administrative accounts, Multi-Factor Authentication (MFA) or Passkeys (WebAuthn) should be strictly enforced. A stolen or guessed password is then no longer enough to log in on its own, which makes automated credential stuffing against those accounts largely ineffective.

Continuous Log Monitoring and Threat Intelligence via Python Automations

Static foundational defenses—such as blocking specific user agents, restricting APIs, and disabling legacy protocols—are absolutely essential. However, they are inherently reactive. To maintain a truly secure, enterprise-grade environment in the long term, you must have deep, granular visibility into what is actually hitting your server on a daily basis.

AI bot operators constantly adapt. They cycle their proxy IP addresses, dynamically spoof their user agents to mimic legitimate browsers (like Chrome or Safari), and throttle their own request velocity to blend in with normal human traffic, allowing them to bypass basic rate limits and standard WAF rules.

To catch advanced anomalies and stealthy scrapers, businesses must leverage automated log analysis. Web servers generate access logs (access.log) that record the exact details of every single request made to the server. By automating the parsing and analysis of these massive log files, you can detect highly sophisticated bots that fly under the radar. However, manually parsing through gigabytes of text logs is an impossible task for a human administrator.

This is an area where custom automation is revolutionary. At Tool1.app, we specialize in building custom Python automations to streamline complex business tasks, including proactive security monitoring and threat intelligence.

Using Python data processing libraries, we build lightweight daemon scripts that continuously stream server logs, mathematically model traffic patterns, and dynamically update firewall rules in real-time. We can even integrate powerful advanced reasoning LLMs, such as DeepThink, into the Python pipeline to perform semantic analysis on unusual query strings, predicting and blocking zero-day injection attacks based on contextual anomaly detection before they execute.

Practical Implementation Example

Here is a simplified, conceptual example of how a Python automation script can quickly parse an Nginx access log, identify stealthy IP addresses that are exceeding normal human behavioral thresholds, and prepare them for an automated firewall ban:

Python

import re
from collections import Counter

# Define the absolute path to your Nginx or Apache access log
LOG_FILE = '/var/log/nginx/access.log'

# Number of dynamic requests in the analyzed log above which an IP is flagged
REQUEST_THRESHOLD = 600

# Static asset extensions to skip, so that only hits on dynamic pages are counted
STATIC_EXTENSIONS = ('.css', '.js', '.png', '.jpg', '.jpeg', '.gif', '.ico')

# Extract the client IPv4 address and the request path from a combined-format log line
LOG_PATTERN = re.compile(r'^(\d{1,3}\.\d{1,3}\.\d{1,3}\.\d{1,3}) .*?"[A-Z]+ (\S+)')


def analyze_threat_logs(file_path):
    ip_counter = Counter()
    try:
        with open(file_path, 'r', errors='replace') as file:
            for line in file:
                match = LOG_PATTERN.match(line)
                if not match:
                    continue
                ip, path = match.groups()
                # Ignore requests for static assets (query string stripped first)
                # to isolate hits targeting dynamic PHP processing, where bots inflict the most damage
                if path.split('?', 1)[0].lower().endswith(STATIC_EXTENSIONS):
                    continue
                ip_counter[ip] += 1
    except FileNotFoundError:
        print(f"Critical Error: Log file not found at {file_path}")
        return

    print("--- Proactive Threat Intelligence Report ---")
    print("-" * 50)
    for ip, count in ip_counter.most_common(10):
        # If an IP requests an impossibly high number of dynamic pages without assets, it is flagged
        if count > REQUEST_THRESHOLD:
            print(f"CRITICAL ALERT: Bot identified at IP {ip} with {count} dynamic requests.")
            # In a production environment, this script securely calls the WAF API
            # to dynamically add the IP to a global drop list, isolating the threat instantly.
            # requests.post('https://api.firewall.local/ban', data={'ip': ip})
        else:
            print(f"Monitoring: IP {ip} generated {count} requests.")


if __name__ == "__main__":
    # In a true enterprise deployment, this runs continuously as a background systemd service
    analyze_threat_logs(LOG_FILE)

By integrating custom Python automations into your backend infrastructure, you transform your WordPress site from a passive target into an active, self-healing security ecosystem. It autonomously learns from the traffic it receives, identifies malicious AI actors, and neutralizes them before they can degrade your server performance or steal your proprietary data. Leveraging Python for infrastructure defense gives you a massive, proactive advantage over standard, out-of-the-box WordPress setups.

Conclusion: Future-Proof Your Digital Infrastructure

The rapid integration of artificial intelligence into web scraping, data harvesting, and cyber-attacks is not a passing trend; it is the new baseline reality of the internet. As AI bots become faster, smarter, and more relentless in their pursuit of data, the passive security strategies of the past will inevitably fail.

By implementing intelligent rate limiting at the server edge, ruthlessly blocking rogue user agents, shutting down outdated amplification vectors like XML-RPC, deploying modern behavioral WAFs, locking down your REST API endpoints, utilizing silent cryptographic challenges, and automating your log analysis with custom Python scripts, you create a highly robust, multi-layered defense architecture.

Protecting your WordPress site from these advanced automated threats is not just an IT task; it is a critical, high-stakes business strategy. Every euro saved on bandwidth overages, every piece of proprietary intellectual property secured, and every minute of server downtime avoided directly protects your bottom line and preserves your brand’s reputation.

Navigating these complex configurations requires extreme technical precision. A single misconfigured regex rule in an .htaccess file or a poorly designed REST API block can inadvertently lock out your legitimate human customers, break custom mobile applications, or crash your site entirely. Security implementations must be rigorously engineered, deployed in staging environments, and monitored by experienced software professionals.

Is Your Site Secure? Get a Comprehensive Security Audit Today

Do not wait for your server to buckle under the pressure of an AI bot swarm, or for your proprietary data to be compromised, before evaluating your defenses. If you are experiencing unexpected server load, suspect your valuable content is being aggressively scraped by language models, or simply want the ultimate peace of mind that comes with enterprise-grade infrastructure, you need specialized expertise.

The engineering team at Tool1.app is ready to assist. Whether you need an immediate, deep-dive security audit, a complete architectural hardening of your WordPress platform, the development of highly secure custom web applications, or custom Python automations tailored to streamline your business efficiency, we provide scalable solutions designed perfectly for the AI era. Contact Tool1.app today to schedule a detailed consultation. Let us fortify your digital infrastructure, block the bots, and ensure your business scales safely, securely, and profitably into the future.
