Small Language Models for Mobile: The Ultimate Guide to On-Device AI

Table of Contents

- The Edge Intelligence Revolution

- The Iron Triangle of Mobile AI: Latency, Cost, and Privacy

- The 2026 Mobile Model Landscape

- Technical Integration: iOS (Swift & Core ML)

- Technical Integration: Android (MediaPipe & AI Edge)

- Advanced Architecture: On-Device RAG

- Cost Analysis and ROI

- The Deployment Pipeline: From Training to Device

- Real-World Use Cases

- Conclusion: The Future is Local


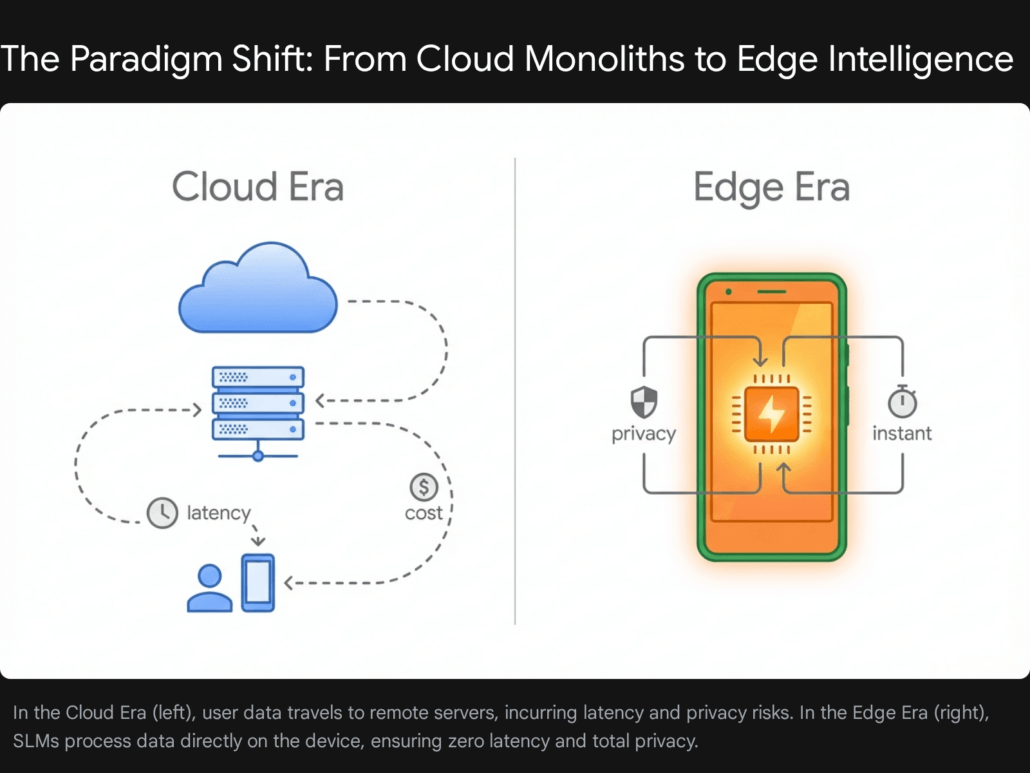

The Edge Intelligence Revolution

The trajectory of artificial intelligence has historically been defined by a philosophy of “bigger is better.” For the better part of a decade, the industry measured progress in trillions of parameters, requiring colossal data centers, energy consumption rivaling small nations, and a tethered connection to the cloud. However, as we navigate the technological landscape of 2026, a counter-movement has not only emerged but has become the dominant force in consumer applications: the rise of Small Language Models (SLMs).

This shift represents more than a mere optimization; it is a fundamental architectural realignment of how intelligence is delivered to the end-user. We are moving from a centralized model, where intelligence resides in distant server farms, to a decentralized model, where powerful reasoning capabilities are embedded directly into the devices in our pockets. The capability to run high-fidelity generative AI on mobile devices—smartphones, tablets, and wearables—has transitioned from a research curiosity to a business imperative.

For mobile developers, product architects, and CTOs, this transition offers an escape from the constraints of cloud-dependency. The latency of network round-trips, the exorbitant costs of token-based billing at scale, and the looming specter of data privacy regulations have created a “cloud ceiling” for mobile innovation. SLMs shatter this ceiling. By leveraging the massive parallel processing power of modern Neural Processing Units (NPUs) found in chipsets like the Apple A18 Pro and Qualcomm Snapdragon 8 Elite, applications can now perform complex reasoning, translation, and content generation locally, instantly, and privately.

At Tool1.app, we observe this transformation daily. Developers are no longer asking if they should deploy on-device models, but how to manage the complex lifecycle of quantization, conversion, and hardware-specific optimization. This report serves as a definitive guide to this new era, dissecting the technical realities, business economics, and strategic advantages of Small Language Models for mobile.

Defining the “Small” in SLM

In the context of 2026 hardware, the definition of an SLM has crystallized around parameter counts that balance reasoning capability with memory bandwidth constraints. While “Large” Language Models (LLMs) like GPT-4 or Claude 3 Opus are widely believed to span hundreds of billions of parameters or more (their vendors do not publish exact figures), SLMs targeted for mobile deployment typically fall within the 1 billion to 7 billion parameter range.

This specific range is not arbitrary; it is dictated by the physical RAM available on consumer devices. A 7-billion parameter model, quantized to 4-bit precision, requires approximately 3.5 to 4.5 GB of RAM to load. Given that high-end smartphones in 2026 typically ship with 12GB to 16GB of unified memory (shared between CPU, GPU, and NPU), a 7B model represents the upper limit of what can run comfortably alongside the operating system and other active applications. Models in the 1B to 4B range, such as Google’s Gemma 3n E2B or Microsoft’s Phi-3 Mini (3.8B), sit in the “sweet spot,” consuming roughly 1-2 GB of memory at 4-bit precision while delivering performance that rivals the cloud-based giants of just a few years ago.
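The RAM figures above follow from simple arithmetic: raw weight memory is parameters × bits per weight ÷ 8. A minimal Kotlin sketch, assuming a flat overhead allowance for runtime buffers (the 15% default is an illustrative assumption, not a measured value) and leaving out the KV cache:

```kotlin
// Raw weight memory: parameters x bits per weight / 8 bits per byte.
fun weightBytes(parameters: Double, bitsPerWeight: Int): Double =
    parameters * bitsPerWeight / 8.0

// Adds an illustrative overhead allowance (runtime buffers, tensors kept at
// higher precision, etc.). The 15% default is an assumption, not a measurement,
// and the KV cache for long contexts comes on top of this.
fun estimatedFootprintGB(
    parameters: Double,
    bitsPerWeight: Int,
    overheadFraction: Double = 0.15,
): Double = weightBytes(parameters, bitsPerWeight) * (1.0 + overheadFraction) / 1e9

val sevenB4BitGB = estimatedFootprintGB(7e9, 4)      // 3.5 GB raw, about 4.0 GB with overhead
val phi3Mini4BitRawGB = weightBytes(3.8e9, 4) / 1e9  // 1.9 GB of weights for 3.8B parameters
val threeBFp16RawGB = weightBytes(3e9, 16) / 1e9     // 6.0 GB: why FP16 is too heavy for phones
```

The estimate only covers weights; the next thing to budget for is the KV cache, which grows with context length.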

It is crucial to understand that “small” does not imply “incapable.” Through techniques like knowledge distillation (where a massive teacher model trains a smaller student model) and training on high-quality, textbook-grade datasets, SLMs have achieved a remarkable density of capability. They are precision tools, honed for efficiency and specific domain tasks, rather than blunt, general-purpose instruments.

The Iron Triangle of Mobile AI: Latency, Cost, and Privacy

Why are enterprises and developers rushing to move AI workloads to the edge? The motivation is driven by three distinct pressures that cloud-based solutions struggle to alleviate: Latency, Cost, and Privacy. These three factors form the “Iron Triangle” of mobile AI deployment, and SLMs address all three simultaneously.

Latency: The Physics of User Experience

In the realm of mobile user experience (UX), speed is not merely a feature; it is the foundation of usability. The threshold for perceived instantaneity in human-computer interaction is approximately 100 milliseconds. Traditional cloud-based LLM interactions often violate this threshold by orders of magnitude.

When a mobile app relies on a cloud API, the data flow involves:

- Upload: Sending the prompt and context (often including images or large text blocks) to the server.

- Queueing: Waiting for the cloud inference provider to allocate a GPU.

- Processing: The heavy lifting of token generation.

- Download: Streaming the generated tokens back to the device.

This round-trip often results in latencies ranging from 2 to 5 seconds, depending on network conditions. In a fluctuating 5G or 4G environment, or worse, on public Wi-Fi, this can degrade to 10 seconds or total failure. Such delays break the “flow state” of the user, making features like real-time grammar correction, voice translation, or predictive UI feel sluggish and disjointed.

On-device SLMs eliminate the network variable entirely. The data travels from the device’s memory to the NPU and back, a distance measured in millimeters rather than miles. Current benchmarks on 2025-2026 hardware demonstrate the efficacy of this approach:

- Apple Silicon Performance: Research indicates that Llama-3-8B models running on M-series chips can achieve decoding speeds of approximately 33 tokens per second. This is faster than the average human reading speed, creating an experience of immediate responsiveness.

- Snapdragon Capabilities: Qualcomm’s Snapdragon 8 Elite NPU has demonstrated throughputs exceeding 100 tokens per second for optimized models, and up to 11,000 tokens per second during the pre-fill phase (processing the initial prompt).
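These throughput figures translate directly into response time: total time is roughly prompt tokens ÷ pre-fill speed plus output tokens ÷ decode speed. A minimal sketch using the Snapdragon numbers quoted above; the 500-token prompt and 150-token reply are illustrative assumptions, and real devices also throttle under sustained load:

```kotlin
// Back-of-the-envelope on-device generation time.
// Pre-fill processes the whole prompt in parallel; decode emits one token at a time.
data class Throughput(val prefillTokensPerSec: Double, val decodeTokensPerSec: Double)

fun timeToFirstTokenMs(promptTokens: Int, t: Throughput): Double =
    promptTokens / t.prefillTokensPerSec * 1000.0

fun totalGenerationMs(promptTokens: Int, outputTokens: Int, t: Throughput): Double =
    timeToFirstTokenMs(promptTokens, t) + outputTokens / t.decodeTokensPerSec * 1000.0

// Figures quoted for the Snapdragon 8 Elite: ~11,000 tok/s pre-fill, ~100 tok/s decode.
val snapdragon = Throughput(prefillTokensPerSec = 11_000.0, decodeTokensPerSec = 100.0)

// Illustrative request: 500-token prompt, 150-token reply.
val ttftMs = timeToFirstTokenMs(500, snapdragon)      // about 45 ms
val totalMs = totalGenerationMs(500, 150, snapdragon) // about 1.5 s
```

Under these assumptions the first token arrives well inside the 100 ms threshold, while the full reply streams in over roughly a second and a half, which is why streaming output matters even on-device.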

This capability unlocks “real-time” AI applications that were previously impractical. An app can now analyze a live camera feed and generate descriptive commentary as it goes, or translate a spoken conversation with minimal lag, creating a seamless interaction that feels magical rather than mechanical.

Privacy: The Regulatory and Trust Advantage

As digital privacy regulations tighten globally (GDPR in Europe, CCPA in California, various AI Acts), the liability associated with processing user data in the cloud grows. For applications dealing with sensitive categories—health, finance, legal, or personal communications—sending data to a third-party inference provider presents a significant compliance hurdle and a security risk.

On-device SLMs offer a solution through data sovereignty. When the inference happens locally:

- Data never leaves the device: The user’s photos, health metrics, and financial records are processed in system memory and immediately discarded or stored in a local encrypted sandbox. There is no transmission, and thus no interception risk.

- Reduced Attack Surface: Cloud databases are high-value targets for cyberattacks. Distributed data storage across millions of individual devices, protected by hardware-backed security (like Apple’s Secure Enclave, Android’s hardware-backed Keystore, or Samsung Knox), is inherently more resilient to mass breaches.

- User Trust: In an era of skepticism regarding how tech giants use data to train future models, on-device AI offers a clear contract: “Your data trains nothing; it only serves you.” This transparency is becoming a competitive differentiator for consumer apps.

Cost: Escaping the “Token Tax”

The economic model of cloud AI is based on marginal cost: every single interaction costs money. Whether it is per-token billing (e.g., $0.03 per 1k tokens) or provisioned throughput units, the cost scales linearly with usage. For a successful app with millions of active users, this “success tax” can be debilitating.

Consider a mobile application with 1 million daily active users, where each user performs just 5 AI interactions per day. In a cloud model, the developer pays for 5 million transactions daily. If the app goes viral, costs explode instantly, potentially outpacing revenue growth.

On-device AI fundamentally alters this cost structure:

- Shift to Fixed Costs: The cost of on-device AI is front-loaded in the engineering phase (training, fine-tuning, quantization, and integration). Once the model is deployed to the user’s device, the marginal cost of inference is zero for the developer.

- Distributed Compute: The developer is effectively leveraging the distributed computing power of their user base. The user “pays” for the inference with a fraction of their battery life and device processor time, a trade-off most are willing to accept for free, private, and fast features.

- Predictability: CFOs favor predictable operational expenses (OpEx). On-device models remove the volatility of cloud billing, allowing for stable financial planning regardless of usage spikes.

Cloud LLM vs. On-Device SLM: The Business Impact Matrix


| Feature | Cloud LLM | On-Device SLM |
|---|---|---|
| Latency | Dependent on network connection. Can suffer from server latency and traffic. | Eliminates network latency. Instant, real-time responses. |
| Cost Model | Pay-per-token (variable). Unpredictable and escalates rapidly at scale. | Engineering/hardware (fixed). Predictable operating costs after initial investment. |
| Privacy | Data sent to cloud. Third-party security reliance; compliance risks. | Data stays on device. Full control; sensitive info never leaves. |
| Offline Access | Requires active internet connection. Fails without connectivity. | Full offline capability. Works anywhere without network. |
| Implementation | Low barrier to entry. Fast iteration; managed by providers. | High upfront engineering. Requires optimization for hardware constraints. |

Comparison of Cloud LLMs and On-Device SLMs across critical dimensions. SLMs offer predictable costs and superior privacy, while Cloud LLMs provide elastic scale but higher latency and variable costs.

Offline Reliability

Finally, the mobile context is inherently unstable. Users enter elevators, board subways, fly in airplanes, and travel to rural areas. A cloud-dependent AI feature effectively breaks in these scenarios. On-device SLMs provide offline reliability. A translation app must work in a foreign country without data roaming; a document summarizer must work on a flight. SLMs ensure that the core value proposition of the application remains intact regardless of connectivity, a critical factor for retention and utility.

The 2026 Mobile Model Landscape

The hardware readiness for SLMs has been matched by a rapid evolution in model architecture. The landscape in 2026 is populated by highly capable, open-weight models designed specifically for the edge. Developers must navigate these options to select the engine that best fits their application’s needs.

Google: Gemma 3n and Gemini Nano

Google has established a formidable presence in the mobile AI space with its dual strategy of open models and system-integrated services.

- Gemma 3n: This is Google’s flagship family of open models for the edge. The “n” stands for efficiency. Available in E2B and E4B sizes (the “E” denotes effective parameters), Gemma 3n is notable for being multimodal by design. Unlike previous generations that required separate vision encoders, Gemma 3n natively understands text, images, and audio. It uses the MatFormer (Matryoshka Transformer) architecture, a nested structure that allows developers to deploy a single model that can scale its compute usage up or down (e.g., acting as a 2B model to save battery or a 4B model for higher accuracy) without swapping weights.

- Gemini Nano: This is the system-level model built into Android 14 and higher (via AICore). It is a managed service, meaning the OS handles the model updates and execution. It is highly optimized for Google’s Tensor and partner chips but offers less flexibility for developers regarding fine-tuning compared to Gemma.
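The nesting idea behind MatFormer can be illustrated on a single feed-forward block: the smaller sub-model uses a prefix of the same hidden neurons that the larger one uses, so one set of weights serves both. A simplified sketch; the ReLU activation and tiny matrices are illustrative, and Gemma 3n’s actual layers and the way it picks sub-model sizes differ in detail:

```kotlin
// Feed-forward block: `up` is hidden x dModel (one row per neuron),
// `down` is dModel x hidden (one column per neuron).
class NestedFeedForward(private val up: Array<DoubleArray>, private val down: Array<DoubleArray>) {
    val maxHidden: Int get() = up.size

    // Run the block using only the first `activeHidden` neurons.
    // Smaller widths reuse a prefix of the same weights: no separate model is loaded.
    fun forward(x: DoubleArray, activeHidden: Int = maxHidden): DoubleArray {
        require(activeHidden in 1..maxHidden)
        val h = DoubleArray(activeHidden) { i ->
            maxOf(0.0, up[i].indices.sumOf { c -> up[i][c] * x[c] }) // ReLU(up_i . x)
        }
        return DoubleArray(down.size) { r -> (0 until activeHidden).sumOf { i -> down[r][i] * h[i] } }
    }
}
```

Calling `forward(x, activeHidden = 2)` on a four-neuron block gives the “small” model, and the default call gives the “large” one, from the same arrays in memory.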

Microsoft: The Phi Series

Microsoft’s Research division has consistently pushed the boundaries of what small models can do. The Phi series (Phi-3, Phi-4) is renowned for its “textbook quality” training data. By curating highly educational and logical training corpora, Microsoft has produced models with as few as 3.8 billion parameters that rival much larger legacy models, such as GPT-3.5, on reasoning benchmarks.

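- Context Window: Phi-3 Mini is available in a long-context variant (up to 128k tokens), which in principle lets it ingest long documents such as contracts or book chapters on-device. In practice, holding a full 128k-token context requires far more memory than a phone has, so the usable on-device context is much shorter.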

- Cross-Platform: Microsoft heavily invests in the ONNX Runtime, making Phi models exceptionally easy to deploy across both iOS and Android using a unified codebase.
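The memory limit on long contexts comes from the KV cache, which grows linearly with the number of tokens. A minimal sketch of the standard sizing formula (2 tensors × layers × KV heads × head dimension × bytes per value × tokens), assuming a Phi-3-Mini-like shape of 32 layers and 32 KV heads of dimension 96 with an FP16 cache; check the actual model config before relying on these numbers:

```kotlin
// KV cache size: one key and one value vector per layer, per KV head, per token.
data class AttentionConfig(
    val layers: Int,
    val kvHeads: Int,
    val headDim: Int,
    val bytesPerValue: Int, // 2 for FP16, 1 for an 8-bit quantized cache
)

fun kvCacheBytes(cfg: AttentionConfig, tokens: Long): Long =
    2L * cfg.layers * cfg.kvHeads * cfg.headDim * cfg.bytesPerValue * tokens

val bytesPerGiB = 1L shl 30

// Assumed Phi-3-Mini-like shape (32 layers, 32 KV heads, head dim 96), FP16 cache.
val phi3Like = AttentionConfig(layers = 32, kvHeads = 32, headDim = 96, bytesPerValue = 2)

val perTokenBytes = kvCacheBytes(phi3Like, 1)                         // 393,216 bytes (384 KiB)
val at4kGiB = kvCacheBytes(phi3Like, 4_096).toDouble() / bytesPerGiB   // 1.5 GiB
val at128kGiB = kvCacheBytes(phi3Like, 131_072).toDouble() / bytesPerGiB // 48 GiB
```

Grouped-query attention (fewer KV heads), 8-bit cache quantization, or a sliding window shrink these numbers, but for a model of this shape a full 128k-token cache stays well beyond phone RAM.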

Meta: Llama 3.2

Meta continues to set the standard for the open-source community with Llama 3.2. Recognizing the gap in edge-ready models, Meta released 1B and 3B parameter versions of Llama 3 specifically optimized for ARM processors.

- Ecosystem Support: Due to its popularity, Llama enjoys the broadest tooling support. From llama.cpp to Core ML Tools and Qualcomm’s AI Stack, most optimization pipelines support Llama first.

- Instruction Tuning: The 3B model excels at following complex instructions and maintaining persona, making it a favorite for chat-based applications and role-playing agents.

Technical Integration: iOS (Swift & Core ML)

For iOS developers, the gateway to on-device intelligence is Core ML, Apple’s machine learning framework. Over the past few iterations, Apple has transformed Core ML from a simple inference engine into a robust platform for Generative AI, specifically optimizing for the Transformer architecture that underpins nearly all modern LLMs.

The Core ML Pipeline: From PyTorch to ANE

Integrating an SLM like Llama 3 on iOS involves a distinct pipeline. You cannot simply drop a PyTorch file into Xcode; it must be converted and optimized.

Model Selection & Export: The process begins with a PyTorch model, typically sourced from Hugging Face. The first step is to trace this model to create an intermediate representation.

Quantization: This is the most critical step for mobile. A standard 3-billion parameter model in 16-bit floating point (FP16) precision requires approximately 6GB of RAM. This is too heavy for an iPhone running other apps. Developers must use quantization to reduce the precision of the model’s weights.

- Int4 (4-bit) Quantization: This compresses the model to roughly 1.5GB to 2GB, typically with only a modest loss in accuracy.

- Palettization: Apple provides advanced techniques like palettization (lookup-table compression) which are highly optimized for the Apple Neural Engine (ANE), allowing for efficient memory bandwidth usage.
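To make the Int4 bullet concrete, here is a minimal sketch of symmetric, per-group linear quantization, the basic idea behind 4-bit weight compression. The group size of 32 and the signed [-8, 7] range are illustrative choices, and production toolchains such as coremltools also pack two 4-bit values per byte, which this sketch skips for clarity:

```kotlin
import kotlin.math.abs
import kotlin.math.roundToInt

// One quantized group: a shared floating-point scale plus one small integer per weight.
class QuantizedGroup(val scale: Float, val codes: ByteArray)

// Symmetric linear quantization to signed 4-bit codes in [-8, 7].
fun quantizeInt4(weights: FloatArray, groupSize: Int = 32): List<QuantizedGroup> =
    weights.toList().chunked(groupSize).map { group ->
        val maxAbs = group.maxOf { abs(it) }
        // Map the largest magnitude in the group to 7; guard against all-zero groups.
        val scale = if (maxAbs == 0f) 1f else maxAbs / 7f
        val codes = ByteArray(group.size) { i ->
            (group[i] / scale).roundToInt().coerceIn(-8, 7).toByte()
        }
        QuantizedGroup(scale, codes)
    }

// Dequantize: each weight is recovered as code * scale.
fun dequantizeInt4(groups: List<QuantizedGroup>): FloatArray =
    groups.flatMap { g -> g.codes.map { it * g.scale } }.toFloatArray()
```

Each reconstructed weight is within half a scale step of the original, and the per-group scales add a small storage overhead (a 16-bit scale shared by 32 weights costs half a bit per weight), which is one reason real Int4 models land slightly above the naive parameters ÷ 2 bytes.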

Conversion: Using coremltools, Apple’s Python library, the quantized model is converted into the .mlpackage format. This package contains the model weights, metadata, and the compute graph structure optimized for Apple Silicon.

Handling State: The KV Cache

A significant challenge in running Transformers on-device is managing the Key-Value (KV) Cache. In a Transformer, generating the next token requires attending to all previous tokens. Without a cache, each new token would require recomputing the keys and values for the entire history, so every step costs O(n^2) attention work and the total cost compounds as the sequence grows. The KV Cache stores each token’s keys and values once, so a decoding step only projects the new token and attends over the stored entries, which is O(n) per step.
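The saving is easy to see in a toy single-head attention layer. The sketch below uses tiny matrices chosen purely for illustration (no real model weights) and computes the newest token’s output two ways: by re-projecting the whole history, and by appending only the new token’s key and value to a cache. Both give the same result, but the cached path performs a constant number of projections per step:

```kotlin
import kotlin.math.exp
import kotlin.math.sqrt

// Multiply a (rows x cols) matrix by a vector.
fun matVec(m: Array<DoubleArray>, v: DoubleArray): DoubleArray =
    DoubleArray(m.size) { r -> m[r].indices.sumOf { c -> m[r][c] * v[c] } }

fun dot(a: DoubleArray, b: DoubleArray): Double = a.indices.sumOf { a[it] * b[it] }

// Scaled dot-product attention of one query over the given keys and values.
fun attend(q: DoubleArray, keys: List<DoubleArray>, values: List<DoubleArray>): DoubleArray {
    val scores = keys.map { dot(q, it) / sqrt(q.size.toDouble()) }
    val maxScore = scores.maxOf { it } // subtract the max for a numerically stable softmax
    val weights = scores.map { exp(it - maxScore) }
    val total = weights.sum()
    return DoubleArray(values[0].size) { d ->
        values.indices.sumOf { i -> weights[i] / total * values[i][d] }
    }
}

// One attention head with projection matrices for queries, keys, and values.
class ToyAttention(
    private val wq: Array<DoubleArray>,
    private val wk: Array<DoubleArray>,
    private val wv: Array<DoubleArray>,
) {
    private val keyCache = mutableListOf<DoubleArray>()
    private val valueCache = mutableListOf<DoubleArray>()

    // Number of key/value projections performed, to compare the two strategies.
    var projections = 0
        private set

    // No cache: re-project keys and values for every token in the history.
    fun outputNoCache(history: List<DoubleArray>): DoubleArray {
        val keys = history.map { projections++; matVec(wk, it) }
        val values = history.map { projections++; matVec(wv, it) }
        return attend(matVec(wq, history.last()), keys, values)
    }

    // KV cache: project only the newest token, append it, attend over the cache.
    fun outputWithCache(newToken: DoubleArray): DoubleArray {
        keyCache.add(matVec(wk, newToken)); projections++
        valueCache.add(matVec(wv, newToken)); projections++
        return attend(matVec(wq, newToken), keyCache, valueCache)
    }
}
```

Over a four-token sequence the uncached version performs 20 key/value projections (2 + 4 + 6 + 8) while the cached one performs 8, and the gap widens with every token; that stored state is what Core ML’s stateful models keep resident between calls.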

Historically, Core ML required developers to manually manage these massive state buffers, passing them in and out of the model for every prediction, which incurred significant memory copying overhead. Since iOS 18, Core ML supports Stateful Models, allowing the model to persist the KV cache in memory internally between inference calls.

Here is a conceptual overview of how to interact with a stateful Llama 3 model using Swift and the new MLTensor API concepts:

```swift
// Conceptual Swift implementation for stateful Llama 3 inference (iOS 18+).
// `Llama3_2_3B` stands in for the class Xcode generates from your .mlpackage, and
// its prediction signature depends on the inputs you defined at conversion time
// (real exports often also take an attention mask). `Tokenizer` and `Sampler`
// are placeholder helpers, not Core ML APIs.
import CoreML

// 1. Initialize the model.
// The model is converted so that it holds its KV cache internally as state.
let config = MLModelConfiguration()
config.computeUnits = .all // Lets Core ML use the CPU, GPU, and Neural Engine

let llamaModel = try Llama3_2_3B(configuration: config)

// Create a state object that persists across predictions.
let state = llamaModel.makeState()

func generateText(prompt: String, maxNewTokens: Int = 256) async throws {
    // 2. Tokenize the input (assumes a tokenizer matching the model's vocabulary).
    var inputIDs: [Int32] = Tokenizer.encode(prompt)

    // 3. Generation loop. The first pass processes the whole prompt (pre-fill);
    // each later pass feeds back a single token (decode).
    for _ in 0..<maxNewTokens {
        // Wrap the input as an MLTensor of shape [batch, sequenceLength].
        let inputTensor = MLTensor(shape: [1, inputIDs.count], scalars: inputIDs)

        // 4. Predict. Passing `state` lets the model update its KV cache in place.
        let output = try await llamaModel.prediction(inputIds: inputTensor, state: state)

        // 5. Decode the output: pick the next token from the logits.
        let nextTokenID: Int32 = Sampler.greedy(logits: output.logits)

        // Stop on the end-of-text token ID rather than comparing decoded strings.
        if nextTokenID == Tokenizer.endOfTextID { break }

        print(Tokenizer.decode(nextTokenID), terminator: "")

        // Only the new token is fed back in; earlier tokens live in the KV cache.
        inputIDs = [nextTokenID]
    }
}
```

Note: This code simplifies error handling and complex sampling (Top-K/Top-P) for clarity, but illustrates the core pattern of stateful inference.

Optimizing for the Apple Neural Engine (ANE)

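Benchmark-level speeds such as the 30+ tokens per second cited above depend on careful tuning for the target compute unit. For sustained, power-efficient inference, that usually means getting as much of the model as possible onto the ANE rather than relying on the GPU alone. The ANE is a specialized NPU designed for high-throughput, low-power matrix multiplication.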

- Linear Quantization: Weight compression that Core ML can execute natively on the ANE (such as linear quantization, or the palettization described earlier) keeps weights compact without forcing parts of the graph off the Neural Engine. Avoiding unsupported operations and exotic compression schemes is key to “staying on the ANE” and avoiding fallback to the GPU or CPU.

- Flexible Shapes: Fully flexible (range) input shapes can keep a model off the ANE; fixed or enumerated shapes that match its preferred block sizes (e.g., multiples of 16 or 32) tend to work better and prevent costly memory padding operations at runtime.
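One common way to respect fixed shapes is to export a handful of enumerated sequence lengths and pad each request up to the nearest one. A minimal sketch; the bucket sizes and the pad token ID are hypothetical values to be replaced by whatever your converted model and tokenizer actually declare:

```kotlin
// Hypothetical enumerated sequence lengths exported with the model (multiples of 32).
val sequenceBuckets = listOf(64, 128, 256, 512)

// Hypothetical pad token ID; use the one defined by your tokenizer.
val padTokenId = 0

// Pick the smallest exported length that fits the request, or null if none does.
fun bucketFor(length: Int, buckets: List<Int>): Int? =
    buckets.sorted().firstOrNull { it >= length }

// Right-pad token IDs to the chosen bucket and build a matching attention mask
// (1 = real token, 0 = padding) so the model can ignore the padded positions.
fun padToBucket(
    tokenIds: IntArray,
    buckets: List<Int> = sequenceBuckets,
    padId: Int = padTokenId,
): Pair<IntArray, IntArray>? {
    val target = bucketFor(tokenIds.size, buckets) ?: return null
    val padded = IntArray(target) { i -> if (i < tokenIds.size) tokenIds[i] else padId }
    val mask = IntArray(target) { i -> if (i < tokenIds.size) 1 else 0 }
    return padded to mask
}
```

A `null` result means the prompt exceeds the largest exported shape, so the caller has to truncate or split it; a few well-chosen buckets trade a little padding for predictable, ANE-friendly shapes.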

Technical Integration: Android (MediaPipe & AI Edge)

The Android ecosystem is diverse, requiring a flexible approach. Google provides the AI Edge suite, which includes MediaPipe for broad compatibility and AICore for deep system integration.

MediaPipe LLM Inference API

For most developers, MediaPipe is the recommended path. It abstracts the complexity of TensorFlow Lite (since rebranded as LiteRT) and provides a cross-platform API that works on standard Android devices (not just Pixels).

The Workflow:

- Conversion: Use the MediaPipe Python converter to transform a Hugging Face model (like Gemma or Phi-2) into a TensorFlow Lite FlatBuffer-based model file (.tflite or .task). This step handles the conversion of PyTorch weights to TFLite tensors and applies the necessary quantization (typically Int8 or Int4).

- Packaging: The resulting model file (for example, the .bin file referenced in the code below) is bundled with the app or downloaded on first launch.

- Inference: The LlmInference Java/Kotlin API provides a simple interface for text generation.

Code Concept:

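```kotlin
// Android (Kotlin) implementation using the MediaPipe LLM Inference API.
// Assumes this runs inside an Activity with a `textView` field, and uses the
// options-level result listener from earlier tasks-genai releases; newer
// releases may pass the listener to generateResponseAsync instead.
import com.google.mediapipe.tasks.genai.llminference.LlmInference
import com.google.mediapipe.tasks.genai.llminference.LlmInference.LlmInferenceOptions

// 1. Configure the inference options.
val options = LlmInferenceOptions.builder()
    .setModelPath("/data/local/tmp/gemma-2b-it-gpu-int4.bin") // Path to the model on the device
    .setMaxTokens(1024)
    .setResultListener { partialResult, done ->
        // 2. Handle streaming results.
        // The callback is not delivered on the UI thread, so hop to it before touching views.
        runOnUiThread {
            textView.append(partialResult)
            if (done) {
                // Generation finished: re-enable input, hide progress, etc.
            }
        }
    }
    .build()

// 3. Create the inference engine (model loading is slow; do it off the main thread).
val llmInference = LlmInference.createFromOptions(context, options)

// 4. Generate a response asynchronously; tokens stream into the listener above.
llmInference.generateResponseAsync("Summarize this email in 3 bullet points.")
```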

LoRA: Adapting Models on the Fly

A major advantage of the Android ecosystem is the robust support for Low-Rank Adaptation (LoRA). Fine-tuning a full 2B parameter model for a specific task (e.g., medical terminology) is expensive and produces a large file. LoRA creates a small “adapter” file (often just 10-50MB) that sits on top of the base model.
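Under the hood, a LoRA adapter stores two thin matrices, A (r × d_in) and B (d_out × r), and the adapted layer computes y = W·x + (α/r)·B·(A·x). A minimal sketch with plain arrays, where the rank, α, and matrix values are illustrative; it also shows that the adapter can either be applied on the fly or merged into W once, with identical outputs:

```kotlin
// Multiply a (rows x cols) matrix by a vector.
fun applyMatrix(m: Array<DoubleArray>, x: DoubleArray): DoubleArray =
    DoubleArray(m.size) { r -> m[r].indices.sumOf { c -> m[r][c] * x[c] } }

// Multiply an (n x k) matrix by a (k x p) matrix.
fun multiply(a: Array<DoubleArray>, b: Array<DoubleArray>): Array<DoubleArray> =
    Array(a.size) { i -> DoubleArray(b[0].size) { j -> b.indices.sumOf { k -> a[i][k] * b[k][j] } } }

// Frozen base weight W (dOut x dIn) plus a rank-r adapter: B (dOut x r) and A (r x dIn).
class LoraLinear(
    private val w: Array<DoubleArray>,
    private val a: Array<DoubleArray>,
    private val b: Array<DoubleArray>,
    alpha: Double,
) {
    private val scaling = alpha / a.size // a.size is the rank r

    // Adapter kept separate: y = W x + (alpha / r) * B (A x).
    fun forward(x: DoubleArray): DoubleArray {
        val base = applyMatrix(w, x)
        val update = applyMatrix(b, applyMatrix(a, x))
        return DoubleArray(base.size) { i -> base[i] + scaling * update[i] }
    }

    // Adapter folded into the base weights once: W' = W + (alpha / r) * B A.
    fun merged(): Array<DoubleArray> {
        val ba = multiply(b, a)
        return Array(w.size) { i -> DoubleArray(w[0].size) { j -> w[i][j] + scaling * ba[i][j] } }
    }
}
```

Keeping the adapter separate is what lets one base model serve several adapters; merging removes the extra compute but bakes in a single specialization.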

Tool1.app Strategy: Developers can ship a single base model (e.g., Gemma 2B) and multiple small LoRA adapters.

- Scenario: A travel app.

- Base Model: Generic English understanding.

- Adapter A: “Spanish Translation” (loaded when user selects Spanish).

- Adapter B: “Menu Decoder” (loaded when user opens the camera).

This keeps the app size manageable while offering specialized capabilities. The LlmInference API supports loading these adapter weights dynamically at runtime.

System-Level AI with AICore

For apps targeting flagship devices (like the Pixel 9 or Galaxy S25), Android 14+ introduces AICore. This allows apps to access a shared, system-managed instance of Gemini Nano.

- Benefit: Zero app bloat. You don’t bundle the model; the OS provides it.

- Limitation: You are restricted to the Gemini model family and device availability is limited to high-end tiers.

- Implementation: Developers use the GenerativeAI client in the Android SDK to send prompts to the system service, similar to a network API call but routed locally.

Advanced Architecture: On-Device RAG

Running a model is only step one. To make it truly useful, it needs access to data. Retrieval Augmented Generation (RAG) is the architecture that bridges the gap between a generic model and the user’s private data (emails, notes, PDFs).

The On-Device RAG Stack

Implementing RAG on mobile requires a miniature version of the server-side stack, running entirely within the app sandbox.

- Vector Database: This stores the semantic “fingerprints” (embeddings) of the user’s data. ObjectBox and SQLite (with vector search extensions) are popular choices for mobile. They allow for fast similarity searches among thousands of text chunks.

- Embedder Model: You need a small, fast model to convert text into vectors. Models like Gecko (Google) or NLEmbedding (Apple) are optimized for this. They run in milliseconds and produce compact vectors.

- The Reasoner (SLM): The SLM (Gemma/Llama) receives the retrieved data as context and answers the user’s question.
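At its core, the vector database’s job is a nearest-neighbor search over embeddings. For a few thousand chunks a brute-force scan is already fast; dedicated stores add approximate indexes to scale further (ObjectBox, for example, uses HNSW). A minimal sketch of the brute-force version, assuming embeddings arrive as `FloatArray`s from whichever embedder you use:

```kotlin
import kotlin.math.sqrt

data class StoredChunk(val id: Int, val text: String, val embedding: FloatArray)

// Cosine similarity: dot product divided by the product of the vector lengths.
fun cosineSimilarity(a: FloatArray, b: FloatArray): Float {
    require(a.size == b.size) { "Embedding dimensions differ" }
    var dot = 0f
    var normA = 0f
    var normB = 0f
    for (i in a.indices) {
        dot += a[i] * b[i]
        normA += a[i] * a[i]
        normB += b[i] * b[i]
    }
    return if (normA == 0f || normB == 0f) 0f else dot / (sqrt(normA) * sqrt(normB))
}

// Brute-force k-nearest-neighbour search: score every chunk, keep the best k.
fun topK(query: FloatArray, chunks: List<StoredChunk>, k: Int = 3): List<StoredChunk> =
    chunks.sortedByDescending { cosineSimilarity(query, it.embedding) }.take(k)
```

Sorting the whole list is O(n log n), which is fine at this scale; a bounded priority queue or an index becomes worthwhile as the corpus grows.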

The Data Flow

Consider a “Personal Knowledge Base” app that lets users chat with their saved PDF documents.

- Ingestion (Background Task):

- The app extracts text from a PDF.

- The text is split into chunks (e.g., 500 words).

- The Embedder Model converts each chunk into a vector.

- Vectors are stored in ObjectBox.

- Retrieval (User Action):

- User asks: “What is the warranty period mentioned in the manual?”

- The app converts this question into a vector.

- ObjectBox performs a nearest-neighbor search to find the 3 most relevant chunks from the PDF.

- Generation:

- The app constructs a prompt: “Context: [Chunk 1][Chunk 2][Chunk 3]. Question: What is the warranty period?”

- The SLM generates the answer: “The warranty period is 2 years.”
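Ingestion and generation bracket the search itself: split the text into fixed-size chunks, then stitch the retrieved chunks into the prompt. A minimal sketch of both steps; the 500-word chunk size comes from the example above, while the 50-word overlap and the prompt template are illustrative assumptions:

```kotlin
// Split text into chunks of `chunkWords` words, overlapping by `overlapWords`
// so that sentences straddling a boundary appear in both neighbouring chunks.
fun chunkByWords(text: String, chunkWords: Int = 500, overlapWords: Int = 50): List<String> {
    require(overlapWords in 0 until chunkWords) { "overlap must be smaller than chunk size" }
    val words = text.split(Regex("\\s+")).filter { it.isNotEmpty() }
    val step = chunkWords - overlapWords
    val chunks = mutableListOf<String>()
    var start = 0
    while (start < words.size) {
        val end = minOf(start + chunkWords, words.size)
        chunks.add(words.subList(start, end).joinToString(" "))
        if (end == words.size) break
        start += step
    }
    return chunks
}

// Assemble the context-plus-question prompt handed to the SLM.
fun buildRagPrompt(retrieved: List<String>, question: String): String =
    buildString {
        append("Answer using only the context below.\n\n")
        retrieved.forEachIndexed { i, chunk ->
            append("[Chunk ${i + 1}]\n").append(chunk).append("\n\n")
        }
        append("Question: ").append(question)
    }
```

Word counts are only a proxy for tokens; a production pipeline would usually size chunks with the model’s own tokenizer so the assembled prompt fits the context window.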

Tool1.app Role: Building this pipeline from scratch is complex. Tool1.app offers an SDK that manages the vector store and embedding generation, providing a simple retrieveAndGenerate(query) method for developers, abstracting the underlying math.

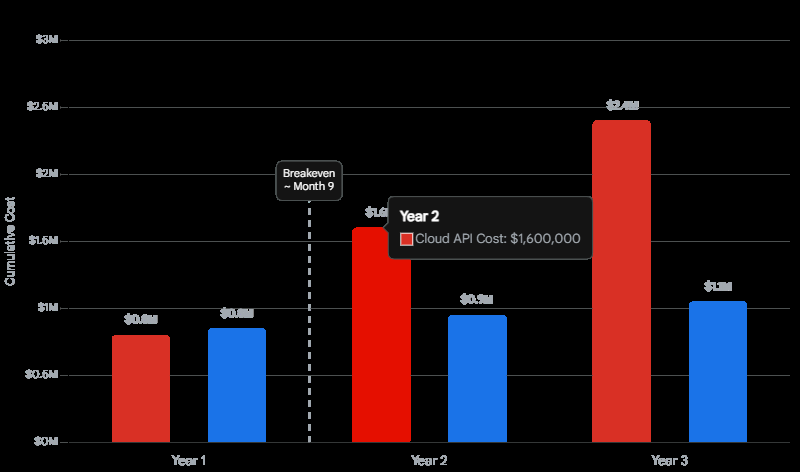

Cost Analysis and ROI

While the technical capabilities are impressive, the business case for SLMs is often decided by the bottom line. The shift from cloud to edge represents a fundamental change in unit economics.

Cloud vs. Edge TCO

Total Cost of Ownership (TCO) for AI features involves comparing Variable Costs (Cloud) against Fixed Costs (Edge).

- Cloud Scenario: Costs are low initially but scale linearly. For an app with 1 million users generating 1 million requests daily, assuming a cost of $0.002 per request (blended input/output token cost), the daily burn is $2,000. Annually, this is $730,000. If the user base doubles, the cost doubles.

- Edge Scenario: Costs are high initially. Engineering time to quantize, optimize, and integrate the model might cost $150,000 in salaries and R&D. However, once deployed, the cost of serving 1 million daily requests is $0. The infrastructure is the user’s phone.

The Break-Even Point

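For high-volume applications, the break-even point (where the investment in edge AI pays off compared to cloud bills) comes quickly. On the simplified figures above, the $150,000 engineering investment is recovered after 75 days of avoided cloud spend ($150,000 ÷ $2,000 per day); budgeting for ongoing maintenance and model updates stretches this out, which is why 6-9 months is a safer planning figure. Beyond this point, the edge model generates ongoing savings, improving margins significantly.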
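The comparison can be parameterized so that ongoing edge costs are not forgotten. A minimal sketch using the figures from the TCO scenario; `edgeMaintenancePerDay` and the $1,200 value are hypothetical inputs, not numbers from the analysis above:

```kotlin
// Days until cumulative avoided cloud spend covers the edge investment.
// `edgeMaintenancePerDay` models ongoing edge costs (model updates, QA, support);
// it defaults to zero, matching the simplified comparison. Returns null if never.
fun breakEvenDays(
    edgeUpfront: Double,
    cloudCostPerDay: Double,
    edgeMaintenancePerDay: Double = 0.0,
): Double? {
    val dailySavings = cloudCostPerDay - edgeMaintenancePerDay
    return if (dailySavings <= 0.0) null else edgeUpfront / dailySavings
}

// 1 million requests/day at $0.002 each = $2,000/day, or $730,000/year.
val cloudPerDay = 1_000_000 * 0.002
val cloudPerYear = cloudPerDay * 365

// $150,000 upfront, no maintenance: pays back in 75 days.
val simplePayback = breakEvenDays(150_000.0, cloudPerDay)

// Hypothetical $1,200/day of ongoing edge costs: 187.5 days (about 6 months).
val withMaintenance = breakEvenDays(150_000.0, cloudPerDay, edgeMaintenancePerDay = 1_200.0)
```

The `null` case matters for low-volume apps: if ongoing edge costs exceed the cloud bill, the investment never pays back on cost alone, and the case rests on latency, privacy, and offline value instead.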

The Deployment Pipeline: From Training to Device

A common misconception is that mobile AI begins with the mobile device. In reality, the success of an on-device deployment is determined by the pipeline that precedes it. This workflow transforms a massive, server-grade model into a lean, mobile-ready engine.

Step 1: Base Model & Fine-Tuning

The journey starts with a base model (e.g., Llama 3.2 3B). While capable, it may not know the specifics of a domain (e.g., legal precedents or medical coding).

- Full Fine-Tuning: Rarely done for mobile due to the size of the resulting weights.

- LoRA (Low-Rank Adaptation): This is the preferred method. We freeze the main model and train only a small set of low-rank update matrices. This results in an “adapter” file that is lightweight and modular.
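The size advantage follows from the parameter count: adapting a d_out × d_in matrix at rank r adds only r × (d_in + d_out) parameters. A minimal sizing sketch; the layer count, hidden size, number of adapted projections, and rank are a hypothetical configuration, not any specific model’s:

```kotlin
// Parameters added by a rank-r LoRA adapter on one dOut x dIn weight matrix:
// A is r x dIn and B is dOut x r.
fun loraParams(dIn: Int, dOut: Int, rank: Int): Long = rank.toLong() * (dIn + dOut)

fun fullParams(dIn: Int, dOut: Int): Long = dIn.toLong() * dOut

// Hypothetical configuration: 18 layers, hidden size 2048,
// four square attention projections adapted per layer, rank 16.
val exampleLayers = 18
val exampleHidden = 2048
val adaptedPerLayer = 4
val exampleRank = 16

val adapterParams: Long =
    exampleLayers * adaptedPerLayer * loraParams(exampleHidden, exampleHidden, exampleRank)
val adaptedBaseParams: Long =
    exampleLayers * adaptedPerLayer * fullParams(exampleHidden, exampleHidden)

// At FP16 (2 bytes per parameter) the adapter is about 9.4 MB.
val adapterMegabytes = adapterParams * 2 / 1e6
// The adapter holds 1/64 of the parameters of the matrices it adapts.
val fractionOfAdaptedWeights = adapterParams.toDouble() / adaptedBaseParams
```

Size scales linearly with rank and with the number of adapted matrices, so adding the feed-forward layers or raising the rank moves an adapter from a few megabytes toward the tens of megabytes.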

Step 2: Optimization & Quantization

This is the “compression” phase. The goal is to reduce file size without destroying intelligence.

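- Activation-Aware Weight Quantization (AWQ): This advanced technique uses activation statistics to identify the “most important” weights, roughly the 1% of channels that contribute most to the model’s accuracy. Rather than keeping those weights at higher precision, which is awkward for hardware, AWQ scales the salient channels before quantization so they lose less precision, while all weights are still stored as 4-bit integers. This preserves reasoning capabilities far better than naive rounding.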

- Pruning: Techniques like structured pruning remove entire attention heads or neurons that are statistically redundant, physically shrinking the model architecture.
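Structured pruning removes whole rows and columns, so the pruned matrices are genuinely smaller rather than merely sparse. A minimal sketch of one simple criterion, dropping the feed-forward neurons whose weights have the smallest L2 norm; magnitude is an assumption here (the text does not specify how redundancy is measured), and real pipelines usually fine-tune afterwards to recover accuracy:

```kotlin
import kotlin.math.sqrt

// A feed-forward block: `up` is hidden x dModel (one row per neuron),
// `down` is dModel x hidden (one column per neuron).
class FeedForward(val up: Array<DoubleArray>, val down: Array<DoubleArray>) {
    val hiddenSize: Int get() = up.size
}

// Importance of neuron i: L2 norm of its incoming row and outgoing column together.
fun neuronScore(ffn: FeedForward, i: Int): Double {
    val incoming = ffn.up[i].sumOf { it * it }
    val outgoing = ffn.down.sumOf { row -> row[i] * row[i] }
    return sqrt(incoming + outgoing)
}

// Keep the `keep` highest-scoring neurons and rebuild smaller matrices.
fun pruneNeurons(ffn: FeedForward, keep: Int): FeedForward {
    require(keep in 1..ffn.hiddenSize)
    val kept = (0 until ffn.hiddenSize)
        .sortedByDescending { neuronScore(ffn, it) }
        .take(keep)
        .sorted() // preserve the original neuron order
    val newUp = Array(keep) { j -> ffn.up[kept[j]].copyOf() }
    val newDown = Array(ffn.down.size) { r -> DoubleArray(keep) { j -> ffn.down[r][kept[j]] } }
    return FeedForward(newUp, newDown)
}
```

Because whole neurons disappear, both matrices shrink and the saving shows up directly in memory and compute, with no sparse-kernel support required at runtime.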

Step 3: Conversion & Runtime

The optimized weights are then converted into the format required by the target runtime:

- Core ML (iOS): Converts to .mlpackage. Optimizations here focus on “fusing” operations (combining math steps) to reduce memory access on the Apple Neural Engine.

- TFLite (Android): Converts to .tflite FlatBuffers.

- ONNX (Cross-Platform): Converts to .onnx.

Tool1.app automates this entire pipeline. Instead of hiring a team of ML engineers to manually script quantization and conversion steps, Tool1.app provides a CI/CD-style workflow: upload your LoRA adapter, select your target devices (e.g., “iPhone 15 & Pixel 8”), and the platform automatically generates the optimized binaries for each.

Real-World Use Cases

The theoretical benefits of SLMs translate into tangible value across various industries.

Field Service & Manufacturing

Technicians working on oil rigs, in mines, or deep in server basements often operate in “zero-connectivity” zones.

- Challenge: Accessing complex technical manuals or troubleshooting guides without internet.

- SLM Solution: An app equipped with a multimodal SLM (Gemma 3n) and a local vector database of manuals. The technician points their camera at a pressure valve. The SLM identifies the component and, using RAG, retrieves the specific reset procedure from the local docs. The interaction is instant and requires no data connection.

Healthcare: Ambient Intelligence

Physicians suffer from burnout due to hours spent on Electronic Health Record (EHR) documentation.

- Challenge: Automating note-taking without violating patient privacy (HIPAA) by sending audio to the cloud.

- SLM Solution: An “Ambient Scribe” app running on the doctor’s tablet. The audio is transcribed locally. An SLM processes the transcript to extract symptoms, diagnosis, and prescription plans, formatting them into standard medical notes. No audio ever leaves the device, which removes the largest privacy exposure of cloud-based transcription.

Global Travel & Commerce

- Challenge: Language barriers and lack of data roaming plans for travelers.

- SLM Solution: A translation app that enables fluid, bi-directional voice conversation in real-time. Unlike old “phrasebook” apps, the SLM understands nuance, idiom, and context, facilitating genuine communication. E-commerce apps use visual SLMs to let users scan products in a store to instantly see reviews, sustainability scores, and price comparisons overlaid in AR.

Conclusion: The Future is Local

The era of relying solely on massive, omniscient cloud models is drawing to a close. While cloud intelligence will always have a place for the heaviest reasoning tasks, the future of mobile applications lies in a hybrid approach: massive intelligence in the cloud for the 1% of difficult queries, and highly capable, ultra-fast Small Language Models on the device for the 99% of daily interactions.

By embracing SLMs, developers are not just saving money or ticking a compliance box; they are building a user experience that feels fundamentally different. Applications that respond at the speed of thought, work anywhere on Earth, and respect the sanctity of user data will define the next decade of mobile computing.

The transition to edge AI is complex, involving new toolchains, hardware considerations, and optimization strategies. However, with platforms like Tool1.app simplifying the deployment pipeline, the barrier to entry has never been lower. The tools are ready. The hardware is ready. The only remaining question is: what will you build when intelligence is free, instant, and everywhere?

