Using the Gemini API for Automated Financial Data Analysis

Table of Contents

- The Evolution of Context and Reasoning in Financial Artificial Intelligence

- Processing Modalities: Native PDF Vision versus CSV Code Execution

- The Technical Blueprint: Uploading and Querying Financial Documents

- Enforcing Deterministic Predictability: The Necessity of Structured JSON Output

- Navigating Global Accounting Standards: IFRS versus US GAAP

- Deep Dive: Transformative Business Use Cases for AI Financial Analysis

- Managing the Economics: Understanding API Pricing Strategy in 2026

- Navigating the Frontier of Financial Automation

- Partner with Tool1.app to Build Your AI Financial Infrastructure

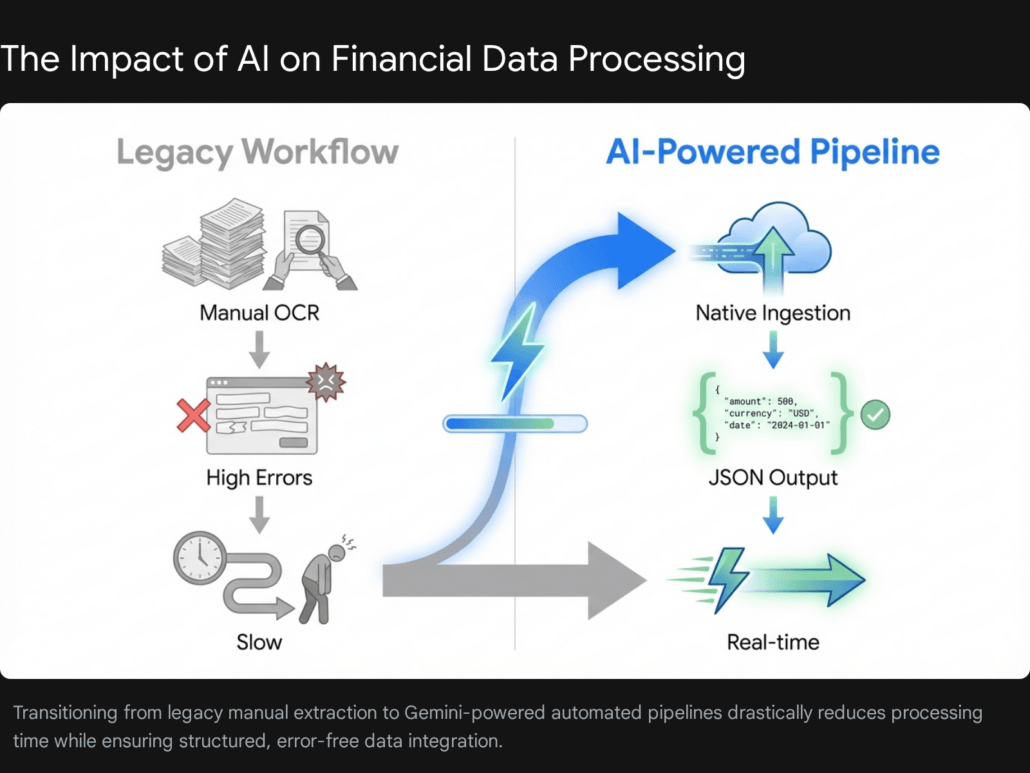

The global financial ecosystem runs on an overwhelming and ever-expanding volume of unstructured data. From dense quarterly earnings reports, sprawling balance sheets, and complex regulatory filings to environmental impact assessments and compliance dossiers, the sheer mass of information severely bottlenecks critical business decision-making. For decades, institutions have relied on armies of analysts and brittle, legacy Optical Character Recognition (OCR) systems to manually extract, verify, and standardize this data. These traditional methods routinely fail when confronted with complex table layouts, nested footnotes, and the highly nuanced jargon inherent to global finance.

Today, the technological paradigm has fundamentally shifted. Large multimodal foundation models are no longer merely conversational chatbots; they have evolved into capable reasoning engines that can ingest entire financial repositories and output structured, machine-readable data. Using the Gemini API for financial analysis is a business application that directly impacts the bottom line by accelerating due diligence, automating regulatory compliance reporting, and surfacing market insights that human analysts might overlook.

At Tool1.app, we frequently consult with enterprise clients who struggle to bridge the massive gap between their unstructured document repositories and their structured financial databases. By integrating advanced artificial intelligence models into their workflows, we help them transform static PDFs, raw CSVs, and legacy spreadsheets into dynamic, real-time intelligence. This comprehensive technical report will explore the mechanics of using the Gemini API to automate your financial data pipelines, detailing everything from document ingestion and multimodal processing to enforcing strict JSON outputs for seamless enterprise system integration.

The Evolution of Context and Reasoning in Financial Artificial Intelligence

To fully grasp why the Gemini API is uniquely positioned to revolutionize financial data analysis, one must first examine the underlying architecture of modern foundational models, specifically focusing on the massive expansion of context windows and the introduction of advanced multimodal reasoning algorithms.

Financial analysis rarely happens in a vacuum. Evaluating a corporate entity’s performance requires cross-referencing the current quarter’s regulatory filings with the previous year’s annual report, while simultaneously analyzing an accompanying earnings call transcript and a supplementary dataset of historical market pricing. Until recently, artificial intelligence models were severely limited by their context windows—the strict maximum amount of information they could hold in their short-term memory at any given time. When financial documents exceeded this limit, developers were forced to build complex, error-prone Retrieval-Augmented Generation (RAG) pipelines that chopped documents into small semantic chunks, often destroying the broader context of the financial narrative.

With the introduction of the Gemini 3 and Gemini 3.1 Pro models in early 2026, developers now have access to context windows of up to two million tokens (exact limits vary by model and version, so confirm them in the current model documentation). To put this scale in business terms, Google’s rule of thumb for its long-context models is roughly 1,500 pages of text or 30,000 lines of code per million tokens, so a two-million-token window holds on the order of 3,000 pages of dense financial text, many hours of earnings-call audio, or tens of thousands of rows of spreadsheet data. For single-entity deep-dive analysis, this largely removes the need for fragmented RAG systems. Financial engineers can pass an entire library of a company’s financial history into one prompt, allowing the model to perform holistic, comparative analysis across multiple reporting periods.
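Before relying on a single large prompt, it helps to check whether a document set plausibly fits the window. The sketch below is a rough planning aid only: `TOKENS_PER_PAGE`, the output reserve, and the file names are illustrative assumptions, and the SDK's `client.models.count_tokens` call is the better source for exact counts.

```python
# Rough planning assumption, not a measured value; real counts depend on
# layout, tables, and images. Use the API's token counter for exact numbers.
TOKENS_PER_PAGE = 700


def estimate_tokens(page_counts: dict[str, int], tokens_per_page: int = TOKENS_PER_PAGE) -> int:
    """Estimate total tokens for a set of documents from their page counts."""
    return sum(page_counts.values()) * tokens_per_page


def fits_in_context(page_counts: dict[str, int], context_limit: int, output_reserve: int = 65_000) -> bool:
    """Return True if the documents plus a reserve for the answer fit the window."""
    return estimate_tokens(page_counts) + output_reserve <= context_limit


# Hypothetical filing set for one company.
filings = {"annual_report_2025.pdf": 320, "q1_2026.pdf": 85, "q2_2026.pdf": 90}
print(estimate_tokens(filings))
print(fits_in_context(filings, context_limit=2_000_000))
```

If the estimate lands close to the limit, counting tokens exactly or splitting the analysis by reporting period is the safer choice.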

Published evaluations of Gemini’s long-context architecture report very high recall. In the needle-in-a-haystack tests described in the Gemini 1.5 technical report, the model retrieved specific data points hidden within a one-million-token context with better than 99.7% recall, and still achieved 99.2% recall when the text context was pushed to ten million tokens in experimental settings. The negative log-likelihood (NLL), a metric where a lower value indicates better prediction accuracy, follows a predictable power-law trend up to the one-million-token mark for long documents, indicating that the model keeps benefiting from additional context rather than degrading as the input grows.

Furthermore, the latest iterations of the Gemini API introduce advanced, multi-step reasoning capabilities. Models like Gemini 3.1 Pro achieve unprecedented scores on complex logic benchmarks by utilizing dedicated thinking tokens. The model architecture features an internal “LLM-in-the-middle” summarizer that distills complex chain-of-thought processes, allowing the model to reason through quantitative relationships faster than previous generations. In a financial context, this means the model does not merely pattern-match text; it actively reasons through complex accounting variables. If a balance sheet states a specific net income, and a separate footnote buried fifty pages later details a one-time restructuring charge and a tax asset valuation allowance, the model can synthesize these completely disparate data points to calculate an accurate Adjusted EBITDA with human-like analytical rigor.
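The arithmetic behind that synthesis is simple once the scattered inputs have been located. The following is a minimal sketch with hypothetical figures (in millions); the field names are illustrative, and it assumes the valuation-allowance charge is booked inside income tax expense, so it drops out when taxes are added back.

```python
from dataclasses import dataclass


@dataclass
class IncomeInputs:
    net_income: float
    interest_expense: float
    income_tax_expense: float          # assumed to include any valuation-allowance charge
    depreciation_amortization: float
    restructuring_charge: float        # one-time item disclosed in a footnote


def adjusted_ebitda(x: IncomeInputs) -> float:
    """Add back interest, taxes, D&A, then the one-time restructuring charge."""
    ebitda = (
        x.net_income
        + x.interest_expense
        + x.income_tax_expense
        + x.depreciation_amortization
    )
    return ebitda + x.restructuring_charge


# Hypothetical figures, in millions.
inputs = IncomeInputs(
    net_income=120.0,
    interest_expense=15.0,
    income_tax_expense=40.0,
    depreciation_amortization=60.0,
    restructuring_charge=25.0,
)
print(adjusted_ebitda(inputs))
```

Companies define "adjusted" differently, so the list of add-backs should follow the issuer's own reconciliation table where one exists.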

Processing Modalities: Native PDF Vision versus CSV Code Execution

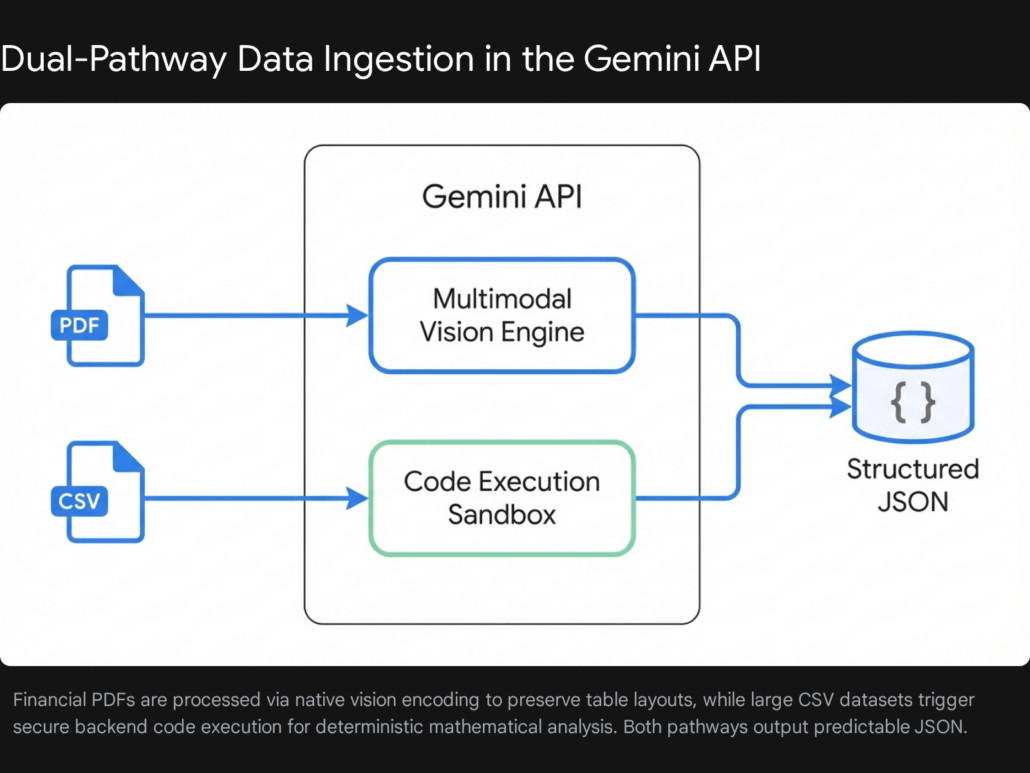

A critical architectural decision when building an automated financial data pipeline is understanding how the API handles radically different file formats. Financial data typically arrives in two distinct forms: unstructured visual documents (such as PDFs, scanned prospectuses, and annual reports) and raw structured datasets (such as CSVs, Excel files, and database exports). The Gemini API processes these modalities using two completely different underlying mechanisms, and architecting your application to leverage these differences is crucial for maximizing accuracy and reliability.
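In practice this decision often starts as a small routing step at the front of the pipeline. The sketch below shows one way to do it by file extension; the suffix sets and pipeline labels are illustrative assumptions, not part of the Gemini API.

```python
from pathlib import Path

# Hypothetical groupings; extend them to match the formats your pipeline accepts.
VISUAL_SUFFIXES = {".pdf", ".png", ".jpg", ".jpeg", ".tiff"}
TABULAR_SUFFIXES = {".csv", ".tsv", ".xlsx", ".xls"}


def choose_pipeline(file_name: str) -> str:
    """Route a file to the vision path or the code-execution path by extension."""
    suffix = Path(file_name).suffix.lower()
    if suffix in VISUAL_SUFFIXES:
        return "multimodal_vision"
    if suffix in TABULAR_SUFFIXES:
        return "code_execution"
    raise ValueError(f"Unsupported file type: {suffix or '(none)'}")


for name in ("Annual_Report_2025.PDF", "daily_prices.csv"):
    print(name, "->", choose_pipeline(name))
```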

The Power of Native Multimodal PDF Processing

Historically, extracting structured data from a financial PDF required a brittle, multi-stage pipeline. First, an OCR engine would attempt to extract the raw text, and second, that flattened text would be passed to a Natural Language Processing model. This legacy approach routinely destroys the spatial relationship of the data. When an OCR engine flattens a complex, multi-column balance sheet, the columns misalign, the hierarchical indentation of the line items is lost, and the mathematical association between a financial metric (e.g., “Accounts Payable”) and its corresponding numerical value (e.g., “€4.2 Billion”) is entirely severed.

The Gemini API bypasses legacy OCR technology entirely through native multimodal visual encoding. When you pass a PDF to the model, it does not merely read the extracted text string; it “sees” the document exactly as a human analyst would. It inherently understands the spatial layout of the page, the visual hierarchy of bolded headers, the rigid grid structure of complex financial tables, and the semantic relationship between a complex pie chart and its accompanying visual legend.

This multimodal understanding allows financial developers to issue highly complex, spatially aware natural language queries. For example, a system prompt can instruct the model to: “Analyze the consolidated statement of operations on page 14. Extract all operating expenses for the third quarter, strictly maintaining the hierarchical relationship between the parent categories and their sub-line items as visually depicted in the table.” Because the model processes the pixels and the text simultaneously, it can easily distinguish between a column representing 2025 data and a column representing 2026 data, even if the table headers are visually offset or complexly formatted.

Handling High-Volume CSV Data via Code Execution

While PDFs are treated as rich visual topographies, CSV files and spreadsheets are ingested by the model as flat text arrays. If you upload a massive CSV file containing thousands of rows of daily stock prices, transactional ledgers, or historical bond yields, the model must read it sequentially.

While large language models are highly adept at linguistic reasoning, they are inherently probabilistic engines, making them prone to hallucinations when asked to perform massive-scale, deterministic arithmetic directly in their neural weights. Asking an LLM to mentally calculate the standard deviation or the compound annual growth rate of 5,000 rows of pricing data will often result in plausible but mathematically incorrect answers.

For rigorous CSV data analysis, the recommended approach is to use the Gemini API’s Code Execution Sandbox. Instead of asking the model to guess the mathematical answer, you prompt the model to act as a data scientist. The model reads the schema of the CSV, writes a Python script using libraries like pandas or NumPy to perform the required aggregations, executes that code in a secure, isolated backend sandbox, and returns the computed result rather than an estimate.

This hybrid approach represents the pinnacle of modern financial automation. It marries the deterministic, error-free precision of Python execution with the dynamic, natural language reasoning of generative artificial intelligence. The model can even be instructed to evaluate the output of its own code, re-write the script if an error occurs, and provide a plain-English summary of the statistical findings alongside the raw calculated data.
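To make this concrete, here is the kind of short script such a sandbox run might produce for the growth-rate and standard-deviation questions mentioned above. It is a sketch: it uses only the standard library (`csv`, `statistics`) rather than pandas so it runs anywhere, and the inline price series is made-up sample data.

```python
import csv
import io
import statistics

# Made-up year-end closing prices for illustration.
CSV_TEXT = """date,close
2021-12-31,100.0
2022-12-31,110.0
2023-12-31,121.0
2024-12-31,133.1
"""


def load_closes(csv_text: str) -> list[float]:
    """Parse the 'close' column into floats, preserving row order."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [float(row["close"]) for row in reader]


def cagr(first: float, last: float, periods: int) -> float:
    """Compound annual growth rate over the given number of periods."""
    return (last / first) ** (1 / periods) - 1


closes = load_closes(CSV_TEXT)
growth = cagr(closes[0], closes[-1], periods=len(closes) - 1)
returns = [later / earlier - 1 for earlier, later in zip(closes, closes[1:])]
volatility = statistics.stdev(returns)  # sample standard deviation of period returns

print(f"CAGR: {growth:.2%}")
print(f"Std dev of returns: {volatility:.6f}")
```

The point is that every figure comes from executed arithmetic, so the same input always yields the same output.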

The Technical Blueprint: Uploading and Querying Financial Documents

When dealing with enterprise-grade financial analysis, software engineers are rarely dealing with small text snippets. Annual reports, initial public offering prospectuses, and syndicated loan agreements are massive files, often exceeding tens of megabytes in size. Passing these massive documents directly inline as base64-encoded strings within a REST API payload is highly inefficient. It severely increases network latency, consumes excessive bandwidth, and strictly limits the application’s ability to reference the exact same document across multiple, independent queries.

The recommended practice for production environments is to use the dedicated Gemini Files API. This service allows developers to upload large media files (supporting documents up to 100MB per individual PDF) directly to secure backend servers, where they are stored temporarily for 48 hours. Upon successful upload, the API returns a Uniform Resource Identifier (URI) that developers can then reference in their generation prompts.

Furthermore, recent architectural updates to the Gemini ecosystem have drastically streamlined enterprise data integration. Developers can now bypass the manual upload step entirely if their financial data already resides within cloud infrastructure. The API supports direct integration with Google Cloud Storage (GCS) buckets, allowing you to pass GCS URIs natively. Additionally, it supports fetching documents via secure, pre-signed HTTPS URLs from external cloud providers, eliminating the need to download massive financial datasets to your own application backend just to forward them to the AI model.
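Because uploaded files expire, a pipeline that reuses the same document across many queries needs to know when a fresh upload is due. The helper below is a minimal sketch based on the 48-hour retention window described above; the one-hour safety margin and the function name are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

FILE_RETENTION = timedelta(hours=48)  # retention window described above
SAFETY_MARGIN = timedelta(hours=1)    # hypothetical buffer before expiry


def needs_reupload(uploaded_at: datetime, now: Optional[datetime] = None) -> bool:
    """Return True once an uploaded file is expired or about to expire."""
    current = now or datetime.now(timezone.utc)
    return current - uploaded_at >= FILE_RETENTION - SAFETY_MARGIN


uploaded = datetime(2026, 3, 1, 9, 0, tzinfo=timezone.utc)
print(needs_reupload(uploaded, now=datetime(2026, 3, 2, 9, 0, tzinfo=timezone.utc)))
print(needs_reupload(uploaded, now=datetime(2026, 3, 3, 8, 30, tzinfo=timezone.utc)))
```

Storing the upload timestamp next to the file name in your own database keeps this check independent of any extra API call.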

Below is a practical, production-ready Python implementation demonstrating how to upload a corporate earnings report and perform an initial structural analysis. This is the exact type of robust, scalable infrastructure our engineering team at Tool1.app frequently architects for our financial sector partners, ensuring high availability and secure data handling.

```python
import os
import time

from google import genai
from google.genai import types

# Initialize the Gemini client.
# Ensure your GOOGLE_API_KEY is securely stored in your environment variables.
client = genai.Client(api_key=os.getenv("GOOGLE_API_KEY"))


def upload_and_analyze_financial_report(file_path: str):
    print(f"Initiating secure upload for {file_path} via the Files API...")

    # Upload the document via the Files API.
    # This decouples the heavy file transfer from the actual inference request.
    uploaded_file = client.files.upload(file=file_path)
    print(f"File uploaded successfully. Server URI assigned: {uploaded_file.uri}")

    # Financial documents are often large and complex, so verify the processing
    # state before querying to ensure the PDF has been fully ingested.
    while True:
        file_info = client.files.get(name=uploaded_file.name)
        if file_info.state.name == "ACTIVE":
            print("Document processing complete. The file is ready for multimodal analysis.")
            break
        elif file_info.state.name == "FAILED":
            raise RuntimeError("Critical Error: Document processing failed on the remote server.")
        print("Backend processing document... polling state in 5 seconds.")
        time.sleep(5)

    # Construct the analytical prompt.
    # Modular prompts targeting specific sections yield more actionable results.
    prompt = """
    You are an expert financial analyst acting as an automated intelligence agent.
    Review this comprehensive quarterly earnings report.
    1. Identify the main macroeconomic headwinds and tailwinds mentioned by the executive management team.
    2. Summarize the company's current liquidity position, explicitly extracting the numerical
       values for total cash, cash equivalents, and short-term investments.
    3. Identify any subtle changes in the 'Risk Factors' section compared to standard historical guidance.
    """

    print("Executing Gemini 3.1 Pro deep-reasoning analysis...")

    # Generate content using the flagship model for complex reasoning and high recall.
    response = client.models.generate_content(
        model='gemini-3.1-pro-preview',
        contents=[
            # Part.from_uri takes the keyword `file_uri`, not `uri`.
            types.Part.from_uri(file_uri=uploaded_file.uri, mime_type="application/pdf"),
            prompt,
        ],
    )

    print("\n--- Automated Financial Analysis Output ---")
    print(response.text)

    # Security and storage best practice:
    # explicitly delete the file from the server when the pipeline completes.
    client.files.delete(name=uploaded_file.name)
    print("\nTemporary financial document securely purged from the server.")


# Example pipeline execution
# upload_and_analyze_financial_report("Q3_2026_Consolidated_Financial_Results.pdf")
```

This specific architecture ensures that the heavy lifting of document ingestion is entirely decoupled from the actual neural inference request. This drastically improves response times and system stability when executing complex multi-turn chats or running parallel, asynchronous queries against the same master financial document.
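One caveat about the function above: its polling loop waits indefinitely if the file never leaves the processing state. A bounded variant is sketched below. The helper name, the default timeout, and the injected `get_state` callable are illustrative choices; in the real pipeline `get_state` would wrap `client.files.get(name=...).state.name`.

```python
import time
from typing import Callable


def wait_until_active(
    get_state: Callable[[], str],
    timeout_s: float = 300.0,
    interval_s: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> None:
    """Poll get_state() until it returns ACTIVE, fails, or the timeout elapses."""
    deadline = time.monotonic() + timeout_s
    while True:
        state = get_state()
        if state == "ACTIVE":
            return
        if state == "FAILED":
            raise RuntimeError("Document processing failed on the remote server.")
        if time.monotonic() >= deadline:
            raise TimeoutError(f"File still in state {state!r} after {timeout_s} seconds.")
        sleep(interval_s)


# Simulated state sequence so the sketch runs without network access.
states = iter(["PROCESSING", "PROCESSING", "ACTIVE"])
wait_until_active(lambda: next(states), sleep=lambda seconds: None)
print("File is ready.")
```

Passing the state lookup in as a callable also makes the waiting logic easy to unit test without touching the API.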

Enforcing Deterministic Predictability: The Necessity of Structured JSON Output

Extracting a beautifully written, natural language summary of a corporate balance sheet is an impressive demonstration of artificial intelligence, but it is largely useless for rigorous enterprise software automation. If your goal is to automatically update a corporate risk dashboard, trigger a high-frequency trading algorithm, or populate an immutable compliance database, the AI model must return data in a strictly typed, predictably formatted programmatic structure.

This requirement introduces the concept of “Controlled Generation” or “JSON Mode,” which is arguably the most critical feature of the Gemini API for business applications. By defining a rigorous Response Schema in your API call, you forcefully constrain the large language model. You force it to abandon conversational pleasantries, markdown formatting, and unpredictable text generation, compelling it to output nothing but valid, parseable JSON that adheres exactly to your predefined data contract.

Recent enhancements to the Gemini API have introduced deep, native support for JSON Schema, including full out-of-the-box compatibility with modern data validation libraries like Pydantic for Python and Zod for JavaScript. By defining your expected financial metrics as rigorous Pydantic models, you guarantee absolute type safety. The API will ensure that numerical values are returned strictly as floats or integers, dates are returned as standardized ISO strings, and complex nested arrays are properly formatted.

Furthermore, the API now supports advanced JSON Schema keywords, including anyOf for conditional union structures, $ref for recursive schemas, and strict definitions for additionalProperties. By explicitly setting additionalProperties to false, you completely eliminate the risk of the model hallucinating unexpected fields—preventing the model from appending a random, fabricated key-value pair to a sensitive financial payload.
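For readers who prefer raw JSON Schema over Pydantic, the sketch below shows what such a contract can look like, together with a defensive client-side check for unexpected keys. The field names are hypothetical, and which schema keywords a given model version accepts should be confirmed against the current API documentation.

```python
import json

# Hypothetical schema for a single extracted metric.
METRIC_SCHEMA = {
    "type": "object",
    "properties": {
        "metric_id": {"type": "string"},
        # anyOf expresses a union: a number, or null when the value is absent.
        "value": {"anyOf": [{"type": "number"}, {"type": "null"}]},
    },
    "required": ["metric_id", "value"],
    "additionalProperties": False,
}


def unexpected_keys(payload: dict, schema: dict) -> set[str]:
    """Return keys present in the payload but not declared in the schema."""
    return set(payload) - set(schema["properties"])


payload = json.loads('{"metric_id": "net_revenue", "value": 1250.5, "confidence": 0.9}')
print(unexpected_keys(payload, METRIC_SCHEMA))
```

Even when the API enforces the schema, a check like this is cheap insurance at the boundary where model output enters a financial database.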

Architecting the Automation Pipeline: A Pydantic Code Example

Let us examine a practical real-world scenario. You are tasked with extracting core metrics from the balance sheet and Profit & Loss (P&L) statement embedded deep within an unstructured, 200-page PDF report. Here is how you enforce strict JSON output using the Gemini API and Pydantic validation to create a robust data extraction pipeline.

```python
import os
from typing import List, Optional

from google import genai
from pydantic import BaseModel, Field

client = genai.Client(api_key=os.getenv("GOOGLE_API_KEY"))


# 1. Define the data schema using Pydantic.
# This acts as the blueprint the model output must follow.
class FinancialMetric(BaseModel):
    metric_id: str = Field(description="A unique, snake_case identifier (e.g., net_revenue, gross_margin)")
    metric_name: str = Field(description="The exact textual name of the metric as it appears in the PDF table")
    value_in_millions_eur: float = Field(description="The numerical value extracted, converted strictly to millions of Euros")
    is_estimate: bool = Field(description="Set to True if the numerical value includes hedging words like 'approximately', 'projected', or 'guidance'")


class BalanceSheetExtraction(BaseModel):
    company_name: str
    reporting_period: str = Field(description="The fiscal quarter and year, e.g., Q3 2026")
    original_currency_used: str = Field(description="The original currency found in the document before EUR conversion")
    core_metrics: List[FinancialMetric] = Field(description="A comprehensive list of all extracted line items")
    auditor_notes_summary: Optional[str] = Field(description="A brief summary of any specific risk factors or going concern notices mentioned in the footnotes. Can be null.")


def extract_structured_financials(file_name: str):
    """Extract metrics from a file already uploaded via the Files API.

    `file_name` is the Files API resource name (uploaded_file.name), not the URI.
    """
    # 2. Craft a specific, directive prompt.
    prompt = """
    You are an automated, high-precision financial data extraction agent.
    Analyze the provided financial PDF document in its entirety.
    Locate the consolidated balance sheet and the income statement.
    Extract all key financial metrics exactly as requested by the schema.

    CRITICAL INSTRUCTION: If the original values in the report are not in Euros (€),
    you must mathematically approximate the conversion to Euros based on standard,
    recent exchange rates. You must note the original currency in the 'original_currency_used' field.
    Be absolutely precise with the numerical values. Do not hallucinate data that is not present.
    """

    print("Executing strict JSON-constrained data extraction...")

    # 3. Execute the API call with Controlled Generation configuration.
    response = client.models.generate_content(
        model='gemini-3.1-pro-preview',
        contents=[
            client.files.get(name=file_name),  # Assumes the document is already uploaded and ACTIVE
            prompt,
        ],
        config={
            'response_mime_type': 'application/json',
            'response_schema': BalanceSheetExtraction,
            'temperature': 0.1,  # Low temperature reduces run-to-run variation in the extraction
        },
    )

    # response.parsed holds a BalanceSheetExtraction instance built from the JSON output.
    # It can be converted to a dictionary, passed to a frontend dashboard, or saved via a database ORM.
    extracted_data = response.parsed
    return extracted_data
```

By setting the temperature to a very low value (e.g., 0.1) and relying on the response_schema parameter, you make a probabilistic language model behave far more predictably: the schema constrains the shape and types of the output, and the low temperature reduces run-to-run variation. Neither setting guarantees that the extracted values themselves are correct, so downstream validation remains worthwhile. This methodology forms the backbone of the custom automation solutions we build for our enterprise partners at Tool1.app, allowing them to process thousands of complex regulatory filings overnight with no human intervention in the extraction step.
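A response schema constrains the shape of the output, not the truth of the numbers inside it, so a cheap arithmetic check after extraction is a useful complement. The sketch below tests the balance sheet identity (assets equal liabilities plus equity). The metric identifiers and the tolerance are hypothetical and would need to match the `metric_id` values your own prompt produces.

```python
def check_balance_identity(metrics: dict[str, float], tolerance: float = 0.5) -> list[str]:
    """Return a list of problems; an empty list means the check passed."""
    required = ("total_assets", "total_liabilities", "total_equity")
    missing = [key for key in required if key not in metrics]
    if missing:
        return [f"missing metrics: {', '.join(missing)}"]

    gap = metrics["total_assets"] - (metrics["total_liabilities"] + metrics["total_equity"])
    if abs(gap) > tolerance:
        return [f"assets differ from liabilities + equity by {gap:.1f}"]
    return []


# Hypothetical extracted values, in millions of EUR.
extracted = {"total_assets": 1000.0, "total_liabilities": 600.0, "total_equity": 390.0}
print(check_balance_identity(extracted))
```

Records that fail a check like this can be routed to a human reviewer instead of being written straight to the database.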

Navigating Global Accounting Standards: IFRS versus US GAAP

When building an automated extraction tool, software engineers must account for the critical reality that global financial data is not universally standardized. A machine learning pipeline must be explicitly programmed—via its system prompts, its context windows, and its JSON schemas—to recognize, interpret, and handle the profound differences in international accounting frameworks. Failing to account for these differences will result in an AI model generating comparative analyses that are fundamentally flawed and financially dangerous.

The two dominant frameworks governing corporate finance are the International Financial Reporting Standards (IFRS) and the US Generally Accepted Accounting Principles (US GAAP).

IFRS is a truly global standard, currently mandated or permitted in over 140 jurisdictions around the world. This includes the entirety of the European Union. For example, in nations like Bulgaria, the application of IFRS is strictly required by law for all listed companies, banks, insurance agencies, and large unlisted limited liability entities. Conversely, GAAP is primarily restricted to the United States, governed by the Financial Accounting Standards Board (FASB) and mandated by the Securities and Exchange Commission (SEC) for domestic publicly traded firms.

The core philosophical distinction between these two systems severely impacts how an artificial intelligence model should extract and interpret financial data. US GAAP is fundamentally a rules-based system, offering highly detailed, industry-specific guidelines that leave very little room for creative interpretation. IFRS, on the other hand, is heavily principles-based, relying on broad guidelines that require the professional judgment and interpretation of the accounting team to reflect the true economic reality of a transaction.

For developers building an automated AI pipeline using the Gemini API, this philosophical divergence manifests in several highly technical data extraction challenges:

| Accounting Metric | IFRS (International & EU Standard) | US GAAP (United States Standard) | AI Extraction Challenge & Prompt Requirement |
| --- | --- | --- | --- |
| Inventory Valuation | Prohibits the Last In, First Out (LIFO) method. Permits FIFO, weighted-average cost, and specific identification. | Permits LIFO, FIFO, weighted-average cost, and specific identification. | If the AI is extracting Cost of Goods Sold (COGS) to calculate profit margins, it must recognize the accounting method. Using LIFO during inflationary periods raises COGS and therefore lowers reported net income relative to FIFO. An AI comparing a European (IFRS) company to an American (GAAP) company must be explicitly prompted to flag LIFO usage; otherwise, the automated comparative margin analysis is invalid. |
| Asset Revaluation | Permits companies to revalue certain fixed assets (like property or equipment) upward to reflect current fair market value, provided it can be reliably measured. | Strictly prohibits upward revaluations. Assets must be recorded and kept at historical cost, minus depreciation. | If your JSON schema extracts “Total Asset Value,” the Gemini API must be instructed to scan the document’s footnotes for revaluation disclosures. The raw top-line numbers between the two frameworks are not apples-to-apples, and the AI must normalize them. |
| Development Costs | Internal development costs can be capitalized (recorded as an asset on the balance sheet) if specific strict criteria are met demonstrating future economic benefit. | Internal development costs must generally be expensed immediately as incurred on the income statement. | Extracting R&D expenditures requires the AI to understand that under IFRS, a highly innovative company might look more profitable on paper in the short term because costs are capitalized rather than expensed. The prompt must instruct the model to separate capitalized vs. expensed R&D. |

To programmatically handle these discrepancies, your Pydantic validation schema should always include a mandatory field for accounting_framework_detected. Furthermore, the foundational system prompt should explicitly instruct the Gemini API to scan the document’s introductory notes and auditor opinions to classify the report’s framework before extracting a single numerical value.
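A sketch of how that framework field and the table's three pitfalls could be carried through the pipeline is shown below. It uses plain dataclasses so it runs without dependencies; in the Pydantic schema the same enum could type the `accounting_framework_detected` field. All class, field, and company names are illustrative.

```python
from dataclasses import dataclass
from enum import Enum


class AccountingFramework(str, Enum):
    IFRS = "IFRS"
    US_GAAP = "US_GAAP"
    UNKNOWN = "UNKNOWN"


@dataclass
class ReportProfile:
    company: str
    framework: AccountingFramework
    uses_lifo: bool = False
    has_asset_revaluation: bool = False
    capitalizes_development_costs: bool = False


def comparability_warnings(a: ReportProfile, b: ReportProfile) -> list[str]:
    """List reasons why raw figures from two reports should not be compared directly."""
    warnings = []
    if AccountingFramework.UNKNOWN in (a.framework, b.framework):
        warnings.append("framework not detected for at least one report")
    if a.uses_lifo != b.uses_lifo:
        warnings.append("inventory: only one company uses LIFO, so margins are not comparable")
    if a.has_asset_revaluation != b.has_asset_revaluation:
        warnings.append("assets: only one company carries upward revaluations")
    if a.capitalizes_development_costs != b.capitalizes_development_costs:
        warnings.append("development costs: capitalized by only one company")
    return warnings


# Hypothetical companies.
eu_co = ReportProfile("EuroTech AD", AccountingFramework.IFRS,
                      has_asset_revaluation=True, capitalizes_development_costs=True)
us_co = ReportProfile("AmeriCorp Inc.", AccountingFramework.US_GAAP, uses_lifo=True)
for warning in comparability_warnings(eu_co, us_co):
    print(warning)
```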

Deep Dive: Transformative Business Use Cases for AI Financial Analysis

The theoretical and architectural capabilities of the Gemini API are undeniably impressive, but their true enterprise value is only realized when applied to specific, high-friction business workflows. Financial teams deal with highly specialized regulatory environments, immense time pressure, and deeply siloed data structures. Here are the most impactful, real-world use cases where large-context multimodal models are currently driving massive return on investment.

1. Automating EU CSRD and ESG Compliance Reporting

Environmental, Social, and Governance (ESG) reporting is currently undergoing a massive, disruptive regulatory shift. In 2026, the European Union’s Corporate Sustainability Reporting Directive (CSRD) regulations drastically altered the corporate compliance landscape. While recent “Omnibus” agreements and revised thresholds have adjusted the scope—focusing mandatory, heavy reporting primarily on mega-corporations with over 1,000 employees and a net turnover exceeding €450 million—the ripple effects throughout the economy are massive.

Even smaller, out-of-scope Small and Medium Enterprises (SMEs) are facing immense, unavoidable pressure. Large enterprise clients are legally mandated to track their Scope 3 emissions (the emissions produced by their entire supply chain). Consequently, these large corporations are demanding highly granular sustainability data from every small vendor and partner they work with.

The fundamental challenge with ESG reporting is that the required data is notoriously fragmented, unstructured, and qualitative. A company must pull raw energy consumption data from hundreds of facility PDF utility bills, aggregate employee diversity metrics from legacy HR software exports, and parse supply chain ethical audits from third-party vendor CSV files.

Using the Gemini API, businesses can architect an “ESG Data Detective” pipeline. The AI system can autonomously ingest hundreds of disparate internal policy documents, cross-reference them directly against the dense, complex European Sustainability Reporting Standards (ESRS), and automatically map internal unstructured data to the required regulatory frameworks. The model can highlight critical data gaps, use its reasoning capabilities to validate numerical carbon claims against qualitative narrative statements to prevent accidental “greenwashing” inconsistencies, and generate the complete first draft of the compliance report. Industry data suggests that deploying LLMs for this specific task can reduce total ESRS reporting time and manual labor by up to 50%.
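The gap-highlighting and consistency-checking steps can be backed by simple deterministic code once the model has returned structured values. The sketch below assumes the extraction arrives as a flat dictionary; the datapoint labels are illustrative placeholders, not official ESRS identifiers, and the 1% tolerance is an arbitrary choice.

```python
from typing import Optional

# Illustrative labels only; these are not official ESRS datapoint identifiers.
REQUIRED_DATAPOINTS = (
    "scope_1_emissions_tco2e",
    "scope_2_emissions_tco2e",
    "scope_3_emissions_tco2e",
    "total_energy_consumption_mwh",
)


def find_gaps(extracted: dict[str, Optional[float]]) -> list[str]:
    """List required datapoints that are missing or null in the extraction."""
    return [key for key in REQUIRED_DATAPOINTS if extracted.get(key) is None]


def total_matches_scopes(
    extracted: dict[str, Optional[float]], claimed_total: float, tolerance: float = 0.01
) -> bool:
    """Check a total quoted in the narrative against the sum of the scope figures."""
    scopes = [extracted.get(f"scope_{n}_emissions_tco2e") for n in (1, 2, 3)]
    if any(value is None for value in scopes):
        return False
    return abs(sum(scopes) - claimed_total) <= tolerance * claimed_total


# Hypothetical extraction with one missing datapoint.
extracted = {
    "scope_1_emissions_tco2e": 1200.0,
    "scope_2_emissions_tco2e": 800.0,
    "scope_3_emissions_tco2e": None,
    "total_energy_consumption_mwh": 5400.0,
}
print(find_gaps(extracted))
print(total_matches_scopes(extracted, claimed_total=5000.0))
```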

2. Streamlining KYC and AML Due Diligence Workflows

Know Your Customer (KYC) and Anti-Money Laundering (AML) compliance is a massive, labor-intensive industry. In the United States and Europe alone, approximately four million professionals work in compliance and auditing functions. These professionals spend countless hours manually sifting through vast amounts of unstructured data: international passports, complex corporate registry documents, proof of address utility bills in foreign languages, and dense legal ownership structures. Traditional workflows involve manually reading these documents and keying the data into a central silo—a process inherently limited by human capacity and highly prone to fatigue-induced errors.

The Gemini API’s native multimodal capabilities are perfectly engineered for this exact problem. An automated pipeline can ingest a scanned, low-resolution PDF of a complex foreign corporate registry. The vision encoder bypasses the visual noise, autonomously extracts the Ultimate Beneficial Owners (UBOs), accurately identifies their complex tiered ownership percentages, and outputs a structured, relational JSON web.

This JSON data can then be programmatically fired against international sanctions and politically exposed persons (PEP) watchlists in milliseconds. Because the model possesses deep reasoning capabilities, it can also be prompted to flag semantic anomalies or suspicious patterns in the documents that might indicate fraudulent activity, acting as an intelligent, tireless first line of defense before a human compliance officer ever touches the file.
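Once the ownership structure is available as structured data, computing effective stakes through tiered holdings is plain arithmetic: multiply the fractions along each path and sum across paths. The sketch below assumes an acyclic ownership graph, a 25% reporting threshold, and exact-name watchlist matching, all of which are simplifications; every name in it is fictional.

```python
from collections import defaultdict

# (owner, owned_entity, fraction) edges, as a model might extract them.
EDGES = [
    ("Alice", "HoldCo A", 0.60),
    ("Bob", "HoldCo A", 0.40),
    ("HoldCo A", "Target Ltd", 0.50),
    ("Alice", "Target Ltd", 0.10),
    ("Carol", "Target Ltd", 0.40),
]


def effective_ownership(edges, target: str) -> dict[str, float]:
    """Sum each ultimate owner's stake in the target across all ownership paths."""
    owners_of = defaultdict(list)
    for owner, owned, fraction in edges:
        owners_of[owned].append((owner, fraction))

    totals = defaultdict(float)

    def walk(entity: str, share: float) -> None:
        for owner, fraction in owners_of.get(entity, []):
            if owner in owners_of:      # owner is itself owned: keep climbing
                walk(owner, share * fraction)
            else:                       # no recorded owners: treat as ultimate owner
                totals[owner] += share * fraction

    walk(target, 1.0)
    return dict(totals)


def beneficial_owners(edges, target: str, threshold: float = 0.25) -> list[str]:
    """Return ultimate owners whose effective stake meets the threshold."""
    stakes = effective_ownership(edges, target)
    return sorted(name for name, stake in stakes.items() if stake >= threshold)


def screen(names: list[str], watchlist: set[str]) -> list[str]:
    """Naive exact-match screening; real systems use fuzzy matching and identifiers."""
    listed = {entry.casefold() for entry in watchlist}
    return [name for name in names if name.casefold() in listed]


ubos = beneficial_owners(EDGES, "Target Ltd")
print(ubos)
print(screen(ubos, watchlist={"carol"}))
```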

3. Accelerated Equity Research and Market Surveillance

For professionals in investment banking, venture capital, and private equity, the speed of insight is the ultimate competitive advantage. When a publicly traded company releases its quarterly earnings, armies of analysts scramble to read the reports, update their financial models, and compare the new data against previous management guidance and competitor performance.

By leveraging the massive two-million-token context window of Gemini 3.1 Pro, an investment firm can completely automate this grueling process. An application can be programmed to instantly fetch a newly published 10-Q filing from the SEC EDGAR database the moment it drops. It can then upload that new filing alongside the company’s previous eight quarterly filings, the last three annual 10-K reports, and transcripts from competitor earnings calls.

The prompt can instruct the API to perform a deep longitudinal analysis: “Identify any subtle, semantic changes in the ‘Risk Factors’ section compared to the previous three quarters. Extract the specific revenue figures for the Artificial Intelligence hardware division across all provided documents, calculate the compound quarter-over-quarter growth rate, and identify any discrepancies between management’s stated goals and the raw numerical data. Format the entire output as a JSON array.” What previously took a senior analyst several hours of tedious cross-referencing is accomplished by the API with higher accuracy in under thirty seconds.
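A lightweight deterministic pass can complement that prompt by pre-flagging which sentences in the Risk Factors section actually changed, so the model's semantic commentary can be spot-checked. The sketch below uses the standard library's `difflib`; the period-based sentence splitting is deliberately naive, and the sample text is invented.

```python
import difflib


def split_sentences(text: str) -> list[str]:
    """Naive splitter; it will mis-split decimals and abbreviations."""
    return [part.strip() for part in text.split(".") if part.strip()]


def changed_sentences(previous: str, current: str) -> dict[str, list[str]]:
    """Return sentences added to or removed from the current filing."""
    diff = list(difflib.ndiff(split_sentences(previous), split_sentences(current)))
    return {
        "added": [line[2:] for line in diff if line.startswith("+ ")],
        "removed": [line[2:] for line in diff if line.startswith("- ")],
    }


# Invented excerpts from two consecutive filings.
previous_quarter = "We face currency risk. Supply chains are stable."
current_quarter = "We face currency risk. Supply chains face disruption from export controls."
print(changed_sentences(previous_quarter, current_quarter))
```

Feeding only the changed sentences back to the model alongside the full filings is one way to focus its attention on what is new.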

Managing the Economics: Understanding API Pricing Strategy in 2026

When architecting scalable, enterprise-grade AI software, understanding the unit economics of API calls is just as critical as writing clean, efficient code. Processing massive financial PDFs and thousands of rows of CSV data consumes a significant number of tokens. Blindly pushing all your data to the most expensive model without a strategic architecture can quickly erode the profit margins of your software application.

Google offers the Gemini API through two primary gateways: Google AI Studio (designed for rapid developer prototyping) and Vertex AI (designed for robust, enterprise cloud infrastructure). In 2026, Google solidified its model tiering strategy, creating a very clear architectural choice for developers between maximum reasoning depth (the Pro tier) and high-speed cost efficiency (the Flash tier).

To effectively budget for an automated financial analysis pipeline, consider the following approximate pricing structures (converted to Euros to reflect the European business context, utilizing an approximate conversion rate where applicable).

The Heavyweight Reasoning Engine: Gemini 3.1 Pro

Gemini 3.1 Pro (and its immediate predecessor 3 Pro) is the flagship reasoning engine. It operates with a slower time-to-first-token but delivers profound analytical depth. It should be strictly reserved for highly complex tasks: deep comparative analysis across multiple annual reports, navigating highly ambiguous regulatory texts (like ESRS framework mapping), and executing complex mathematical logic where absolute precision is required.

- Standard Input Costs (Contexts ≤ 200,000 tokens): Approximately €1.84 per 1 million tokens.

- Standard Output Costs (Contexts ≤ 200,000 tokens): Approximately €11.04 per 1 million tokens.

- Long Context Premium (Contexts > 200,000 tokens): If your pipeline is pushing massive document libraries that breach the 200k threshold (scaling up to the 2M limit), the computational complexity increases significantly. Consequently, the input price doubles to roughly €3.68 per 1 million tokens, and the output price rises by half to roughly €16.56 per 1 million tokens.

The High-Volume Workhorse: Gemini 3 Flash

The release of the Gemini 3 Flash model redefined the economics of the AI market. Despite being positioned as a “budget” or “lite” model, benchmark testing reveals it actually outperforms older generation flagship models on numerous coding and data extraction tasks, while operating at blazing, sub-second speeds. For high-volume, routine financial tasks—such as extracting five specific fields from tens of thousands of standard invoices, formatting clean CSV ledger data, or rapidly summarizing daily market news feeds—Flash is the economically superior and architecturally correct choice.

- Input Costs: Approximately €0.46 per 1 million tokens.

- Output Costs: Approximately €2.76 per 1 million tokens.
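The rates listed above can be turned into a small per-request cost estimator. This is a rough budgeting sketch: the Euro figures are the approximate ones quoted in this article, the `"pro"` and `"flash"` labels are hypothetical keys rather than real model identifiers, and it assumes the long-context rate applies to the whole request once the prompt exceeds 200,000 tokens.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rate:
    input_eur_per_m: float   # EUR per 1 million input tokens
    output_eur_per_m: float  # EUR per 1 million output tokens


LONG_CONTEXT_THRESHOLD = 200_000

# Approximate rates as quoted in this article.
PRO_STANDARD = Rate(1.84, 11.04)
PRO_LONG_CONTEXT = Rate(3.68, 16.56)
FLASH = Rate(0.46, 2.76)


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost in EUR of a single request."""
    if model == "pro":
        # Assumption: the premium rate covers the entire request.
        long_context = input_tokens > LONG_CONTEXT_THRESHOLD
        rate = PRO_LONG_CONTEXT if long_context else PRO_STANDARD
    elif model == "flash":
        rate = FLASH
    else:
        raise ValueError(f"unknown model label: {model}")
    total = input_tokens * rate.input_eur_per_m + output_tokens * rate.output_eur_per_m
    return total / 1_000_000


# Same 100k-token document and 2k-token answer on both tiers.
pro_cost = request_cost("pro", 100_000, 2_000)
flash_cost = request_cost("flash", 100_000, 2_000)
# A 1M-token document library crosses the long-context threshold.
library_cost = request_cost("pro", 1_000_000, 10_000)
print(f"Pro: {pro_cost:.4f}  Flash: {flash_cost:.4f}  Pro long context: {library_cost:.4f}")
```

Running an estimate like this over an expected monthly workload makes the routing decision between the two tiers concrete before any code reaches production.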

Strategic Cost Mitigation: Implementing Context Caching

For financial software applications, users will frequently query the exact same large document repeatedly. For example, you might upload a dense 500-page IPO prospectus and allow an investment team to ask fifty different questions about it in a conversational chat interface over the course of a week. Paying the heavy input token cost to re-process all 500 pages on every single query is architecturally inefficient and financially ruinous.

To solve this economic bottleneck, the Gemini API supports Context Caching. Developers can upload the massive financial document and programmatically “pin” it to the cache. You pay an hourly storage fee (approximately €4.14 per 1 million tokens per hour) for as long as the cache is kept alive, but all subsequent queries against that cached document receive a substantial discount on input token costs, often resulting in a 50% to 75% reduction in API spend. Because storage is billed for every hour the cache exists, the net saving depends on how many queries hit the document during that time. Implementing robust context caching, with cache lifetimes matched to actual usage, is an essential architectural requirement for any production-grade financial application dealing with large document repositories.
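Whether caching pays off depends on how densely the document is queried while the cache is alive. The sketch below computes the break-even query rate from the approximate figures quoted in this article; the function name is hypothetical, and it ignores any one-off cost of creating the cache.

```python
def breakeven_queries_per_hour(
    input_rate: float, storage_rate: float, discount: float
) -> float:
    """Queries per hour at which caching starts to save money.

    input_rate:   EUR per 1M input tokens without caching
    storage_rate: EUR per 1M cached tokens per hour
    discount:     fraction of the input cost saved on a cached query (0-1)

    Both sides scale with document size, so the token count cancels out.
    """
    if not 0 < discount <= 1:
        raise ValueError("discount must be in (0, 1]")
    saving_per_query = input_rate * discount
    return storage_rate / saving_per_query


# Article figures: Pro standard input rate, hourly storage fee, 50-75% discount.
best_case = breakeven_queries_per_hour(1.84, 4.14, 0.75)
worst_case = breakeven_queries_per_hour(1.84, 4.14, 0.50)
print(f"Break-even: {best_case:.1f} to {worst_case:.1f} queries per hour")
```

If these figures hold, the cache needs roughly three to four and a half queries per hour to pay for itself. Fifty questions spread evenly over a week works out to about 0.3 queries per hour, so the cache is better created at the start of an active working session with a short time-to-live than kept alive for the entire week.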

Navigating the Frontier of Financial Automation

The deep integration of the Gemini API into corporate financial workflows is not merely a localized technological upgrade; it represents a fundamental shift in how organizations process human knowledge. The ability to pass millions of tokens of unstructured text, complex multimodal PDF tables, and raw CSV ledger data into a reasoning engine whose output can be constrained to schema-conforming JSON addresses one of the oldest, most expensive, and most frustrating problems in enterprise data management.

However, the technology is only as effective as its specific implementation. Building resilient, production-ready AI pipelines requires deep, multi-disciplinary expertise. It requires mastery of prompt engineering to guide the model’s logic, rigorous Pydantic schema validation to reject malformed or out-of-range output before it reaches downstream systems, asynchronous API orchestration to manage latency, and sophisticated cost-optimization strategies like context caching to ensure economic viability. It requires a nuanced architectural understanding of exactly when to deploy the heavy, deliberate reasoning of Gemini 3.1 Pro, and when to leverage the sheer, cost-effective speed of Gemini 3 Flash.

As international regulatory burdens like the EU CSRD continue to expand, and the pace of global financial markets perpetually accelerates, the organizations that thrive in the coming decade will be those that successfully automate their data ingestion pipelines. By doing so, they free their highly paid human analysts to focus on high-level strategic decision-making rather than the tedious, error-prone drudgery of manual data entry.

Partner with Tool1.app to Build Your AI Financial Infrastructure

Automate your data analysis workflow. Tool1.app can integrate powerful AI into your financial systems, transforming your static documents into actionable, structured intelligence. Our dedicated team of expert software engineers and AI specialists possesses deep, battle-tested experience in building custom, highly secure, and immensely scalable data pipelines using the absolute latest large language model technologies, including the advanced Gemini API.

Whether your enterprise needs to automate complex ESG compliance reporting, streamline international KYC due diligence, or build a bespoke, lightning-fast internal dashboard for rapid equity research and market surveillance, we have the technical acumen and industry knowledge to deliver. We handle the intense complexities of multimodal document parsing, strict JSON schema enforcement, backend Python code execution environments, and secure cloud infrastructure deployment so your leadership team can focus entirely on your core business objectives.

Do not let your most valuable market insights remain trapped and inaccessible within unstructured PDFs and sprawling, unmanageable spreadsheets. Contact Tool1.app today for a comprehensive technical consultation, and let us architect a custom artificial intelligence or Python automation solution tailored specifically to conquer your enterprise’s unique financial data challenges.
