The AI Regulation Landscape: How the EU AI Act Affects Your Custom App

Table of Contents

- The 2026 Compliance Timeline: A Critical Status Check

- Defining the Scope: Is Your App High-Risk?

- The High-Risk Compliance Stack: A Technical Blueprint

- Engineering Transparency: Chatbots and GenAI (Article 50)

- Conformity Assessments: Proving You Follow the Rules

- Post-Market Monitoring and Continuous Compliance

- SME Support: Accessing Sandboxes

- Strategy: Your Roadmap to August 2026

- Conclusion: Compliance is a Competitive Advantage


The digital frontier is closing. For the past decade, software development—particularly in the realm of artificial intelligence and automation—has operated in a permissive environment where innovation outpaced regulation. That era effectively ends in 2026. As we stand today, in February 2026, the European Union’s Artificial Intelligence Act (EU AI Act) has transitioned from a theoretical policy debate into a hard-coded operational reality. The “grace periods” are evaporating, and the compliance clock is ticking toward the most consequential deadline in the history of algorithmic regulation: August 2, 2026.

For business owners, CTOs, and product managers, this shift represents a fundamental change in how software must be architected, deployed, and maintained. It is no longer sufficient to ask, “Does it work?” or “Is it profitable?” The mandatory question is now, “Is it compliant?” The penalties for getting this wrong can be existential: fines of up to €35 million or 7% of worldwide annual turnover (whichever is higher) for prohibited practices, up to €15 million or 3% for most other infringements, including breaches of the high-risk obligations, and, perhaps more damaging, the forced withdrawal of non-compliant systems from the EU market.

At Tool1.app, we have spent the last year re-engineering our development pipelines to anticipate these shifts. We understand that compliance is not just legal paperwork; it is a technical challenge that requires precise engineering solutions. Whether you are running a custom Python automation for credit scoring or a GenAI-powered customer service chatbot, the rules of the game have changed. This comprehensive guide is designed to cut through the legal noise and provide a practical, operational roadmap for navigating the 2026 compliance landscape.

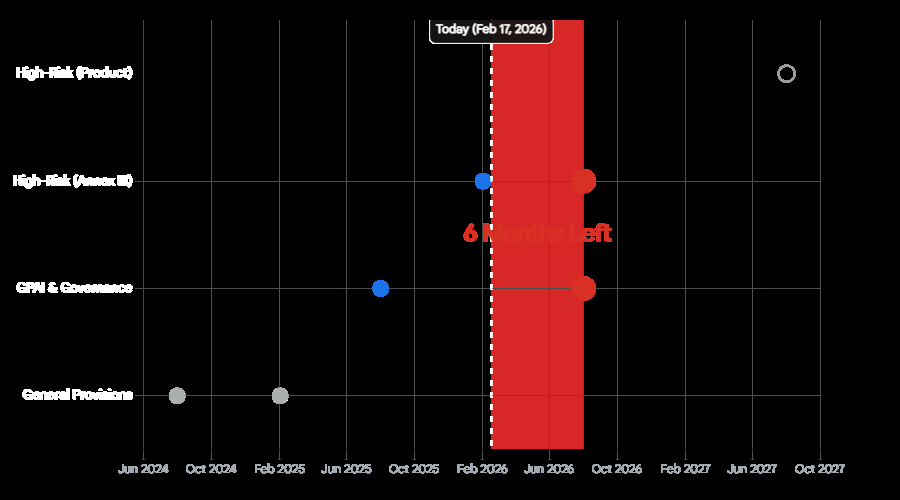

The 2026 Compliance Timeline: A Critical Status Check

Understanding the timeline is the first step in risk mitigation. The EU AI Act does not apply all at once; it rolls out in phased waves. However, the wave that hits in August 2026 is a tsunami for enterprise software.

The “Red Letter Date”: August 2, 2026

While the initial bans on “unacceptable risk” AI (such as social scoring and manipulative behavioral techniques) have applied since February 2, 2025, the business applications that carry real obligations mostly fall into the “High-Risk” category or the “Transparency” category. For High-Risk AI Systems (HRAIS) listed in Annex III, the full weight of the regulation applies on August 2, 2026, as do the Article 50 transparency obligations.

This means that from August 2, 2026, any system in these categories that is placed on the market or put into service must be fully compliant; systems already on the market before that date are covered by the legacy rules described next. There is no “beta testing” for compliance after this date. Systems found non-compliant can be immediately subjected to enforcement actions by national competent authorities.

Immediate Legacy System Impact

A critical nuance often missed by developers is the treatment of legacy systems. The AI Act applies to operators of high-risk AI systems placed on the market or put into service before August 2, 2026 only if, from that date, those systems undergo “significant changes” in their design. This creates a “compliance trigger” for your existing software maintenance. If you release a major version update to a legacy HR screening tool or a credit scoring algorithm after August 2026, you may inadvertently trigger the full compliance requirements for the entire system.

Furthermore, for General Purpose AI (GPAI) models that were already on the market before August 2025, the compliance transition period extends to August 2027. However, this exception is narrow and applies specifically to the models themselves (like the foundation model), not necessarily the applications built on top of them. Application developers using GPAI APIs must still adhere to the downstream obligations relevant to their specific use case by the 2026 deadline.

Defining the Scope: Is Your App High-Risk?

The EU AI Act adopts a risk-based approach, classifying systems into four categories: Unacceptable Risk, High Risk, Limited Risk, and Minimal Risk. For software agencies and their clients, the most dangerous ambiguity lies in the distinction between “Limited” and “High” risk. Misclassifying a High-Risk system as Limited Risk is the primary vector for regulatory liability.

The Logic of Classification

Classification is not determined by the complexity of the code (e.g., neural networks vs. decision trees) but by the intended purpose and the impact on fundamental rights.

Deep Dive: High-Risk Use Cases (Annex III)

If your custom application falls into one of the critical areas listed in Annex III, you face the heaviest compliance burden. These areas are chosen because they significantly impact people’s life chances, safety, or access to services.

Employment and Worker Management

This is a massive sector for custom software development. Any AI system used for recruitment or selection of natural persons is High-Risk.

- Examples: Resume parsing tools that filter candidates based on keywords; algorithms that rank job applicants; video interview analysis software that infers “personality traits”; automated tools for promotion, task allocation, or termination decisions.

- The Nuance: Even a simple Python script that sorts CVs by “years of experience” could be considered High-Risk if it serves as the primary filter for candidate selection, effectively automating a gatekeeping function.

Education and Vocational Training

AI systems used to determine access to education or to assess students are High-Risk.

- Examples: Automated proctoring systems for exams; grading algorithms; tools that assign students to specific learning tracks based on performance.

- Implication: EdTech platforms must now prove their grading algorithms are free from bias and do not disadvantage protected groups.

Essential Private and Public Services

This category captures a broad swath of the FinTech and InsurTech industries.

- Credit Scoring: AI systems used to evaluate the creditworthiness of natural persons or establish their credit score are High-Risk. This applies to traditional banks but also to “Buy Now, Pay Later” apps, peer-to-peer lending platforms, and alternative credit scoring models using non-traditional data (e.g., social media activity).

- Insurance: Risk assessment and pricing tools for life and health insurance are High-Risk.

- Exception: Systems used solely for the detection of financial fraud are generally exempt from the High-Risk classification. This is a critical distinction for FinTech developers.

Critical Infrastructure

AI systems intended to be used as safety components in the management and operation of road traffic or the supply of water, gas, heating, and electricity are High-Risk.

- Examples: Algorithms controlling traffic lights; predictive maintenance systems for power grids where failure could disrupt supply.

The “Prohibited” Zone: Where No App Can Go

Before discussing compliance, we must identify non-starters. The following practices are banned as of February 2025. No amount of documentation can make these legal:

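- Social Scoring: Evaluating or classifying people based on their social behavior or personal characteristics where the resulting score leads to detrimental treatment in unrelated contexts, or to treatment that is unjustified or disproportionate.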

- Untargeted Scraping: Building facial recognition databases by scraping images from the internet or CCTV (e.g., Clearview AI model).

- Emotion Recognition: Using AI to infer emotions in workplaces or educational institutions, except for medical or safety reasons. This effectively bans “AI interview coaches” that analyze candidate confidence or “student attention trackers” in schools.

- Biometric Categorization: Systems that infer sensitive data (race, political opinion, religion, sexual orientation) from biometric data.

- Real-time Remote Biometric Identification (RBI): Using facial recognition in public spaces for law enforcement is largely banned, with very narrow exceptions for serious crimes and terrorism.
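The classification logic above can be sketched as an ordered triage for an internal AI inventory: check prohibited practices first, then the Annex III areas, then the Article 50 transparency triggers, and fall back to minimal risk. This is a minimal sketch, not a legal test. The tag names, the AISystem record, and the triage function are hypothetical, and the tag sets cover only the examples discussed in this article, not the full text of Article 5 or Annex III.

```python
from dataclasses import dataclass, field

# Hypothetical tags for the prohibited practices listed above (not the full Article 5).
PROHIBITED = {
    "social_scoring",
    "untargeted_face_scraping",
    "emotion_recognition_work_or_school",
    "sensitive_biometric_categorization",
    "realtime_public_rbi_law_enforcement",
}

# Hypothetical tags for the Annex III areas discussed above (an illustrative subset).
# Financial fraud detection is deliberately absent, reflecting the carve-out.
ANNEX_III_AREAS = {
    "employment",
    "education",
    "credit_scoring",
    "life_health_insurance",
    "critical_infrastructure",
}


@dataclass
class AISystem:
    name: str
    intended_purposes: set[str] = field(default_factory=set)
    interacts_with_people: bool = False
    generates_content: bool = False


def triage(system: AISystem) -> str:
    """First-pass tier from intended purpose; model architecture plays no role."""
    if system.intended_purposes & PROHIBITED:
        return "unacceptable"
    if system.intended_purposes & ANNEX_III_AREAS:
        return "high"
    if system.interacts_with_people or system.generates_content:
        return "limited"  # Article 50 transparency duties
    return "minimal"


inventory = [
    AISystem("cv-ranker", {"employment"}),
    AISystem("support-bot", {"customer_support"}, interacts_with_people=True),
    AISystem("fraud-screen", {"fraud_detection"}),
]
for system in inventory:
    print(system.name, triage(system))
# cv-ranker high / support-bot limited / fraud-screen minimal
```

Ordering matters here: each system is reported under its most severe tier, so a high-risk system that also talks to users still comes back as high, even though it carries transparency duties as well.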

The High-Risk Compliance Stack: A Technical Blueprint

For companies building High-Risk AI Systems, compliance is an engineering challenge that requires a new “stack” of capabilities. You must build these features into your product before August 2026. At Tool1.app, we recommend treating these requirements as non-functional requirements (NFRs) akin to security or scalability.

A. Risk Management System (Article 9)

The AI Act requires a “continuous iterative process” for risk management throughout the entire lifecycle of the system. This is not a one-off spreadsheet; it is a dynamic system.

- Requirement: Identify and analyze known and foreseeable risks to health, safety, and fundamental rights.

- Implementation: Integrate risk assessment triggers into your CI/CD pipeline. Every time the model is retrained or a significant code change occurs, the risk profile must be re-evaluated.

- Mitigation Hierarchy: You must attempt to eliminate risks by design first. If that is not possible, implement mitigation measures. Finally, if risks remain, you must inform the user (deployer).
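One way to wire the re-evaluation trigger into a CI/CD pipeline is a gate that fails the build whenever the model artifact changes without a matching risk-assessment record. This is a minimal sketch, assuming a hypothetical risk_assessment.json file that stores the SHA-256 of the last model artifact a human reviewed; the file layout and function names are illustrative, not a prescribed format.

```python
import hashlib
import json
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash the model artifact in 1 MiB chunks so large files never load fully."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def risk_review_required(model_path: Path, record_path: Path) -> bool:
    """True if the model changed since the last recorded risk assessment."""
    if not record_path.exists():
        return True  # no assessment on file at all
    record = json.loads(record_path.read_text())
    return record.get("assessed_model_sha256") != sha256_of(model_path)


def record_assessment(model_path: Path, record_path: Path, reviewer: str) -> None:
    """Store the assessed model hash after a human has re-run the risk review."""
    record_path.write_text(json.dumps({
        "assessed_model_sha256": sha256_of(model_path),
        "reviewer": reviewer,
    }, indent=2))


def main(argv: list[str]) -> int:
    """CI entry point: a non-zero return value should fail the pipeline step."""
    model = Path(argv[0]) if argv else Path("model.bin")  # hypothetical default path
    record = Path(argv[1]) if len(argv) > 1 else Path("risk_assessment.json")
    if risk_review_required(model, record):
        print(f"Risk re-evaluation required: {model} is not covered by {record}")
        return 1
    print("Model matches the last recorded risk assessment.")
    return 0
```

A CI step would call sys.exit(main(sys.argv[1:])). Hashing the artifact rather than relying on commit messages means a silent retrain is caught just as reliably as an announced one.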

B. Data Governance and Lineage (Article 10)

Article 10 is perhaps the most technically demanding section for data scientists. It requires that training, validation, and testing datasets be subject to appropriate data governance.

- Data Quality: Datasets must be “relevant, sufficiently representative, and to the best extent possible, free of errors and complete in view of the intended purpose”. Perfectly error-free data is statistically impossible in machine learning, which is why the qualifier “to the best extent possible” matters: the practical reading is “free of systematic errors” or curation errors, rather than a demand for perfect data.

- Bias Detection: You are legally mandated to examine your data for potential biases.

- The GDPR Interplay: Uniquely, Article 10(5) of the AI Act allows providers to process special categories of personal data (e.g., race, ethnicity, health data) if strictly necessary for the purpose of bias detection and correction. This is a major exception to the general GDPR prohibition on processing sensitive data.

- Safeguards: To use this exception, you must apply state-of-the-art security, pseudonymization, and strict access controls. The data cannot be used for any other purpose and must be deleted once bias correction is complete.
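A common first check when examining data or outcomes for bias is to compare favorable-outcome rates across groups. This is a minimal sketch, assuming records with a hypothetical group attribute (which, under the Article 10(5) safeguards above, would be pseudonymized and access-controlled) and a binary selected outcome. The 0.8 threshold is the “four-fifths” heuristic from US employment practice, used here purely as an illustrative flag; the AI Act does not prescribe a specific metric or threshold.

```python
from collections import defaultdict
from typing import Iterable, Mapping


def selection_rates(records: Iterable[Mapping[str, object]]) -> dict[str, float]:
    """Share of records with a favorable outcome, per group."""
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for row in records:
        group = str(row["group"])
        totals[group] += 1
        positives[group] += 1 if row["selected"] else 0
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates: Mapping[str, float]) -> float:
    """Lowest group rate divided by highest group rate (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


def flag_groups(rates: Mapping[str, float], threshold: float = 0.8) -> list[str]:
    """Groups whose rate falls below `threshold` times the best-off group's rate."""
    highest = max(rates.values())
    return sorted(g for g, r in rates.items() if highest > 0 and r < threshold * highest)


# Illustrative data: group A selected 6 of 10 times, group B 3 of 10 times.
data = (
    [{"group": "A", "selected": True}] * 6 + [{"group": "A", "selected": False}] * 4
    + [{"group": "B", "selected": True}] * 3 + [{"group": "B", "selected": False}] * 7
)
rates = selection_rates(data)
print(disparate_impact_ratio(rates))  # 0.5
print(flag_groups(rates))  # ['B']
```

A flagged group is a prompt for investigation and documentation, not proof of unlawful bias; the findings and any corrective steps belong in the Article 10 data governance record.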

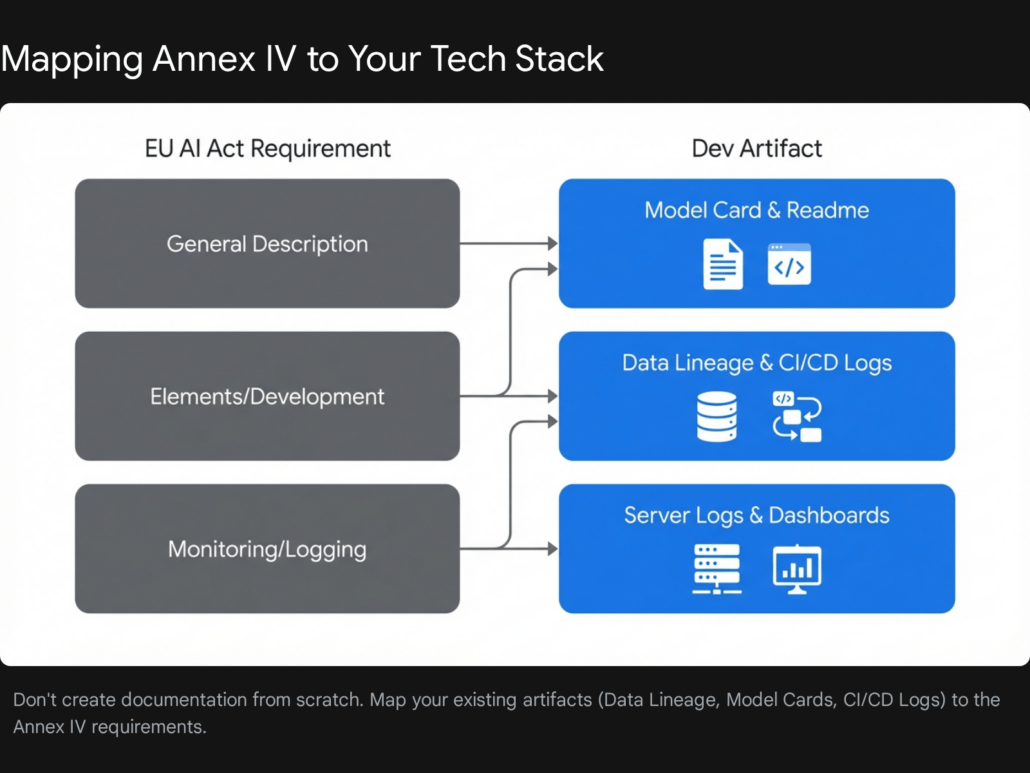

C. Technical Documentation (Annex IV)

You must maintain a comprehensive technical file that demonstrates compliance. This is effectively a “flight recorder” for your AI development process.

- Architecture: A detailed description of the system elements, data flows, and interactions with other software.

- Model Details: Algorithms used, design choices, and the rationale behind them.

- Data Sheets: Descriptions of the training data sources, preprocessing steps, and cleaning methodologies.

- Version Control: The Act requires you to track the “version of the system reflecting its relation to previous versions”. Tool1.app advises implementing semantic versioning for models (e.g., v1.2.0) alongside your code versioning.
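Keeping the technical file as a machine-checkable manifest next to the code makes gaps visible before an assessment. This is a minimal sketch, assuming a hypothetical manifest dictionary whose section names mirror the four items above (they are not official Annex IV headings), plus a helper that bumps a model's semantic version and records its predecessor.

```python
import re

# Hypothetical section names mirroring the checklist above, not official Annex IV headings.
REQUIRED_SECTIONS = ("architecture", "model_details", "data_sheets", "version")

SEMVER = re.compile(r"^v?(\d+)\.(\d+)\.(\d+)$")


def missing_sections(manifest: dict) -> list[str]:
    """Sections that are absent or empty in the technical-file manifest."""
    return [s for s in REQUIRED_SECTIONS if not manifest.get(s)]


def bump_version(version: str, part: str) -> str:
    """Increment the 'major', 'minor' or 'patch' component of a vX.Y.Z string."""
    match = SEMVER.match(version)
    if match is None:
        raise ValueError(f"not a semantic version: {version!r}")
    major, minor, patch = (int(x) for x in match.groups())
    if part == "major":
        major, minor, patch = major + 1, 0, 0
    elif part == "minor":
        minor, patch = minor + 1, 0
    elif part == "patch":
        patch += 1
    else:
        raise ValueError(f"unknown part: {part!r}")
    return f"v{major}.{minor}.{patch}"


def new_model_version(manifest: dict, part: str, change_note: str) -> dict:
    """Return a copy of the manifest whose new version links to its predecessor."""
    current = manifest["version"]["current"]
    updated = dict(manifest)  # shallow copy; the old version entry is left untouched
    updated["version"] = {
        "current": bump_version(current, part),
        "previous": current,
        "change_note": change_note,
    }
    return updated


# Illustrative manifest for a hypothetical credit-scoring model.
manifest = {
    "architecture": "REST API, feature pipeline, gradient-boosted scorer",
    "model_details": "",
    "data_sheets": "loan_applications_datasheet.md",
    "version": {"current": "v1.2.0", "previous": None, "change_note": "initial"},
}
print(missing_sections(manifest))  # ['model_details']
print(new_model_version(manifest, "minor", "retrained on new data")["version"]["current"])  # v1.3.0
```

One reasonable convention is to bump the major version when the intended purpose or model family changes, the minor version on retraining, and the patch version for fixes that do not touch the weights, so the version history itself documents the relation to previous versions.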

D. Record Keeping and Automatic Logging (Article 12)

Your software must automatically generate logs to ensure traceability.

- Retention: Logs must be kept for a period appropriate to the intended purpose, and for at least six months unless other EU or national law provides otherwise.

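- Content: At a minimum, logging must make it possible to identify situations that could create risks, support post-market monitoring, and monitor the system’s operation. For remote biometric identification systems, the Act spells out specific fields: the start and end time of each use, the reference database checked, the input data that led to a match, and the people who verified the results. For other high-risk systems, recording the input, the output or decision, and when it was produced is a sensible baseline.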

- JSON Schema: A compliant log entry might look like a JSON object containing timestamp, model_id, input_hash, output_decision, and confidence_score fields. This enables post-incident analysis if the AI makes a harmful decision.
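The log entry described above can be produced with a few lines of standard-library Python. This is a minimal sketch, assuming an append-only JSON Lines file and the example field names above; hashing the input instead of storing it raw is one design option for keeping personal data out of logs, and it may not satisfy every logging duty (for example, the biometric-specific fields).

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def input_hash(payload: dict) -> str:
    """Stable SHA-256 of the input: keys are sorted so equal inputs hash equally."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def log_decision(log_path: Path, model_id: str, payload: dict,
                 decision: str, confidence: float) -> dict:
    """Append one traceability record per decision to a JSON Lines file."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,
        "input_hash": input_hash(payload),
        "output_decision": decision,
        "confidence_score": round(confidence, 4),
    }
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry) + "\n")
    return entry
```

For example, log_decision(Path("decisions.jsonl"), "credit-scorer:v1.2.0", {"income": 42000}, "reject", 0.61) appends a single line. Including the model version in model_id ties every decision back to the technical file; retention and tamper-evidence (for example, write-once storage) still have to be handled by the surrounding infrastructure.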

E. Human Oversight (Article 14)

High-Risk AI cannot be fully autonomous. It must be designed to be effectively overseen by natural persons.

- The “Stop Button”: The system must include a mechanism for the human overseer to interrupt the system or hit a “stop” button.

- The Override: A human must be able to disregard, override, or reverse the AI’s output. For example, in a credit scoring app, the loan officer must have a UI button to “Approve Loan” even if the AI recommends “Reject,” provided they document the reason.

- Automation Bias: The interface must be designed to minimize “automation bias”—the tendency of humans to blindly trust the machine. This can be achieved by showing confidence intervals or “reasoning” highlights rather than just a binary “Yes/No” recommendation.
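The stop and override requirements translate naturally into a thin wrapper around the model. This is a minimal sketch, assuming a hypothetical OversightGate class and a model exposed as a plain callable returning a (decision, confidence) pair; requiring a written reason for overrides follows the practice suggested above rather than a specific clause of the Act.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Optional


class SystemHalted(RuntimeError):
    """Raised when a human overseer has stopped the system."""


@dataclass
class Recommendation:
    case_id: str
    ai_decision: str
    confidence: float  # surfaced in the UI to counter automation bias
    final_decision: Optional[str] = None
    override_reason: Optional[str] = None
    decided_by: Optional[str] = None


@dataclass
class OversightGate:
    model: Callable[[dict], tuple[str, float]]
    halted: bool = False
    audit_trail: list[dict] = field(default_factory=list)

    def stop(self, operator: str) -> None:
        """The 'stop button': no further recommendations are produced."""
        self.halted = True
        self._audit("stop", operator)

    def recommend(self, case_id: str, features: dict) -> Recommendation:
        if self.halted:
            raise SystemHalted("system stopped by a human overseer")
        decision, confidence = self.model(features)
        # The AI output is only a recommendation; final_decision stays empty.
        return Recommendation(case_id, decision, confidence)

    def decide(self, rec: Recommendation, operator: str, decision: str,
               reason: str = "") -> Recommendation:
        """Record the human's final call; overriding the AI requires a reason."""
        if decision != rec.ai_decision and not reason.strip():
            raise ValueError("overriding the AI recommendation requires a reason")
        rec.final_decision = decision
        rec.override_reason = reason or None
        rec.decided_by = operator
        action = "override" if decision != rec.ai_decision else "accept"
        self._audit(action, operator, rec.case_id)
        return rec

    def _audit(self, action: str, operator: str, case_id: str = "") -> None:
        self.audit_trail.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "operator": operator,
            "case_id": case_id,
        })


# A loan officer overrides a "reject" recommendation and documents why.
gate = OversightGate(model=lambda features: ("reject", 0.58))
rec = gate.recommend("loan-1042", {"income": 42000})
gate.decide(rec, operator="officer.k", decision="approve",
            reason="verified additional income not in the application data")
print(rec.final_decision, rec.override_reason is not None)  # approve True
```

Keeping the final decision on a separate field from the AI's recommendation makes it easy to report how often humans override the model, which is itself a useful post-market monitoring signal.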

Engineering Transparency: Chatbots and GenAI (Article 50)

For many of Tool1.app’s clients, the most visible impact of the AI Act is on consumer-facing interfaces. Article 50 imposes strict transparency obligations on AI systems that interact with humans or generate content.

The “I Am A Robot” Disclosure

If you deploy a chatbot, you must inform the user that they are interacting with an AI system, unless it is “obvious from the context”.

- What is Obvious? A chatbot named “CustomerSupportBot” might be obvious. A realistic avatar named “Alice” that uses colloquial language is likely not obvious.

- Best Practice: Do not rely on the “obviousness” exemption. It is safer to include a clear disclosure.

- UX Pattern: The disclosure should appear at the start of the interaction. A persistent badge or a “Powered by AI” label in the chat header is also a strong design pattern.
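A simple way to avoid leaning on the “obviousness” exemption is to enforce the disclosure in the session layer rather than trusting the prompt or the front end to remember it. This is a minimal sketch, assuming a hypothetical ChatSession class that wraps any reply-generating function; the wording of the notice is illustrative.

```python
from dataclasses import dataclass, field
from typing import Callable

AI_DISCLOSURE = "Hi, I'm an AI assistant, not a human."


@dataclass
class ChatSession:
    generate_reply: Callable[[str], str]
    header_badge: str = "AI System"  # persistent label for the chat header
    disclosed: bool = False
    transcript: list[tuple[str, str]] = field(default_factory=list)

    def start(self) -> str:
        """Opening message shown before the user types anything."""
        self.disclosed = True
        return f"{AI_DISCLOSURE} How can I help you?"

    def respond(self, user_message: str) -> str:
        reply = self.generate_reply(user_message)
        if not self.disclosed:
            # Fallback: if the UI skipped start(), disclose on the first reply.
            reply = f"{AI_DISCLOSURE}\n\n{reply}"
            self.disclosed = True
        self.transcript.append((user_message, reply))
        return reply


session = ChatSession(generate_reply=lambda msg: "Your order ships tomorrow.")
print(session.start())  # Hi, I'm an AI assistant, not a human. How can I help you?
```

The fallback in respond guarantees that no exchange happens without the notice, even if a new client integration forgets to call start.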

Deepfakes and Synthetic Content

Any AI system that generates synthetic audio, image, video, or text must mark the outputs as artificially generated in a machine-readable format.

- Visible Label: For deepfakes (content that resembles existing persons/places), you must disclose the artificial nature to the viewer.

- Invisible Watermarking: The Act does not name a specific technology, but the “machine-readable” requirement can be met with provenance standards like C2PA (Coalition for Content Provenance and Authenticity), alongside techniques such as watermarking. You should embed metadata into the file headers of generated images or audio that identifies the content as AI-generated. This allows platforms and browsers to identify the provenance of the media.

Designing for Compliance: A UX Comparison

Effective transparency is not just about legal text; it is about user experience. A compliant interface explicitly sets expectations.

- Non-Compliant: A chat window opens with “Hi, I’m Sarah! How can I help you?” with no other indicators. The user assumes ‘Sarah’ is human.

- Compliant: A chat window opens with “Hi, I’m an AI Assistant. How can I help you?” The interface includes a visible badge labeled “AI System” and a link to the terms of use explaining the AI’s limitations.

Conformity Assessments: Proving You Follow the Rules

Building a compliant system is half the battle; proving it is the other half. Article 43 outlines the Conformity Assessment procedures.

Internal Control vs. Notified Body

- Internal Control (Annex VI): For High-Risk systems listed in Annex III Points 2-8 (critical infrastructure, education, employment, essential services, and the other listed areas), providers follow the internal control procedure. This means you do not need an external auditor to certify your software, although applying the harmonized standards is the most practical way to demonstrate that you meet the requirements.

- Notified Body (Annex VII): For biometric systems (Annex III Point 1), a third-party assessment by a “Notified Body” (an accredited audit firm) is required if you have not applied harmonized standards (or common specifications), or have applied them only in part. If you have fully applied them, you may choose between internal control and a Notified Body.

The Procedure

- Verify Quality Management System (QMS): Ensure your QMS complies with Article 17.

- Examine Technical Documentation: Review your documentation against Annex IV requirements.

- Verify Monitoring: Ensure your post-market monitoring plan is consistent with the technical design.

- Declaration of Conformity: Once satisfied, you draw up an EU Declaration of Conformity and affix the CE marking to your system.

Post-Market Monitoring and Continuous Compliance

Compliance does not end at deployment. Article 72 mandates a “Post-Market Monitoring System”. You must actively and systematically collect data on your system’s performance in the real world.

The Feedback Loop

- Performance Metrics: Are the accuracy rates holding up? Is the model drifting?

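- Incident Reporting: You must report “serious incidents” (e.g., a breach of fundamental rights, or a system failure endangering safety) to the market surveillance authority of the Member State where the incident occurred. The report is due immediately once a causal link (or a reasonable likelihood of one) is established, and in any case no later than 15 days after you become aware of the incident; shorter deadlines apply to the most severe cases (2 days for widespread infringements or serious disruption of critical infrastructure, 10 days in the event of a death).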

- Updates: The monitoring plan serves as the trigger for the continuous risk management process. If logs show a spike in “low confidence” decisions for a specific demographic, this feeds back into your Article 10 bias analysis and Article 9 risk assessment, prompting a model retrain.
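The “spike in low-confidence decisions for a specific demographic” signal can be computed directly from the decision logs. This is a minimal sketch, assuming each log record carries a hypothetical pseudonymized segment label alongside confidence_score; the 0.6 confidence cut-off, the 1.5x alert ratio, and the 5% floor are illustrative placeholders that your monitoring plan would define.

```python
from collections import defaultdict
from typing import Iterable, Mapping


def low_confidence_rates(logs: Iterable[Mapping[str, object]],
                         cutoff: float = 0.6) -> dict[str, float]:
    """Share of decisions per segment whose confidence fell below `cutoff`."""
    totals: dict[str, int] = defaultdict(int)
    low: dict[str, int] = defaultdict(int)
    for entry in logs:
        segment = str(entry["segment"])
        totals[segment] += 1
        if float(entry["confidence_score"]) < cutoff:
            low[segment] += 1
    return {s: low[s] / totals[s] for s in totals}


def segments_to_escalate(current: Mapping[str, float], baseline: Mapping[str, float],
                         ratio: float = 1.5, min_rate: float = 0.05) -> list[str]:
    """Segments whose low-confidence rate grew past `ratio` times their baseline.

    `min_rate` suppresses alerts on tiny absolute rates. Each flagged segment
    should feed back into the Article 10 bias analysis and Article 9 risk review.
    """
    flagged = []
    for segment, rate in current.items():
        before = baseline.get(segment, 0.0)  # unseen segments start from zero
        if rate >= min_rate and rate > ratio * before:
            flagged.append(segment)
    return sorted(flagged)
```

Comparing each segment against its own baseline, rather than against the global average, catches a degradation that affects one group even when overall accuracy looks stable.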

SME Support: Accessing Sandboxes

The EU recognizes that these regulations impose a heavy burden on Small and Medium Enterprises (SMEs) and startups. To mitigate this, the AI Act creates specific support mechanisms.

Regulatory Sandboxes (Articles 57-58)

By August 2026, every EU Member State must establish at least one AI Regulatory Sandbox.

- What is it? A controlled environment where you can develop, train, test, and validate your AI system before placing it on the market, under the direct supervision of the competent authority.

- The Benefit: Testing in a sandbox provides legal certainty. If you follow the sandbox plan and the authority’s guidance in good faith, you are shielded from administrative fines for infringements of the AI Act, although you remain liable for any damage caused to third parties during testing.

- Priority Access: SMEs and startups have priority access to these sandboxes. This is a massive strategic advantage. If you are building a novel credit scoring or HR tool, applying for a sandbox spot can validate your compliance strategy before you face market risks.

Simplified Documentation

The European Commission is tasked with establishing a simplified technical documentation form specifically targeted at the needs of small and micro-enterprises. While the final template is yet to be released (expected by 2026), this will likely streamline the bureaucratic aspects of Annex IV for smaller players.

Strategy: Your Roadmap to August 2026

The transition from non-compliant to compliant is a multi-month process. Tool1.app recommends the following strategic roadmap:

Phase 1: Audit and Classification (Months 1-2)

- Inventory: List every AI model in your organization.

- Classify: Use the Annex III criteria to determine which are High-Risk.

- Gap Analysis: For High-Risk systems, assess your current logging, data governance, and documentation against the AI Act requirements.

Phase 2: Technical Remediation (Months 3-4)

- Build the “Black Box”: Implement the Article 12 logging schema.

- UI Overhaul: Redesign interfaces to include Article 14 human oversight mechanisms (overrides/stops) and Article 50 transparency notices.

- Data Cleaning: Audit your training data for bias and document the lineage.

Phase 3: Documentation and Assessment (Months 5-6)

- Compile the Technical File: Assemble the Annex IV documentation.

- Risk Assessment: Conduct a formal risk assessment and document mitigation strategies.

- Conformity: Perform the internal control assessment and sign the Declaration of Conformity.

Conclusion: Compliance is a Competitive Advantage

The EU AI Act is often viewed as a burden, but it also creates a market of “trustworthy AI.” In a world where users are increasingly skeptical of “black box” algorithms, being able to prove that your software is transparent, fair, and safe is a powerful differentiator.

Treat the August 2, 2026 deadline as immovable. The engineering work required to meet it (building risk management systems, implementing robust logging, and redesigning user interfaces) cannot be done overnight. The time to start is now.

Ensure Your Software is Compliant. Schedule a Consultation Today.

Navigating Annex III requirements while maintaining code velocity is a challenge, but you don’t have to choose between innovation and compliance. At Tool1.app, we build custom software solutions that are robust, scalable, and regulatory-ready. Whether you need to retrofit a legacy Python application or build a new compliant-by-design AI platform, our team has the expertise to guide you.

Contact Tool1.app today to discuss your AI strategy or audit your current systems for EU AI Act compliance.
